Optimizing Llama3 70B for NVIDIA RTX 5000 Ada 32GB: A Step-by-Step Approach

Introduction

Local large language models (LLMs) are changing the way we interact with technology, offering powerful capabilities right on our own devices. Among these, Llama3 70B stands out as a highly capable model with strong language comprehension and generation abilities. However, getting the most out of such a beast requires careful configuration and optimization, even when running it on a powerful GPU like the NVIDIA RTX 5000 Ada 32GB.

This article dives deep into the specific challenges and solutions for optimizing Llama3 70B performance on the RTX 5000 Ada 32GB. We'll analyze token generation speeds, explore the impact of different quantization techniques, and provide practical recommendations for users.

Performance Analysis: Token Generation Speed Benchmarks

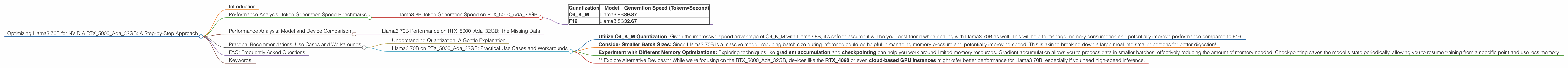

Llama3 8B Token Generation Speed on RTX 5000 Ada 32GB

Let's start with a baseline, using the smaller Llama3 8B as our reference point. The RTX 5000 Ada 32GB handles this model comfortably, as shown in Table 1:

| Quantization | Model | Generation Speed (Tokens/Second) |
|---|---|---|
| Q4_K_M | Llama3 8B | 89.87 |
| F16 | Llama3 8B | 32.67 |

Table 1: Token Generation Speed for Llama3 8B on RTX 5000 Ada 32GB

Q4_K_M is llama.cpp's 4-bit "k-quant" format in its medium (M) variant. It stores most weights in roughly 4 bits each, which reduces the model's overall size and memory footprint while keeping output quality reasonably close to the original. F16 stores each weight as a 16-bit floating-point number, offering higher precision but consuming roughly three times as much memory, which also slows token generation.

As you can see, Llama3 8B with Q4_K_M quantization on the RTX 5000 Ada 32GB achieves a significant speed advantage over F16, generating about 2.75 times as many tokens per second (89.87 versus 32.67).
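To make the gap concrete, here is a small sketch that computes the speedup from the two figures in Table 1 and translates it into waiting time. The 500-token reply length is an arbitrary assumption chosen for illustration, and the helper names are hypothetical.

```python
# Speeds copied from Table 1 (tokens per second).
speeds = {"Q4_K_M": 89.87, "F16": 32.67}


def speedup(fast: float, slow: float) -> float:
    """Return how many times faster `fast` is than `slow`."""
    return fast / slow


def seconds_for(tokens: int, tokens_per_second: float) -> float:
    """Time needed to generate `tokens` at a steady rate."""
    return tokens / tokens_per_second


ratio = speedup(speeds["Q4_K_M"], speeds["F16"])
print(f"Q4_K_M is {ratio:.2f}x faster than F16")

# Assumed reply length of 500 tokens, purely for illustration.
for name, tps in speeds.items():
    print(f"{name}: {seconds_for(500, tps):.1f} s for a 500-token reply")
```

At these rates, the same reply takes roughly 5.6 seconds with Q4_K_M and 15.3 seconds with F16.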

Performance Analysis: Model and Device Comparison

Llama3 70B Performance on RTX 5000 Ada 32GB: The Missing Data

Unfortunately, the available data doesn't provide concrete numbers for Llama3 70B's performance on the RTX 5000 Ada 32GB. We'll need to rely on indirect comparisons and estimations based on available information about other devices and model sizes.
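One estimate we can make without any benchmark is whether the weights fit on the card at all. The sketch below is a back-of-the-envelope calculation, not a measurement: the 70-billion parameter count is the nominal figure, and the 4.85 bits per weight used for Q4_K_M is an assumed average (the real figure varies by model and tool version). It also ignores the KV cache and runtime buffers, which need additional memory.

```python
GIB = 1024 ** 3


def weight_gib(params_billion: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights alone, in GiB."""
    return params_billion * 1e9 * bits_per_weight / 8 / GIB


VRAM_GIB = 32.0   # card capacity, from the article title
PARAMS_B = 70.0   # nominal parameter count (assumption: exact count differs slightly)
BITS = {"F16": 16.0, "Q4_K_M": 4.85}  # 4.85 is an assumed average, not a spec value

for name, bits in BITS.items():
    size = weight_gib(PARAMS_B, bits)
    verdict = "fits" if size < VRAM_GIB else "does not fit"
    print(f"{name}: ~{size:.1f} GiB of weights, {verdict} in {VRAM_GIB:.0f} GiB")
```

Under these assumptions neither variant fits entirely in 32 GB, which may be one reason concrete numbers for this card are missing.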

Practical Recommendations: Use Cases and Workarounds

Understanding Quantization: A Gentle Explanation

Think of quantization as a diet for your model. It helps you reduce its size and memory use without losing all the flavor. Just like a carefully chosen diet, different quantization techniques have their own advantages and drawbacks. Q4_K_M offers a good balance between speed and accuracy, similar to a balanced diet. F16 is more precise, like a gourmet meal, but it requires more memory and, as Table 1 shows, generates tokens more slowly.
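For readers who want to see the idea in code, here is a toy sketch of 4-bit quantization. It uses a simple symmetric round-to-nearest scheme with one shared scale per block, which is an illustrative assumption and not the actual Q4_K_M layout (that format is more elaborate). The weight values are made up.

```python
def quantize_block(values, bits=4):
    """Map floats to small signed integers that share one scale factor."""
    qmax = 2 ** (bits - 1) - 1              # 7 for 4-bit codes
    peak = max(abs(v) for v in values)
    scale = peak / qmax if peak else 1.0    # avoid dividing by zero
    codes = [round(v / scale) for v in values]
    return codes, scale


def dequantize_block(codes, scale):
    """Recover approximate floats from the integer codes."""
    return [c * scale for c in codes]


# Made-up weights, purely for illustration.
weights = [0.12, -0.50, 0.33, 0.07, -0.21, 0.44, -0.02, 0.29]
codes, scale = quantize_block(weights)
restored = dequantize_block(codes, scale)
worst = max(abs(a - b) for a, b in zip(weights, restored))

print("codes:", codes)
print(f"scale: {scale:.4f}, worst error: {worst:.4f}")
```

Each weight now needs only a 4-bit code plus a share of the block's scale, and the rounding error per weight is at most half the scale. That small loss of precision is the "flavor" the diet gives up.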

Llama3 70B on RTX 5000 Ada 32GB: Practical Use Cases and Workarounds

While we lack specific performance data, we can still draw some insights and offer practical recommendations:

- Utilize Q4_K_M Quantization: Given the speed advantage of Q4_K_M with Llama3 8B, it is likely to be the better choice for Llama3 70B as well, since it lowers memory consumption and should generate tokens faster than F16. Keep in mind that 70 billion weights at roughly 4 to 5 bits each still come to about 40 GB, more than the card's 32 GB, so expect part of the model to run from system RAM.

- Consider Smaller Batch Sizes: Since Llama3 70B is a massive model, reducing batch size during inference could be helpful in managing memory pressure and potentially improving speed. This is akin to breaking down a large meal into smaller portions for better digestion!

- Experiment with Different Memory Optimizations: Techniques like gradient accumulation and gradient checkpointing can help you work around limited memory, but only when you fine-tune the model; neither applies to plain inference. Gradient accumulation processes data in smaller micro-batches and sums their gradients before each weight update, which lowers peak memory. Gradient checkpointing discards intermediate activations during the forward pass and recomputes them during the backward pass, trading extra compute for less memory.

- Explore Alternative Devices: While we're focusing on the RTX 5000 Ada 32GB, devices like the RTX 4090 or cloud-based GPU instances might offer better performance for Llama3 70B, especially if you need high-speed inference. Note that the RTX 4090 has less memory (24 GB), so check that your chosen quantization fits before switching.
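When a model does not fit in GPU memory, a common workaround is to keep only some of its layers on the GPU and run the rest from system RAM; llama.cpp exposes this as a GPU layer count setting. The sketch below estimates such a split. It is a rough planning aid under loud assumptions: the 39.5 GiB model size is an estimate for a 4-bit 70B model, the 4 GiB reserve for the KV cache and buffers is a guess, and all layers are treated as equally large. The function name is hypothetical.

```python
def gpu_layers(total_layers: int, model_gib: float,
               vram_gib: float, reserve_gib: float) -> int:
    """Estimate how many equally sized layers fit in the usable GPU memory."""
    per_layer = model_gib / total_layers
    usable = max(vram_gib - reserve_gib, 0.0)
    return min(total_layers, int(usable // per_layer))


# Llama3 70B has 80 transformer layers.
# Assumptions: ~39.5 GiB of 4-bit weights, 4 GiB kept free for cache and buffers.
layers = gpu_layers(total_layers=80, model_gib=39.5, vram_gib=32.0, reserve_gib=4.0)
print(f"Try offloading about {layers} of 80 layers to the GPU")
```

Treat the result as a starting point: lower the layer count if you hit out-of-memory errors, and raise it if memory is left unused.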

FAQ: Frequently Asked Questions

Q: What are LLMs?

A: LLMs are powerful machine learning models trained on massive datasets of text and code, enabling them to understand and generate human-like language.

Q: What is quantization?

A: Quantization is a technique used to reduce the size and memory footprint of a model. It involves converting numbers with high precision (like 32-bit floating point numbers) into lower-precision representations (like 4-bit integers), while still maintaining reasonable accuracy.

Q: What is token generation speed?

A: Token generation speed refers to the rate at which an LLM can process and generate tokens (individual words or parts of words) during inference.
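If you want to measure this number yourself, the idea is simply to count tokens and divide by elapsed time. The sketch below shows that with a stand-in generator; `fake_model` is a hypothetical placeholder that sleeps instead of running a real model, so swap in whatever streaming call your inference library provides.

```python
import time
from typing import Callable, Iterable


def tokens_per_second(generate: Callable[[], Iterable[str]]) -> float:
    """Count the tokens yielded by `generate` and divide by wall-clock time."""
    start = time.perf_counter()
    count = sum(1 for _ in generate())
    elapsed = time.perf_counter() - start
    return count / elapsed if elapsed > 0 else float("inf")


def fake_model():
    """Stand-in generator: emits 50 tokens with a 1 ms pause before each."""
    for i in range(50):
        time.sleep(0.001)
        yield f"tok{i}"


rate = tokens_per_second(fake_model)
print(f"{rate:.1f} tokens/s")
```

For a fair comparison between quantization levels, use the same prompt and the same number of generated tokens in every run.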

Q: Why do performance numbers vary depending on quantization?

A: Quantization levels trade speed against accuracy. Generating each token requires reading the model's weights from GPU memory, so a format that stores fewer bits per weight, like Q4_K_M, moves less data and produces tokens faster, at the cost of some accuracy. A higher-precision format like F16 preserves accuracy better but moves more than three times as much data per token, which slows generation.
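A rough way to check this reasoning is to divide the card's memory bandwidth by the size of the weights, which gives a ceiling on tokens per second if every weight is read once per token. The sketch below does that for the Table 1 models. The 576 GB/s bandwidth is an assumed spec-sheet figure that you should verify for your card, and 4.85 bits per weight for Q4_K_M is an assumed average.

```python
BANDWIDTH_GB_S = 576.0  # assumed spec-sheet bandwidth; verify for your card


def max_tokens_per_second(params_billion: float, bits_per_weight: float,
                          bandwidth_gb_s: float = BANDWIDTH_GB_S) -> float:
    """Upper bound on speed if all weights are read once per generated token."""
    weight_gb = params_billion * bits_per_weight / 8
    return bandwidth_gb_s / weight_gb


# (assumed bits per weight, measured tokens/s from Table 1)
measured = {"F16": (16.0, 32.67), "Q4_K_M": (4.85, 89.87)}

for name, (bits, speed) in measured.items():
    ceiling = max_tokens_per_second(8.0, bits)
    print(f"{name}: ceiling ~{ceiling:.0f} tok/s, measured {speed} tok/s")
```

Under these assumptions both measured speeds sit below their ceilings, and the ceiling rises as bits per weight fall, which matches the pattern in Table 1.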

Keywords:

Llama3 70B, NVIDIA RTX 5000 Ada 32GB, LLM, Large Language Model, Token Generation Speed, Quantization, Q4_K_M, F16, Performance Optimization, Memory Management, Gradient Accumulation, Checkpointing, Batch Size, GPU, Model Deployment