Optimizing Llama3 70B for NVIDIA RTX 4000 Ada 20GB x4: A Step-by-Step Approach

Introduction

In the ever-evolving world of artificial intelligence, Large Language Models (LLMs) are taking center stage. These powerful algorithms can understand and generate human-like text, revolutionizing various fields from chatbots and content creation to code generation and scientific research.

One of the most popular LLMs is Llama3, developed by Meta AI. Llama3 comes in various sizes, with the 70B parameter model boasting impressive capabilities. However, running such a massive model locally requires significant computational resources.

This article looks at optimizing Llama3 70B for a setup of four NVIDIA RTX 4000 Ada GPUs with 20 GB of VRAM each, referred to below by its benchmark label RTX4000Ada20GBx4. It aims to provide a practical guide for developers and geeks interested in running this large model on their own hardware.

Performance Analysis: Breaking Down the Numbers

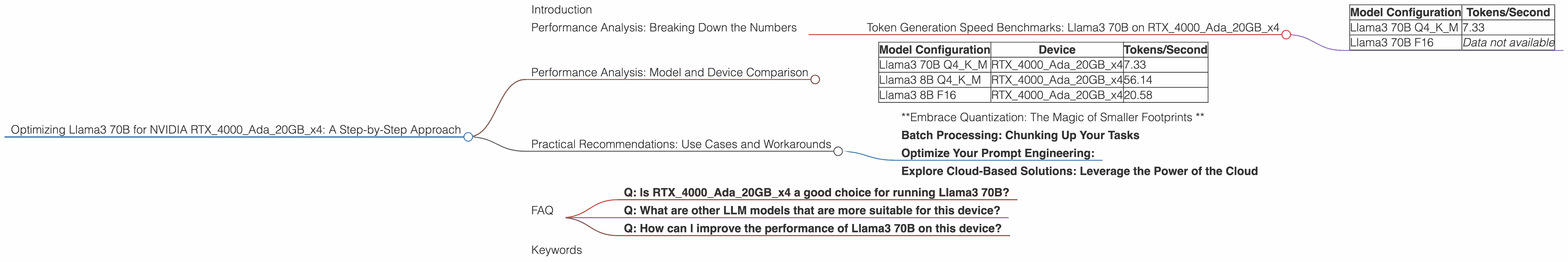

Token Generation Speed Benchmarks: Llama3 70B on RTX4000Ada20GBx4

Let's dive into the performance of Llama3 70B on the RTX4000Ada20GBx4 GPU configuration. The benchmark measures token generation speed, in tokens per second, for different precision variants of the model.

| Model Configuration | Tokens/Second |
|---|---|
| Llama3 70B Q4_K_M | 7.33 |
| Llama3 70B F16 | Data not available |

The table shows the token generation speed for the Llama3 70B model in two variants: 4-bit quantized (Q4_K_M) and 16-bit floating point (F16).

What does quantization mean?

Imagine you have a digital photo. Each pixel in the photo uses a certain amount of data to represent its color. Quantization is like reducing the amount of data used for each pixel: the photo gets smaller, but the details may become a little less sharp. Similarly, quantization for LLMs stores each model weight with fewer bits, which shrinks the memory needed to hold the model at the cost of a small loss in precision.
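To make the idea concrete, here is a minimal sketch of 4-bit quantization on a handful of numbers. It is a toy symmetric scheme chosen for illustration, not the actual Q4_K_M format, and the function names and sample weights are made up for this example.

```python
def quantize_4bit(weights):
    """Toy symmetric 4-bit quantization of one block of floats.

    Each weight is mapped to an integer code in the range -7..7,
    and a single shared scale is kept for the whole block.
    """
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    codes = [round(w / scale) for w in weights]
    return codes, scale


def dequantize(codes, scale):
    """Recover approximate float weights from the integer codes."""
    return [c * scale for c in codes]


# Hypothetical weights, used only to show the round trip.
weights = [0.12, -0.53, 0.98, -0.07, 0.33]
codes, scale = quantize_4bit(weights)
restored = dequantize(codes, scale)

# The largest difference between an original weight and its restored value.
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("codes:    ", codes)
print("restored: ", [round(r, 3) for r in restored])
print("max error:", round(max_error, 4))
```

Each code fits in 4 bits instead of 16, and the rounding error per weight is at most half the scale. That is the same trade-off as the photo analogy: less data, slightly less detail.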

As you can see, the Q4_K_M configuration delivers a token generation speed of 7.33 tokens/second on the RTX4000Ada20GBx4 setup. That is roughly 7.7 times slower than Llama3 8B Q4_K_M on the same hardware (56.14 tokens/second), which reflects the computational demands of the larger model.

Important note: No F16 result is available for Llama3 70B on this hardware. A likely reason is memory: 70 billion parameters at 2 bytes each come to about 140 GB of weights, well beyond the 80 GB of combined VRAM across four 20 GB cards.
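The memory arithmetic behind the two variants can be sketched as follows. The figures are assumptions for illustration: a nominal 70 billion parameters, 16 bits per weight for F16, and roughly 4.8 bits per weight for the 4-bit quantized variant (an approximate value, not taken from the benchmark). The estimate covers weights only and ignores the KV cache and activations.

```python
def weight_memory_gb(n_params, bits_per_weight):
    """Approximate memory for the model weights alone, in GB (10^9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


TOTAL_VRAM_GB = 4 * 20   # four cards with 20 GB each
N_PARAMS = 70e9          # nominal parameter count (assumption)

f16_gb = weight_memory_gb(N_PARAMS, 16)
q4_gb = weight_memory_gb(N_PARAMS, 4.8)  # ~4.8 bits/weight is an assumed average

for name, size in [("F16", f16_gb), ("4-bit quantized", q4_gb)]:
    verdict = "fits" if size < TOTAL_VRAM_GB else "does not fit"
    print(f"{name}: ~{size:.0f} GB of weights, {verdict} in {TOTAL_VRAM_GB} GB")
```

Under these assumptions the F16 weights alone exceed the combined VRAM, while the quantized weights leave headroom for the KV cache.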

Performance Analysis: Model and Device Comparison

While we're focusing on the RTX4000Ada20GBx4 setup, it's helpful to compare the performance of Llama3 70B with other models and devices to get a broader perspective.

| Model Configuration | Device | Tokens/Second |
|---|---|---|
| Llama3 70B Q4_K_M | RTX4000Ada20GBx4 | 7.33 |
| Llama3 8B Q4_K_M | RTX4000Ada20GBx4 | 56.14 |
| Llama3 8B F16 | RTX4000Ada20GBx4 | 20.58 |

This comparison highlights a significant performance difference between the Llama3 8B and Llama3 70B models, even on the same device. Every generated token requires a pass over the model's weights, so a model with more parameters needs more memory traffic and more computation per token, which results in slower token generation.
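To see what these numbers mean in practice, the short sketch below converts the table's throughput figures into the time needed for a response of a given length. The 500-token response length is an arbitrary choice for illustration, and the estimate ignores prompt processing time.

```python
# Token generation speeds taken from the comparison table above.
TOKENS_PER_SECOND = {
    "Llama3 70B Q4_K_M": 7.33,
    "Llama3 8B Q4_K_M": 56.14,
    "Llama3 8B F16": 20.58,
}


def generation_time_s(n_tokens, tokens_per_second):
    """Seconds needed to generate n_tokens at a steady rate."""
    return n_tokens / tokens_per_second


RESPONSE_TOKENS = 500  # hypothetical response length
baseline = TOKENS_PER_SECOND["Llama3 70B Q4_K_M"]

for name, tps in TOKENS_PER_SECOND.items():
    seconds = generation_time_s(RESPONSE_TOKENS, tps)
    print(f"{name}: {seconds:.1f} s for {RESPONSE_TOKENS} tokens "
          f"({tps / baseline:.1f}x the 70B speed)")
```

A response that takes over a minute on the 70B model arrives in under ten seconds on the quantized 8B model, which is the practical meaning of the gap in the table.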

Think of it like this: Using a smaller model is like driving a compact car. It's efficient and quick to maneuver. Using a larger model is like driving a massive truck. It's powerful but requires more fuel and takes longer to accelerate.

Practical Recommendations: Use Cases and Workarounds

Despite the performance constraints of running Llama3 70B on the RTX4000Ada20GBx4 configuration, there are still practical use cases and workarounds to leverage this powerful language model effectively.

Embrace Quantization: The Magic of Smaller Footprints

The Q4_K_M quantization configuration offers a significant advantage in resource utilization. By shrinking the model's memory footprint, it lets the 70B model fit across the four GPUs, although token generation remains relatively slow. This makes it a good match for tasks that don't require real-time generation but benefit from the capability of a large model.

Batch Processing: Chunking Up Your Tasks

Instead of trying to generate the entire text at once, consider batch processing. Break down your input into smaller chunks, process each chunk individually, and then combine the results. Shorter inputs keep each request within the model's context window and limit the memory used per request, which helps on constrained hardware.
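The chunk-process-combine pattern can be sketched as follows. This is a minimal illustration, not a specific library's API: `generate` stands for whatever function calls your local model, `fake_generate` is a placeholder so the example runs without one, and splitting on word count is a simplification of splitting on tokens.

```python
def split_into_chunks(text, max_words):
    """Split text into consecutive chunks of at most max_words words."""
    words = text.split()
    return [
        " ".join(words[i:i + max_words])
        for i in range(0, len(words), max_words)
    ]


def process_in_chunks(text, generate, max_words=200):
    """Run generate() on each chunk and join the results in order."""
    results = [generate(chunk) for chunk in split_into_chunks(text, max_words)]
    return "\n".join(results)


def fake_generate(prompt):
    """Placeholder for a real model call (hypothetical)."""
    return f"[summary of {len(prompt.split())} words]"


document = "word " * 450  # stand-in for a long input document
print(process_in_chunks(document, fake_generate, max_words=200))
```

In a real setup you would replace `fake_generate` with a call into your inference runtime and pick the chunk size from the model's context window rather than a fixed word count.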

Optimize Your Prompt Engineering

A well-crafted prompt improves the quality of the output of any LLM, and a shorter prompt reduces the time spent processing input before generation starts. Avoid unnecessary words and complex syntax, and focus on clear and concise instructions. A prompt marketplace such as PromptBase can be a starting point for finding a prompt that fits your specific use case. (https://www.promptbase.com/)

Explore Cloud-Based Solutions: Leverage the Power of the Cloud

If your application demands real-time performance with the Llama3 70B model, consider using cloud-based solutions like Google Colab or Amazon SageMaker. These platforms provide access to powerful GPUs and other resources, allowing you to run large models without the limitations of local hardware.

FAQ

Q: Is RTX4000Ada20GBx4 a good choice for running Llama3 70B?

A: The RTX4000Ada20GBx4 configuration provides enough memory to run Llama3 70B with Q4_K_M quantization, but token generation is slow at 7.33 tokens/second. If you need real-time generation with Llama3 70B, it's recommended to explore cloud-based solutions or consider using smaller models.

Q: What are other LLM models that are more suitable for this device?

A: Llama3 8B models, especially with Q4_K_M quantization, offer significantly better performance on the RTX4000Ada20GBx4 configuration. You can also explore other smaller open models like GPT-Neo or GPT-J, which are small enough for local deployment.

Q: How can I improve the performance of Llama3 70B on this device?

A: Optimizing your prompt, using batch processing techniques, and leveraging quantization are effective strategies for enhancing performance. Consider exploring different implementations of Llama3 70B that are tailored for specific hardware configurations.

Keywords

Llama3, 70B, RTX4000Ada20GBx4, GPU, token generation, performance, quantization, Q4_K_M, F16, LLM, Large Language Model, prompt engineering, batch processing, cloud computing, optimization, practical recommendations, use cases, model comparison, limitations.