Optimizing Llama3 70B for NVIDIA RTX 4000 Ada 20GB: A Step-by-Step Approach

Introduction

The world of large language models (LLMs) is rapidly evolving, with new models and advancements emerging at an astounding pace. One of the most exciting developments is the emergence of local LLMs, which allow users to run powerful language models on their own devices. But with the rise of these models comes the challenge of optimizing performance to ensure smooth and efficient operation.

This article looks at optimizing the Llama3 70B model for the NVIDIA RTX 4000 Ada 20GB GPU (referred to as RTX4000Ada_20GB below), a powerful and widely accessible graphics card. We will explore various techniques, review the available benchmark data, and provide practical recommendations for getting the most out of your local LLM.

Imagine having the power of a cutting-edge language model right at your fingertips, generating creative content, answering questions, and performing complex tasks without relying on cloud services. This is the promise of local LLMs, and by optimizing them, you can unlock their full potential.

Performance Analysis: Token Generation Speed Benchmarks

Token generation speed is crucial for a seamless LLM experience. It directly impacts the time it takes to generate text, translate languages, or perform other tasks.

Unfortunately, no token generation speed data is available for Llama3 70B on this specific GPU: the model is relatively new and published benchmarks are limited. We can still glean useful insights from the data that does exist.

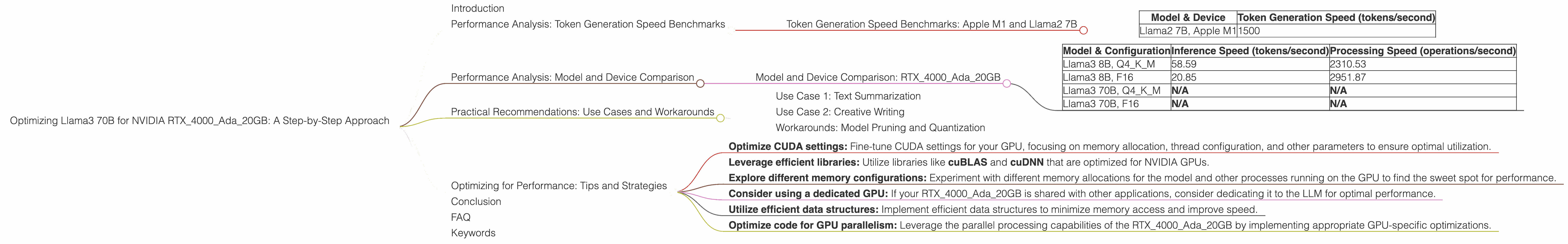

Token Generation Speed Benchmarks: Apple M1 and Llama2 7B

Let's refer to some available data to get an idea of the performance you can expect:

| Model & Device | Token Generation Speed (tokens/second) |
|---|---|
| Llama2 7B, Apple M1 | 1500 |

While this figure is not directly comparable to Llama3 70B on the NVIDIA RTX4000Ada_20GB, it gives an indication of the speeds a much smaller model can reach.

Performance Analysis: Model and Device Comparison

Even though we lack token generation speed benchmarks for Llama3 70B on the RTX4000Ada_20GB, we can use inference and processing speed data for the smaller Llama3 8B model on the same GPU as a reference point.

Inference speed is the rate at which the model generates new tokens, while processing speed indicates how quickly it works through the data it is given, such as the input prompt.
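To make the inference speed metric concrete, here is a minimal sketch of how tokens per second can be measured: time a generation call and divide the number of tokens by the elapsed time. The `fake_generate` function is a hypothetical stand-in for a real model call and is not part of any benchmark cited in this article.

```python
import time
from typing import Callable, List


def tokens_per_second(generate: Callable[[int], List[str]], n_tokens: int) -> float:
    """Time one call to `generate` and return tokens produced per second."""
    start = time.perf_counter()
    tokens = generate(n_tokens)
    elapsed = time.perf_counter() - start
    return len(tokens) / elapsed


def fake_generate(n_tokens: int) -> List[str]:
    """Hypothetical stand-in for a model: sleeps about 1 ms per token."""
    out = []
    for i in range(n_tokens):
        time.sleep(0.001)
        out.append(f"tok{i}")
    return out


if __name__ == "__main__":
    rate = tokens_per_second(fake_generate, 50)
    print(f"{rate:.1f} tokens/second")
```

With a real model, you would replace `fake_generate` with your runtime's generation call and average over several runs, since a single measurement can be noisy.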

Model and Device Comparison: RTX4000Ada_20GB

| Model & Configuration | Inference Speed (tokens/second) | Processing Speed (operations/second) |
|---|---|---|
| Llama3 8B, Q4KM | 58.59 | 2310.53 |
| Llama3 8B, F16 | 20.85 | 2951.87 |
| Llama3 70B, Q4KM | N/A | N/A |
| Llama3 70B, F16 | N/A | N/A |

Note: The data points marked as "N/A" indicate that the data is currently unavailable.

This data reveals a significant performance difference between the two configurations of the Llama3 8B model. The Q4KM configuration (the 4-bit Q4_K_M quantization format used by llama.cpp; quantization reduces model size and improves speed) generates tokens roughly 2.8 times faster than F16: 58.59 versus 20.85 tokens/second. F16, in turn, shows the higher processing speed, at 2951.87 versus 2310.53. This highlights the importance of choosing the right configuration for your specific needs.
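The arithmetic behind this comparison can be sketched in a few lines. The two rates are copied from the Llama3 8B rows of the table above; the 500-token response length is an arbitrary value chosen only for illustration.

```python
# Generation rates (tokens/second) for Llama3 8B on the RTX4000Ada_20GB,
# copied from the table above.
GENERATION_TPS = {"Q4KM": 58.59, "F16": 20.85}


def seconds_to_generate(n_tokens: int, tokens_per_second: float) -> float:
    """Time to emit n_tokens at a steady generation rate."""
    return n_tokens / tokens_per_second


speedup = GENERATION_TPS["Q4KM"] / GENERATION_TPS["F16"]
print(f"Q4KM generates {speedup:.2f}x faster than F16")

# 500 tokens is an arbitrary example length, not a benchmark setting.
for name, tps in GENERATION_TPS.items():
    print(f"{name}: {seconds_to_generate(500, tps):.1f} s for 500 tokens")
```

In practice the rate is not perfectly steady, so treat the resulting times as rough estimates rather than guarantees.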

Practical Recommendations: Use Cases and Workarounds

While we lack data on the performance of Llama3 70B, we can still make practical recommendations based on the information we have and general principles.

Use Case 1: Text Summarization

For tasks like text summarization, where the model must read large amounts of text before producing a concise summary, processing speed matters most. The RTX4000Ada_20GB is a good choice for this use case. With Llama3 8B, the F16 configuration is recommended because it shows the higher processing speed (2951.87 versus 2310.53 operations/second for Q4KM).

Use Case 2: Creative Writing

For creative writing, where generating text takes precedence over fast processing, the Q4KM configuration for Llama3 8B is likely the better fit. It generates tokens at a higher rate (58.59 versus 20.85 tokens/second for F16), which shortens the wait for long passages of output.

Workarounds: Model Pruning and Quantization

Pruning and quantization are two effective techniques for optimizing LLMs, even when specific model data is unavailable.

Pruning removes unnecessary connections from the model, reducing its size and improving speed. Quantization converts the model's weights (parameters) to low-precision formats, making it smaller and faster.

Example: Imagine a large model like a complex train network with many tracks and switches. Pruning cuts down on unnecessary tracks and switches, streamlining the path for trains to travel faster. Quantization removes unnecessary details from the tracks and switches, making them smaller and easier to maintain.
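As a toy illustration of both ideas, the sketch below applies magnitude pruning and symmetric integer quantization to a short list of made-up weights. It is a simplified teaching example under stated assumptions, not the scheme used by any particular LLM format: real quantization formats work on blocks of weights and store extra metadata, and the function names here are hypothetical.

```python
from typing import List, Tuple


def prune_by_magnitude(weights: List[float], fraction: float) -> List[float]:
    """Zero out the smallest-magnitude `fraction` of the weights."""
    n_prune = int(len(weights) * fraction)
    # Indices sorted from smallest to largest absolute value
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    pruned = list(weights)
    for i in order[:n_prune]:
        pruned[i] = 0.0
    return pruned


def quantize_symmetric(weights: List[float], bits: int) -> Tuple[List[int], float]:
    """Map floats to signed integers in [-(2**(bits-1)-1), 2**(bits-1)-1]."""
    q_max = 2 ** (bits - 1) - 1
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / q_max if max_abs > 0 else 1.0
    return [round(w / scale) for w in weights], scale


def dequantize(q_weights: List[int], scale: float) -> List[float]:
    """Recover approximate floats from the integers and the shared scale."""
    return [q * scale for q in q_weights]


# Made-up example weights, chosen only for illustration.
weights = [0.82, -0.05, 0.31, -0.67, 0.02, 0.44]
pruned = prune_by_magnitude(weights, fraction=0.5)
q, scale = quantize_symmetric(weights, bits=4)
restored = dequantize(q, scale)
print("pruned:   ", pruned)
print("quantized:", q)
print("restored: ", [round(w, 2) for w in restored])
```

The restored values differ slightly from the originals. That rounding error is the accuracy cost paid for the smaller representation.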

Experimenting with pruning and quantization may make Llama3 70B more practical on the RTX4000Ada_20GB, although without benchmarks the size of the gain is unknown.
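One way to see why weight precision matters so much on a 20 GB card is a back-of-the-envelope estimate of weight memory: parameter count times bits per weight, divided by 8. The sketch below rests on assumptions: nominal parameter counts of 8 and 70 billion, and about 4.5 bits per weight for a 4-bit format to allow for scale metadata. It also ignores the KV cache and activations, so real usage is higher.

```python
GPU_MEMORY_GB = 20   # RTX4000Ada_20GB
PARAMS_8B = 8e9      # nominal parameter count (assumption)
PARAMS_70B = 70e9    # nominal parameter count (assumption)

# Approximate bits per weight. The 4.5 figure for a 4-bit format is an
# assumption that allows for per-block scale metadata.
BITS_PER_WEIGHT = {"F16": 16.0, "4-bit (assumed 4.5 bits)": 4.5}


def weight_memory_gb(n_params: float, bits_per_weight: float) -> float:
    """Memory needed for the weights alone, in GB (1 GB = 1e9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


for model, n_params in [("Llama3 8B", PARAMS_8B), ("Llama3 70B", PARAMS_70B)]:
    for fmt, bits in BITS_PER_WEIGHT.items():
        gb = weight_memory_gb(n_params, bits)
        verdict = "fits" if gb <= GPU_MEMORY_GB else "does not fit"
        print(f"{model} {fmt}: {gb:.1f} GB of weights, {verdict} in {GPU_MEMORY_GB} GB")
```

Under these assumptions, even a 4-bit Llama3 70B needs roughly twice the card's memory for its weights alone, so part of the model would have to live outside GPU memory or be compressed further.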

Optimizing for Performance: Tips and Strategies

Even without detailed benchmarks, there are general optimization strategies you can implement to enhance the performance of Llama3 70B on the RTX4000Ada_20GB.

- Optimize CUDA settings: Fine-tune CUDA settings for your GPU, focusing on memory allocation, thread configuration, and other parameters to ensure optimal utilization.

- Leverage efficient libraries: Utilize libraries like cuBLAS and cuDNN that are optimized for NVIDIA GPUs.

- Explore different memory configurations: Experiment with different memory allocations for the model and other processes running on the GPU to find the sweet spot for performance.

- Consider using a dedicated GPU: If your RTX4000Ada_20GB is shared with other applications, consider dedicating it to the LLM for optimal performance.

- Utilize efficient data structures: Implement efficient data structures to minimize memory access and improve speed.

- Optimize code for GPU parallelism: Leverage the parallel processing capabilities of the RTX4000Ada_20GB by implementing appropriate GPU-specific optimizations.
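For the memory-configuration tip in particular, a rough planning calculation can help before you start experimenting. The sketch below estimates how many transformer layers of a model might fit on the GPU when the rest is kept in system memory. Everything here is an assumption for illustration: the helper name is hypothetical, the 39.4 GB model size is a rough 4-bit estimate for Llama3 70B, weights are treated as evenly spread across its 80 layers, and 3 GB is reserved for the KV cache and other buffers.

```python
import math


def layers_on_gpu(model_gb: float, n_layers: int,
                  vram_gb: float, reserved_gb: float) -> int:
    """Estimate how many layers fit in GPU memory.

    Assumes weights are spread evenly across layers and that
    `reserved_gb` is set aside for the KV cache and other buffers.
    """
    per_layer_gb = model_gb / n_layers
    usable_gb = max(vram_gb - reserved_gb, 0.0)
    return min(n_layers, math.floor(usable_gb / per_layer_gb))


# Assumed values: ~39.4 GB of 4-bit weights, 80 layers, 20 GB card,
# 3 GB reserved. Adjust all of these for your own setup.
n = layers_on_gpu(model_gb=39.4, n_layers=80, vram_gb=20.0, reserved_gb=3.0)
print(f"Estimated layers on GPU: {n} of 80")
```

A number like this is only a starting point for a runtime's GPU offload setting (for example, the `--n-gpu-layers` option in llama.cpp). The right value should be confirmed by watching actual memory use and throughput.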

Conclusion

While we lack specific performance data for Llama3 70B on the RTX4000Ada_20GB, we can still make informed decisions and optimize its performance.

By understanding the principles of model optimization, exploring different configurations, and implementing practical recommendations, you can unlock the full potential of this powerful LLM on your local machine.

FAQ

Q: What is Llama3 70B?

A: Llama3 70B is a large language model developed by Meta, with roughly 70 billion parameters. It is a versatile model capable of generating human-quality text, answering questions, and performing many other tasks.

Q: What is the RTX4000Ada_20GB?

A: The RTX4000Ada_20GB (NVIDIA RTX 4000 Ada Generation) is a workstation graphics card from NVIDIA with 20 GB of memory. Its processing power and memory capacity make it well suited to running demanding applications such as LLMs.

Q: What is quantization?

A: Quantization is a technique used in machine learning to reduce the size and improve the speed of LLMs. It involves converting the model's weights (parameters) to low-precision formats, which reduces the memory footprint and speeds up calculations.

Q: How do I choose the right model and device configuration?

A: The best configuration depends on the specific task and your performance requirements. For tasks that require high processing speed, consider using the F16 configuration. For tasks that prioritize token generation speed, the Q4KM configuration might be more appropriate.

Q: What if my GPU is shared with other applications?

A: If you are running other applications on the same GPU, you might experience performance degradation. Dedicate your RTX4000Ada_20GB to the LLM for optimal results.

Q: Can I improve the performance of Llama3 70B even more?

A: Yes, you can continuously optimize your LLM setup by experimenting with different configurations, exploring new optimization techniques, and leveraging the latest advancements in the field.
