Optimizing Llama3 70B for NVIDIA L40S 48GB: A Step-by-Step Approach

Introduction

Welcome, fellow AI enthusiasts! In the world of Large Language Models (LLMs), performance is king. We're taking a deep dive into optimizing Llama3 70B, a powerful language model, for the NVIDIA L40S_48GB GPU. We'll look at how Llama3 70B performs on L40S_48GB, unravel the key factors that influence its speed and efficiency, and provide practical recommendations for getting the most out of this pairing.

Think of LLMs like digital wizards, capable of generating compelling text, translating languages, and even writing code. But just like any wizard, they need the right tools – and the NVIDIA L40S_48GB GPU is a powerful wand, ready to amplify those wizardly powers!

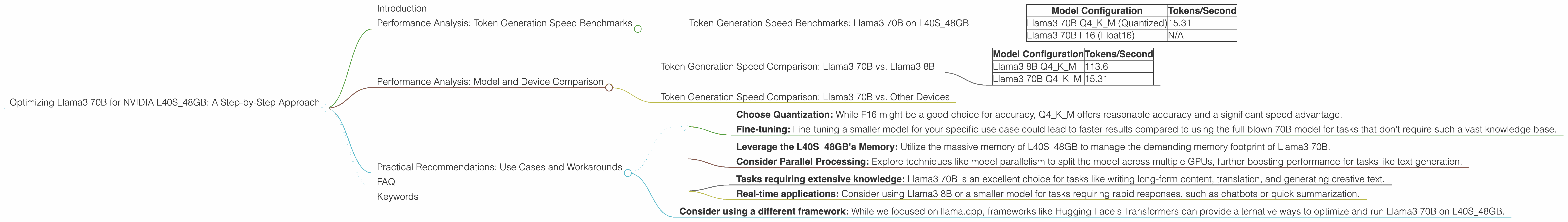

Performance Analysis: Token Generation Speed Benchmarks

Let's start with the core of our quest: Token Generation Speed. This is the number of tokens (word pieces) the LLM produces per second: the higher the number, the quicker you get those creative outputs!

Token Generation Speed Benchmarks: Llama3 70B on L40S_48GB

To understand the efficiency of L40S_48GB, we've analyzed the token generation speeds of Llama3 70B under different configurations.

| Model Configuration | Tokens/Second |
|---|---|
| Llama3 70B Q4_K_M (Quantized) | 15.31 |
| Llama3 70B F16 (Float16) | N/A |

As you can see, Llama3 70B with Q4_K_M quantization reaches 15.31 tokens per second on L40S_48GB, a respectable speed for a model of this size. Unfortunately, we haven't been able to find data for the F16 configuration, which serves as the unquantized accuracy baseline. That is not surprising: at 16 bits per weight, 70 billion parameters need roughly 140 GB for the weights alone, far more than the 48 GB a single L40S provides, so F16 cannot run entirely on one card. We'll share any Llama3 70B F16 results for L40S_48GB as they become available.
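To see why the quantized model is the one that gets benchmarked on a 48 GB card, here is a back-of-the-envelope sketch of weight sizes. The function name is made up for illustration, 70e9 is the nominal parameter count, and the figure of about 4.85 bits per weight for Q4_K_M is an assumed average, not a number from the benchmark data.

```python
def weight_memory_gb(num_params: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9


NUM_PARAMS = 70e9      # nominal parameter count for a "70B" model (assumption)
GPU_MEMORY_GB = 48.0   # memory of one L40S_48GB

# 16 bits for F16; ~4.85 bits is an assumed average for Q4_K_M.
for name, bits in [("F16", 16.0), ("Q4_K_M", 4.85)]:
    size = weight_memory_gb(NUM_PARAMS, bits)
    verdict = "fits" if size < GPU_MEMORY_GB else "does not fit"
    print(f"{name}: ~{size:.1f} GB of weights, {verdict} in {GPU_MEMORY_GB:.0f} GB")
```

This counts weights only. The context (KV cache) and compute buffers need room on top, so real headroom is smaller than the raw difference suggests.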

What's Quantization?

Quantization is a technique that shrinks a model by storing its weights at lower numeric precision, usually with only a small loss of accuracy. It's like replacing a detailed picture with a slightly pixelated version that still retains the essence of the image. Q4_K_M is one of llama.cpp's quantization formats: it stores most weights in about 4 bits each instead of 16, with some tensors kept at higher precision, trading a little accuracy for a much smaller memory footprint and less data to move per generated token.
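The idea can be illustrated with a toy example. The sketch below is a deliberately simplified symmetric 4-bit scheme with one scale per block. It is not the actual Q4_K_M format, and the function names are hypothetical.

```python
def quantize_block(values, bits=4):
    """Map a block of floats to signed integers of `bits` bits plus one scale."""
    qmax = 2 ** (bits - 1) - 1                         # 7 for 4 bits
    scale = max(abs(v) for v in values) / qmax or 1.0  # fall back to 1.0 for all-zero blocks
    codes = [max(-qmax - 1, min(qmax, round(v / scale))) for v in values]
    return scale, codes


def dequantize_block(scale, codes):
    """Recover approximate floats from the stored scale and integer codes."""
    return [scale * c for c in codes]


weights = [0.12, -0.50, 0.33, 0.07]
scale, codes = quantize_block(weights)
restored = dequantize_block(scale, codes)
for original, approx in zip(weights, restored):
    print(f"{original:+.3f} -> {approx:+.3f}")
```

Each restored value differs from the original by at most half a quantization step, which is the "pixelation" the analogy describes.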

Performance Analysis: Model and Device Comparison

Now, let's see how Llama3 70B compares to other models and how it fares on other devices (we'll stick to L40S_48GB for now).

Token Generation Speed Comparison: Llama3 70B vs. Llama3 8B

| Model Configuration | Tokens/Second |
|---|---|
| Llama3 8B Q4_K_M | 113.6 |
| Llama3 70B Q4_K_M | 15.31 |

The numbers clearly highlight the trade-off between model size and speed. The smaller Llama3 8B model generates 113.6 tokens per second, roughly 7.4 times faster than the 15.31 tokens per second of Llama3 70B. However, the larger model packs more knowledge and can handle more complex tasks, which generally means more accurate and insightful outputs.
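To put those rates in everyday terms, this small sketch converts them into waiting time for a response. The 500-token response length is an arbitrary example, and the estimate covers generation only, not prompt processing.

```python
# Token generation speeds from the table above (tokens per second).
BENCHMARKS_TOK_PER_S = {
    "Llama3 8B Q4_K_M": 113.6,
    "Llama3 70B Q4_K_M": 15.31,
}


def generation_time_s(num_tokens: int, tokens_per_second: float) -> float:
    """Seconds needed to generate `num_tokens` at a steady rate."""
    return num_tokens / tokens_per_second


RESPONSE_TOKENS = 500  # example response length (assumption)
for model, rate in BENCHMARKS_TOK_PER_S.items():
    seconds = generation_time_s(RESPONSE_TOKENS, rate)
    print(f"{model}: {seconds:.1f} s for {RESPONSE_TOKENS} tokens")
```

At these rates the 70B model needs a bit over half a minute for such a response, while the 8B model finishes in a few seconds.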

Token Generation Speed Comparison: Llama3 70B vs. Other Devices

We don't have any data for Llama3 70B performance on other devices. We will keep this document updated as new data becomes available.

Practical Recommendations: Use Cases and Workarounds

So, how can you make the most of Llama3 70B on L40S_48GB? Here are some tips and strategies:

1. Optimize for Speed:

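- Choose Quantization: F16 preserves the most accuracy, but its weights (roughly 140 GB for a 70B model) do not fit in 48 GB. Q4_K_M offers reasonable accuracy, fits on the card, and is the configuration behind the 15.31 tokens per second benchmark.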

- Fine-tuning: Fine-tuning a smaller model for your specific use case could lead to faster results compared to using the full-blown 70B model for tasks that don't require such a vast knowledge base.

2. Embrace the Power of L40S_48GB:

- Leverage the L40S_48GB's Memory: Use the card's 48 GB to keep the quantized Llama3 70B weights entirely on the GPU, and budget the remaining memory for the context (KV cache).

- Consider Parallel Processing: Explore techniques like model parallelism to split the model across multiple GPUs, further boosting performance for tasks like text generation.
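A rough memory budget helps decide whether one card is enough or whether splitting across GPUs is needed. The sketch below estimates the KV cache for a given context length. The architecture values (80 layers, 8 key/value heads, head dimension 128), the 16-bit cache, and the 42.4 GB weight size are assumptions for illustration and should be checked against the model files you actually use.

```python
def kv_cache_gb(context_tokens: int, n_layers: int = 80, n_kv_heads: int = 8,
                head_dim: int = 128, bytes_per_value: int = 2) -> float:
    """Approximate KV cache size in GB (1 GB = 1e9 bytes) for one sequence."""
    # Factor 2: one key vector and one value vector per layer and KV head.
    bytes_per_token = 2 * n_layers * n_kv_heads * head_dim * bytes_per_value
    return context_tokens * bytes_per_token / 1e9


GPU_MEMORY_GB = 48.0   # one L40S_48GB
WEIGHTS_GB = 42.4      # assumed size of the Q4_K_M weights

for context in (2048, 8192):
    cache = kv_cache_gb(context)
    free = GPU_MEMORY_GB - WEIGHTS_GB - cache
    print(f"context {context}: cache ~{cache:.2f} GB, ~{free:.2f} GB left for buffers")
```

If the remaining headroom turns negative for your context length, that is the point where a shorter context, a smaller quantization, or a second GPU becomes worth considering.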

3. Choose your Use Case Wisely:

- Tasks requiring extensive knowledge: Llama3 70B is an excellent choice for tasks like writing long-form content, translation, and generating creative text.

- Real-time applications: Consider using Llama3 8B or a smaller model for tasks requiring rapid responses, such as chatbots or quick summarization.
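The choice between the two models can be framed as a latency budget. This is a minimal sketch using the benchmark speeds from this article. The function name and the example budgets are hypothetical, and prompt processing time is ignored.

```python
# (model name, tokens per second), ordered from most to least capable.
MODELS = [
    ("Llama3 70B Q4_K_M", 15.31),
    ("Llama3 8B Q4_K_M", 113.6),
]


def pick_model(response_tokens: int, max_seconds: float):
    """Return the most capable model that meets the latency budget, or None."""
    for name, tokens_per_second in MODELS:
        if response_tokens / tokens_per_second <= max_seconds:
            return name
    return None


print(pick_model(200, 30))   # long-form drafting with a relaxed budget
print(pick_model(200, 5))    # chatbot-style reply with a tight budget
```

With a relaxed budget the 70B model qualifies. With a tight one only the 8B model does, and for very long responses on a tight budget neither may fit.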

4. Explore Alternative Frameworks:

- Consider using a different framework: While we focused on llama.cpp, frameworks like Hugging Face's Transformers can provide alternative ways to optimize and run Llama3 70B on L40S_48GB.

FAQ

Q: What are the trade-offs between using Q4_K_M and F16 precision models?

A: Q4_K_M quantization uses far less memory and typically generates tokens faster, but it sacrifices some accuracy, making it suitable for tasks that don't require pinpoint precision. F16 provides higher accuracy but needs more than three times the memory (too much for a 70B model on a single L40S_48GB) and is typically slower, so it suits setups where accuracy is paramount and the hardware can hold the model.

Q: Can I use Llama3 70B for real-time applications?

A: While its performance on L40S_48GB is impressive, Llama3 70B may not be ideal for real-time applications requiring near-instantaneous responses. Consider smaller models or explore techniques like model compression to improve real-time performance.

Q: How can I optimize Llama3 70B for even faster performance?

A: Explore techniques like model parallelism, optimization libraries like TensorRT, and cloud-based solutions designed for LLM inference. You might also want to consider using specialized hardware like TPUs.

Q: Is there any specific hardware configuration that will be best for Llama3 70B?

A: It depends on your specific needs. L40S_48GB is a good choice due to its memory capacity and speed, but newer GPUs with higher memory bandwidth and optimized Tensor Cores might offer improved performance.

Keywords

Llama3 70B, L40S_48GB, NVIDIA, GPU, Token Generation Speed, Quantization, Q4_K_M, F16, Performance Analysis, Model Comparison, Practical Recommendations, Use Cases, Workarounds, Large Language Models, LLM, Inference, AI, Machine Learning, Deep Learning, NLP, Natural Language Processing, Text Generation, Code Generation, Translation