Optimizing Llama3 70B for NVIDIA A40 48GB: A Step-by-Step Approach

Introduction

The world of Large Language Models (LLMs) is buzzing with excitement, and for good reason. These mind-bending marvels can generate text, translate languages, write different kinds of creative content, and even answer your questions in an informative way. But with the increasing power of these models, the need for efficient hardware to run them locally becomes paramount.

This article dives deep into optimizing Llama3 70B, a massive language model, for the mighty NVIDIA A40_48GB GPU. We'll analyze its performance, explore different quantization techniques, and provide practical recommendations for developers looking to unlock the full potential of this powerful model on this specific hardware.

Performance Analysis: Llama3 70B and NVIDIA A40_48GB

Let's get into the nitty-gritty of performance analysis, focusing on token generation speed. Token generation is the process by which the model produces its output one token (a word or word fragment) at a time. Think of it like the model's typing speed: a higher speed means faster conversations, quicker responses, and a more enjoyable user experience.
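To make the "typing speed" idea concrete, here is a minimal sketch of how tokens per second could be measured. The `fake_generate` function is a hypothetical stand-in for a real model call and is not part of any library; with a real model, a timing like this would also include prompt processing, so it will not match a pure generation benchmark exactly.

```python
import time
from typing import Callable, List


def tokens_per_second(generate: Callable[[str, int], List[str]],
                      prompt: str,
                      max_new_tokens: int) -> float:
    """Time one generation call and return generated tokens per second."""
    start = time.perf_counter()
    tokens = generate(prompt, max_new_tokens)
    elapsed = time.perf_counter() - start
    return len(tokens) / elapsed


def fake_generate(prompt: str, max_new_tokens: int) -> List[str]:
    """Hypothetical stand-in for a model: emits one token every 5 ms."""
    out = []
    for i in range(max_new_tokens):
        time.sleep(0.005)  # simulate the cost of producing one token
        out.append(f"tok{i}")
    return out


rate = tokens_per_second(fake_generate, "Hello", 20)
print(f"{rate:.1f} tokens/second")
```

Swapping `fake_generate` for a function that wraps your own inference call gives a rough figure to compare against the benchmarks in this article.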

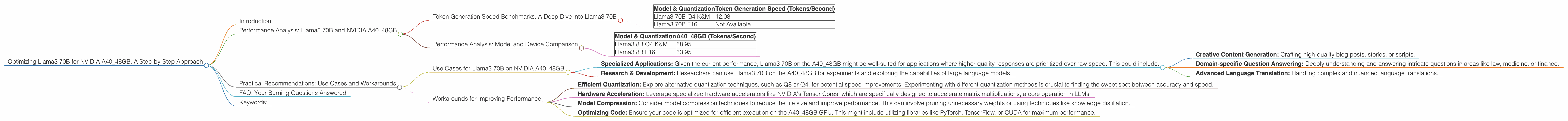

Token Generation Speed Benchmarks: A Deep Dive into Llama3 70B

Table 1: Token Generation Speed (Tokens/Second) on NVIDIA A40_48GB

| Model & Quantization | Token Generation Speed (Tokens/Second) |
|---|---|
| Llama3 70B Q4_K_M | 12.08 |
| Llama3 70B F16 | Not Available |

Observations:

- Llama3 70B with Q4_K_M quantization generates 12.08 tokens/second on the NVIDIA A40_48GB GPU, which is respectable for a model of this size.

- No F16 result is available for Llama3 70B. F16 is the unquantized half-precision format rather than a quantization: at 2 bytes per parameter, the weights of a 70B model alone need roughly 140 GB, far more than the 48 GB on this card, so the model cannot be loaded entirely on the GPU.
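The memory arithmetic behind these observations can be sketched in a few lines. This is a rough estimate that counts only the weights and assumes a nominal 70 billion parameters; real 4-bit formats also store scaling data, so their files are somewhat larger than the nominal figure, and inference needs extra memory for the context cache.

```python
def weight_memory_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate size of the weights alone, in GB (1 GB = 1e9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


A40_VRAM_GB = 48
N_PARAMS_70B = 70e9  # nominal parameter count, assumed from the model name

for label, bits in [("F16", 16.0), ("4-bit (nominal)", 4.0)]:
    size = weight_memory_gb(N_PARAMS_70B, bits)
    verdict = "fits" if size < A40_VRAM_GB else "does not fit"
    print(f"{label}: ~{size:.0f} GB of weights, {verdict} in {A40_VRAM_GB} GB")
```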

What is Quantization?

Think of quantization as a way to shrink a model's size without losing too much accuracy. It's like making a high-resolution photo smaller, but still preserving its main features. In the case of LLMs, quantization reduces the number of bits used to represent the model's weights, resulting in a smaller file size and potentially faster processing, since fewer bytes have to be read from GPU memory for each generated token.
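As an illustration of the idea, here is a minimal sketch of symmetric 4-bit quantization applied to a small block of weights. It is a deliberately simplified scheme with made-up example weights, not the actual Q4_K_M format, which is more elaborate.

```python
from typing import List, Tuple


def quantize_block(weights: List[float], bits: int = 4) -> Tuple[List[int], float]:
    """Map floats to small signed integers plus one shared scale factor."""
    qmax = 2 ** (bits - 1) - 1  # 7 for 4-bit
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    # Round to the nearest integer code and clamp to the representable range.
    codes = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return codes, scale


def dequantize_block(codes: List[int], scale: float) -> List[float]:
    """Recover approximate float weights from the integer codes."""
    return [c * scale for c in codes]


weights = [0.12, -0.53, 0.98, -0.07, 0.31, -0.88, 0.45, 0.02]  # example values
codes, scale = quantize_block(weights)
restored = dequantize_block(codes, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print("4-bit codes:", codes)
print("max round-trip error:", round(max_error, 4))
```

Each weight is now stored as a 4-bit code instead of a 16-bit float, and the round-trip error shows the accuracy that was traded for the smaller size.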

Performance Analysis: Model and Device Comparison

Table 2: Token Generation Speed (Tokens/Second) Comparison

| Model & Quantization | A40_48GB (Tokens/Second) |
|---|---|
| Llama3 8B Q4_K_M | 88.95 |
| Llama3 8B F16 | 33.95 |

Observations:

- Compared with its smaller counterpart, Llama3 70B is much slower: Llama3 8B Q4_K_M reaches 88.95 tokens/second against 12.08 for Llama3 70B Q4_K_M, roughly 7.4 times faster. This is expected, as larger models require more memory traffic and computation for every generated token.

- The gap between Llama3 8B Q4_K_M (88.95 tokens/second) and Llama3 8B F16 (33.95 tokens/second) is substantial, about 2.6 times. This highlights how much quantization can improve generation speed on the same hardware.
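The ratios behind these observations can be checked with a short calculation. The speeds are copied from Tables 1 and 2; the `speedup` helper is only an illustrative name.

```python
# Token generation speeds (tokens/second) copied from Tables 1 and 2.
speeds = {
    "Llama3 70B Q4_K_M": 12.08,
    "Llama3 8B Q4_K_M": 88.95,
    "Llama3 8B F16": 33.95,
}


def speedup(fast: str, slow: str) -> float:
    """How many times faster the first configuration is than the second."""
    return speeds[fast] / speeds[slow]


size_ratio = speedup("Llama3 8B Q4_K_M", "Llama3 70B Q4_K_M")
quant_ratio = speedup("Llama3 8B Q4_K_M", "Llama3 8B F16")

print(f"8B Q4_K_M vs 70B Q4_K_M: {size_ratio:.2f}x")
print(f"8B Q4_K_M vs 8B F16:     {quant_ratio:.2f}x")
```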

Practical Recommendations: Use Cases and Workarounds

Now that we've analyzed the performance of Llama3 70B on the NVIDIA A40_48GB, let's discuss practical use cases and workarounds.

Use Cases for Llama3 70B on NVIDIA A40_48GB

- Specialized Applications: Given the current performance, Llama3 70B on the A40_48GB might be well-suited for applications where higher quality responses are prioritized over raw speed. This could include:

  - Creative Content Generation: Crafting high-quality blog posts, stories, or scripts.

  - Domain-specific Question Answering: Deeply understanding and answering intricate questions in areas like law, medicine, or finance.

  - Advanced Language Translation: Handling complex and nuanced language translations.

- Research & Development: Researchers can use Llama3 70B on the A40_48GB for experiments and exploring the capabilities of large language models.
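To judge whether a use case tolerates this speed, it helps to translate tokens per second into waiting time. The sketch below does that for a few hypothetical response lengths, assuming the steady generation rate from Table 1 and ignoring prompt processing time.

```python
def generation_time_seconds(n_tokens: int, tokens_per_second: float) -> float:
    """Time to generate n_tokens at a steady rate (prompt processing excluded)."""
    return n_tokens / tokens_per_second


RATE_70B_Q4 = 12.08  # tokens/second, from Table 1

for n_tokens in (100, 500, 1500):  # hypothetical response lengths
    secs = generation_time_seconds(n_tokens, RATE_70B_Q4)
    print(f"{n_tokens:>5} tokens: ~{secs:.0f} s")
```

A short answer arrives in seconds, while a long-form piece of around 1500 tokens takes about two minutes, which is why quality-focused, non-interactive tasks are the better fit.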

Workarounds for Improving Performance

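- Efficient Quantization: The benchmark above already uses a 4-bit format (Q4_K_M). An 8-bit (Q8) version of a 70B model needs at least 70 GB for its weights and will not fit in 48 GB, so on this card the realistic alternatives are other 4-bit variants or lower-bit formats. Experimenting with different quantization methods is crucial to finding the sweet spot between accuracy and speed.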

- Hardware Acceleration: Make sure your inference stack takes advantage of the GPU's Tensor Cores, which are specifically designed to accelerate matrix multiplications, a core operation in LLMs.

- Model Compression: Consider model compression techniques to reduce the file size and improve performance. This can involve pruning unnecessary weights or using techniques like knowledge distillation.

- Optimizing Code: Ensure your code is optimized for efficient execution on the A40_48GB GPU. This might include using GPU-accelerated frameworks such as PyTorch or TensorFlow, which run on top of CUDA, for maximum performance.

FAQ: Your Burning Questions Answered

Q: What is a Large Language Model (LLM)?

A: Imagine a computer program that can understand and generate human-like text. That's an LLM! It's trained on massive amounts of data, like books, articles, and websites, to learn patterns and relationships in language.

Q: Why do we need to optimize LLMs for specific devices?

A: Running a complex LLM requires significant computational resources. Optimizing it for a specific device, like the NVIDIA A40_48GB, ensures you utilize the available hardware efficiently and get the best performance possible.

Q: What are the benefits of running LLMs locally?

A: Local models offer several advantages:

- Privacy: No need to send your data to the cloud.

- Speed: No network round trip to a remote service, although generation speed itself depends on your hardware.

- Offline Access: Work even without an internet connection.

Q: Can I use Llama3 70B on my personal computer?

A: It's possible, but only with a powerful GPU. Even with 4-bit quantization, the benchmark in this article relied on a 48GB card, so plan for a similar amount of GPU memory.

Keywords:

Llama3, 70B, NVIDIA, A40_48GB, GPU, Token Generation Speed, Quantization, Q4, F16, Performance, Optimization, Deep Dive, LLM, Machine Learning, AI, Natural Language Processing, NLP