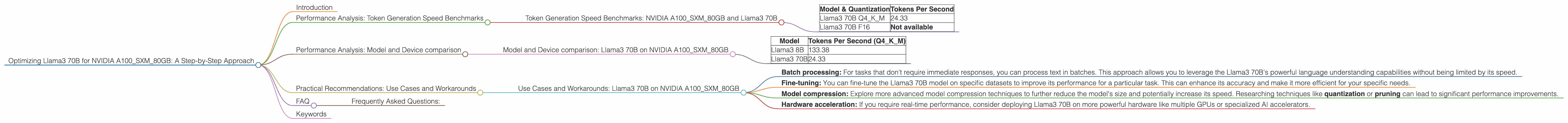

Optimizing Llama3 70B for NVIDIA A100 SXM 80GB: A Step-by-Step Approach

Introduction

The world of large language models (LLMs) is moving fast, with new models and techniques appearing at a dizzying pace. These models can generate human-like text, translate languages, write creative content, and answer questions. As they grow in size and complexity, running them efficiently on local hardware becomes a crucial challenge.

In this deep dive, we'll focus on optimizing the Llama3 70B model for the NVIDIA A100 SXM 80GB GPU. This popular data-center GPU is a go-to choice for AI researchers and practitioners because it offers high performance and 80 GB of memory. By understanding how this pairing performs, we can get the most out of Llama3 70B across a range of applications.

Performance Analysis: Token Generation Speed Benchmarks

Token generation speed is a critical metric for evaluating LLM performance. It measures how many tokens (words or parts of words) the model generates per second. This speed matters most for interactive applications like chatbots, where users watch the response appear in real time.
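
To measure this metric yourself, you can time a token stream. The sketch below assumes a hypothetical `stream` callable that yields tokens one at a time from your inference backend. It starts the clock at the first token, so prompt processing (the time to first token) is left out of the decode rate.

```python
import time
from typing import Callable, Iterable


def measure_decode_tps(stream: Callable[[], Iterable[str]]) -> float:
    """Return generated tokens per second, timed from the first token to the last.

    `stream` is a hypothetical zero-argument callable wrapping your backend's
    streaming API; it must yield tokens one at a time as they are generated.
    """
    first = last = None
    n_tokens = 0
    for _ in stream():
        now = time.perf_counter()
        if first is None:
            first = now  # clock starts at the first token, excluding prompt processing
        last = now
        n_tokens += 1
    if n_tokens < 2 or last <= first:
        raise ValueError("need at least two tokens to measure a decode rate")
    # n_tokens - 1 intervals elapse between the first and the last token
    return (n_tokens - 1) / (last - first)


if __name__ == "__main__":
    def fake_stream():
        # Stand-in generator: roughly one token every 10 ms
        for i in range(10):
            time.sleep(0.01)
            yield f"tok{i}"

    print(f"{measure_decode_tps(fake_stream):.1f} tokens/s")
```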

Token Generation Speed Benchmarks: NVIDIA A100 SXM 80GB and Llama3 70B

Here are the token generation speed results for Llama3 70B on the NVIDIA A100 SXM 80GB at two quantization levels, Q4_K_M and F16, which show how quantization affects performance.

| Model & Quantization | Tokens per Second |
|---|---|
| Llama3 70B Q4_K_M | 24.33 |
| Llama3 70B F16 | Not available |

Quantization shrinks a model by storing its weights with fewer bits. This reduces memory usage and usually speeds up inference. Q4_K_M is one of llama.cpp's "k-quant" formats. It stores weights at roughly 4 bits in small blocks, each with its own scale factors, and the "M" (medium) variant keeps some sensitive tensors at higher precision. F16 is 16-bit half-precision floating point. It is essentially unquantized, so it preserves accuracy but uses several times more memory. No F16 result is available for this combination. The most likely reason is that 70 billion weights at 2 bytes each take about 140 GB, which exceeds the A100's 80 GB.
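
The memory arithmetic behind the missing F16 result can be checked in a few lines of Python. This is a rough sketch under stated assumptions. It counts weights only (no KV cache or activations) and uses a nominal 70 billion parameters. It also assumes about 4.8 bits per weight for Q4_K_M, which is only an approximate average because the format mixes block types.

```python
def weight_memory_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate weight storage in GB (1 GB = 1e9 bytes).

    Weights only: the KV cache, activations, and runtime buffers need extra memory.
    """
    return n_params * bits_per_weight / 8 / 1e9


GPU_MEMORY_GB = 80.0  # NVIDIA A100 SXM 80GB
N_PARAMS = 70e9       # nominal parameter count for Llama3 70B

# 4.8 bits/weight for Q4_K_M is an assumed average; the format mixes block types.
FORMATS = {"F16": 16.0, "Q4_K_M": 4.8}

if __name__ == "__main__":
    for name, bpw in FORMATS.items():
        gb = weight_memory_gb(N_PARAMS, bpw)
        verdict = "fits" if gb < GPU_MEMORY_GB else "does not fit"
        print(f"{name:<7} ~{gb:.0f} GB of weights: {verdict} in {GPU_MEMORY_GB:.0f} GB")
```

This prints about 140 GB for F16, which does not fit. It prints about 42 GB for Q4_K_M, which fits and leaves headroom for the KV cache.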

With Q4_K_M quantization, Llama3 70B generates 24.33 tokens per second on the NVIDIA A100 SXM 80GB. Single-stream token generation is limited mostly by how fast the weights can be read from memory. This result therefore reflects both the GPU's high memory bandwidth and the compactness of the Q4_K_M format.
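
A common back-of-the-envelope check for single-stream generation divides memory bandwidth by the number of weight bytes read per token. The sketch below makes three assumptions. It uses NVIDIA's published figure of about 2,039 GB/s for the A100 80GB SXM. It reuses the approximation of about 4.8 bits per weight for Q4_K_M. It also assumes every token streams all weights exactly once. KV-cache reads and kernel overheads are ignored, so the result is a ceiling rather than a prediction.

```python
def decode_ceiling_tps(weight_gb: float, bandwidth_gb_s: float) -> float:
    """Upper bound on batch-size-1 decode speed if each token reads every weight once."""
    return bandwidth_gb_s / weight_gb


A100_SXM_80GB_BW_GB_S = 2039.0            # NVIDIA's published memory bandwidth figure (assumed)
Q4_K_M_WEIGHTS_GB = 70e9 * 4.8 / 8 / 1e9  # ~42 GB, assuming ~4.8 bits/weight
MEASURED_TPS = 24.33                      # Llama3 70B Q4_K_M, from the benchmark table

if __name__ == "__main__":
    ceiling = decode_ceiling_tps(Q4_K_M_WEIGHTS_GB, A100_SXM_80GB_BW_GB_S)
    print(f"ceiling ~{ceiling:.1f} tok/s; measured is {MEASURED_TPS / ceiling:.0%} of it")
```

Under these assumptions the ceiling comes out at roughly 48 tokens per second, so the measured 24.33 is about half of it. That is a plausible fraction once real-world overheads are included.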

Performance Analysis: Model and Device Comparison

Model and Device Comparison: Llama3 70B on NVIDIA A100 SXM 80GB

To put the 70B result in context, we compare it with its smaller sibling, Llama3 8B, on the same NVIDIA A100 SXM 80GB. Both models use Q4_K_M quantization so that the comparison is fair.

| Model | Tokens per Second (Q4_K_M) |
|---|---|
| Llama3 8B | 133.38 |
| Llama3 70B | 24.33 |

As expected, Llama3 8B generates tokens much faster than Llama3 70B, at about 5.5 times the rate. The smaller model has fewer parameters, so each generated token requires reading and computing over far less data.
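
The speedup can be set against the difference in model size. This sketch uses the measured speeds from the table together with nominal parameter counts of 8B and 70B, which only approximate the actual model sizes.

```python
from typing import Tuple


def speed_vs_size(small_tps: float, large_tps: float,
                  small_params: float, large_params: float) -> Tuple[float, float]:
    """Return (speedup of the small model, parameter ratio of the large model)."""
    return small_tps / large_tps, large_params / small_params


if __name__ == "__main__":
    # Measured Q4_K_M speeds from the table; parameter counts are nominal.
    speedup, param_ratio = speed_vs_size(133.38, 24.33, 8e9, 70e9)
    print(f"8B is {speedup:.2f}x faster; 70B has {param_ratio:.2f}x the parameters")
```

The 8B model is about 5.48 times faster, while the 70B model has 8.75 times the parameters. One plausible reading is that the smaller model does not saturate memory bandwidth, so fixed per-token costs take up a larger share of its time. The benchmark itself does not measure this directly.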

However, smaller models like Llama3 8B usually give up some output quality and reasoning ability. For tasks that demand a deeper understanding of language and concepts, Llama3 70B may be the better choice despite its slower inference.

Practical Recommendations: Use Cases and Workarounds

Use Cases and Workarounds: Llama3 70B on NVIDIA A100 SXM 80GB

Because it generates tokens more slowly, Llama3 70B may be less suited than smaller models to latency-sensitive applications on the NVIDIA A100 SXM 80GB. It can still be valuable in many scenarios.

Here are some potential use cases and workarounds:

- Batch processing: For tasks that don't need immediate responses, process requests in batches. Generating each token requires reading the model's weights, and decoding several sequences together lets one pass over those weights serve the whole batch. This raises total throughput, although each individual response is no faster.

- Fine-tuning: Fine-tune Llama3 70B on task-specific datasets to improve its accuracy for your use case. Fine-tuning improves output quality, but it does not raise raw token generation speed.

- Model compression: Explore more aggressive compression, such as lower-bit quantization formats or pruning, to shrink the model further and potentially increase its speed. Check the effect on accuracy as you go.

- Hardware acceleration: If you need faster real-time performance, consider splitting Llama3 70B across multiple GPUs or deploying it on specialized AI accelerators.
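
The batch-processing idea can be sketched as a small driver that groups prompts and hands each group to the backend. `generate_batch` is a hypothetical stand-in for whatever batched generation call your inference stack provides. The helper only handles grouping and keeps outputs in input order.

```python
from typing import Callable, Iterator, List, Sequence


def batched(items: Sequence[str], batch_size: int) -> Iterator[Sequence[str]]:
    """Yield consecutive slices of at most `batch_size` items."""
    if batch_size < 1:
        raise ValueError("batch_size must be >= 1")
    for start in range(0, len(items), batch_size):
        yield items[start:start + batch_size]


def run_in_batches(
    prompts: Sequence[str],
    generate_batch: Callable[[Sequence[str]], List[str]],
    batch_size: int = 8,
) -> List[str]:
    """Send prompts to `generate_batch` in groups and collect outputs in input order.

    `generate_batch` is hypothetical: it should decode a whole group together so
    that each pass over the model weights serves every prompt in the group.
    """
    outputs: List[str] = []
    for batch in batched(prompts, batch_size):
        results = generate_batch(batch)
        if len(results) != len(batch):
            raise RuntimeError("backend returned a different number of outputs than prompts")
        outputs.extend(results)
    return outputs


if __name__ == "__main__":
    def echo_backend(batch: Sequence[str]) -> List[str]:
        # Stand-in backend: upper-cases each prompt instead of running a model
        return [p.upper() for p in batch]

    print(run_in_batches(["a", "b", "c"], echo_backend, batch_size=2))  # ['A', 'B', 'C']
```

Batch size is a tuning knob. Larger batches tend to raise throughput until compute or KV-cache memory becomes the limit, so it is worth measuring a few sizes on your own workload.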

FAQ


Q: Can I use the Llama3 70B model for real-time applications on the NVIDIA A100 SXM 80GB?

A: It is possible, but at about 24 tokens per second a single A100 may fall short for latency-sensitive or multi-user workloads. Batch processing and more aggressive quantization can improve throughput. Fine-tuning can improve accuracy for your task, but it will not make generation faster.

Q: What are the benefits of using the NVIDIA A100 SXM 80GB with the Llama3 70B model?

A: The NVIDIA A100 SXM 80GB is a powerful platform for large models like Llama3 70B. Its 80 GB of memory holds the Q4_K_M version of the model on a single GPU. Its high memory bandwidth drives token generation speed, and its Tensor Cores accelerate the matrix math at the heart of AI workloads.

Q: What are some other popular LLMs that can be optimized for local devices?

A: Open-weight models such as Mistral 7B, Mixtral 8x7B, and Google's Gemma family can also be run and optimized locally. Closed models like GPT-3 cannot, because their weights are not publicly released. How any of these models performs locally depends on model size, quantization level, and hardware capabilities.

Q: Where can I find more information about optimizing LLMs for local devices?

A: Useful resources include the NVIDIA Developer website, the Hugging Face Hub and its Transformers library, and the llama.cpp project, which defines the Q4_K_M quantization format discussed here.

Keywords

LLM, Llama3 70B, NVIDIA A100 SXM 80GB, token generation speed, quantization, Q4_K_M, F16, model compression, fine-tuning, batch processing, GPU, AI, performance optimization, inference, deep learning.