Optimizing Llama3 70B for NVIDIA A100 PCIe 80GB: A Step by Step Approach

Introduction

The world of large language models (LLMs) is buzzing with excitement, and for good reason. These AI-powered marvels can generate human-like text, translate languages, write different kinds of creative content, and answer your questions in an informative way. But when it comes to running these models locally on your machine, the real challenge begins!

This article dives deep into the performance of Llama3 70B, a powerful LLM, on the NVIDIA A100 PCIe 80GB GPU, a beast in the world of graphics cards. We'll explore the intricacies of model optimization, analyze token generation speeds, and provide practical recommendations for using this powerful combination effectively. Buckle up, geeks, this is going to be a wild ride!

Performance Analysis: Token Generation Speed Benchmarks

Imagine LLMs as verbal acrobats performing amazing feats of language manipulation. To measure their agility, we look at their token generation speed: how many tokens (words or pieces of words) they can produce per second. Let's see how Llama3 70B performs on the NVIDIA A100 PCIe 80GB:

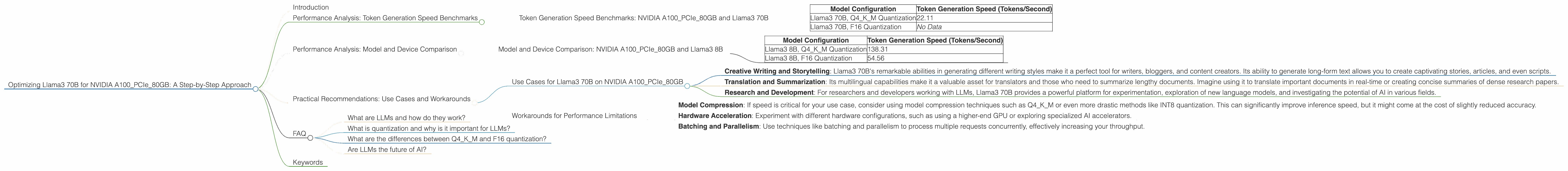

Token Generation Speed Benchmarks: NVIDIA A100 PCIe 80GB and Llama3 70B

| Model Configuration | Token Generation Speed (Tokens/Second) |
|---|---|
| Llama3 70B, Q4_K_M Quantization | 22.11 |
| Llama3 70B, F16 Quantization | No Data |

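- Q4_K_M Quantization: A 4-bit quantization format that stores each weight in roughly 4 to 5 bits instead of 16. This shrinks the model to about a third of its F16 size, so it needs less memory and generates tokens faster, at some cost in accuracy.

- F16 Quantization: Strictly speaking not quantization at all, but 16-bit (half-precision) floating point. It is the usual baseline that quantized versions are compared against: it preserves the most accuracy but needs 2 bytes for every parameter.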
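To make the size difference concrete, here is a rough back-of-the-envelope sketch of how much memory the weights alone would need in each format. The parameter counts are nominal, and the 4.8 bits per weight used for the 4-bit format is an assumed approximation, not a figure from the benchmark; the KV cache and activations need additional memory on top.

```python
GPU_MEMORY_GB = 80  # memory on the A100 PCIe 80GB


def weight_memory_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate memory needed for the weights alone, in GB (1e9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


# Assumed, approximate values for illustration only.
FORMATS = {"F16": 16.0, "4-bit (approx. 4.8 bits/weight)": 4.8}
MODELS = {"Llama3 8B": 8e9, "Llama3 70B": 70e9}

for model, n_params in MODELS.items():
    for fmt, bits in FORMATS.items():
        size = weight_memory_gb(n_params, bits)
        verdict = "fits" if size < GPU_MEMORY_GB else "does not fit"
        print(f"{model}, {fmt}: ~{size:.0f} GB of weights, {verdict} in {GPU_MEMORY_GB} GB")
```

Under these assumptions, the 70B model in F16 comes out at about 140 GB of weights, well beyond a single 80 GB card, which is a plausible reason the table has no F16 result for it.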

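Take Away: The Llama3 70B model with Q4_K_M quantization achieves a token generation speed of 22.11 tokens per second on the NVIDIA A100 PCIe 80GB. This is a respectable performance, considering the model's massive size. There is no F16 result for the 70B model, most likely because it cannot fit: at 2 bytes per parameter, 70 billion weights take about 140 GB, far more than the card's 80 GB of memory.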

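Think of it like this: Imagine a text generator cranking out 22.11 tokens per second. A token is a word or a piece of a word, so that is somewhat fewer than 22 words per second. It might sound slow, but it is comfortably faster than most people read, and pretty impressive for a model this complex!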
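To turn that speed into something you can feel, here is a small sketch that estimates how long a response takes to generate at the measured rate. The response lengths are made-up examples, and the estimate ignores prompt processing time, which the benchmark figure does not cover.

```python
MEASURED_TOKENS_PER_SECOND = 22.11  # Llama3 70B, 4-bit quantized, from the table above


def generation_time_seconds(n_tokens: int, tokens_per_second: float) -> float:
    """Time to generate n_tokens at a steady generation rate."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return n_tokens / tokens_per_second


# Hypothetical response lengths, chosen only for illustration.
for n_tokens in (100, 500, 2000):
    seconds = generation_time_seconds(n_tokens, MEASURED_TOKENS_PER_SECOND)
    print(f"{n_tokens} tokens: about {seconds:.0f} s")
```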

Performance Analysis: Model and Device Comparison

How does Llama3 70B compare to other models and devices? Let's unpack the data and see where it stands.

Model and Device Comparison: NVIDIA A100 PCIe 80GB and Llama3 8B

| Model Configuration | Token Generation Speed (Tokens/Second) |
|---|---|
| Llama3 8B, Q4_K_M Quantization | 138.31 |
| Llama3 8B, F16 Quantization | 54.56 |

Observations:

- Smaller Model, Faster Speed: With Q4_K_M quantization, Llama3 8B generates 138.31 tokens per second against 22.11 for the 70B model, roughly 6.3 times faster. This is expected: every generated token requires reading all of the model's weights, and the 8B model has far fewer of them.

- Larger Model, Lower Speed: Llama3 70B has nearly nine times as many parameters as its 8B counterpart, so it is naturally slower. An F16 comparison between the two is not possible, because there is no F16 data for the 70B model.

- Quantization's Role: For Llama3 8B, the Q4_K_M version runs at 138.31 tokens per second against 54.56 for F16, roughly 2.5 times faster on the same hardware.
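The ratios behind these observations can be reproduced with a few lines of arithmetic. This sketch uses only the numbers from the two tables; the dictionary layout and the `speedup` helper are just one convenient way to organize them, and a missing measurement is represented as `None`.

```python
from typing import Optional

# Tokens per second on the A100 PCIe 80GB, copied from the tables above.
# None marks the configuration with no data.
BENCHMARKS = {
    ("Llama3 8B", "4-bit"): 138.31,
    ("Llama3 8B", "F16"): 54.56,
    ("Llama3 70B", "4-bit"): 22.11,
    ("Llama3 70B", "F16"): None,
}


def speedup(fast: Optional[float], slow: Optional[float]) -> Optional[float]:
    """Ratio of two speeds, or None if either measurement is missing."""
    if fast is None or slow is None:
        return None
    return fast / slow


size_effect = speedup(BENCHMARKS[("Llama3 8B", "4-bit")], BENCHMARKS[("Llama3 70B", "4-bit")])
quant_effect = speedup(BENCHMARKS[("Llama3 8B", "4-bit")], BENCHMARKS[("Llama3 8B", "F16")])
missing = speedup(BENCHMARKS[("Llama3 8B", "F16")], BENCHMARKS[("Llama3 70B", "F16")])

print(f"8B vs 70B (4-bit): {size_effect:.1f}x")
print(f"4-bit vs F16 (8B): {quant_effect:.1f}x")
print(f"8B vs 70B (F16): {missing}")  # no data for 70B F16
```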

The Takeaway: If you're looking for the fastest token generation, a smaller model like Llama3 8B may be the way to go. However, Llama3 70B offers a significant advantage in terms of its ability to handle more complex tasks and generate more nuanced and sophisticated text.

Think of it like this: Imagine having two cars, one compact and nimble, the other a large SUV. The smaller car gets better gas mileage and is faster in city traffic, while the SUV can transport more people and cargo. You choose the right vehicle based on your needs and priorities!

Practical Recommendations: Use Cases and Workarounds

Now that we've dissected the performance, let's translate this understanding into practical recommendations for using Llama3 70B on the NVIDIA A100 PCIe 80GB.

Use Cases for Llama3 70B on NVIDIA A100 PCIe 80GB

- Creative Writing and Storytelling: Llama3 70B's remarkable abilities in generating different writing styles make it a perfect tool for writers, bloggers, and content creators. Its ability to generate long-form text allows you to create captivating stories, articles, and even scripts.

- Translation and Summarization: Its multilingual capabilities make it a valuable asset for translators and those who need to summarize lengthy documents. Imagine using it to translate important documents in real-time or creating concise summaries of dense research papers.

- Research and Development: For researchers and developers working with LLMs, Llama3 70B provides a powerful platform for experimentation, exploration of new language models, and investigating the potential of AI in various fields.

Workarounds for Performance Limitations

- Model Compression: If speed is critical for your use case, use a quantized version of the model such as Q4_K_M. Fewer bits per weight generally means a smaller model and faster inference, at the cost of slightly reduced accuracy. If accuracy matters more, a less aggressive option such as 8-bit (INT8) quantization sits between Q4_K_M and F16 in both size and quality.

- Hardware Acceleration: Experiment with different hardware configurations, such as using a higher-end GPU or exploring specialized AI accelerators.

- Batching and Parallelism: Use techniques like batching and parallelism to process multiple requests concurrently, effectively increasing your throughput.
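As a minimal sketch of the batching idea, the helper below groups incoming prompts into fixed-size batches and hands each batch to the model in one call. The `generate_batch` callable is a hypothetical stand-in for whatever batched inference call your serving stack provides, and the batch size of 4 is an arbitrary example, not a tuned value.

```python
from typing import Callable, Iterator, List, Sequence


def chunked(items: Sequence[str], batch_size: int) -> Iterator[List[str]]:
    """Yield consecutive slices of items, each with at most batch_size elements."""
    if batch_size < 1:
        raise ValueError("batch_size must be at least 1")
    for start in range(0, len(items), batch_size):
        yield list(items[start:start + batch_size])


def run_in_batches(
    prompts: Sequence[str],
    generate_batch: Callable[[List[str]], List[str]],
    batch_size: int = 4,
) -> List[str]:
    """Run all prompts through generate_batch, one batch at a time, preserving order."""
    outputs: List[str] = []
    for batch in chunked(prompts, batch_size):
        outputs.extend(generate_batch(batch))
    return outputs


def fake_generate_batch(batch: List[str]) -> List[str]:
    """Placeholder model call (hypothetical): echoes each prompt back."""
    return [f"echo: {prompt}" for prompt in batch]


results = run_in_batches(["a", "b", "c", "d", "e"], fake_generate_batch, batch_size=2)
print(results)
```

Batching tends to raise total throughput across requests rather than the speed of any single response, so it helps most when many requests arrive at once.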

FAQ

What are LLMs and how do they work?

LLMs are a type of artificial intelligence (AI) that uses deep learning to process and generate human-like text. They are trained on vast amounts of text data, enabling them to learn patterns and relationships within language, allowing them to understand and generate text in a way that resembles human creativity.

What is quantization and why is it important for LLMs?

Quantization is a technique used to reduce the size of LLMs. It works by reducing the number of bits used to store each weight in the model, for example from 16 bits down to around 4. Smaller weights mean less memory to hold and less data to read for every generated token, so quantized models run faster and fit on devices with less memory, usually with a small loss in accuracy.
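The idea can be illustrated with a toy example. This is a simplified symmetric scheme with one scale per block of weights, written for clarity; it is not the actual format used by any particular quantized model file, and the sample weights are invented.

```python
from typing import List, Tuple


def quantize_block(weights: List[float], bits: int = 4) -> Tuple[List[int], float]:
    """Map floats to small signed integers that share one scale factor."""
    qmax = 2 ** (bits - 1) - 1  # 7 for 4-bit signed values
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    quantized = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize_block(quantized: List[int], scale: float) -> List[float]:
    """Recover approximate floats from the integers and the shared scale."""
    return [q * scale for q in quantized]


weights = [0.7, -0.35, 0.1, 0.0]  # invented sample values
quantized, scale = quantize_block(weights)
restored = dequantize_block(quantized, scale)

print("quantized integers:", quantized)
print("restored weights:  ", [round(w, 3) for w in restored])
```

Each restored weight differs from the original by at most half of the scale factor, which is the rounding error that shows up as the small accuracy loss.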

What are the differences between Q4_K_M and F16 quantization?

Q4_K_M is the more aggressive option: it stores each weight in roughly 4 to 5 bits, which makes the model about a third the size of its F16 version. F16 uses 16 bits per weight and is usually the uncompressed baseline rather than a compression step. In the Llama3 8B results above, Q4_K_M is about 2.5 times faster (138.31 versus 54.56 tokens per second), while F16 preserves the most accuracy.

Are LLMs the future of AI?

LLMs are undoubtedly at the forefront of AI research and development. They have already demonstrated their potential in numerous applications, including creative writing, translation, and information retrieval. As research and development continue, LLMs are poised to become even more powerful, versatile, and impactful across various industries and aspects of our lives.

Keywords

Llama3 70B, NVIDIA A100 PCIe 80GB, LLM, large language model, token generation speed, performance benchmarks, Q4_K_M quantization, F16 quantization, model compression, use cases, workarounds, AI, deep learning, natural language processing, NLP, GPU, graphics card, hardware acceleration, batching, parallelism, creative writing, translation, summarization, research and development.