Optimizing Llama3 70B for NVIDIA 4090 24GB x2: A Step-by-Step Approach

Introduction

Large language models (LLMs) can generate text, translate languages, write many kinds of creative content, and answer questions informatively. Running these massive models locally, however, comes with its own challenges, especially around performance and resource utilization.

In this deep dive, we'll explore the performance of Llama3 70B, a formidable LLM, on a dual NVIDIA 4090 24GB setup (48 GB of combined VRAM). We'll analyze key metrics like token generation speed and look at the practical implications of different quantization levels. This guide is designed to help developers navigate the complexities of local LLM deployment and get the most out of their hardware resources.

Performance Analysis: Token Generation Speed Benchmarks

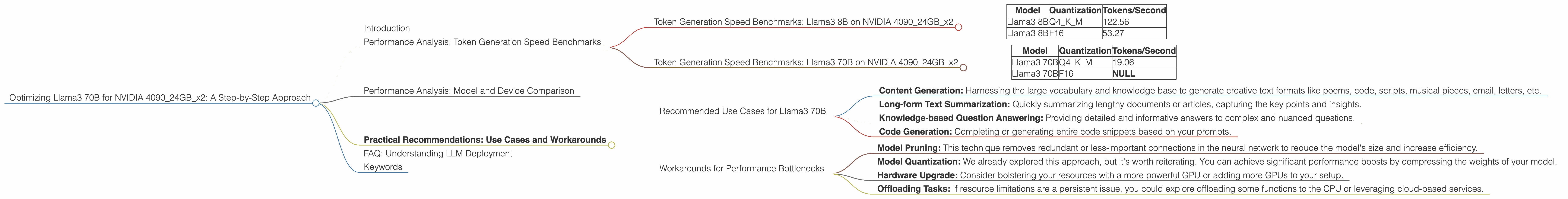

Token Generation Speed Benchmarks: Llama3 8B on NVIDIA 4090 24GB x2

Let's start by understanding how Llama3 8B performs on our dual 4090 setup. We'll be focusing on two precision levels: Q4_K_M (a 4-bit "k-quant" format from llama.cpp, in its medium variant) and F16 (16-bit floating point, which is unquantized half precision).

| Model | Quantization | Tokens/Second |
|---|---|---|
| Llama3 8B | Q4_K_M | 122.56 |
| Llama3 8B | F16 | 53.27 |

As you can see, Llama3 8B with Q4_K_M quantization generates tokens significantly faster than F16: about 2.3 times as fast. Q4_K_M shrinks the model's memory footprint, and because generating each token requires reading the model's weights from GPU memory, smaller weights mean less data to move per token. Think of it as fitting more passengers on a bus (your GPU) by having them pack lighter (compressed weights).
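The ratio between the two measured speeds can be checked with a few lines of Python. This is only arithmetic on the numbers from the table; the `speedup` helper is a name made up for this illustration.

```python
# Measured token generation speeds (tokens/second) for Llama3 8B, from the table above.
speeds = {"Q4_K_M": 122.56, "F16": 53.27}


def speedup(fast: float, slow: float) -> float:
    """Return how many times faster `fast` is than `slow`."""
    return fast / slow


ratio = speedup(speeds["Q4_K_M"], speeds["F16"])
print(f"Q4_K_M is {ratio:.2f}x as fast as F16")
```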

Token Generation Speed Benchmarks: Llama3 70B on NVIDIA 4090 24GB x2

Now, let's ramp things up and see how Llama3 70B fares on the same hardware.

| Model | Quantization | Tokens/Second |
|---|---|---|
| Llama3 70B | Q4_K_M | 19.06 |
| Llama3 70B | F16 | N/A (no data) |

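For Llama3 70B on this setup, data is available only for Q4_K_M quantization. The missing F16 result is most likely a memory limit: at 16 bits per weight, 70 billion parameters need roughly 140 GB for the weights alone, far more than the 48 GB of combined VRAM. Even at Q4_K_M, throughput drops to about 19 tokens/second, roughly one sixth of the Llama3 8B figure, because the model has far more weights to read for every generated token and must be split across both GPUs.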
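A back-of-the-envelope estimate shows why model size matters so much on this hardware. The sketch below counts only the weights (no KV cache, activations, or runtime overhead), and the figure of about 4.8 bits per weight for Q4_K_M is an assumed average rather than a value from the benchmarks; `weight_memory_gb` is a hypothetical helper.

```python
def weight_memory_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate memory needed for the weights alone, in GB (1 GB = 1e9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


N_PARAMS_70B = 70e9        # nominal parameter count of Llama3 70B
TOTAL_VRAM_GB = 2 * 24     # two RTX 4090 cards with 24 GB each

# Assumption: Q4_K_M averages roughly 4.8 bits per weight; F16 is exactly 16.
formats = {"F16": 16.0, "Q4_K_M": 4.8}

for name, bits in formats.items():
    size = weight_memory_gb(N_PARAMS_70B, bits)
    verdict = "fits" if size <= TOTAL_VRAM_GB else "does not fit"
    print(f"{name}: ~{size:.0f} GB of weights, {verdict} in {TOTAL_VRAM_GB} GB of VRAM")
```

Under these assumptions the Q4_K_M weights leave only a few gigabytes of headroom for the KV cache and buffers, so long context windows may still be tight.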

Performance Analysis: Model and Device Comparison

While we focused on the NVIDIA 4090 24GB x2 setup, it's insightful to compare the performance of Llama3 70B against other models and devices. Remember, the real-world performance can vary depending on factors like the task, prompt length, and specific implementation.

This comparison will help us understand how Llama3 70B stacks up in the LLM performance landscape.

A rough analogy: Imagine you're trying to fit a large wardrobe (LLM) into a small car (device). The smaller the car (less memory), the harder it is to fit in a big wardrobe (large model) and get it moving.

Practical Recommendations: Use Cases and Workarounds

Now that we've explored the performance landscape, let's dive into practical recommendations for leveraging Llama3 70B on the NVIDIA 4090 24GB x2 setup.

Recommended Use Cases for Llama3 70B

Here are some ideal use cases where Llama3 70B's strengths shine:

- Content Generation: Harnessing the large vocabulary and knowledge base to generate creative text formats like poems, code, scripts, musical pieces, email, letters, etc.

- Long-form Text Summarization: Quickly summarizing lengthy documents or articles, capturing the key points and insights.

- Knowledge-based Question Answering: Providing detailed and informative answers to complex and nuanced questions.

- Code Generation: Completing or generating entire code snippets based on your prompts.

Workarounds for Performance Bottlenecks

Let's address the elephant in the room: the performance drop due to the larger model size. Here are some strategies to overcome these bottlenecks:

- Model Pruning: This technique removes redundant or less-important connections in the neural network to reduce the model's size and increase efficiency.

- Model Quantization: We already explored this approach, but it's worth reiterating. Storing weights at lower precision shrinks the model and speeds up generation: for Llama3 8B, Q4_K_M ran about 2.3 times as fast as F16 in the benchmarks above.

- Hardware Upgrade: Consider bolstering your resources with a more powerful GPU or adding more GPUs to your setup.

- Offloading Tasks: If resource limitations are a persistent issue, you could explore offloading some functions to the CPU or leveraging cloud-based services.
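To get a feel for the offloading option, the sketch below estimates how many transformer layers would fit in GPU memory, with the rest left to the CPU. It is a rough planning aid under stated assumptions: all layers are treated as equally sized, the 42 GB model size and the 4 GB reserve for KV cache and buffers are assumed values, and `gpu_layer_count` is a hypothetical helper rather than part of any library. Llama3 70B has 80 transformer layers.

```python
def gpu_layer_count(model_gb: float, n_layers: int, vram_gb: float,
                    reserve_gb: float = 4.0) -> int:
    """Estimate how many layers fit in VRAM, assuming equally sized layers.

    reserve_gb is VRAM held back for the KV cache and runtime buffers (assumed).
    """
    if model_gb <= 0 or n_layers <= 0:
        raise ValueError("model_gb and n_layers must be positive")
    per_layer_gb = model_gb / n_layers
    usable_gb = max(vram_gb - reserve_gb, 0.0)
    return min(n_layers, int(usable_gb // per_layer_gb))


MODEL_GB = 42.0   # assumed size of Llama3 70B weights at Q4_K_M
N_LAYERS = 80     # transformer layers in Llama3 70B

for vram in (24.0, 48.0):
    n = gpu_layer_count(MODEL_GB, N_LAYERS, vram)
    print(f"{vram:.0f} GB VRAM: about {n} of {N_LAYERS} layers on GPU, "
          f"{N_LAYERS - n} on CPU")
```

In llama.cpp, a number like this would typically be passed through the GPU layers option (`-ngl` / `--n-gpu-layers`). Layers left on the CPU run much slower, so expect throughput to fall as the offloaded share grows.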

FAQ: Understanding LLM Deployment

Here are answers to some frequently asked questions about LLMs and their deployment:

1. What is Quantization?

Simply put, quantization compresses an LLM by storing its weights with fewer bits each, without compromising too much on accuracy. Think of it as converting a high-resolution photo to a lower-resolution version while retaining the essential details. The smaller model is easier for your device to load and process, which leads to faster inference.
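The idea can be illustrated with a deliberately simplified scheme: scale a small block of weights so the largest one maps to the edge of the 4-bit integer range, round, and store the integers plus one scale factor. This is a minimal sketch of symmetric block quantization, not the actual Q4_K_M format, which is more elaborate; the function names and sample weights are invented for the example.

```python
def quantize_block(weights: list[float], bits: int = 4) -> tuple[list[int], float]:
    """Quantize a block of floats to signed integers sharing one scale factor."""
    qmax = 2 ** (bits - 1) - 1                          # 7 for 4-bit values
    scale = max(abs(w) for w in weights) / qmax or 1.0  # avoid a zero scale
    q = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return q, scale


def dequantize_block(q: list[int], scale: float) -> list[float]:
    """Recover approximate floats from the integers and the shared scale."""
    return [v * scale for v in q]


weights = [0.12, -0.5, 0.33, 0.07]      # made-up sample weights
q, scale = quantize_block(weights)
restored = dequantize_block(q, scale)

for w, r in zip(weights, restored):
    print(f"original {w:+.3f} -> restored {r:+.3f} (error {abs(w - r):.3f})")
```

Each restored value differs from the original by at most half the scale factor, which is the accuracy given up in exchange for the smaller size.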

2. What is the difference between Q4_K_M and F16?

Q4_K_M is one of llama.cpp's "k-quant" formats: it stores most weights at roughly 4 bits each, and the "M" (medium) variant keeps some tensors at higher precision to limit quality loss. F16 stores every weight as a 16-bit floating-point number. Q4_K_M yields a much smaller model at the cost of some accuracy, while F16 retains more accuracy but needs several times more memory and memory bandwidth.

3. Is a Dual 4090 Setup Necessary?

A dual 4090 setup offers 48 GB of combined VRAM, which is enough to run Llama3 70B at Q4_K_M, but it might not be necessary for all use cases. Smaller models such as Llama3 8B need far less memory. The optimal hardware configuration depends on the specific model you're using, your desired level of performance, and your budget.

4. Are LLMs only suitable for large corporations?

Not at all! LLMs are becoming increasingly accessible, with many open-source models and frameworks available. As technology advances, we can expect local LLM deployment to become more accessible for individuals and smaller organizations as well.

5. Where can I learn more about LLMs?

The world of LLMs is vast and exciting! A good starting point is the Hugging Face website, a platform dedicated to sharing LLM models and resources. You can also explore online courses and tutorials on platforms like Coursera and Udemy.

Keywords

Llama 3 70B, NVIDIA 4090 24GB x2, large language model, LLM, token generation speed, quantization, Q4_K_M, F16, performance analysis, use case, content generation, summarization, question answering, code generation, hardware upgrade, model pruning, model quantization, offloading tasks, open-source, deployment, Hugging Face, GPU, GPU memory, AI, deep learning, natural language processing, NLP.