Optimizing Llama3 70B for NVIDIA 4080 16GB: A Step-by-Step Approach

Introduction

The world of Large Language Models (LLMs) is rapidly evolving, with new models emerging and pushing the boundaries of what's possible. This article delves into the performance of Meta's Llama3 70B model on the powerful NVIDIA 4080_16GB GPU, focusing on optimizing its performance for real-world applications.

Imagine having a super-intelligent AI assistant right on your desktop, ready to generate creative text, answer your questions, and even translate languages. This is the power of LLMs, and Llama3 70B is a prime example of this technology.

This guide is for developers and tech enthusiasts who are eager to dive into the fascinating world of local LLMs and understand how to squeeze the most out of these powerful tools. We'll break down the key aspects of Llama3 70B performance on the NVIDIA 4080_16GB, including:

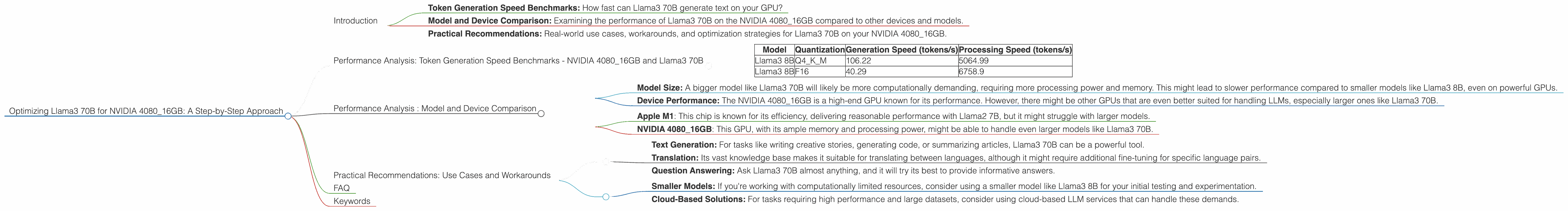

- Token Generation Speed Benchmarks: How fast can Llama3 70B generate text on your GPU?

- Model and Device Comparison: Examining the performance of Llama3 70B on the NVIDIA 4080_16GB compared to other devices and models.

- Practical Recommendations: Real-world use cases, workarounds, and optimization strategies for Llama3 70B on your NVIDIA 4080_16GB.

Buckle up, it’s going to be a thrilling ride into the world of LLMs!

Performance Analysis: Token Generation Speed Benchmarks - NVIDIA 4080_16GB and Llama3 70B

Let's dive into the numbers and see how Llama3 70B performs on the NVIDIA 4080_16GB. The results below are measured in tokens per second (tokens/s), which represents the speed at which the model can generate text.

Important Note: We don't have any benchmark data for Llama3 70B on the NVIDIA 4080_16GB at this moment. This is most likely because of the model's size: even at 4-bit quantization, the 70B weights take roughly 40 GB, far more than the card's 16 GB of VRAM. We'll update the article as soon as benchmarks become available!

However, we can still provide insights based on the performance of Llama3 8B on the same GPU:

| Model | Quantization | Generation Speed (tokens/s) | Processing Speed (tokens/s) |
|---|---|---|---|
| Llama3 8B | Q4_K_M | 106.22 | 5064.99 |
| Llama3 8B | F16 | 40.29 | 6758.9 |

What does this tell us?

- Quantization Matters: Q4_K_M quantization, which stores each weight in roughly 4 to 5 bits instead of 16, generates tokens about 2.6 times faster than the unquantized F16 model (106.22 vs. 40.29 tokens/s).

- Processing vs. Generation: Prompt processing is far faster than generation in both rows because input tokens can be processed in parallel, whereas generation produces one token at a time and is typically limited by memory bandwidth. Note that F16 actually processes prompts faster than Q4_K_M (6758.9 vs. 5064.99 tokens/s), even though it generates more slowly.

Think of it this way: Imagine a typist who types about 100 words per minute (the Q4_K_M Llama3 8B). Now imagine a second typist who reads source material faster but types only about 40 words per minute (the F16 Llama3 8B). The first finishes writing sooner, even though the second gets through the input more quickly.
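The ratios behind these two observations can be checked with a few lines of arithmetic. The snippet below is only a sketch that copies the four figures from the table; the dictionary layout and variable names are illustrative choices, not part of any benchmark tool.

```python
# Benchmark figures copied from the table (tokens per second, Llama3 8B).
benchmarks = {
    "Q4_K_M": {"generation": 106.22, "processing": 5064.99},
    "F16": {"generation": 40.29, "processing": 6758.9},
}

# How much faster the 4-bit model generates text than the F16 model.
gen_speedup = benchmarks["Q4_K_M"]["generation"] / benchmarks["F16"]["generation"]

# How much faster the F16 model processes prompts than the 4-bit model.
proc_advantage_f16 = benchmarks["F16"]["processing"] / benchmarks["Q4_K_M"]["processing"]

print(f"Generation speedup (Q4_K_M over F16): {gen_speedup:.2f}x")
print(f"Processing advantage (F16 over Q4_K_M): {proc_advantage_f16:.2f}x")

# Within each row, how much faster prompt processing is than generation.
for name, row in benchmarks.items():
    gap = row["processing"] / row["generation"]
    print(f"{name}: processing is about {gap:.0f}x faster than generation")
```

The gap between processing and generation is much larger than the gap between the two formats, which is why generation speed is usually the number that matters for interactive use.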

Performance Analysis: Model and Device Comparison

While we're lacking data for Llama3 70B on NVIDIA 4080_16GB specifically, we can still make some educated guesses based on available data for other models and devices.

- Model Size: A bigger model like Llama3 70B will likely be more computationally demanding, requiring more processing power and memory. This might lead to slower performance compared to smaller models like Llama3 8B, even on powerful GPUs.

- Device Performance: The NVIDIA 4080_16GB is a high-end GPU known for its performance. However, there might be other GPUs that are even better suited for handling LLMs, especially larger ones like Llama3 70B.
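To make the model-size point concrete, here is a rough back-of-the-envelope sketch of how much memory the weights alone need. The function name is hypothetical, and the bits-per-weight figures are assumptions: 16 for F16, and about 4.8 for Q4_K_M, which is an approximation. The estimate ignores the KV cache and runtime overhead, so treat the results as lower bounds.

```python
def weight_memory_gb(n_params_billion: float, bits_per_weight: float) -> float:
    """Approximate memory for model weights alone, in gigabytes (10^9 bytes)."""
    total_bits = n_params_billion * 1e9 * bits_per_weight
    return total_bits / 8 / 1e9

VRAM_GB = 16.0  # memory on the NVIDIA 4080_16GB

# Assumed bits per weight: F16 is exactly 16; 4.8 for Q4_K_M is an approximation.
for name, params_b in [("Llama3 8B", 8.0), ("Llama3 70B", 70.0)]:
    for quant, bpw in [("F16", 16.0), ("Q4_K_M", 4.8)]:
        gb = weight_memory_gb(params_b, bpw)
        verdict = "fits" if gb <= VRAM_GB else "does not fit"
        print(f"{name} {quant}: ~{gb:.1f} GB, {verdict} in {VRAM_GB:.0f} GB")
```

Under these assumptions, Llama3 70B needs far more than 16 GB even when quantized, which is consistent with the absence of benchmark numbers for it on this card.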

Here's an example of how the GPU and model size might impact performance:

- Apple M1: This chip is known for its efficiency, delivering reasonable performance with Llama2 7B, but it might struggle with larger models.

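- NVIDIA 4080_16GB: This GPU runs Llama3 8B comfortably, but its 16 GB of VRAM is far less than Llama3 70B needs even at 4-bit quantization, so running the larger model would likely require keeping part of it in system RAM, at a substantial cost in speed.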

However, without specific benchmarks for Llama3 70B on the NVIDIA 4080_16GB, we can only speculate on its performance.

Practical Recommendations: Use Cases and Workarounds

1. Quantization: Q4_K_M quantization can greatly improve token generation speed (about 2.6 times faster than F16 for Llama3 8B in the benchmarks above), though it might reduce the model's accuracy slightly. It's a tradeoff worth considering for most use cases.

2. Model Optimization: Explore techniques like model pruning and knowledge distillation to optimize Llama3 70B for your specific application. These methods can reduce the model's size and complexity, making it more efficient.

3. Experimentation: Don't be afraid to experiment with different configurations and settings! The best results often come from trying different combinations of quantization, model size, and even hardware.

4. Use Cases:

- Text Generation: For tasks like writing creative stories, generating code, or summarizing articles, Llama3 70B can be a powerful tool.

- Translation: Its vast knowledge base makes it suitable for translating between languages, although it might require additional fine-tuning for specific language pairs.

- Question Answering: Ask Llama3 70B almost anything, and it will try its best to provide informative answers.

5. Workarounds:

- Smaller Models: If you're working with computationally limited resources, consider using a smaller model like Llama3 8B for your initial testing and experimentation.

- Cloud-Based Solutions: For tasks requiring high performance and large datasets, consider using cloud-based LLM services that can handle these demands.

FAQ

Q: What is quantization?

A: Quantization is a technique used to reduce the size of a model by converting its weights (the parameters that determine the model's behavior) from high-precision floating-point numbers to lower-precision integers. This makes the model smaller and faster but might slightly decrease its accuracy.
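As a rough illustration of that answer, the sketch below quantizes a small block of weights to 4-bit signed integers with a single shared scale, then converts them back. This is a simplified scheme chosen for clarity, not the actual Q4_K_M format, which is more elaborate; the function names and sample weights are made up for the example.

```python
def quantize_block(weights, bits=4):
    """Symmetric quantization of one block of floats to signed integers."""
    qmax = 2 ** (bits - 1) - 1  # 7 for 4-bit signed values
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    # Round each weight to the nearest integer step and clamp to the valid range.
    q = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return q, scale

def dequantize_block(q, scale):
    """Recover approximate float weights from integers and the shared scale."""
    return [v * scale for v in q]

# Made-up sample weights for illustration only.
weights = [0.12, -0.53, 0.91, -0.07, 0.33, -0.88, 0.02, 0.45]
q, scale = quantize_block(weights)
restored = dequantize_block(q, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print("integers:", q)
print("scale:", scale)
print("max round-trip error:", max_error)
```

Each weight now needs only 4 bits plus a share of the block's scale, and the round-trip error shows where the small accuracy loss comes from.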

Q: What are token generation speed benchmarks, and why are they important?

A: Token generation speed benchmarks report how fast a model generates text, in tokens per second. They are crucial for understanding the model's real-world performance and identifying bottlenecks in the inference process.
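A minimal sketch of how such a measurement can be taken is shown below. Both function names are hypothetical, and `fake_generate` is a stand-in that sleeps to simulate per-token latency; in practice you would replace it with a call into whatever inference library you use.

```python
import time

def measure_tokens_per_second(generate, n_tokens):
    """Time a generation callable and return tokens per second.

    `generate(n)` is a hypothetical callable that produces n tokens
    and returns how many it actually produced.
    """
    start = time.perf_counter()
    produced = generate(n_tokens)
    elapsed = time.perf_counter() - start
    return produced / elapsed

def fake_generate(n_tokens, seconds_per_token=0.001):
    """Stand-in for a real model: sleeps to simulate per-token latency."""
    for _ in range(n_tokens):
        time.sleep(seconds_per_token)
    return n_tokens

rate = measure_tokens_per_second(fake_generate, 50)
print(f"{rate:.1f} tokens/s")
```

Real benchmarks usually time prompt processing and generation separately and average over several runs, since a single short run can be noisy.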

Q: Why is it important to optimize LLMs for specific devices?

A: Optimizing an LLM for a particular device ensures that the model runs efficiently on that device and gets the most out of its hardware. This is crucial when deploying LLMs on edge devices or on hardware with limited computational resources.

Keywords

Llama3 70B, NVIDIA 4080_16GB, LLM, Large Language Model, Token Generation Speed, Performance Analysis, Quantization, Q4_K_M, F16, Model Size, Device Performance, Optimization, Use Cases, Workarounds, Text Generation, Translation, Question Answering, GPU, Inference, Computational Resources, Cloud-Based Solutions.