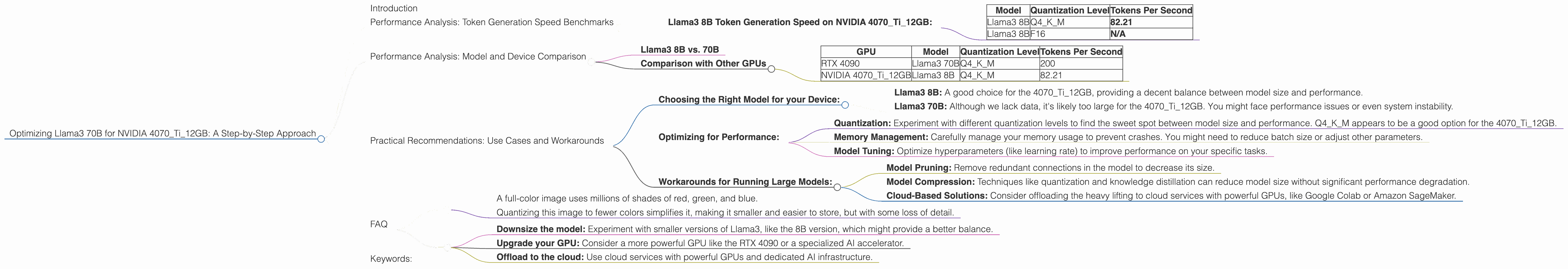

Optimizing Llama3 70B for NVIDIA 4070 Ti 12GB: A Step-by-Step Approach

Introduction

The world of Large Language Models (LLMs) is buzzing with excitement, and for good reason! These powerful AI tools are changing how we interact with technology. But there's a catch: LLMs are computationally demanding and, above all, memory-hungry. If you want to run Llama3 70B, the heavyweight of the Llama 3 family, on an NVIDIA RTX 4070 Ti 12GB, this article walks you through what performs well, what doesn't fit, and which workarounds are available.

Think of LLMs as super-intelligent but resource-hungry robots: they need powerful brains (GPUs) and carefully chosen settings to perform at their best. This guide shows how to get the most out of your RTX 4070 Ti 12GB.

Performance Analysis: Token Generation Speed Benchmarks

Llama3 8B Token Generation Speed on NVIDIA RTX 4070 Ti 12GB:

Let's start with the basics. The number of tokens generated per second is a key metric for gauging LLM performance. This benchmark shows Llama3 8B's generation speed on the RTX 4070 Ti 12GB at two precision levels:

| Model | Quantization Level | Generation Speed (tokens/s) |
|---|---|---|
| Llama3 8B | Q4_K_M | 82.21 |
| Llama3 8B | F16 | N/A |

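Q4_K_M is one of llama.cpp's "k-quant" formats: weights are stored at roughly 4 bits each, grouped into blocks that share scaling factors, and the "M" (medium) variant keeps some tensors at higher precision. The "K" refers to this k-quant scheme, not to K-Means clustering. Smaller weights mean less memory and less data to move for every generated token, which speeds up inference on GPUs. F16 (16-bit floating point) is not really a quantization scheme but the unquantized, half-precision baseline that quantized formats are measured against.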
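The core idea behind block-wise quantization fits in a few lines of Python. This is a simplified sketch for illustration, not the actual Q4_K_M layout (which adds super-blocks and quantized scales): each block of 32 weights shares one floating-point scale, and every weight is rounded to a signed 4-bit integer in the range -7..7. The block size of 32 and the symmetric "absmax" scaling are assumptions chosen for clarity.

```python
import random

def quantize_block(weights, bits=4):
    """Map a block of floats to small signed integers sharing one scale."""
    qmax = 2 ** (bits - 1) - 1          # 7 for signed 4-bit values
    absmax = max(abs(w) for w in weights)
    scale = absmax / qmax if absmax > 0 else 1.0
    # Round each weight to the nearest multiple of `scale`, clamped to range
    q = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return q, scale

def dequantize_block(q, scale):
    """Approximate the original floats from the integers and the scale."""
    return [v * scale for v in q]

def quantize(weights, block_size=32, bits=4):
    """Split weights into fixed-size blocks and quantize each independently."""
    return [quantize_block(weights[i:i + block_size], bits)
            for i in range(0, len(weights), block_size)]

def dequantize(blocks):
    """Concatenate the dequantized blocks back into one flat list."""
    out = []
    for q, scale in blocks:
        out.extend(dequantize_block(q, scale))
    return out

if __name__ == "__main__":
    random.seed(0)
    w = [random.gauss(0.0, 0.02) for _ in range(256)]  # toy "layer" of weights
    w_hat = dequantize(quantize(w))
    max_err = max(abs(a - b) for a, b in zip(w, w_hat))
    print(f"max absolute error after 4-bit round trip: {max_err:.5f}")
```

Storing 32 four-bit integers plus one 16-bit scale costs (32 × 4 + 16) / 32 = 4.5 bits per weight, versus 16 bits for F16. The price is a rounding error of at most half a scale step per weight.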

Unfortunately, we lack data for F16 on the 4070 Ti. We'll look at what this gap most likely means below.
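If you want to collect a number like this on your own machine, the metric itself is simple: tokens produced divided by wall-clock time. The Python sketch below times several runs and reports the median. The `generate` callable is a hypothetical stand-in for whatever inference backend you use; it is assumed to take a prompt and a token budget and return the newly generated token ids. Because the timing also covers prompt processing, it slightly understates pure generation speed.

```python
import statistics
import time
from typing import Callable, Sequence

def tokens_per_second(n_tokens: int, elapsed_s: float) -> float:
    """Generation speed: tokens produced divided by wall-clock seconds."""
    if elapsed_s <= 0:
        raise ValueError("elapsed time must be positive")
    return n_tokens / elapsed_s

def benchmark(generate: Callable[[str, int], Sequence[int]],
              prompt: str, max_new_tokens: int = 128, runs: int = 5) -> float:
    """Median tokens/s over several runs.

    `generate` is a hypothetical stand-in for your inference backend: it
    takes a prompt and a token budget and returns the new token ids.
    """
    speeds = []
    for _ in range(runs):
        start = time.perf_counter()
        new_tokens = generate(prompt, max_new_tokens)
        elapsed = time.perf_counter() - start
        speeds.append(tokens_per_second(len(new_tokens), elapsed))
    return statistics.median(speeds)

if __name__ == "__main__":
    def fake_generate(prompt: str, n: int) -> Sequence[int]:
        time.sleep(0.05)          # pretend the GPU is busy
        return list(range(n))

    print(f"{benchmark(fake_generate, 'Hello', 64):.1f} tokens/s")
```

Taking the median rather than the mean keeps one slow run (for example, the first run while weights are still loading) from skewing the result.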

Data Interpretation:

Llama3 8B Q4_K_M: This configuration delivers a respectable 82.21 tokens per second.

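Llama3 8B F16: We don't have data for F16, and the likely reason is memory. At 16 bits (2 bytes) per weight, roughly 8 billion parameters need about 16 GB for the weights alone, more than the 4070 Ti's 12 GB of VRAM. The model would have to be partly offloaded to system RAM, which slows generation considerably.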
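To put the 82.21 tokens/s figure in context, a common back-of-the-envelope model treats single-stream decoding as memory-bound: for each new token the GPU must read roughly every weight from VRAM once, so speed cannot exceed memory bandwidth divided by the size of the weights. The Python sketch below computes that ceiling. Its inputs are assumptions, not values from the benchmark: about 8.03 billion parameters, an average of 4.8 bits per weight for Q4_K_M, and 504 GB/s of memory bandwidth for the 4070 Ti (check NVIDIA's spec sheet for your card).

```python
def decode_ceiling_tokens_per_s(n_params: float, bits_per_weight: float,
                                bandwidth_gb_s: float) -> float:
    """Upper bound on single-stream decode speed.

    At batch size 1, each new token requires streaming (roughly) every
    weight from VRAM once, so speed <= bandwidth / weight bytes.
    Ignores KV-cache reads, compute time, and kernel overheads.
    """
    weight_bytes = n_params * bits_per_weight / 8
    return bandwidth_gb_s * 1e9 / weight_bytes

# Assumed inputs: verify against your own hardware and model file
LLAMA3_8B_PARAMS = 8.03e9   # approximate parameter count
Q4_K_M_BPW = 4.8            # assumed average bits per weight for Q4_K_M
RTX_4070_TI_BW = 504.0      # GB/s, assumed from the published spec

if __name__ == "__main__":
    ceiling = decode_ceiling_tokens_per_s(LLAMA3_8B_PARAMS, Q4_K_M_BPW,
                                          RTX_4070_TI_BW)
    measured = 82.21
    print(f"ceiling ~{ceiling:.0f} tokens/s; "
          f"measured is {measured / ceiling:.0%} of it")
```

With these assumed inputs the ceiling comes out at roughly 105 tokens/s, so the measured 82.21 would be around 80% of it. The same formula explains why larger or less-quantized models are slower: doubling the bytes per token halves the ceiling.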

Performance Analysis: Model and Device Comparison

Llama3 8B vs. 70B

The question that's probably on your mind is "How does Llama3 70B fare on the 4070Ti12GB compared to the 8B version?"

To answer this, we need to look at model size and its impact on device performance. The 70B model has nearly nine times as many parameters as the 8B version, so it needs proportionally more memory, and proportionally more data must be read from memory for every generated token.

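Unfortunately, we lack data for Llama3 70B on the 4070 Ti, regardless of quantization level, and simple arithmetic explains why: even at 4 bits per weight, 70 billion parameters occupy about 35 GB, nearly three times the card's 12 GB of VRAM. The model cannot run entirely on the GPU. Runtimes that support CPU offloading can still load it, but most layers then run from system RAM and generation becomes far slower; without enough system RAM, loading fails with out-of-memory errors.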
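A quick way to check which configurations can fit is to multiply the parameter count by the bits per weight. The Python sketch below does this for the configurations discussed in this article. The parameter counts are approximate, 4.8 bits per weight for Q4_K_M is an assumed average, the card's 12 GB is treated as 12 GiB, and the 1.5 GiB of headroom reserved for the KV cache and runtime buffers is a hypothetical default rather than a measured value.

```python
GIB = 1024 ** 3

def weight_gib(n_params: float, bits_per_weight: float) -> float:
    """Memory needed for the weights alone, in GiB."""
    return n_params * bits_per_weight / 8 / GIB

def fits_in_vram(n_params: float, bits_per_weight: float,
                 vram_gib: float = 12.0, headroom_gib: float = 1.5) -> bool:
    """True if the weights fit while leaving `headroom_gib` free for the
    KV cache and runtime buffers (1.5 GiB is an assumed, not measured, value)."""
    return weight_gib(n_params, bits_per_weight) <= vram_gib - headroom_gib

# Approximate parameter counts and assumed bits per weight
CONFIGS = [
    ("Llama3 8B  F16", 8.03e9, 16.0),
    ("Llama3 8B  Q4_K_M", 8.03e9, 4.8),
    ("Llama3 70B Q4_K_M", 70.6e9, 4.8),
]

if __name__ == "__main__":
    for name, params, bpw in CONFIGS:
        need = weight_gib(params, bpw)
        verdict = "fits" if fits_in_vram(params, bpw) else "does not fit"
        print(f"{name}: ~{need:.1f} GiB of weights, {verdict} in 12 GiB")
```

Under these assumptions only the 8B Q4_K_M configuration fits, which matches the pattern in the benchmark data.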

Comparison with Other GPUs

While the RTX 4070 Ti 12GB is a solid GPU, it's not the most powerful card on the market. Let's imagine we had data for a higher-end card such as the RTX 4090, which has 24 GB of VRAM:

Hypothetical Example:

| GPU | Model | Quantization Level | Generation Speed (tokens/s) |
|---|---|---|---|
| RTX 4090 | Llama3 70B | Q4_K_M | 200 |
| RTX 4070 Ti 12GB | Llama3 8B | Q4_K_M | 82.21 |

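The 200 tokens/s figure is illustrative only, not a measurement, and the two rows use different models, so this is not a like-for-like comparison. The general point still holds: higher-end GPUs with more VRAM are better suited for large models like Llama3 70B. Keep in mind, though, that a 4-bit 70B model needs roughly 35 GB for its weights alone, more than the RTX 4090's 24 GB, so even that card would need CPU offloading, more aggressive quantization, or a second GPU.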

Practical Recommendations: Use Cases and Workarounds

Choosing the Right Model for your Device:

- Llama3 8B: A good choice for the 4070 Ti, providing a decent balance between model size and performance. At Q4_K_M its weights take roughly 5 GB, leaving room for the KV cache.

- Llama3 70B: Too large for the 4070 Ti. Even at 4-bit quantization its weights need about 35 GB, nearly three times the card's 12 GB of VRAM, so it can only run with most layers offloaded to the CPU, at a much lower speed.

Optimizing for Performance:

- Quantization: Experiment with different quantization levels to find the sweet spot between memory use, speed, and output quality. Q4_K_M appears to be a good option for the 4070 Ti.

- Memory Management: Keep total VRAM use (weights, KV cache, and runtime buffers) under the card's 12 GB to avoid out-of-memory errors. Reducing the batch size or the context length shrinks the KV cache.

- Model Tuning: Hyperparameters such as the learning rate matter only if you fine-tune the model for your specific tasks; they do not affect inference speed.
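Besides the weights, the KV cache (the stored attention keys and values for every token in the context) is the main consumer of VRAM, and it grows linearly with both context length and batch size. The Python sketch below estimates its size for a 16-bit cache. The architecture values (layers, key/value heads, head dimension) are the published Llama 3 figures as I understand them; verify them against the model's `config.json` before relying on the results.

```python
def kv_cache_gib(n_layers: int, n_kv_heads: int, head_dim: int,
                 context_len: int, batch_size: int = 1,
                 bytes_per_elem: int = 2) -> float:
    """Estimated KV-cache size in GiB (bytes_per_elem=2 means a 16-bit cache)."""
    # Factor 2: one key tensor and one value tensor per layer
    total_bytes = (2 * n_layers * n_kv_heads * head_dim
                   * context_len * batch_size * bytes_per_elem)
    return total_bytes / 1024 ** 3

# Assumed architecture values; check the model's config.json
LLAMA3_8B = dict(n_layers=32, n_kv_heads=8, head_dim=128)
LLAMA3_70B = dict(n_layers=80, n_kv_heads=8, head_dim=128)

if __name__ == "__main__":
    for ctx in (2048, 8192):
        for batch in (1, 4):
            size = kv_cache_gib(**LLAMA3_8B, context_len=ctx, batch_size=batch)
            print(f"8B, context {ctx}, batch {batch}: {size:.2f} GiB")
```

With these values, an 8,192-token context at batch size 1 needs exactly 1 GiB for the 8B model; quadrupling the batch size quadruples it, while cutting the context to 2,048 tokens brings it down to 0.25 GiB.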

Workarounds for Running Large Models:

- Model Pruning: Remove redundant connections in the model to decrease its size.

- Model Compression: Techniques like quantization and knowledge distillation (training a smaller model to mimic a larger one) can reduce model size without a large loss in accuracy.

- Cloud-Based Solutions: Consider offloading the heavy lifting to cloud services with powerful GPUs, like Google Colab or Amazon SageMaker.
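The simplest form of pruning, unstructured magnitude pruning, can be sketched in a few lines of Python: the smallest-magnitude fraction of weights is set to zero on the assumption that they contribute least. This is a generic illustration, not a procedure taken from any specific library, and the `sparsity` parameter is chosen for the example.

```python
def magnitude_prune(weights, sparsity):
    """Zero out the smallest-magnitude fraction `sparsity` of weights."""
    if not 0.0 <= sparsity < 1.0:
        raise ValueError("sparsity must be in [0, 1)")
    k = int(len(weights) * sparsity)   # number of weights to remove
    if k == 0:
        return list(weights)
    # Indices ordered by absolute value, smallest first
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    drop = set(order[:k])
    return [0.0 if i in drop else w for i, w in enumerate(weights)]

if __name__ == "__main__":
    w = [0.5, -0.01, 0.2, -0.3, 0.05, 0.0, 1.0, -0.02]
    print(magnitude_prune(w, 0.5))
```

Note that zeros only save memory if the runtime stores weights in a sparse format, or if whole structures such as attention heads or channels are removed; unstructured pruning on its own leaves dense tensors the same size.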

FAQ

Q: What is Quantization?

A: Think of quantization like reducing the number of shades of color in a picture.

- A full-color image uses millions of shades of red, green, and blue.

- Quantizing this image to fewer colors simplifies it, making it smaller and easier to store, but with some loss of detail.

In LLMs, we quantize the model's weights (numbers that represent its knowledge) to consume less memory and improve performance. This comes at the cost of some accuracy, but often the trade-off is worth it.

Q: My NVIDIA RTX 4070 Ti 12GB can't run Llama3 70B! What should I do?

A: That's expected. Even at 4-bit precision, the 70B model's weights need about 35 GB, far more than the card's 12 GB of VRAM. You have several options:

- Downsize the model: Experiment with smaller versions of Llama3, like the 8B version, which might provide a better balance.

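- Upgrade your GPU: Consider a GPU with more VRAM, such as the RTX 4090 (24 GB), or a specialized AI accelerator. For a 70B model, even 24 GB requires very aggressive quantization or a multi-GPU setup.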

- Offload to the cloud: Use cloud services with powerful GPUs and dedicated AI infrastructure.

Keywords:

LLM, Llama3, NVIDIA RTX 4070 Ti 12GB, GPU, token generation speed, quantization, Q4_K_M, F16, model size, performance optimization, use cases, workarounds, model pruning, model compression, cloud-based solutions, memory management, model tuning, hyperparameters