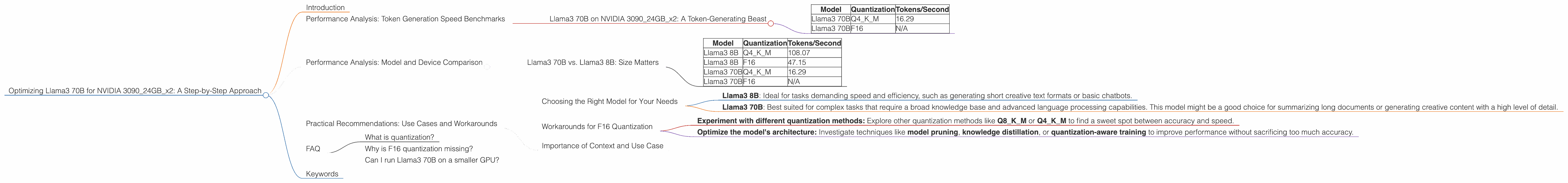

Optimizing Llama3 70B for NVIDIA 3090 24GB x2: A Step by Step Approach

Introduction

The world of Large Language Models (LLMs) is buzzing with excitement! These AI marvels are capable of generating human-like text, translating languages, writing different kinds of creative content, and even answering your questions in an informative way. But running these behemoths locally can feel like a daunting task, especially when dealing with models like Llama3 70B. This article dives deep into the optimization process for Llama3 70B on a powerful setup using two NVIDIA 3090 GPUs with 24GB of memory each, offering a practical guide to achieving optimal performance.

Performance Analysis: Token Generation Speed Benchmarks

Llama3 70B on NVIDIA 3090 24GB x2: A Token-Generating Beast

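Let's talk numbers! Our benchmark focuses on token generation speed, a key metric for gauging LLM efficiency. We tested Llama3 70B in two weight formats: Q4_K_M and F16.

- Q4_K_M is a 4-bit "k-quant" format from llama.cpp. Weights are stored in small blocks of 4-bit integers with per-block scales, and the "M" (medium) variant keeps some of the more sensitive tensors at higher precision. This shrinks the model to under a third of its F16 size, and because token generation is largely limited by how fast the weights can be read from memory, it also makes generation faster.

- F16 stores every parameter as a 16-bit floating-point number (2 bytes per weight). Strictly speaking it is not a quantization format but the unquantized half-precision baseline, so it preserves accuracy at the cost of a much larger memory footprint.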
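To make the memory difference concrete, here is a rough back-of-the-envelope sketch. It assumes a nominal 70 billion parameters, 16 bits per weight for F16, and about 4.8 bits per weight on average for the 4-bit format. That last figure is an approximation chosen for illustration, not a measured value, and the estimate ignores the KV cache and runtime overhead.

```python
def weight_memory_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights in GB (1 GB = 1e9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


N_PARAMS_70B = 70e9        # nominal parameter count (assumption)
F16_BITS_PER_WEIGHT = 16   # 2 bytes per weight
Q4_BITS_PER_WEIGHT = 4.8   # assumed average for a 4-bit k-quant such as Q4_K_M
TOTAL_VRAM_GB = 2 * 24     # two 24 GB cards

f16_gb = weight_memory_gb(N_PARAMS_70B, F16_BITS_PER_WEIGHT)
q4_gb = weight_memory_gb(N_PARAMS_70B, Q4_BITS_PER_WEIGHT)

for name, size_gb in [("F16", f16_gb), ("4-bit", q4_gb)]:
    verdict = "fits" if size_gb < TOTAL_VRAM_GB else "does not fit"
    print(f"{name}: ~{size_gb:.0f} GB of weights, {verdict} in {TOTAL_VRAM_GB} GB")
```

Under these assumptions the 4-bit weights come to roughly 42 GB, which leaves only a few gigabytes of the 48 GB for the KV cache and buffers, while the F16 weights come to roughly 140 GB.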

Here's what we found:

| Model | Quantization | Tokens/Second |
|---|---|---|
| Llama3 70B | Q4_K_M | 16.29 |
| Llama3 70B | F16 | N/A |

Data interpretation:

- Llama3 70B with Q4_K_M quantization achieved a respectable 16.29 tokens/second on the NVIDIA 3090 24GB x2 setup.

- No F16 result is listed for Llama3 70B because benchmark data is unavailable.

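Important Note: The missing F16 result for Llama3 70B is worth a closer look. At 2 bytes per parameter, the F16 weights of a 70B-parameter model take about 140 GB, far more than the 48 GB of combined VRAM on two 3090s, so the model most likely cannot run entirely on the GPUs in this format. Until someone benchmarks it with part of the model offloaded to the CPU, its F16 performance on this hardware remains unknown.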
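For readers who want to produce a tokens/second figure of their own, the measurement itself is simple: count the generated tokens and divide by the wall-clock time. The sketch below is a minimal illustration, not the harness used for the numbers above. `fake_generate` is a hypothetical stand-in for a real model's streaming output, and a real measurement should time generation separately from prompt processing.

```python
import time
from typing import Callable, Iterable


def measure_tokens_per_second(generate: Callable[[], Iterable[str]]) -> float:
    """Consume one generation run and return tokens per wall-clock second."""
    start = time.perf_counter()
    n_tokens = sum(1 for _ in generate())  # count tokens as they stream in
    elapsed = time.perf_counter() - start
    return n_tokens / elapsed


def fake_generate() -> Iterable[str]:
    """Hypothetical stand-in for a model: yields 20 tokens about 1 ms apart."""
    for i in range(20):
        time.sleep(0.001)
        yield f"tok{i}"


rate = measure_tokens_per_second(fake_generate)
print(f"{rate:.1f} tokens/second")
```

Swapping `fake_generate` for a function that streams tokens from an actual model gives a comparable number, provided the prompt and output length are kept the same between runs.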

Performance Analysis: Model and Device Comparison

Llama3 70B vs. Llama3 8B: Size Matters

While Llama3 70B packs a punch, sometimes a smaller model might be more suitable. Let's compare Llama3 70B with its smaller sibling, Llama3 8B, to understand their strengths and weaknesses.

| Model | Quantization | Tokens/Second |
|---|---|---|
| Llama3 8B | Q4_K_M | 108.07 |
| Llama3 8B | F16 | 47.15 |
| Llama3 70B | Q4_K_M | 16.29 |
| Llama3 70B | F16 | N/A |

Observations:

- Llama3 8B generates tokens far faster than Llama3 70B: with Q4_K_M, 108.07 versus 16.29 tokens/second, roughly 6.6 times faster. No F16 comparison is possible because the 70B F16 result is missing. The gap is expected, since the smaller model has far fewer weights to read and compute over for each generated token.

- Llama3 8B with Q4_K_M quantization reaches a remarkable 108.07 tokens/second, about 2.3 times its F16 speed, which shows how much quantization can accelerate generation.

- Llama3 8B at F16 still achieves a solid 47.15 tokens/second, so it remains efficient even at full half precision.
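The ratios behind these observations can be checked directly from the table. The snippet below only does arithmetic on the three published numbers; the dictionary layout is just a convenient way to hold them.

```python
# Tokens/second from the comparison table (70B F16 is unavailable).
tokens_per_second = {
    ("Llama3 8B", "4-bit"): 108.07,
    ("Llama3 8B", "F16"): 47.15,
    ("Llama3 70B", "4-bit"): 16.29,
}

# How much faster the 8B model is than the 70B model at the same 4-bit format.
size_ratio = tokens_per_second[("Llama3 8B", "4-bit")] / tokens_per_second[("Llama3 70B", "4-bit")]

# How much faster the 8B model is with 4-bit weights than at F16.
quant_ratio = tokens_per_second[("Llama3 8B", "4-bit")] / tokens_per_second[("Llama3 8B", "F16")]

print(f"8B vs 70B (4-bit): {size_ratio:.2f}x")     # 6.63x
print(f"8B 4-bit vs 8B F16: {quant_ratio:.2f}x")   # 2.29x
```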

Analogizing Performance: Think of Llama3 70B as a freight train hauling a massive cargo. It can carry far more, but the load limits its speed. In contrast, Llama3 8B is like a nimble city bus, swiftly maneuvering through urban streets with ease.

Practical Recommendations: Use Cases and Workarounds

Choosing the Right Model for Your Needs

Now that we understand the performance landscape, let's discuss choosing the right model for your use cases:

- Llama3 8B: Ideal for tasks demanding speed and efficiency, such as generating short pieces of text or powering basic chatbots.

- Llama3 70B: Best suited for complex tasks that require a broad knowledge base and advanced language processing capabilities. This model might be a good choice for summarizing long documents or generating creative content with a high level of detail.
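One way to turn the speed figures into a decision is to ask whether a model can finish a typical response within your latency budget. The sketch below is an illustration under simplifying assumptions: it uses the two 4-bit results from the table, ignores prompt processing time, and the function names are made up for this example.

```python
# 4-bit tokens/second from the table above.
MEASURED_TPS = {"Llama3 8B": 108.07, "Llama3 70B": 16.29}


def generation_seconds(n_tokens: int, tokens_per_second: float) -> float:
    """Time to generate n_tokens at a steady rate (prompt processing ignored)."""
    return n_tokens / tokens_per_second


def models_within_budget(n_tokens: int, budget_seconds: float) -> list:
    """Names of models that can produce n_tokens within the latency budget."""
    return [
        name
        for name, tps in MEASURED_TPS.items()
        if generation_seconds(n_tokens, tps) <= budget_seconds
    ]


# A 300-token answer takes about 2.8 s on 8B and about 18.4 s on 70B.
print(models_within_budget(300, budget_seconds=5))   # ['Llama3 8B']
print(models_within_budget(300, budget_seconds=20))  # ['Llama3 8B', 'Llama3 70B']
```

If both models meet the budget, output quality rather than speed should decide between them.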

Workarounds for F16 Quantization

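The lack of F16 performance data for Llama3 70B means you cannot yet judge how the unquantized model behaves on this hardware. Two possible workarounds:

- Experiment with different quantization methods: Try other formats around Q4_K_M, such as the smaller Q3_K_M or the larger Q5_K_M and Q8_0, to find a sweet spot between accuracy and speed. Keep in mind that larger formats may not fit in 48 GB of VRAM. Q8_0, at about one byte per weight, needs roughly 70 GB or more for a 70B model.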

- Optimize the model's architecture: Investigate techniques like model pruning, knowledge distillation, or quantization-aware training to improve performance without sacrificing too much accuracy.

Importance of Context and Use Case

The ultimate choice depends on your specific use case and desired performance characteristics. Don't force a large model to do a small task, and don't settle for a small model when you need the power of a giant. Remember, it's all about finding the right fit!

FAQ

What is quantization?

Quantization is a technique used to reduce the size and memory footprint of a model by converting its parameters (weights and biases) from high-precision floating-point numbers to lower-precision integers. This process can significantly accelerate inference speed, especially on devices with limited memory.
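As a toy illustration of the idea, the sketch below quantizes a small block of weights to 4-bit signed integers using a single shared scale, then restores them. This is plain absmax quantization chosen for clarity. It is not the Q4_K_M scheme, which is more elaborate, and the function names and sample weights are made up for this example.

```python
def quantize_block(values, bits=4):
    """Map floats to signed integers in [-qmax, qmax] sharing one scale."""
    qmax = 2 ** (bits - 1) - 1            # 7 for 4-bit
    largest = max(abs(v) for v in values)
    scale = largest / qmax if largest > 0 else 1.0
    quantized = [round(v / scale) for v in values]
    return quantized, scale


def dequantize_block(quantized, scale):
    """Recover approximate floats from the integers and the shared scale."""
    return [q * scale for q in quantized]


weights = [0.12, -0.53, 0.07, 0.91, -0.33, 0.0, 0.48, -0.76]
quantized, scale = quantize_block(weights)
restored = dequantize_block(quantized, scale)

worst_error = max(abs(w - r) for w, r in zip(weights, restored))
print("integers:", quantized)
print(f"scale: {scale:.4f}, worst round-trip error: {worst_error:.4f}")
```

Each weight now needs 4 bits plus a share of the per-block scale instead of 16 bits, and the round-trip error is at most half the scale. That is the accuracy cost being traded for the smaller footprint.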

Why is the F16 result missing?

The benchmark data used here has no F16 entry for Llama3 70B on this setup. The most likely reason is memory: at 2 bytes per parameter, the F16 weights alone need about 140 GB, while two 3090s provide 48 GB of VRAM in total. Missing entries like this are common in the rapidly evolving field of LLMs, where benchmarks often cover only the configurations that fit on the tested hardware.

Can I run Llama3 70B on a smaller GPU?

Yes, but expect a significant performance hit. You will need a more aggressively quantized version of the model, and any layers that do not fit in VRAM must be offloaded to the CPU and system RAM, which slows response times considerably.
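To get a feel for how much of the model a given card can hold, you can estimate how many transformer layers fit in VRAM. The sketch below is a rough approximation under stated assumptions: about 42 GB of 4-bit weights spread evenly over 80 layers, a 2 GB reserve for the KV cache and buffers, and embeddings ignored. The function name and the reserve value are hypothetical. Tools such as llama.cpp take the resulting count through the `--n-gpu-layers` option.

```python
def layers_that_fit(model_gb: float, n_layers: int, vram_gb: float,
                    reserve_gb: float = 2.0) -> int:
    """Estimate how many equally sized layers fit in VRAM after a reserve."""
    per_layer_gb = model_gb / n_layers
    usable_gb = max(vram_gb - reserve_gb, 0.0)
    return min(n_layers, int(usable_gb // per_layer_gb))


MODEL_GB = 42.0   # assumed size of the 4-bit 70B weights
N_LAYERS = 80     # assumed transformer layer count for the 70B model

single_card = layers_that_fit(MODEL_GB, N_LAYERS, vram_gb=24.0)
dual_card = layers_that_fit(MODEL_GB, N_LAYERS, vram_gb=48.0, reserve_gb=4.0)

print(f"one 24 GB card: about {single_card} of {N_LAYERS} layers on the GPU")
print(f"two 24 GB cards: about {dual_card} of {N_LAYERS} layers on the GPU")
```

Under these assumptions a single 24 GB card holds roughly half the layers, so the rest would run on the CPU, which is where most of the slowdown comes from.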

Keywords

Llama3, 70B, NVIDIA 3090, GPU, performance, token generation speed, quantization, Q4_K_M, F16, benchmarks, use case, recommendations, optimization, LLM, large language model, AI, machine learning, deep learning, NLP, natural language processing.