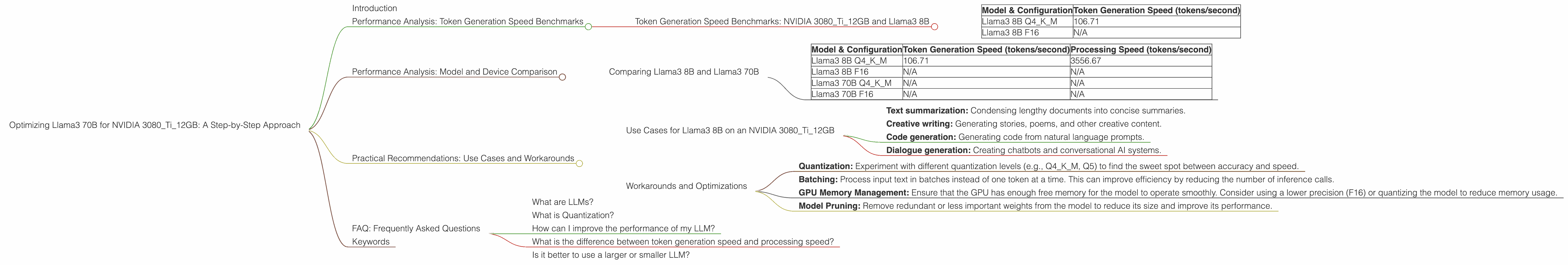

Optimizing Llama3 70B for NVIDIA 3080 Ti 12GB: A Step-by-Step Approach

Introduction

The world of large language models (LLMs) is buzzing with excitement, and for good reason. LLMs are revolutionizing the way we interact with technology. From generating creative content to providing insightful summaries, LLMs are becoming indispensable tools for developers and everyday users alike.

But with the increasing complexity of LLMs comes the challenge of running them efficiently. Larger models require more computational power, and resource constraints can hinder their performance. This is where optimization comes in. In this guide, we'll delve into the world of Llama3 70B and explore ways to optimize its performance on a popular GPU, the NVIDIA 3080 Ti 12GB.

Performance Analysis: Token Generation Speed Benchmarks

Token Generation Speed Benchmarks: NVIDIA 3080 Ti 12GB and Llama3 8B

The token generation speed is a crucial metric for evaluating the performance of an LLM. It measures how quickly the model can produce new text, which directly impacts the responsiveness and efficiency of your applications. Let's examine the token generation speeds of Llama3 8B on the NVIDIA 3080 Ti 12GB.

| Model & Configuration | Token Generation Speed (tokens/second) |
|---|---|
| Llama3 8B Q4_K_M | 106.71 |
| Llama3 8B F16 | N/A |

It's important to note that no token generation speed was recorded for Llama3 8B at F16 precision. A plausible reason is memory: at 2 bytes per parameter, the 8B model's F16 weights alone take about 16 GB, more than the card's 12 GB of VRAM. This gap underscores the importance of matching the model configuration to your device.

Think of it like this: token generation speed is similar to how fast a car can get you from point A to point B. A faster car (higher token generation speed) gets you there quicker and allows for a smoother and more efficient journey (faster responses and better performance).

Here's what we can glean from these numbers:

- Llama3 8B with Q4_K_M quantization achieves a respectable 106.71 tokens per second on the NVIDIA 3080 Ti 12GB, or roughly 9.4 ms per generated token. That is comfortably fast for interactive workloads such as streaming chat replies.

Understanding the Metrics:

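- Q4_K_M Quantization: This is one of llama.cpp's quantization formats, a way of compressing the model's weights to reduce its memory footprint and speed up inference. "Q4" means most weights are stored with 4 bits (versus 16 bits in F16). "K" refers to llama.cpp's k-quant scheme, which groups weights into blocks that each carry their own scaling factors, and "M" stands for the medium variant, which keeps some sensitive tensors at higher precision. As a result, the average works out to a little under 5 bits per weight rather than exactly 4.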
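To see why precision matters so much on a 12 GB card, here is a back-of-the-envelope estimate of weight memory (parameters × bits per weight ÷ 8). It is a sketch under stated assumptions: the parameter counts (about 8.03B and 70.6B) are approximate published sizes, the 4.85 bits-per-weight average for Q4_K_M is an assumed figure (the real mix of tensor types varies by model), and the KV cache and runtime buffers are ignored, so actual usage is higher.

```python
# Rough weight-memory estimate: parameters x bits per weight / 8.
# Assumptions (approximate, not measured):
#   - Llama3 8B has ~8.03e9 parameters, Llama3 70B ~70.6e9
#   - F16 stores 16 bits per weight
#   - Q4_K_M averages ~4.85 bits per weight (assumed average; varies by model)
# The KV cache, activations, and CUDA context are NOT included.

PARAMS = {"Llama3 8B": 8.03e9, "Llama3 70B": 70.6e9}
BITS_PER_WEIGHT = {"F16": 16.0, "Q4_K_M": 4.85}
VRAM_GB = 12.0  # NVIDIA 3080 Ti 12GB


def weight_gb(n_params: float, bits_per_weight: float) -> float:
    """Size of the weights in decimal gigabytes (1 GB = 1e9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


if __name__ == "__main__":
    for model, n in PARAMS.items():
        for fmt, bpw in BITS_PER_WEIGHT.items():
            size = weight_gb(n, bpw)
            verdict = "fit" if size < VRAM_GB else "do not fit"
            print(f"{model} {fmt}: ~{size:.1f} GB; weights alone {verdict} in {VRAM_GB:.0f} GB")
```

Under these assumptions, only Llama3 8B at Q4_K_M (about 4.9 GB) leaves room to spare; Llama3 8B at F16 (about 16 GB) and both 70B variants exceed 12 GB, which would be consistent with the N/A entries in the tables.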

Performance Analysis: Model and Device Comparison

Comparing Llama3 8B and Llama3 70B

Now, let's shift our focus to the larger Llama3 70B. The model size significantly impacts its performance, and while a larger model can offer more advanced capabilities, it will inevitably require more computational resources.

| Model & Configuration | Token Generation Speed (tokens/second) | Processing Speed (tokens/second) |
|---|---|---|
| Llama3 8B Q4_K_M | 106.71 | 3556.67 |
| Llama3 8B F16 | N/A | N/A |
| Llama3 70B Q4_K_M | N/A | N/A |
| Llama3 70B F16 | N/A | N/A |

We don't have the performance numbers for Llama3 70B on this specific device, but we can make some educated guesses.

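It's likely that the 70B model would be dramatically slower on this card, and memory is the main reason. Even at Q4_K_M, its weights take roughly 40 GB or more, well beyond 12 GB of VRAM, so most layers would have to be offloaded to system RAM and run on the CPU, which has far less memory bandwidth than the GPU. This is a common trade-off in the LLM world: larger models are more capable but slower and more demanding to run.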

Think of it like this: a larger LLM is like a large truck, with a powerful engine but a bigger appetite for fuel (memory and processing power). A smaller LLM like the 8B model is like a compact car: less powerful, but more efficient.
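One way to make the expected slowdown concrete: single-stream token generation is usually limited by memory bandwidth, because each new token reads roughly all of the weights once. The sketch below splits a 70B Q4_K_M model between VRAM and system RAM (the split llama.cpp exposes through its `--n-gpu-layers` / `-ngl` option) and estimates an upper bound on tokens per second. All inputs are assumptions: about 42.8 GB of weights (70.6B parameters at an assumed ~4.85 bits each) spread evenly over 80 transformer layers, a hypothetical 2 GB of VRAM reserved for the KV cache and buffers, 912 GB/s for the 3080 Ti's memory, and a hypothetical 60 GB/s for dual-channel system RAM. Compute time and PCIe transfers are ignored, so treat the result as an optimistic ceiling, not a prediction.

```python
# Bandwidth-bound ceiling for single-stream decoding with partial GPU offload.
# Each generated token reads every weight roughly once, so
#   time_per_token ~= bytes_on_gpu / gpu_bandwidth + bytes_on_cpu / cpu_bandwidth
# All numbers below are approximate assumptions, not measurements.
import math

MODEL_GB = 42.8       # Llama3 70B at an assumed ~4.85 bits/weight (Q4_K_M)
N_LAYERS = 80         # transformer blocks in Llama3 70B
VRAM_GB = 12.0        # NVIDIA 3080 Ti 12GB
RESERVED_GB = 2.0     # hypothetical headroom for KV cache, buffers, CUDA context
GPU_BW_GBPS = 912.0   # 3080 Ti memory bandwidth (spec sheet)
CPU_BW_GBPS = 60.0    # hypothetical dual-channel system RAM bandwidth


def layers_on_gpu(model_gb, n_layers, vram_gb, reserved_gb):
    """Number of whole layers that fit in VRAM after reserving headroom."""
    per_layer = model_gb / n_layers
    return min(n_layers, math.floor((vram_gb - reserved_gb) / per_layer))


def max_tokens_per_second(model_gb, n_layers, gpu_layers, gpu_bw, cpu_bw):
    """Upper bound on decode speed with gpu_layers on the GPU and the rest on the CPU."""
    per_layer = model_gb / n_layers
    gpu_gb = gpu_layers * per_layer
    cpu_gb = (n_layers - gpu_layers) * per_layer
    seconds_per_token = gpu_gb / gpu_bw + cpu_gb / cpu_bw
    return 1.0 / seconds_per_token


if __name__ == "__main__":
    k = layers_on_gpu(MODEL_GB, N_LAYERS, VRAM_GB, RESERVED_GB)
    tps = max_tokens_per_second(MODEL_GB, N_LAYERS, k, GPU_BW_GBPS, CPU_BW_GBPS)
    print(f"{k}/{N_LAYERS} layers on GPU, ceiling of about {tps:.1f} tokens/s")
```

With these inputs, only 18 of the 80 layers fit on the GPU and the ceiling lands below 2 tokens per second, because the CPU-side layers dominate. For comparison, the same formula for Llama3 8B Q4_K_M (roughly 4.9 GB, entirely on the GPU) gives a ceiling near 187 tokens per second, and the measured 106.71 tokens per second sits below it as expected.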

We can also speculate about the impact of different precision levels (F16 vs. Q4_K_M):

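- F16 precision stores each weight as a 16-bit float. It typically preserves accuracy best, but it needs about 2 bytes per parameter, which quickly exhausts the VRAM of consumer GPUs like this one.

- Q4_K_M quantization trades some accuracy for efficiency. Cutting weights to roughly 4 to 5 bits shrinks memory use to under a third of F16 and usually speeds up generation, at the cost of a small, occasionally noticeable, loss in output quality.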
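The accuracy cost comes from rounding. The toy example below applies plain round-to-nearest 4-bit quantization to blocks of 32 weights, each block with its own scale, and measures the reconstruction error. It is a deliberately simplified scheme, closer in spirit to llama.cpp's older Q4_0 format than to Q4_K_M (which adds super-blocks, quantized scales and minimums, and mixed tensor types), and the random Gaussian weights are a stand-in for real model weights.

```python
# Toy 4-bit block quantization: round-to-nearest with one scale per block.
# Simplified illustration only; this is NOT the Q4_K_M algorithm.
import math
import random

BLOCK = 32  # weights per block; each block gets its own scale


def quantize_block(ws):
    """Map floats to integers in [-8, 7] plus one float scale for the block."""
    max_abs = max(abs(w) for w in ws)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    qs = [max(-8, min(7, round(w / scale))) for w in ws]
    return qs, scale


def dequantize_block(qs, scale):
    """Recover approximate floats from the 4-bit integers and the block scale."""
    return [q * scale for q in qs]


def roundtrip(weights):
    """Quantize and dequantize a list of weights block by block."""
    out = []
    for i in range(0, len(weights), BLOCK):
        qs, scale = quantize_block(weights[i:i + BLOCK])
        out.extend(dequantize_block(qs, scale))
    return out


def rms(xs):
    return math.sqrt(sum(x * x for x in xs) / len(xs))


if __name__ == "__main__":
    rng = random.Random(0)  # fixed seed for repeatability
    weights = [rng.gauss(0.0, 0.02) for _ in range(4096)]  # stand-in weights
    approx = roundtrip(weights)
    err = [a - w for a, w in zip(approx, weights)]
    print(f"relative RMS error: {rms(err) / rms(weights):.3f}")
```

The error is small but not zero: each weight now lands on one of only 16 levels per block. Real formats such as Q4_K_M spend a few extra bits on smarter scaling and on keeping sensitive tensors at higher precision to shrink this error further.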

Practical Recommendations: Use Cases and Workarounds

Use Cases for Llama3 8B on an NVIDIA 3080 Ti 12GB

Llama3 8B at Q4_K_M on the NVIDIA 3080 Ti 12GB is a solid combination for a variety of use cases:

- Text summarization: Condensing lengthy documents into concise summaries.

- Creative writing: Generating stories, poems, and other creative content.

- Code generation: Generating code from natural language prompts.

- Dialogue generation: Creating chatbots and conversational AI systems.

Workarounds and Optimizations

Even if you don't have all the data points, there are still ways to optimize your LLM's performance:

- Quantization: Experiment with different quantization levels (e.g., Q4_K_M, Q5) to find the sweet spot between accuracy and speed.

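- Batching: Process tokens in batches rather than one at a time, for example by feeding the whole prompt through the model in large chunks or by grouping several independent requests together. Batched work uses the GPU's parallelism more fully and needs fewer forward passes, which is why prompt processing runs far faster than token generation in the table above.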

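- GPU Memory Management: Make sure the weights, the KV cache, and runtime buffers all fit in VRAM; whatever does not fit must be offloaded to much slower system memory. Storing weights in F16 rather than FP32 halves their size, quantizing further (e.g., to Q4_K_M) shrinks them to under a third of F16, and a shorter context window shrinks the KV cache.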

- Model Pruning: Remove redundant or less important weights from the model to reduce its size and improve its performance.
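Besides the weights, the KV cache competes for VRAM and grows linearly with context length. A sketch of the standard estimate, 2 (keys and values) × layers × KV heads × head dimension × context length × bytes per element, assuming an F16 cache (2 bytes per element) and the published Llama 3 shapes (8B: 32 layers; 70B: 80 layers; both use 8 KV heads of dimension 128 via grouped-query attention):

```python
# KV-cache size estimate for a single sequence.
# Assumes an F16 cache (2 bytes per element) and Llama 3 attention shapes.

GIB = 1024 ** 3


def kv_cache_bytes(n_layers, n_kv_heads, head_dim, n_ctx, bytes_per_elem=2):
    """Bytes needed to cache keys and values for n_ctx tokens."""
    # Factor 2: one cached tensor for keys and one for values, in every layer.
    return 2 * n_layers * n_kv_heads * head_dim * n_ctx * bytes_per_elem


if __name__ == "__main__":
    for name, layers in [("Llama3 8B", 32), ("Llama3 70B", 80)]:
        for ctx in (2048, 8192):
            size = kv_cache_bytes(layers, n_kv_heads=8, head_dim=128, n_ctx=ctx)
            print(f"{name}, {ctx} tokens: {size / GIB:.2f} GiB")
```

For Llama3 8B this comes to exactly 1 GiB at 8,192 tokens, so roughly 4.9 GB of Q4_K_M weights (an estimate) plus a full-length cache still fits in 12 GB with room for buffers. Shortening the context is a cheap lever when memory gets tight.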

FAQ: Frequently Asked Questions

What are LLMs?

LLMs are a specific type of artificial intelligence (AI) that excels at understanding and generating human-like text. They are trained on massive amounts of data and can perform a range of natural language processing tasks.

What is Quantization?

Quantization is like compressing the model to make it smaller and faster. It involves representing the model's weights using fewer bits, which reduces the memory usage and computational requirements.

How can I improve the performance of my LLM?

There are numerous ways to optimize an LLM's performance, including quantization, batching, GPU memory management, and model pruning. By experimenting with these techniques, you can find the right balance between accuracy and efficiency for your specific use case.

What is the difference between token generation speed and processing speed?

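Token generation speed measures how quickly the model produces new tokens, which happens one token at a time (the decode phase). Processing speed, in benchmarks like these, usually means prompt processing: how quickly the model reads the input prompt, which it can do for many tokens in parallel (the prefill phase). That is why the processing figure for Llama3 8B Q4_K_M (3556.67 tokens/second) is much higher than its generation figure (106.71 tokens/second).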
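Because the two phases run at very different rates, a request's latency is roughly prompt tokens ÷ processing speed plus output tokens ÷ generation speed. The sketch below plugs in the Llama3 8B Q4_K_M figures reported for the 3080 Ti; the 1,000-token prompt and 250-token reply are hypothetical, and it ignores sampling overhead and the gradual slowdown as the context fills.

```python
# Rough request latency = prefill time + decode time.
# Speeds are the Llama3 8B Q4_K_M numbers reported for the NVIDIA 3080 Ti 12GB;
# the prompt and reply lengths in the example are hypothetical.

PP_TOKENS_PER_S = 3556.67  # prompt processing (prefill)
TG_TOKENS_PER_S = 106.71   # token generation (decode)


def request_latency_s(prompt_tokens, output_tokens,
                      pp_speed=PP_TOKENS_PER_S, tg_speed=TG_TOKENS_PER_S):
    """Estimated seconds to read the prompt and then generate the reply."""
    prefill = prompt_tokens / pp_speed
    decode = output_tokens / tg_speed
    return prefill + decode


if __name__ == "__main__":
    prompt, reply = 1000, 250
    total = request_latency_s(prompt, reply)
    print(f"prefill {prompt / PP_TOKENS_PER_S:.2f} s + "
          f"decode {reply / TG_TOKENS_PER_S:.2f} s = {total:.2f} s")
    print(f"per generated token: {1000 / TG_TOKENS_PER_S:.1f} ms")
```

Decode dominates here: generating 250 tokens takes roughly eight times as long as reading 1,000 prompt tokens, which is why token generation speed usually governs how responsive an application feels.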

Is it better to use a larger or smaller LLM?

The choice between a larger or smaller LLM depends on your specific needs and resources. Larger models offer more advanced capabilities but require more computational power, while smaller models are more resource-efficient but may have limitations in their capabilities.

Keywords

LLM, Llama3, NVIDIA 3080 Ti 12GB, token generation speed, processing speed, quantization, Q4_K_M, F16, model size, performance analysis, optimization, use cases, workarounds, GPU memory management, batching, model pruning, natural language processing, AI, deep learning, inference, latency.