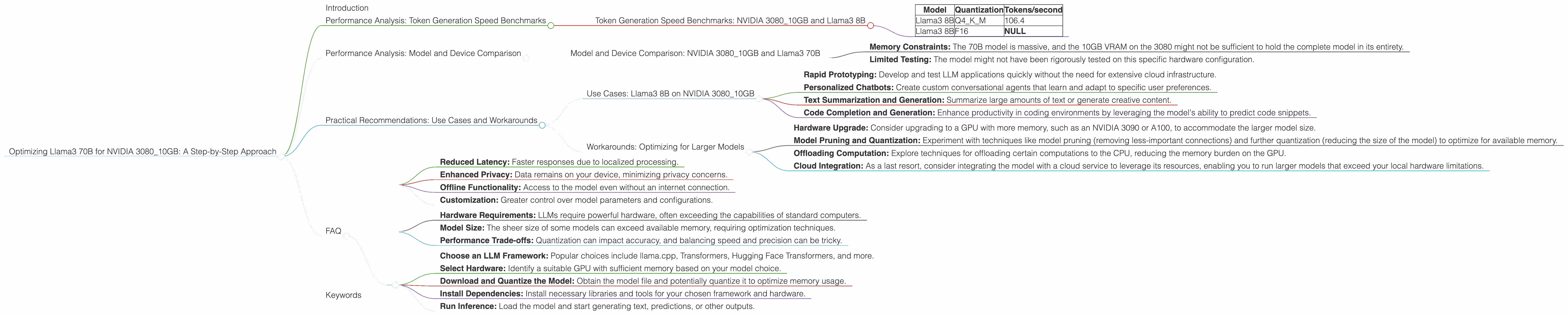

Optimizing Llama3 70B for NVIDIA 3080 10GB: A Step-by-Step Approach

Introduction

The world of large language models (LLMs) is evolving at breakneck speed, with new models and advancements emerging almost daily. While cloud-based LLMs offer impressive capabilities, running these models locally opens up new possibilities: personalized use cases, faster responses, and enhanced privacy.

In this article, we'll look at optimizing the Llama3 70B model, a heavyweight contender in the LLM arena, for the NVIDIA 3080 10GB GPU. We'll examine the available performance benchmarks, explore practical recommendations, and outline workarounds for getting the best performance out of this combination.

Performance Analysis: Token Generation Speed Benchmarks

Token Generation Speed Benchmarks: NVIDIA 3080 10GB and Llama3 8B

Let's start with Llama3 8B, the smaller sibling of the 70B model, on our target GPU. The table below shows its token generation speed in tokens per second (TPS) for two weight formats.

| Model | Quantization | Generation speed (tokens/s) |
|---|---|---|
| Llama3 8B | Q4_K_M | 106.4 |
| Llama3 8B | F16 | N/A (no data) |

- Q4_K_M: A 4-bit "k-quant" format from llama.cpp that trades a small amount of accuracy for a large reduction in model size, a good balance for most use cases.

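- F16: Stores weights as 16-bit half-precision floats, effectively the unquantized baseline. It preserves the most accuracy but needs 2 bytes per parameter, roughly 16 GB of weights for an 8B model, which exceeds the 3080's 10GB of VRAM. Because token generation is largely limited by memory bandwidth, F16 is usually slower than a 4-bit format, not faster.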
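The memory arithmetic behind these formats is simple: weight storage is roughly parameters × bits per weight / 8 bytes. The sketch below applies it to Llama3 8B on a 10GB card. It assumes a nominal 8 × 10^9 parameters and about 4.8 bits per weight for Q4_K_M (an approximation, since Q4_K_M mixes block formats), and it ignores the KV cache and runtime overhead, so "fits" here only means the weights themselves fit.

```python
def weight_memory_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate weight storage in decimal gigabytes (1 GB = 1e9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


LLAMA3_8B_PARAMS = 8e9  # nominal parameter count (assumption)
VRAM_GB = 10            # NVIDIA 3080 10GB

# 4.8 bits/weight for Q4_K_M is an assumed average, not an exact figure.
for name, bits in [("F16", 16), ("Q4_K_M (approx.)", 4.8)]:
    gb = weight_memory_gb(LLAMA3_8B_PARAMS, bits)
    # Weights only: a real run also needs room for the KV cache and buffers.
    verdict = "fits" if gb < VRAM_GB else "does not fit"
    print(f"{name:>17}: {gb:5.1f} GB -> weights {verdict} in {VRAM_GB} GB")
```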

Key Takeaways:

- With Q4_K_M, Llama3 8B generates 106.4 tokens per second, respectable performance for a local setup.

- No F16 result is available for Llama3 8B on the NVIDIA 3080 10GB. This is consistent with the F16 weights (about 16 GB) not fitting in 10GB of VRAM.

Think of it this way: at this rate the model produces text far faster than a person can read it, so interactive use feels responsive.
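To put 106.4 TPS in concrete terms, dividing a response length by the decode rate gives its generation time. This is a simplification: it assumes a constant rate and ignores prompt processing, and in practice generation slows somewhat as the context grows.

```python
MEASURED_TPS = 106.4  # Llama3 8B, Q4_K_M, NVIDIA 3080 10GB (from the table)


def generation_seconds(n_tokens: int, tokens_per_second: float) -> float:
    """Time to generate n_tokens at a steady decode rate (prompt processing ignored)."""
    return n_tokens / tokens_per_second


for n in (100, 500, 2000):
    print(f"{n:>5} tokens: {generation_seconds(n, MEASURED_TPS):5.1f} s")
```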

Performance Analysis: Model and Device Comparison

Model and Device Comparison: NVIDIA 3080 10GB and Llama3 70B

Unfortunately, no performance data is available for Llama3 70B on the NVIDIA 3080 10GB in either format. Several factors may explain this:

- Memory Constraints: The 70B model is massive. At F16 its weights need about 140 GB, and even with 4-bit quantization they need roughly 40 GB, far more than the 3080's 10GB of VRAM can hold.

- Limited Testing: The model might not have been rigorously tested on this specific hardware configuration.

This lack of data underscores the importance of researching and testing before deploying LLMs locally, especially large ones.
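A quick calculation supports the memory explanation. Dividing the usable VRAM (in bits) by the parameter count gives the largest average bits per weight that could possibly fit. This sketch assumes a nominal 70 × 10^9 parameters and counts only the weights; `reserve_gb` is a hypothetical parameter standing in for the KV cache and runtime buffers, which only tighten the limit.

```python
def max_bits_per_weight(vram_gb: float, n_params: float, reserve_gb: float = 0.0) -> float:
    """Largest average bits/weight whose weights fit in (vram_gb - reserve_gb) decimal GB."""
    usable_bits = (vram_gb - reserve_gb) * 1e9 * 8
    return usable_bits / n_params


LLAMA3_70B_PARAMS = 70e9  # nominal parameter count (assumption)

print(f"{max_bits_per_weight(10, LLAMA3_70B_PARAMS):.2f} bits/weight")  # → 1.14 bits/weight
print(f"{max_bits_per_weight(10, LLAMA3_70B_PARAMS, reserve_gb=1.5):.2f} bits/weight")  # → 0.97 bits/weight
```

Both results are around 1 bit per weight, while even an aggressive 2-bit format would need about 17.5 GB for 70B parameters, so the model cannot run from 10GB of VRAM alone.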

Practical Recommendations: Use Cases and Workarounds

Use Cases: Llama3 8B on NVIDIA 3080 10GB

While the 70B model remains elusive on this specific hardware, the Llama3 8B model can be a robust choice for various use cases:

- Rapid Prototyping: Develop and test LLM applications quickly without the need for extensive cloud infrastructure.

- Personalized Chatbots: Create custom conversational agents tailored to specific user preferences through prompting or fine-tuning.

- Text Summarization and Generation: Summarize documents that fit within the model's context window, or generate creative content.

- Code Completion and Generation: Enhance productivity in coding environments by leveraging the model's ability to predict code snippets.

Workarounds: Optimizing for Larger Models

Running the 70B model entirely on a 3080 10GB is not realistic, but here are some potential workarounds:

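- Hardware Upgrade: Consider a GPU with more memory, such as an NVIDIA 3090 (24GB) or an A100 (40GB or 80GB). Keep in mind that even at 4-bit quantization the 70B weights take roughly 40GB, so a single 24GB card still needs partial CPU offloading, while an 80GB A100 or a multi-GPU setup can hold the whole model.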

- Model Pruning and Quantization: Experiment with model pruning (removing less important weights or connections) and more aggressive quantization (fewer bits per weight) to shrink the model toward the available memory.

- Offloading Computation: Keep as many layers as fit on the GPU and run the rest on the CPU, as llama.cpp allows, reducing the memory burden on the GPU at the cost of noticeably slower generation.

- Cloud Integration: As a last resort, run the model on a cloud service whose hardware exceeds your local limits.
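The offloading workaround can be sized roughly before any testing. The sketch below assumes about 4.8 bits per weight (a rough figure for Q4_K_M-style files), the 80 transformer layers of Llama 3 70B, weights spread evenly across layers (ignoring the embedding and output layers), and 1.5 GB of VRAM held back for the KV cache and runtime buffers; the 1.5 GB reserve is a guess, not a measured value. In llama.cpp, the resulting count is a starting point for the `--n-gpu-layers` (`-ngl`) option, to be adjusted by trial.

```python
import math


def gpu_layers_that_fit(model_gb: float, n_layers: int, vram_gb: float, reserve_gb: float) -> int:
    """Estimate how many transformer layers fit in VRAM, assuming equal-sized layers."""
    per_layer_gb = model_gb / n_layers
    usable_gb = max(vram_gb - reserve_gb, 0.0)
    return min(n_layers, math.floor(usable_gb / per_layer_gb))


# Assumptions: 70e9 parameters at ~4.8 bits/weight, 80 layers, 1.5 GB reserve.
model_gb = 70e9 * 4.8 / 8 / 1e9  # roughly 42 GB of weights
on_gpu = gpu_layers_that_fit(model_gb, n_layers=80, vram_gb=10, reserve_gb=1.5)
print(f"~{on_gpu} of 80 layers on the GPU, {80 - on_gpu} on the CPU")
```

With most layers running on the CPU, generation speed is then bounded mainly by system memory bandwidth rather than by the GPU.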

FAQ

Q: What is quantization and why is it important for LLMs?

A: Quantization reduces the size and memory footprint of a model by representing its weights (and sometimes activations) with fewer bits. This can speed up computation and reduce memory usage, which makes it particularly valuable for running LLMs on devices with limited resources. It is similar to saving a large image as a JPEG: the file shrinks considerably at the cost of some detail.
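To make the idea concrete, here is a minimal sketch of symmetric "absmax" quantization of a handful of weights to 4-bit integers with one shared scale. It is a textbook illustration, not the block-wise k-quant scheme that llama.cpp's Q4_K_M actually uses; the weight values are made up.

```python
def quantize_symmetric(weights: list[float], bits: int = 4) -> tuple[list[int], float]:
    """Map floats to signed ints in [-qmax, qmax] using a single shared scale."""
    qmax = 2 ** (bits - 1) - 1  # 7 for 4-bit
    absmax = max(abs(w) for w in weights)
    scale = absmax / qmax if absmax > 0 else 1.0
    # Round to the nearest integer step and clamp to the representable range.
    q = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q: list[int], scale: float) -> list[float]:
    """Recover approximate float weights from the integers and the scale."""
    return [v * scale for v in q]


weights = [0.12, -0.5, 0.33, 0.01, -0.27, 0.49]  # illustrative values
q, scale = quantize_symmetric(weights, bits=4)
restored = dequantize(q, scale)
print(q)
print([round(r, 3) for r in restored])
```

Each 4-bit value needs half a byte instead of the two bytes of F16, which is where most of the savings come from; each restored weight is off by at most half a quantization step. Practical schemes such as Q4_K_M keep a separate scale for each small block of weights so that one outlier does not coarsen the steps for the whole tensor.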

Q: What are the benefits of local LLM deployments?

A: Local deployments offer several benefits over cloud-based options:

- Reduced Latency: No network round trip to a remote server, so responses can begin sooner.

- Enhanced Privacy: Data remains on your device, minimizing privacy concerns.

- Offline Functionality: Access to the model even without an internet connection.

- Customization: Greater control over model parameters and configurations.

Q: What are the limitations of running LLMs locally?

A: Local deployments can pose challenges:

- Hardware Requirements: LLMs require powerful hardware, often exceeding the capabilities of standard computers.

- Model Size: The sheer size of some models can exceed available memory, requiring optimization techniques.

- Performance Trade-offs: Quantization can impact accuracy, and balancing speed and precision can be tricky.

Q: How can I get started with local LLM deployments?

A:

- Choose an LLM Framework: Popular choices include llama.cpp, Hugging Face Transformers, and others.

- Select Hardware: Identify a suitable GPU with sufficient memory based on your model choice.

- Download and Quantize the Model: Obtain the model file and, if needed, quantize it (or download a pre-quantized version) to reduce memory usage.

- Install Dependencies: Install necessary libraries and tools for your chosen framework and hardware.

- Run Inference: Load the model and start generating text, predictions, or other outputs.
