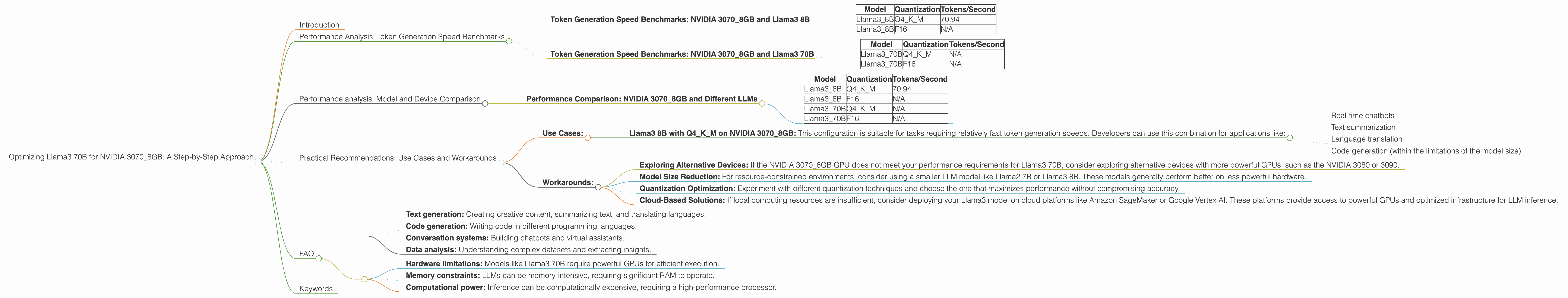

Optimizing Llama3 70B for NVIDIA 3070 8GB: A Step-by-Step Approach

Introduction

Running large language models (LLMs) locally depends on how well a model fits the available hardware. This article looks at Llama3 models on the NVIDIA 3070_8GB GPU: it reports the token generation speed measured for Llama3 8B, notes that no results are available for Llama3 70B on this card, and offers practical recommendations for developers.

Performance Analysis: Token Generation Speed Benchmarks

Token Generation Speed Benchmarks: NVIDIA 3070_8GB and Llama3 8B

This section focuses on the token generation speed of the Llama3 8B model on the NVIDIA 3070_8GB GPU, using different quantization techniques. These benchmarks provide valuable insights into the impact of quantization on model performance and help developers choose the optimal configuration for their specific needs.

| Model | Quantization | Tokens/Second |
|---|---|---|
| Llama3_8B | Q4_K_M | 70.94 |
| Llama3_8B | F16 | N/A |

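Interpretation:

- Llama3 8B with Q4_K_M quantization generates 70.94 tokens/second on the NVIDIA 3070_8GB GPU.

- No F16 result is available for Llama3 8B. F16 is unquantized 16-bit precision rather than a quantization scheme; at 2 bytes per parameter, the weights of an 8B model take roughly 16 GB, which exceeds the card's 8 GB of VRAM and likely explains the gap.

Key Takeaway: Q4_K_M is the only configuration with a measured result for Llama3 8B on the NVIDIA 3070_8GB, so it is the practical choice here. 4-bit quantization shrinks the model enough to fit in 8 GB of VRAM, at the cost of some workload-dependent loss in accuracy compared with F16.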
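For readers who want to reproduce a tokens-per-second figure like the one in the table, the measurement itself is simple: count the generated tokens and divide by the elapsed wall-clock time. The sketch below is a minimal harness, not the method used to produce the number above. `measure_tokens_per_second` and `fake_generate` are hypothetical names, and `fake_generate` only stands in for a real model's streaming output.

```python
import time
from typing import Callable, Iterable


def measure_tokens_per_second(
    generate: Callable[[], Iterable[str]],
    clock: Callable[[], float] = time.perf_counter,
) -> float:
    """Run `generate` once and return tokens produced per second of wall time."""
    start = clock()
    token_count = sum(1 for _ in generate())  # consume the whole stream
    elapsed = clock() - start
    if elapsed <= 0:
        raise ValueError("elapsed time must be positive")
    return token_count / elapsed


def fake_generate() -> Iterable[str]:
    """Hypothetical stand-in for a model: yields 50 dummy tokens with a small delay."""
    for i in range(50):
        time.sleep(0.001)
        yield f"tok{i}"


if __name__ == "__main__":
    rate = measure_tokens_per_second(fake_generate)
    print(f"{rate:.2f} tokens/second")
```

In practice you would replace `fake_generate` with a function that streams tokens from your inference runtime, and average several runs, since a single run can be skewed by warm-up and prompt processing.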

Token Generation Speed Benchmarks: NVIDIA 3070_8GB and Llama3 70B

This section covers token generation speed for the Llama3 70B model on the NVIDIA 3070_8GB GPU. Unfortunately, no benchmark results are available for Llama3 70B on this GPU.

| Model | Quantization | Tokens/Second |
|---|---|---|
| Llama3_70B | Q4_K_M | N/A |
| Llama3_70B | F16 | N/A |

Interpretation:

- No token generation speed is available for Llama3 70B on the NVIDIA 3070_8GB GPU, with either Q4_K_M quantization or F16 precision.

Key Takeaway: The available data does not show how Llama3 70B performs on the NVIDIA 3070_8GB GPU. A likely reason is memory: the weights of a 70-billion-parameter model need far more than 8 GB even at about 4 bits per weight, so the model cannot be loaded entirely into this card's VRAM. Further investigation is needed to assess whether running Llama3 70B on this device is feasible at all.
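A rough back-of-the-envelope estimate helps explain the missing results. The sketch below multiplies parameter count by bits per weight to approximate weight storage and compares it with 8 GB of VRAM. The parameter counts are rounded, the 4.8 bits per weight used for Q4_K_M is an assumed approximation, and the estimate ignores the KV cache and activations, so real requirements are higher.

```python
def weight_memory_gb(n_params_billion: float, bits_per_weight: float) -> float:
    """Approximate weight storage in GB (10^9 bytes); excludes KV cache and activations."""
    return n_params_billion * bits_per_weight / 8


VRAM_GB = 8.0  # memory on the NVIDIA 3070_8GB

# (model, parameters in billions, format, bits per weight)
# 4.8 bits/weight for Q4_K_M is an assumption for illustration, not a measured value.
CONFIGS = [
    ("Llama3 8B", 8.0, "Q4_K_M", 4.8),
    ("Llama3 8B", 8.0, "F16", 16.0),
    ("Llama3 70B", 70.0, "Q4_K_M", 4.8),
    ("Llama3 70B", 70.0, "F16", 16.0),
]

for name, params, fmt, bits in CONFIGS:
    size = weight_memory_gb(params, bits)
    verdict = "fits" if size < VRAM_GB else "does not fit"
    print(f"{name} {fmt}: ~{size:.1f} GB of weights, {verdict} in {VRAM_GB:.0f} GB of VRAM")
```

Under these assumptions only Llama3 8B with Q4_K_M fits, which matches the one configuration that has a benchmark result.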

Performance Analysis: Model and Device Comparison

This section compares Llama3 model and quantization combinations on a single device, the NVIDIA 3070_8GB GPU, to help developers identify which combinations are practical for their applications.

Performance Comparison: NVIDIA 3070_8GB and Different LLMs

The table below shows token generation speeds for different Llama3 models on the NVIDIA 3070_8GB GPU (Note: Llama3 70B data is not available). This comparison highlights the impact of model size and quantization on the performance.

| Model | Quantization | Tokens/Second |
|---|---|---|
| Llama3_8B | Q4_K_M | 70.94 |
| Llama3_8B | F16 | N/A |
| Llama3_70B | Q4_K_M | N/A |
| Llama3_70B | F16 | N/A |

Interpretation:

- Only Llama3 8B with Q4_K_M quantization has a measured result on the NVIDIA 3070_8GB GPU (70.94 tokens/second). No data is available for Llama3 8B in F16 or for Llama3 70B in either format.

Key Takeaway: For developers who want to run Llama3 models locally on this GPU, Llama3 8B with Q4_K_M is the only configuration backed by a measurement. Treat the other rows as open questions rather than as evidence of how those configurations perform.

Practical Recommendations: Use Cases and Workarounds

This section provides practical advice for developers based on the performance analysis of Llama3 models on the NVIDIA 3070_8GB GPU.

Use Cases:

- Llama3 8B with Q4_K_M on NVIDIA 3070_8GB: This configuration is suitable for tasks that need relatively fast token generation. Developers can use this combination for applications like:

  - Real-time chatbots

  - Text summarization

  - Language translation

  - Code generation (within the limitations of the model size)
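To judge whether 70.94 tokens/second is fast enough for a use case such as a real-time chatbot, it helps to convert throughput into response time. The sketch below does that arithmetic. The response lengths are illustrative assumptions, and the estimate covers generation only, not prompt processing or model loading.

```python
MEASURED_TOKENS_PER_SECOND = 70.94  # Llama3 8B, Q4_K_M, NVIDIA 3070_8GB (from the table)


def generation_seconds(n_tokens: int,
                       tokens_per_second: float = MEASURED_TOKENS_PER_SECOND) -> float:
    """Time to generate n_tokens at a constant rate (prompt processing excluded)."""
    return n_tokens / tokens_per_second


# Illustrative response lengths: a short chat reply, a summary, a long answer.
for n in (50, 250, 1000):
    print(f"{n} tokens: ~{generation_seconds(n):.1f} s")
# 50 tokens: ~0.7 s
# 250 tokens: ~3.5 s
# 1000 tokens: ~14.1 s
```

A short chat reply arrives in under a second at this rate, while long outputs take several seconds, so streaming tokens to the user as they are generated is usually worthwhile.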

Workarounds:

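- Exploring Alternative Devices: If the NVIDIA 3070_8GB GPU does not meet your requirements for Llama3 70B, consider alternative devices with more powerful GPUs, such as the NVIDIA 3080 or 3090. Check VRAM capacity first, since a model that does not fit in GPU memory cannot benefit from the extra compute; the 3090 offers 24 GB, three times the memory of the 3070.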

- Model Size Reduction: For resource-constrained environments, consider using a smaller model such as Llama2 7B or Llama3 8B. Smaller models need less memory and generally run faster on less powerful hardware.

- Quantization Optimization: Experiment with different quantization levels and choose the one that gives the best speed and memory footprint while keeping accuracy acceptable for your task.

- Cloud-Based Solutions: If local computing resources are insufficient, consider deploying your Llama3 model on cloud platforms like Amazon SageMaker or Google Vertex AI. These platforms provide access to powerful GPUs and optimized infrastructure for LLM inference.

FAQ

Q: What is quantization and how does it affect model performance?

A: Quantization reduces the size of a model by representing its weights with fewer bits, for example 4 bits instead of 16. Smaller weights need less memory and less memory bandwidth, which can let a model fit and run faster on resource-constrained devices. The trade-off is some loss of accuracy, which tends to grow as the bit width shrinks.
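The idea can be illustrated with a toy example. The sketch below quantizes a handful of weights to 4-bit signed integers using one shared scale, then restores them and reports the rounding error. It is a deliberately simplified scheme for illustration, not the Q4_K_M format, which is more elaborate; the function names and sample weights are made up.

```python
def quantize_4bit(weights):
    """Symmetric 4-bit quantization: map floats to integers in [-7, 7] with one shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    codes = [max(-7, min(7, round(w / scale))) for w in weights]
    return codes, scale


def dequantize(codes, scale):
    """Recover approximate float weights from integer codes."""
    return [c * scale for c in codes]


# Made-up sample weights for illustration.
weights = [0.12, -0.53, 0.98, -0.05, 0.31, -0.86, 0.44, 0.02]
codes, scale = quantize_4bit(weights)
restored = dequantize(codes, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("codes:", codes)
print("scale:", scale)
print("max rounding error:", max_error)
```

Each weight is now stored as a small integer plus a share of one scale value, which is where the memory saving comes from; the rounding error is the accuracy cost.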

Q: What are some common use cases for LLMs?

A: LLMs are used in a wide range of applications, including:

- Text generation: Creating creative content, summarizing text, and translating languages.

- Code generation: Writing code in different programming languages.

- Conversation systems: Building chatbots and virtual assistants.

- Data analysis: Understanding complex datasets and extracting insights.

Q: What are the challenges associated with running LLMs on local devices?

A: Running LLMs locally can be challenging due to:

- Hardware limitations: Models like Llama3 70B require powerful GPUs for efficient execution.

- Memory constraints: LLMs can be memory-intensive, requiring significant RAM to operate.

- Computational power: Inference can be computationally expensive, requiring a high-performance processor.

Keywords

LLMs, Llama3, NVIDIA 3070_8GB, token generation speed, performance, quantization, Q4_K_M, F16, GPU, use cases, workarounds, model size, cloud computing, chatbots, text summarization, language translation, code generation, data analysis.