Optimizing Llama3 70B for the Apple M3 Max: A Step-by-Step Approach

Introduction

The world of large language models (LLMs) is evolving at a breakneck pace, with new models and advancements appearing seemingly every day. One of the big challenges facing developers is finding the right balance between model performance and computational resources. This is where the "local LLM" movement comes in, allowing users to run powerful language models on their own devices. The key is optimizing these models for specific hardware, and today we're diving deep into squeezing the most performance out of the Llama3 70B model on the Apple M3 Max.

This article will analyze token generation speed benchmarks for Llama3 70B on the M3 Max, compare its performance with other models and devices, and provide practical recommendations for specific use cases. Think of it as a guide for achieving LLM nirvana on the Apple silicon platform.

Performance Analysis: Token Generation Speed Benchmarks

To assess Llama3 70B's performance on the M3 Max, we'll analyze token throughput, which measures how quickly the model reads its input and generates text. We'll look at different precision formats: F16 (16-bit floating point, i.e. unquantized) and the llama.cpp quantization formats Q8_0 and Q4_K_M (roughly 8.5 and 5 bits per weight). The format affects both model size and speed. Think of quantization as a diet for LLMs: we reduce the precision of the numbers to make the model smaller and faster, usually at some cost in output quality.
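As a minimal sketch of what a format like Q8_0 does, assuming llama.cpp's layout of 32 weights per block that share one scale, the code below maps each block's largest magnitude to 127 and rounds every weight to an 8-bit integer code. The function names are hypothetical, and the real format stores the scale as a 16-bit float inside a packed binary struct; plain Python numbers stand in for both here.

```python
import random

BLOCK_SIZE = 32  # assumed block size, following llama.cpp's Q8_0 layout


def quantize_q8_block(weights):
    """Quantize one block of floats to int codes in [-127, 127] plus one scale."""
    amax = max(abs(w) for w in weights)
    scale = amax / 127 if amax > 0 else 1.0  # largest magnitude maps to +/-127
    codes = [round(w / scale) for w in weights]
    return scale, codes


def dequantize_q8_block(scale, codes):
    """Recover approximate float weights from the integer codes."""
    return [scale * q for q in codes]


def quantize(weights):
    """Split a flat list of weights into blocks and quantize each block."""
    return [quantize_q8_block(weights[i:i + BLOCK_SIZE])
            for i in range(0, len(weights), BLOCK_SIZE)]


def dequantize(blocks):
    """Concatenate the dequantized blocks back into a flat list."""
    out = []
    for scale, codes in blocks:
        out.extend(dequantize_q8_block(scale, codes))
    return out


if __name__ == "__main__":
    random.seed(0)
    weights = [random.gauss(0.0, 0.02) for _ in range(64)]
    restored = dequantize(quantize(weights))
    max_err = max(abs(a - b) for a, b in zip(weights, restored))
    # Storage in the real format: 1 byte per weight + a 2-byte scale per 32 weights
    print(f"max abs error: {max_err:.6f}")
```

Each weight's rounding error is at most half of its block's scale, which is why per-block scales (rather than one scale for the whole tensor) keep the error small when weight magnitudes vary across the model.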

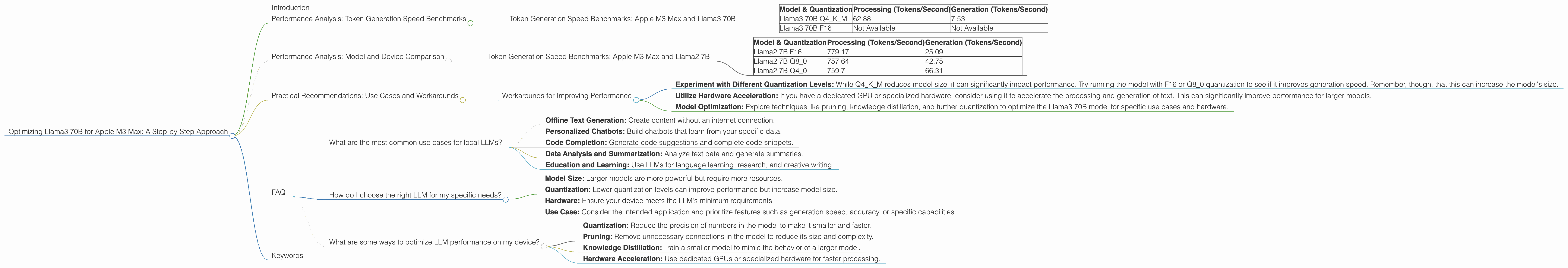

Token Generation Speed Benchmarks: Apple M3 Max and Llama3 70B

| Model & Quantization | Prompt Processing (tokens/s) | Token Generation (tokens/s) |
|---|---|---|
| Llama3 70B Q4_K_M | 62.88 | 7.53 |
| Llama3 70B F16 | Not available | Not available |

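With Q4_K_M quantization, Llama3 70B processes prompts at 62.88 tokens/second and generates new text at 7.53 tokens/second on the M3 Max. There is no F16 result, likely because at 16 bits per weight the model's weights alone take roughly 140 GB, more than the 128 GB of unified memory in the largest M3 Max configuration.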

Let's unpack these numbers:

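- Prompt processing speed measures how quickly the model ingests the input text (the prompt), which determines how long you wait before output starts. Generation speed measures how quickly it produces new tokens, one at a time, which determines how long a full response takes.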

- Prompt processing is reasonably fast, but generation is much slower, and far slower than the smaller Llama2 7B on the same machine (see the comparison below). At 7.53 tokens/second, long responses take a noticeable time to complete, which makes the model less suitable for real-time interactions or tasks where quick responses are critical.
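To see what these throughput figures mean for a single request, the sketch below turns them into a rough latency estimate. It assumes both rates stay constant for the whole request, which is a simplification (generation usually slows somewhat as the context grows), and the 500-token prompt and response are arbitrary illustrative sizes.

```python
# Throughput for Llama3 70B Q4_K_M on the M3 Max, taken from the table above.
PROMPT_TPS = 62.88     # prompt processing, tokens/second
GENERATION_TPS = 7.53  # token generation, tokens/second


def estimate_latency(prompt_tokens, output_tokens,
                     prompt_tps=PROMPT_TPS, generation_tps=GENERATION_TPS):
    """Return (seconds before output starts, total seconds), assuming constant rates."""
    prefill = prompt_tokens / prompt_tps      # time spent reading the prompt
    decode = output_tokens / generation_tps   # time spent generating the answer
    return prefill, prefill + decode


if __name__ == "__main__":
    first, total = estimate_latency(prompt_tokens=500, output_tokens=500)
    print(f"output starts after ~{first:.1f} s, full response after ~{total:.1f} s")
```

For a 500-token prompt and a 500-token answer this gives roughly 8 seconds before output starts and about 74 seconds in total, with generation accounting for almost 90% of the wait.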

Performance Analysis: Model and Device Comparison

It's useful to compare Llama3 70B's performance on the M3 Max with that of other models to better understand its strengths and weaknesses.

Let's take a peek at how the Llama2 7B model performs on the same M3 Max:

Token Generation Speed Benchmarks: Apple M3 Max and Llama2 7B

| Model & Quantization | Prompt Processing (tokens/s) | Token Generation (tokens/s) |
|---|---|---|
| Llama2 7B F16 | 779.17 | 25.09 |
| Llama2 7B Q8_0 | 757.64 | 42.75 |
| Llama2 7B Q4_0 | 759.7 | 66.31 |

Here's the takeaway:

- Llama2 7B outperforms Llama3 70B Q4_K_M at every precision tested, including unquantized F16.

- The gap shows up in both metrics: prompt processing is roughly 12 times faster for Llama2 7B at every precision, and generation is roughly 3.3 to 8.8 times faster, depending on the quantization.
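The ratios between the two tables can be computed directly. The sketch below divides each Llama2 7B throughput by the corresponding Llama3 70B Q4_K_M figure; the dictionary layout and function name are illustrative.

```python
# Tokens/second on the M3 Max, copied from the two benchmark tables.
LLAMA3_70B_Q4_K_M = {"processing": 62.88, "generation": 7.53}

LLAMA2_7B = {
    "F16":  {"processing": 779.17, "generation": 25.09},
    "Q8_0": {"processing": 757.64, "generation": 42.75},
    "Q4_0": {"processing": 759.70, "generation": 66.31},
}


def speedups(baseline, candidates):
    """Ratio of each candidate's throughput to the baseline, per metric."""
    return {
        quant: {metric: speeds[metric] / baseline[metric] for metric in baseline}
        for quant, speeds in candidates.items()
    }


if __name__ == "__main__":
    for quant, ratios in speedups(LLAMA3_70B_Q4_K_M, LLAMA2_7B).items():
        print(f"{quant}: processing x{ratios['processing']:.1f}, "
              f"generation x{ratios['generation']:.1f}")
```

The result is about 12× for prompt processing at every precision, and 3.3× (F16), 5.7× (Q8_0) and 8.8× (Q4_0) for generation.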

Why is this happening?

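- Model Complexity: Llama3 70B is much larger and more complex than Llama2 7B, with about ten times as many parameters. Generating each token requires reading essentially all of those weights from memory, so the per-token cost grows with model size.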

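- Quantization: Quantization is not what slows the 70B model down. The Llama2 7B results show the opposite trend: generation gets faster as precision drops (25.09 tokens/second at F16, 42.75 at Q8_0, 66.31 at Q4_0), because smaller weights mean less data to read per token. Q4_K_M shrinks the 70B model enough to fit comfortably in memory and speeds up generation; its cost is a possible loss in output quality, not speed.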
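The model-size argument can be made concrete with a common back-of-envelope model: during generation, each new token requires streaming essentially all of the weights from memory, so memory bandwidth divided by model size gives an upper bound on tokens per second. This is a sketch under stated assumptions, not a measurement. The 400 GB/s bandwidth is Apple's quoted figure for the higher-end M3 Max configuration (the lower-end one is quoted at 300 GB/s) and does not come from the benchmarks; the parameter counts and bits-per-weight values are approximations.

```python
# ASSUMPTION: M3 Max memory bandwidth (higher-end configuration), GB/s.
BANDWIDTH_GBPS = 400.0

# (parameters in billions, ASSUMED average bits per weight, measured generation tok/s)
MODELS = {
    "Llama3 70B Q4_K_M": (70.6, 4.85, 7.53),
    "Llama2 7B F16":     (6.74, 16.0, 25.09),
    "Llama2 7B Q8_0":    (6.74, 8.5, 42.75),
    "Llama2 7B Q4_0":    (6.74, 4.5, 66.31),
}


def model_size_gb(params_billion, bits_per_weight):
    """Approximate weight storage in GB (1 GB = 1e9 bytes)."""
    return params_billion * bits_per_weight / 8


def generation_ceiling(params_billion, bits_per_weight, bandwidth_gbps=BANDWIDTH_GBPS):
    """Upper bound on tokens/second if every token streams all weights once."""
    return bandwidth_gbps / model_size_gb(params_billion, bits_per_weight)


if __name__ == "__main__":
    for name, (params, bpw, measured) in MODELS.items():
        ceiling = generation_ceiling(params, bpw)
        print(f"{name}: ceiling ~{ceiling:.1f} tok/s, measured {measured} tok/s")
```

Under these assumptions, the ceiling for Llama3 70B Q4_K_M is about 9.3 tokens/second, and each measured figure in the two tables falls below its estimated ceiling, which is consistent with generation being limited mainly by how many bytes must be read per token.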

Practical Recommendations: Use Cases and Workarounds

Although Llama3 70B's performance on M3 Max may not be ideal for real-time applications, it can still be suitable for certain use cases:

- Offline Text Generation: Llama3 70B can generate long-form text or creative content offline, where speed is not a major concern.

- Research and Development: Researchers and developers may use Llama3 70B for experiments and testing, even with its slower generation speeds.

Workarounds for Improving Performance

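- Experiment with Different Quantization Levels: The quantization level trades output quality against speed and memory. A higher-precision format such as Q8_0 may improve output quality, but it will generally lower generation speed and grow the weights from roughly 43 GB to roughly 75 GB. F16 (roughly 140 GB) does not fit in the M3 Max's maximum of 128 GB of unified memory. Lower-bit formats can raise generation speed further, at a greater cost in quality.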

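- Utilize Hardware Acceleration: On the M3 Max, the accelerator to use is the integrated GPU, which llama.cpp (the tool behind the Q4_K_M and Q8_0 formats) drives through Apple's Metal API. If you run the model with llama.cpp, make sure all layers are offloaded to the GPU (its `--n-gpu-layers` option) rather than left on the CPU. If you have access to a machine with dedicated GPUs or other specialized hardware, running large models there can significantly improve performance.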

- Model Optimization: Explore techniques like pruning, knowledge distillation, and further quantization to optimize the Llama3 70B model for specific use cases and hardware.
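Memory is the other constraint when choosing a quantization level. The sketch below estimates the weight footprint of Llama3 70B at each format and checks it against a memory budget. The parameter count (about 70.6 billion) and bits per weight are approximations, and the 75% usable fraction is a hypothetical allowance for the operating system, other applications and the context cache, not a measured limit.

```python
PARAMS_BILLION = 70.6  # approximate Llama3 70B parameter count
# ASSUMED average bits per weight for each format
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_K_M": 4.85}


def weights_gb(params_billion, bits_per_weight):
    """Approximate size of the weights in GB (1 GB = 1e9 bytes)."""
    return params_billion * bits_per_weight / 8


def fits(ram_gb, params_billion, bits_per_weight, usable_fraction=0.75):
    """True if the weights fit in the (hypothetical) usable share of RAM."""
    return weights_gb(params_billion, bits_per_weight) <= ram_gb * usable_fraction


if __name__ == "__main__":
    ram_gb = 128  # largest M3 Max unified memory configuration
    for fmt, bpw in BITS_PER_WEIGHT.items():
        size = weights_gb(PARAMS_BILLION, bpw)
        ok = fits(ram_gb, PARAMS_BILLION, bpw)
        print(f"{fmt}: ~{size:.0f} GB of weights, fits in {ram_gb} GB: {ok}")
```

With 128 GB of RAM this reports about 141 GB of weights for F16 (does not fit), about 75 GB for Q8_0 (fits) and about 43 GB for Q4_K_M (fits).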

FAQ

What are the most common use cases for local LLMs?

Local LLMs offer a range of use cases, including:

- Offline Text Generation: Create content without an internet connection.

- Personalized Chatbots: Build chatbots that use your own data (for example, through fine-tuning or retrieval) while keeping it on your device.

- Code Completion: Generate code suggestions and complete code snippets.

- Data Analysis and Summarization: Analyze text data and generate summaries.

- Education and Learning: Use LLMs for language learning, research, and creative writing.

How do I choose the right LLM for my specific needs?

Choosing the right LLM depends on your specific requirements:

- Model Size: Larger models are more powerful but require more resources.

- Quantization: Lower-bit quantization (for example, Q4 instead of Q8 or F16) reduces model size and usually increases speed, but can reduce output quality.

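- Hardware: Make sure the model's weights, plus working memory for the context, fit in your device's RAM (on Apple silicon, unified memory). Memory bandwidth largely determines generation speed.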

- Use Case: Consider the intended application and prioritize features such as generation speed, accuracy, or specific capabilities.

What are some ways to optimize LLM performance on my device?

Here are some techniques for optimizing LLM performance:

- Quantization: Reduce the precision of numbers in the model to make it smaller and faster.

- Pruning: Remove unnecessary connections in the model to reduce its size and complexity.

- Knowledge Distillation: Train a smaller model to mimic the behavior of a larger model.

- Hardware Acceleration: Use dedicated GPUs or specialized hardware for faster processing.
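Pruning and knowledge distillation can be illustrated on toy data. The sketch below shows unstructured magnitude pruning (one common heuristic for deciding which connections are unnecessary) and the usual soft-target distillation loss: the KL divergence between temperature-softened teacher and student output distributions, scaled by the temperature squared. Function names are hypothetical; real pipelines apply these to tensors inside a training framework.

```python
import math


def magnitude_prune(weights, sparsity):
    """Zero out the `sparsity` fraction of weights with the smallest magnitudes."""
    k = int(len(weights) * sparsity)
    if k == 0:
        return list(weights)
    # Indices sorted by |w|; the first k are the ones to remove.
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    drop = set(order[:k])
    return [0.0 if i in drop else w for i, w in enumerate(weights)]


def softmax(logits, temperature=1.0):
    """Numerically stable softmax of logits divided by the temperature."""
    scaled = [x / temperature for x in logits]
    m = max(scaled)
    exps = [math.exp(x - m) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(student_logits, teacher_logits, temperature=2.0):
    """KL(teacher || student) on softened distributions, scaled by T^2."""
    p = softmax(teacher_logits, temperature)  # soft targets from the large model
    q = softmax(student_logits, temperature)  # predictions of the small model
    kl = sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)
    return kl * temperature ** 2


if __name__ == "__main__":
    print(magnitude_prune([0.1, -0.5, 0.05, 2.0], sparsity=0.5))
    print(distillation_loss([3.0, 2.0, 1.0], [1.0, 2.0, 3.0]))
```

During distillation this loss is minimized with respect to the student's parameters, typically alongside the ordinary loss on the true labels.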

Keywords

Llama3 70B, Apple M3 Max, local LLM, token generation speed, quantization, performance benchmarks, F16, Q4_K_M, Q8_0, GPU acceleration, model optimization, offline text generation, research and development.