Optimizing Llama3 70B for Apple M2 Ultra: A Step-by-Step Approach

Introduction

The world of large language models (LLMs) is rapidly evolving, and it's becoming more crucial than ever to optimize these powerful tools for specific hardware and tasks. Apple's M2 Ultra has emerged as a leading contender for harnessing the potential of LLMs like Llama3 70B, offering remarkable performance for both research and application development. This article will dive deep into optimizing Llama3 70B specifically for the M2 Ultra, revealing key insights and practical recommendations for developers seeking to maximize its capabilities.

Imagine a scenario where you could generate text, translate languages, or even create code, all within a matter of seconds, right on your powerful Apple M2 Ultra. That's the potential of optimized LLMs, and this article is your guide to unlocking this potential.

Performance Analysis: Token Generation Speed Benchmarks

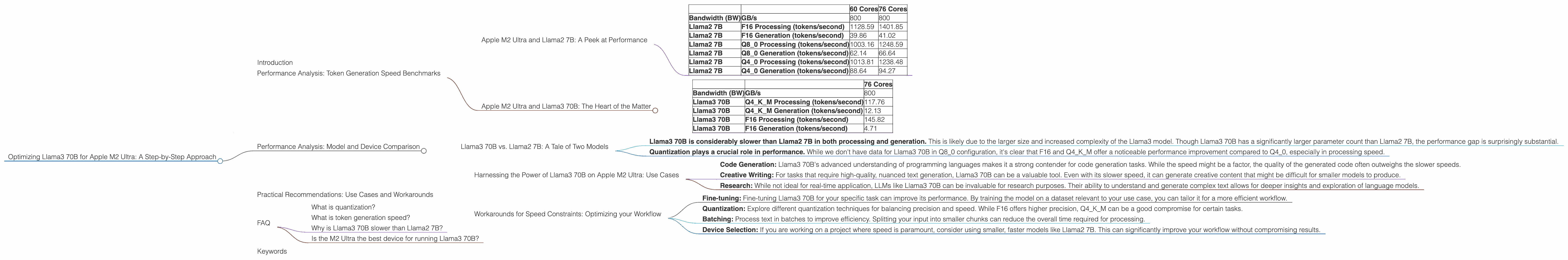

Apple M2 Ultra and Llama2 7B: A Peek at Performance

Before diving into Llama3 70B, let's look at a much smaller model from the previous generation, Llama2 7B, on the M2 Ultra. This provides a baseline for comparison. The M2 Ultra comes in two GPU configurations, one with 60 cores and another with 76 cores. In the table below, the 76-core configuration processes prompts roughly 22-24% faster, while generation speed improves by only about 3-7%.

| Item | Metric | 60 Cores | 76 Cores |
|---|---|---|---|
| Bandwidth (BW) | GB/s | 800 | 800 |
| Llama2 7B | F16 Processing (tokens/second) | 1128.59 | 1401.85 |
| Llama2 7B | F16 Generation (tokens/second) | 39.86 | 41.02 |
| Llama2 7B | Q8_0 Processing (tokens/second) | 1003.16 | 1248.59 |
| Llama2 7B | Q8_0 Generation (tokens/second) | 62.14 | 66.64 |
| Llama2 7B | Q4_0 Processing (tokens/second) | 1013.81 | 1238.48 |
| Llama2 7B | Q4_0 Generation (tokens/second) | 88.64 | 94.27 |

F16, Q8_0, and Q4_0 are weight formats of decreasing precision. F16 stores each weight as a 16-bit float, while Q8_0 and Q4_0 are quantized formats that use roughly 8 and 4 bits per weight. Quantization shrinks the model and, as the table shows, speeds up generation (41.02 tokens/second at F16 versus 94.27 at Q4_0 on 76 cores), at the cost of some precision.
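To make the memory side of this trade-off concrete, here is a minimal sketch that estimates how much space the weights alone would take in each format. The bits-per-weight values are assumptions for illustration (16 for F16, and about 8.5 and 4.5 for Q8_0 and Q4_0 once a per-block scale is counted), as are the nominal parameter counts of 7e9 and 70e9. The estimate ignores the KV cache and any runtime overhead.

```python
# Approximate bits stored per weight for each format (assumed values, see text above).
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}


def weight_gigabytes(n_params: float, fmt: str) -> float:
    """Estimate weight storage in GB (10^9 bytes), ignoring KV cache and overhead."""
    return n_params * BITS_PER_WEIGHT[fmt] / 8 / 1e9


# Nominal parameter counts; real checkpoints differ slightly.
for name, n_params in [("7B model", 7e9), ("70B model", 70e9)]:
    for fmt in BITS_PER_WEIGHT:
        print(f"{name} {fmt}: ~{weight_gigabytes(n_params, fmt):.1f} GB")
```

Under these assumptions a 70B model needs on the order of 140 GB at F16, which is why lower-precision formats matter so much for large models on a single machine.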

Note: The performance figures for Llama2 7B are provided for comparison with Llama3 70B. They demonstrate the performance difference between the two configurations of Apple M2 Ultra.

Apple M2 Ultra and Llama3 70B: The Heart of the Matter

Now, let's shift our focus to the main event - Llama3 70B. The performance data reveals some interesting insights for this model on the M2 Ultra:

| Item | Metric | 76 Cores |
|---|---|---|
| Bandwidth (BW) | GB/s | 800 |
| Llama3 70B | Q4_K_M Processing (tokens/second) | 117.76 |
| Llama3 70B | Q4_K_M Generation (tokens/second) | 12.13 |
| Llama3 70B | F16 Processing (tokens/second) | 145.82 |
| Llama3 70B | F16 Generation (tokens/second) | 4.71 |

*Llama3 70B shows a large gap between processing and generation speed.* This is common in LLMs: prompt processing handles many tokens in parallel, whereas generation produces one token at a time, and each step has to read the model's weights from memory. Generation therefore tends to be limited by memory bandwidth rather than raw compute.
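One rough way to reason about the generation figures is a back-of-the-envelope ceiling: if every generated token requires streaming all of the weights from memory once, then tokens per second cannot exceed bandwidth divided by model size. The sketch below applies that to the F16 row. The 800 GB/s and 4.71 tokens/second come from the table; the nominal 70e9 parameters and 2 bytes per weight are assumptions, and the model ignores the KV cache and other traffic.

```python
def generation_ceiling_tokens_per_s(bandwidth_gb_s: float, weights_gb: float) -> float:
    """Upper bound on tokens/second if every token streams all weights once."""
    return bandwidth_gb_s / weights_gb


BANDWIDTH_GB_S = 800.0            # from the table above
F16_WEIGHTS_GB = 70e9 * 2 / 1e9   # assumed: nominal 70e9 parameters, 2 bytes each
MEASURED_F16_GENERATION = 4.71    # tokens/second, from the table above

ceiling = generation_ceiling_tokens_per_s(BANDWIDTH_GB_S, F16_WEIGHTS_GB)
print(f"ceiling: {ceiling:.2f} tokens/s")
print(f"measured / ceiling: {MEASURED_F16_GENERATION / ceiling:.0%}")
```

Under these assumptions the ceiling is about 5.7 tokens/second, so the measured 4.71 is already around 80% of what the memory system could deliver. That suggests shrinking the weights is a more promising lever for generation speed than adding compute.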

Note: We do not have performance data for Llama3 70B on the 60 core M2 Ultra, so this information is not included in the table.

Performance Analysis: Model and Device Comparison

Llama3 70B vs. Llama2 7B: A Tale of Two Models

To understand the performance impact of the model itself, let's compare Llama3 70B with Llama2 7B on the M2 Ultra (76 core configuration). Here's what we find:

- Llama3 70B is considerably slower than Llama2 7B in both processing and generation. At F16, it processes prompts about 9.6 times slower (145.82 vs. 1401.85 tokens/second) and generates about 8.7 times slower (4.71 vs. 41.02 tokens/second). This is roughly in line with its tenfold larger parameter count.

- Quantization plays a crucial role in performance. We don't have Q8_0 or Q4_0 data for Llama3 70B, but the two formats we do have show a clear trade-off: Q4_K_M generates about 2.6 times faster than F16 (12.13 vs. 4.71 tokens/second), while F16 processes prompts about 24% faster (145.82 vs. 117.76 tokens/second).

Note: Even with F16 on both models, Llama2 7B processes prompts far faster than Llama3 70B (1401.85 vs. 145.82 tokens/second). This demonstrates the impact of model size on performance when the hardware and weight format are the same.
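The comparison can be reproduced directly from the benchmark tables. The short sketch below computes the speed ratios for the 76-core configuration; the numbers are copied from the tables, and the helper name `speed_ratio` is just an illustrative choice.

```python
# Tokens/second on the 76-core M2 Ultra, copied from the tables above.
LLAMA2_7B_F16 = {"processing": 1401.85, "generation": 41.02}
LLAMA3_70B_F16 = {"processing": 145.82, "generation": 4.71}
LLAMA3_70B_Q4_K_M = {"processing": 117.76, "generation": 12.13}


def speed_ratio(faster: dict, slower: dict) -> dict:
    """Return how many times faster `faster` is than `slower`, per phase."""
    return {phase: faster[phase] / slower[phase] for phase in faster}


# Effect of model size at the same precision.
model_gap = speed_ratio(LLAMA2_7B_F16, LLAMA3_70B_F16)
# Effect of quantization on the same model.
quant_gap = speed_ratio(LLAMA3_70B_Q4_K_M, LLAMA3_70B_F16)

print({phase: round(value, 2) for phase, value in model_gap.items()})
print({phase: round(value, 2) for phase, value in quant_gap.items()})
```

A ratio below 1 in `quant_gap` means the quantized model is slower in that phase, which is what happens for prompt processing here.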

Practical Recommendations: Use Cases and Workarounds

Harnessing the Power of Llama3 70B on Apple M2 Ultra: Use Cases

Despite the performance differences, Llama3 70B on the M2 Ultra remains a valuable resource for various tasks. The key is to identify the specific scenarios where its strengths can shine. Here are some suggested use cases:

- Code Generation: Llama3 70B's advanced understanding of programming languages makes it a strong contender for code generation tasks. While the speed might be a factor, the quality of the generated code often outweighs the slower speeds.

- Creative Writing: For tasks that require high-quality, nuanced text generation, Llama3 70B can be a valuable tool. Even with its slower speed, it can generate creative content that might be difficult for smaller models to produce.

- Research: While not ideal for real-time application, LLMs like Llama3 70B can be invaluable for research purposes. Their ability to understand and generate complex text allows for deeper insights and exploration of language models.

Workarounds for Speed Constraints: Optimizing your Workflow

While the M2 Ultra offers impressive performance, the speed constraints of Llama3 70B can be a challenge. Here are some strategies for optimizing your experience:

- Fine-tuning: Fine-tuning Llama3 70B on a dataset relevant to your use case can improve output quality for that task. It does not raise tokens per second, but it can reduce the prompt length and the number of retries you need, which makes the overall workflow more efficient.

- Quantization: Explore different quantization formats to balance precision and speed. F16 offers the highest precision, but Q4_K_M generates tokens about 2.6 times faster in the benchmarks above and can be a good compromise for many tasks.

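- Batching: If you have many independent prompts, process them in batches rather than one at a time. Batching can raise total throughput because the cost of reading the model's weights is shared across requests, although it does not shorten the latency of any single response.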

- Model Selection: If you are working on a project where speed is paramount, consider a smaller, faster model such as Llama2 7B. Expect some loss in output quality in exchange for the speedup.

Note: The choice between speed and quality is a trade-off that must be carefully considered based on the specific use case.

FAQ

What is quantization?

Quantization is a technique used to reduce the size of a model. It involves representing the model's weights (the numbers that determine the model's behavior) with fewer bits. Think of it like using a ruler with coarser markings: you lose some precision, but each measurement takes less space to write down.
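As a simplified illustration of the idea, the sketch below quantizes one small block of weights to 8-bit integers with a single shared scale and then restores them. This is not the exact on-disk layout of any particular format; the block of eight made-up weights and the function names are assumptions chosen for readability.

```python
def quantize_block(weights: list[float]) -> tuple[float, list[int]]:
    """Map one block of floats to integers in [-127, 127] plus one shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    return scale, [round(w / scale) for w in weights]


def dequantize_block(scale: float, quants: list[int]) -> list[float]:
    """Recover approximate floats from the integers and the shared scale."""
    return [q * scale for q in quants]


# Made-up example weights; real blocks come from the model's tensors.
weights = [0.12, -0.53, 0.98, -0.07, 0.31, -0.88, 0.45, 0.02]
scale, quants = quantize_block(weights)
restored = dequantize_block(scale, quants)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print(quants)
print(f"largest round-trip error: {max_error:.4f}")
```

Each weight now needs 8 bits instead of 16 or 32, and the round-trip error is bounded by half of the scale. Formats with fewer bits follow the same pattern with a coarser grid and therefore a larger error.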

What is token generation speed?

Token generation speed refers to how quickly a large language model can produce new tokens (words or pieces of words). It's measured in tokens per second. A higher token generation speed means the model can generate text faster.
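To see what these speeds mean in practice, here is a minimal sketch that estimates the total time for one request as prompt processing time plus generation time. The speeds are taken from the Llama3 70B table for the 76-core M2 Ultra; the token counts (a 1000-token prompt and a 500-token reply) are hypothetical, and the estimate assumes both speeds stay constant regardless of context length.

```python
def response_seconds(prompt_tokens: int, output_tokens: int,
                     processing_tps: float, generation_tps: float) -> float:
    """Estimate end-to-end time: read the prompt, then generate the reply."""
    return prompt_tokens / processing_tps + output_tokens / generation_tps


# Speeds from the Llama3 70B table; token counts are hypothetical.
q4_k_m = response_seconds(1000, 500, processing_tps=117.76, generation_tps=12.13)
f16 = response_seconds(1000, 500, processing_tps=145.82, generation_tps=4.71)

print(f"Q4_K_M: {q4_k_m:.1f} s, F16: {f16:.1f} s")
```

For this example the quantized model finishes in roughly 50 seconds versus about 113 seconds at F16, because generation time dominates once the reply is more than a few dozen tokens long.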

Why is Llama3 70B slower than Llama2 7B?

Llama3 70B has roughly ten times as many parameters as Llama2 7B, so every token requires more computation and more data read from memory. This slows both processing and generation.

Is the M2 Ultra the best device for running Llama3 70B?

While the M2 Ultra offers excellent performance, it's not a one-size-fits-all solution. The best device for running Llama3 70B depends on your specific needs and budget. Consider factors like speed, memory, and cost when making your decision.

Keywords

Apple M2 Ultra, Llama3 70B, LLMs, large language models, performance, optimization, benchmarks, token generation speed, quantization, F16, Q8_0, Q4_0, Q4_K_M, processing, generation, use cases, workarounds, fine-tuning, batching, code generation, creative writing, research, model selection, FAQ, hardware, software, developer, geek, AI, artificial intelligence, natural language processing, NLP