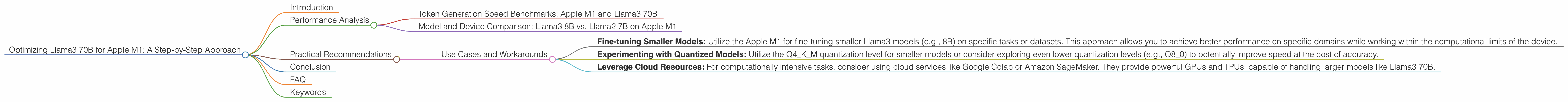

Optimizing Llama3 70B for Apple M1: A Step-by-Step Approach

Introduction

Large Language Models (LLMs) are attracting a lot of attention, and rightfully so. These models can generate human-like text, translate languages, write creative content, and answer questions. But running them locally, especially the larger ones like Llama3 70B, is resource-intensive.

This article looks at what can be said about running Llama3 70B on the popular Apple M1 chip. No direct benchmarks are available for that pairing, so we use the token generation speeds of smaller models at the same quantization level as a reference point and offer practical recommendations for working within the M1's limits.

Whether you're a developer looking to build a custom chatbot or a curious enthusiast exploring the world of LLMs, this guide will equip you with the knowledge to unlock the potential of Llama3 70B on your Apple M1 device.

Performance Analysis

Token Generation Speed Benchmarks: Apple M1 and Llama3 70B

Token generation speed is a crucial metric for evaluating the performance of an LLM. It measures how quickly the model can produce output text, impacting the user experience.
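As a minimal sketch of how this metric can be measured, the snippet below times a token stream and divides the token count by the elapsed time. The `fake_model` generator is a hypothetical stand-in for a real model, not part of any library; note that this simple version also counts the time to the first token, which dedicated benchmarks usually report separately.

```python
import time
from typing import Callable, Iterable


def tokens_per_second(stream_tokens: Callable[[], Iterable[str]]) -> float:
    """Consume a token stream and return generated tokens per second."""
    start = time.perf_counter()
    count = sum(1 for _ in stream_tokens())
    elapsed = time.perf_counter() - start
    return count / elapsed if elapsed > 0 else float("inf")


def fake_model() -> Iterable[str]:
    """Hypothetical stand-in for a model: yields 20 tokens, about 5 ms apart."""
    for i in range(20):
        time.sleep(0.005)
        yield f"tok{i}"


rate = tokens_per_second(fake_model)
print(f"{rate:.1f} tokens/s")
```

To benchmark a real model, `fake_model` would be replaced with a function that yields tokens from whatever runtime is in use.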

Unfortunately, there is no data available on the performance of Llama3 70B on the Apple M1 chipset. The most likely reason is memory: the M1 ships with at most 16 GB of unified memory, while the weights of a 70-billion-parameter model need about 35 GB even at 4 bits per weight (70 billion × 0.5 bytes).
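The size argument can be made concrete with a rough estimate of the weight footprint at different quantization levels. This is a sketch under stated assumptions: the bits-per-weight figure for Q4_K_M is an approximate average (mixed-precision formats vary by model), the parameter count is the nominal 70 billion, and memory for the KV cache and the operating system is ignored.

```python
PARAMS_70B = 70e9        # nominal parameter count of Llama3 70B
M1_MAX_MEMORY_GB = 16    # largest unified-memory option on the base M1

# Approximate bits per weight. The Q4_K_M value is an assumed average;
# Q8_0 stores 32 8-bit weights plus one 16-bit scale per block (8.5 bits).
BITS_PER_WEIGHT = {"Q4_K_M": 4.85, "Q8_0": 8.5, "F16": 16.0}


def weight_size_gb(params: float, bits_per_weight: float) -> float:
    """Estimated size of the model weights in gigabytes (10^9 bytes)."""
    return params * bits_per_weight / 8 / 1e9


sizes = {name: weight_size_gb(PARAMS_70B, bpw) for name, bpw in BITS_PER_WEIGHT.items()}

for name, gb in sizes.items():
    verdict = "fits" if gb <= M1_MAX_MEMORY_GB else "does not fit"
    print(f"{name}: ~{gb:.1f} GB, {verdict} in {M1_MAX_MEMORY_GB} GB")
```

Under these assumptions, none of the three levels comes close to fitting in 16 GB.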

However, we can gain insights from the available data for smaller models, like Llama2 7B and Llama3 8B, to understand how the M1 might perform with Llama3 70B.
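One hedged way to extrapolate is to assume that generation speed falls in proportion to model size at the same quantization level, which is a common rule of thumb when decoding is limited by memory bandwidth. The sketch below applies that assumption to the Llama3 8B figure reported in the comparison that follows (9.72 tokens per second). It also pretends the 70B model would fit in memory, which it would not on an M1, so the result is an optimistic upper bound rather than a prediction.

```python
def extrapolate_tokens_per_second(
    measured_tps: float, measured_params_b: float, target_params_b: float
) -> float:
    """Scale a measured speed inversely with parameter count (assumed model)."""
    return measured_tps * measured_params_b / target_params_b


# Llama3 8B at Q4_K_M on the M1, scaled to a nominal 70B parameters.
estimate = extrapolate_tokens_per_second(9.72, 8, 70)
print(f"Optimistic Llama3 70B estimate: {estimate:.2f} tokens/s")
```

Even under this favorable assumption the estimate is only about one token per second.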

Model and Device Comparison: Llama3 8B vs. Llama2 7B on Apple M1

Looking at the performance of Llama3 8B, we see that the Apple M1 achieves a token generation speed of 9.72 tokens per second using the Q4_K_M quantization level. This is roughly 31.5% slower than Llama2 7B, which manages 14.19 tokens per second at the same quantization level.

However, it's important to note that these comparisons are not directly equivalent. They involve different model sizes (8B vs. 7B) and potential differences in implementation and optimization. It's essential to consider these factors when interpreting the results.
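A short calculation shows why size alone does not account for the gap. It compares the measured slowdown with the slowdown that the parameter counts alone would predict, assuming (as a simplification) that speed is inversely proportional to model size and using the nominal 8B and 7B figures.

```python
LLAMA3_8B_TPS = 9.72   # tokens per second, Q4_K_M on Apple M1
LLAMA2_7B_TPS = 14.19  # tokens per second, Q4_K_M on Apple M1

# Measured slowdown of Llama3 8B relative to Llama2 7B.
measured_slowdown = (LLAMA2_7B_TPS - LLAMA3_8B_TPS) / LLAMA2_7B_TPS

# Slowdown expected if speed were inversely proportional to parameter count.
size_only_slowdown = 1 - 7 / 8

print(f"Measured slowdown:  {measured_slowdown:.1%}")
print(f"Size-only slowdown: {size_only_slowdown:.1%}")
```

The measured gap is more than twice what the size difference predicts under this assumption, which is consistent with implementation and optimization differences playing a role.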

Practical Recommendations

While running Llama3 70B on the Apple M1 might currently be challenging due to limited data and resource constraints, we can still provide valuable insights and recommendations.

Use Cases and Workarounds

Since Llama3 70B itself is out of reach on the Apple M1, here are some practical ways to work within the device's limits:

- Fine-tuning Smaller Models: Utilize the Apple M1 for fine-tuning smaller Llama3 models (e.g., 8B) on specific tasks or datasets. This approach allows you to achieve better performance on specific domains while working within the computational limits of the device.

- Experimenting with Quantized Models: Use the Q4_K_M quantization level for smaller models, or explore lower-bit levels (e.g., Q3_K_M or Q2_K) to reduce memory use and potentially improve speed at the cost of accuracy. Note that Q8_0 is a higher-precision level than Q4_K_M: it needs more memory and is usually slower.

- Leverage Cloud Resources: For computationally intensive tasks, consider using cloud services like Google Colab or Amazon SageMaker. They provide powerful GPUs and TPUs, capable of handling larger models like Llama3 70B.

Conclusion

The Apple M1 is an impressive chip with excellent performance for various tasks, but running large models like Llama3 70B locally might pose a significant challenge. While the lack of specific benchmarks for Llama3 70B on the M1 limits our immediate analysis, we can still glean insights from the performance of smaller models and offer practical recommendations.

The journey of local LLM deployment is constantly evolving. As hardware and software advancements continue, we can expect to see improved performance and accessibility for larger models on devices like the Apple M1.

FAQ

Q: What is quantization and how does it affect LLM performance?

A: Quantization is a technique used to reduce the size of LLM models by representing weights and activations with fewer bits. This can lead to faster model loading and execution, but it might come at the cost of accuracy.
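The trade-off can be illustrated with a toy example. The sketch below applies simple symmetric quantization with one shared scale to a block of random weights and compares the reconstruction error at 4 and 8 bits. It is an illustration only and not the actual scheme used by formats such as Q4_K_M; all function names are hypothetical.

```python
import random


def quantize_block(values, bits):
    """Map floats to signed integers that share one scale factor."""
    qmax = 2 ** (bits - 1) - 1                       # 7 for 4 bits, 127 for 8 bits
    scale = max(abs(v) for v in values) / qmax or 1.0  # avoid a zero scale
    codes = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return codes, scale


def dequantize_block(codes, scale):
    """Recover approximate floats from the integer codes."""
    return [c * scale for c in codes]


def mean_abs_error(values, bits):
    """Average absolute difference between original and restored values."""
    codes, scale = quantize_block(values, bits)
    restored = dequantize_block(codes, scale)
    return sum(abs(a - b) for a, b in zip(values, restored)) / len(values)


random.seed(0)
weights = [random.gauss(0.0, 1.0) for _ in range(32)]  # stand-in for real weights

err4 = mean_abs_error(weights, 4)
err8 = mean_abs_error(weights, 8)
print(f"4-bit mean error: {err4:.4f}")
print(f"8-bit mean error: {err8:.4f}")
```

The 4-bit version stores half as many bits per weight as the 8-bit version but restores the values less precisely.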

Q: What are the different quantization levels?

A: Labels such as Q4_K_M, Q8_0, and F16 indicate how many bits are used to store each model weight. Lower-bit levels (e.g., Q4_K_M) reduce the memory footprint and may increase speed. Q8_0 uses about 8 bits per weight and stays closer to the original model, while F16 keeps 16-bit weights for the highest accuracy at the largest memory cost.

Q: What are the benefits of running LLMs locally?

A: Running LLMs locally offers privacy, reduced latency, and offline access, enabling applications like personal assistants, chatbots, and interactive storytelling without relying on internet connectivity.

Q: Is the Apple M1 good for running other LLMs?

A: The Apple M1 can handle smaller LLMs efficiently. For models like Llama2 7B, the M1 offers reasonable performance, especially when using quantization. However, for very large models like Llama3 70B, the M1 might be insufficient.

Q: What are some alternative devices for running LLMs?

A: Modern GPUs with high memory bandwidth and compute power, such as those found in gaming PCs or dedicated AI workstations, are more suited for running larger LLMs like Llama3 70B. Cloud TPUs can also provide substantial processing power for demanding LLM workloads.

Keywords

Llama3 70B, Apple M1, LLM, Token Generation Speed, Quantization, Performance Analysis, Model Comparison, Use Cases, Workarounds, Cloud Computing, GPU, TPU, Fine-tuning, Optimization, Deep Dive, Local Deployment, AI, Natural Language Processing.