Optimizing Llama2 7B for Apple M3 Pro: A Step-by-Step Approach

Introduction

The world of large language models (LLMs) is evolving rapidly, and with it the need for hardware that can run these models locally. Apple's M3 Pro chip, with its strong performance and power efficiency, has become a popular choice for developers who want to explore LLMs on their own machines. In this deep dive, we look at how the Llama2 7B model performs on the M3 Pro and at the techniques that make it run faster and more efficiently. Use it as your guide to getting the most out of your M3 Pro for local LLM experimentation.

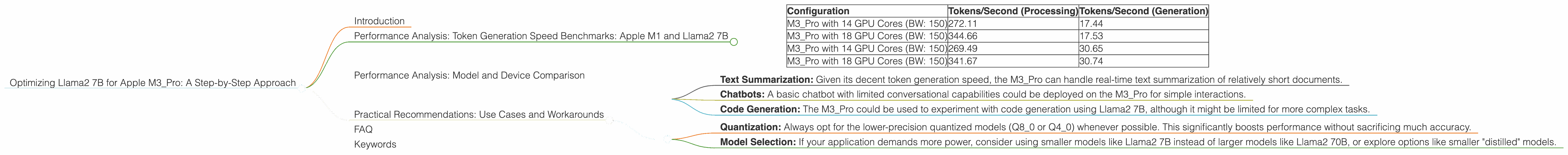

Performance Analysis: Token Generation Speed Benchmarks: Apple M3 Pro and Llama2 7B

Let's dive into the heart of the matter: the token generation speed benchmarks. These benchmarks are speed tests for your LLM, showing how many tokens (the building blocks of text) it handles per second in two phases: processing, where the model reads the prompt, and generation, where it produces new tokens one at a time. Generation speed is what users feel most directly in real-time applications like chatbots or text generation tools.

| Configuration | Memory bandwidth | Quantization | Prompt processing (tokens/s) | Generation (tokens/s) |
|---|---|---|---|---|
| M3 Pro, 14 GPU cores | 150 GB/s | Q8_0 | 272.11 | 17.44 |
| M3 Pro, 18 GPU cores | 150 GB/s | Q8_0 | 344.66 | 17.53 |
| M3 Pro, 14 GPU cores | 150 GB/s | Q4_0 | 269.49 | 30.65 |
| M3 Pro, 18 GPU cores | 150 GB/s | Q4_0 | 341.67 | 30.74 |
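To turn these figures into wall-clock time, a single request can be approximated as prompt processing followed by token-by-token generation. The sketch below is a rough estimate under that two-phase assumption only: it ignores model loading time and the gradual slowdown as the context grows. It uses the 14-core figures of 269.49 and 30.65 tokens/s; the function name `estimate_latency` and the 1,000-token prompt and 250-token answer are hypothetical.

```python
def estimate_latency(prompt_tokens: int, output_tokens: int,
                     pp_tps: float, tg_tps: float) -> float:
    """Rough wall-clock seconds for one request (assumes two sequential phases)."""
    prefill_s = prompt_tokens / pp_tps   # whole prompt is processed in batches
    decode_s = output_tokens / tg_tps    # new tokens are produced one at a time
    return prefill_s + decode_s


# 14-GPU-core figures (269.49 / 30.65 tokens/s); request sizes are hypothetical.
seconds = estimate_latency(prompt_tokens=1000, output_tokens=250,
                           pp_tps=269.49, tg_tps=30.65)
print(f"{seconds:.1f} s")
```

With these inputs the estimate comes to about 11.9 s, of which roughly 8.2 s is generation, even though the prompt is four times longer than the answer. That is why the generation column matters most for interactive use.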

Key Observations:

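- Quantization Matters: Q8_0 and Q4_0 store the model's weights at lower precision (roughly 8 and 4 bits per weight). Q4_0 generates tokens about 1.75x faster than Q8_0 (30.65 vs. 17.44 tokens/s on the 14-core GPU) because every generated token requires reading the full set of weights from memory, and Q4_0's weights are about half the size. Prompt processing speed is nearly identical for the two formats. The table contains no F16 results, so it cannot show how much either format gains over F16.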

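- GPU Core Impact: Going from 14 to 18 GPU cores raises prompt processing speed by about 27% for both Q8_0 and Q4_0, close to the 29% increase in core count. Generation speed barely moves (17.44 to 17.53 and 30.65 to 30.74 tokens/s) because both configurations share the same 150 GB/s memory bandwidth, which is what limits generation.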
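The ratios behind these observations can be recomputed directly from the table. This sketch assumes the two rows at about 17 tokens/s are Q8_0 and the two at about 31 tokens/s are Q4_0, the ordering consistent with Q4_0's smaller weights; the names `RESULTS`, `core_scaling`, and `quant_speedup` are illustrative only.

```python
# Table figures as (prompt processing, generation) in tokens/s.
# ASSUMPTION: the ~17 tokens/s rows are Q8_0 and the ~31 tokens/s rows are Q4_0.
RESULTS = {
    ("Q8_0", 14): (272.11, 17.44),
    ("Q8_0", 18): (344.66, 17.53),
    ("Q4_0", 14): (269.49, 30.65),
    ("Q4_0", 18): (341.67, 30.74),
}


def core_scaling(quant: str) -> tuple[float, float]:
    """Speedup from 14 to 18 GPU cores as (processing, generation)."""
    pp14, tg14 = RESULTS[(quant, 14)]
    pp18, tg18 = RESULTS[(quant, 18)]
    return pp18 / pp14, tg18 / tg14


def quant_speedup(cores: int) -> float:
    """Generation speedup of Q4_0 over Q8_0 at a given GPU core count."""
    return RESULTS[("Q4_0", cores)][1] / RESULTS[("Q8_0", cores)][1]


print(f"core count ratio: x{18 / 14:.2f}")
for quant in ("Q8_0", "Q4_0"):
    pp, tg = core_scaling(quant)
    print(f"{quant}: processing x{pp:.2f}, generation x{tg:.3f}")
for cores in (14, 18):
    print(f"{cores} cores: Q4_0 generates x{quant_speedup(cores):.2f} faster than Q8_0")
```

Processing scales by about 1.27x against a 1.29x increase in cores, while generation changes by well under 1%. Switching from Q8_0 to Q4_0, by contrast, speeds generation by about 1.75x, which fits the picture of generation being limited by memory traffic rather than GPU compute.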

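Let's put these numbers into perspective. As a rule of thumb, a token is roughly three quarters of an English word, so generating 17 to 31 tokens per second produces about 13 to 23 words per second, several times faster than most people read. Prompt processing at around 270 to 345 tokens per second means a prompt of a few hundred words is read in roughly a second. That's fast, but it's not quite the speed of light (or of a supercomputer).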

Performance Analysis: Model and Device Comparison

While the Apple M3 Pro is a powerful device, it's useful to understand how it compares with other devices and other LLMs.

Note: This analysis covers only the Llama2 7B model on the M3 Pro; benchmark data for other LLMs and devices is not included.

Practical Recommendations: Use Cases and Workarounds

Knowing the strengths and limitations of the Apple M3 Pro and the Llama2 7B model, let's explore some practical use cases and workarounds:

Use Cases:

- Text Summarization: With generation speeds of about 17 to 31 tokens/s and prompt processing of about 270 to 345 tokens/s, the M3 Pro can summarize relatively short documents in near real time.

- Chatbots: A basic chatbot for simple interactions can run on the M3 Pro. Generation is comfortably faster than reading speed, but a 7B model's conversational capabilities are limited.

- Code Generation: The M3 Pro is suitable for experimenting with code generation using Llama2 7B, although the model may struggle with more complex tasks.

Workarounds:

- Quantization: Prefer the quantized models (Q8_0 or Q4_0) whenever possible. Both boost performance without sacrificing much accuracy; on the M3 Pro, Q4_0 generates tokens about 1.75x faster than Q8_0, while Q8_0 stays closer to the original model's output quality.

- Model Selection: If your application needs faster responses, stick with smaller models like Llama2 7B rather than larger ones like Llama2 70B, or explore smaller "distilled" models.
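Both workarounds follow from a back-of-the-envelope model: if every generated token must stream all of the weights from memory once, generation can be no faster than memory bandwidth divided by weight size. The sketch below is an upper-bound estimate under that assumption. It takes the table's "BW: 150" as 150 GB/s, uses approximate parameter counts (about 6.74 billion for Llama2 7B and about 69 billion for Llama2 70B), and uses llama.cpp-style block sizes in which each block of 32 weights carries one 16-bit scale. It ignores KV-cache traffic and any tensors a real model file keeps at higher precision. The helper names are hypothetical.

```python
# Bits per weight, assuming blocks of 32 weights plus one 16-bit scale per block.
BITS_PER_WEIGHT = {
    "F16": 16.0,
    "Q8_0": (16 + 32 * 8) / 32,  # 8.5 bits per weight
    "Q4_0": (16 + 32 * 4) / 32,  # 4.5 bits per weight
}


def weight_gb(n_params: float, fmt: str) -> float:
    """Approximate weight size in gigabytes (1 GB = 1e9 bytes)."""
    return n_params * BITS_PER_WEIGHT[fmt] / 8 / 1e9


def generation_ceiling(n_params: float, fmt: str, bandwidth_gbs: float) -> float:
    """Upper bound on tokens/s if each token reads every weight once from memory."""
    return bandwidth_gbs / weight_gb(n_params, fmt)


BANDWIDTH_GBS = 150.0  # assumed unit for the table's "BW: 150"
for label, n_params in (("Llama2 7B", 6.74e9), ("Llama2 70B", 69e9)):  # approximate counts
    for fmt in ("F16", "Q8_0", "Q4_0"):
        size = weight_gb(n_params, fmt)
        ceiling = generation_ceiling(n_params, fmt, BANDWIDTH_GBS)
        print(f"{label} {fmt}: ~{size:.1f} GB, at most ~{ceiling:.1f} tokens/s")
```

For Llama2 7B this gives ceilings of about 20.9 tokens/s for Q8_0 and 39.6 tokens/s for Q4_0; the measured 17.44 and 30.65 tokens/s sit at roughly 80% of those bounds, which supports the bandwidth explanation. For Llama2 70B, even Q4_0 needs about 39 GB of weights, more than the 36 GB of the largest M3 Pro memory configuration, with a ceiling under 4 tokens/s.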

FAQ

Q: What is quantization and how does it affect performance?

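Quantization is a technique that reduces the precision of the numbers used to represent the model's weights (and, in some implementations, its activations). It's similar to using fewer decimal places to represent a numerical value. Formats like Q8_0 and Q4_0 store roughly 8 and 4 bits per weight instead of 16, which shrinks the memory footprint, the computational requirements, and the amount of data read for each generated token. On the M3 Pro, the gain shows up mainly as faster generation.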
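To make "fewer decimal places" concrete, here is a simplified sketch of block quantization in the spirit of Q8_0 and Q4_0. It is not llama.cpp's actual code: it uses symmetric absmax scaling with one float scale per block, a block of 8 hypothetical weights instead of 32, and Python floats instead of a 16-bit scale. The function names are illustrative.

```python
def quantize_block(block: list[float], bits: int) -> tuple[float, list[int]]:
    """Symmetric absmax quantization of one block to signed `bits`-bit integers."""
    qmax = 2 ** (bits - 1) - 1                 # 127 for 8-bit, 7 for 4-bit
    amax = max(abs(x) for x in block)
    scale = amax / qmax if amax > 0 else 1.0   # one scale shared by the whole block
    # Round each weight to the nearest integer step and clamp to the signed range.
    q = [max(-qmax - 1, min(qmax, round(x / scale))) for x in block]
    return scale, q


def dequantize_block(scale: float, q: list[int]) -> list[float]:
    """Recover approximate weights by multiplying the integers by the block scale."""
    return [scale * v for v in q]


weights = [0.12, -0.53, 0.91, -0.07, 0.33, -0.88, 0.05, 0.64]  # hypothetical weights
for bits in (8, 4):
    scale, q = quantize_block(weights, bits)
    restored = dequantize_block(scale, q)
    max_err = max(abs(a - b) for a, b in zip(weights, restored))
    print(f"{bits}-bit: q={q}, max error={max_err:.4f}")
```

The 4-bit version keeps only 16 levels per block, so its rounding error (at most half the scale) is far larger than the 8-bit version's, which is the accuracy cost traded for halving the data read per token.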

Q: Can I run other LLMs on the Apple M3 Pro?

Yes. However, performance will vary with the size and architecture of the model.

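Q: Does the Apple M3 Pro have enough power for advanced LLM applications?

For demanding applications like complex dialogue generation or large-scale text generation, the M3 Pro can be limiting, especially with larger models: generation speed falls roughly in proportion to the size of the model's weights, since every generated token reads all of them from memory.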

Q: Where can I find more information about optimizing LLMs?

There's a wealth of information available! Start with the llama.cpp project's documentation, explore resources like Hugging Face, and delve into the technical details in research papers.

Keywords

Apple M3 Pro, Llama2 7B, LLM, Token Generation Speed, Quantization, GPU Cores, Performance, Benchmarks, Optimization, Use Cases, Workarounds, Chatbots, Text Summarization, Code Generation