Optimizing Llama2 7B for Apple M3 Max: A Step-by-Step Approach

Introduction

The world of Large Language Models (LLMs) is rapidly evolving, with new models and hardware constantly pushing the boundaries of what's possible. For developers and researchers, running these models locally on powerful devices like the Apple M3 Max is becoming increasingly attractive. This article dives deep into the performance optimization of Llama2 7B on the M3 Max, exploring different quantization levels and their impact on token generation speeds. We'll analyze benchmark results and provide practical recommendations to help you get the most out of your local LLM setup.

Think of LLMs as advanced AI brains capable of understanding and generating human-like text. But like any brain, they need powerful hardware to function efficiently. The M3 Max, with its impressive compute capabilities, is a perfect candidate for running LLMs locally, offering a balance of performance and portability.

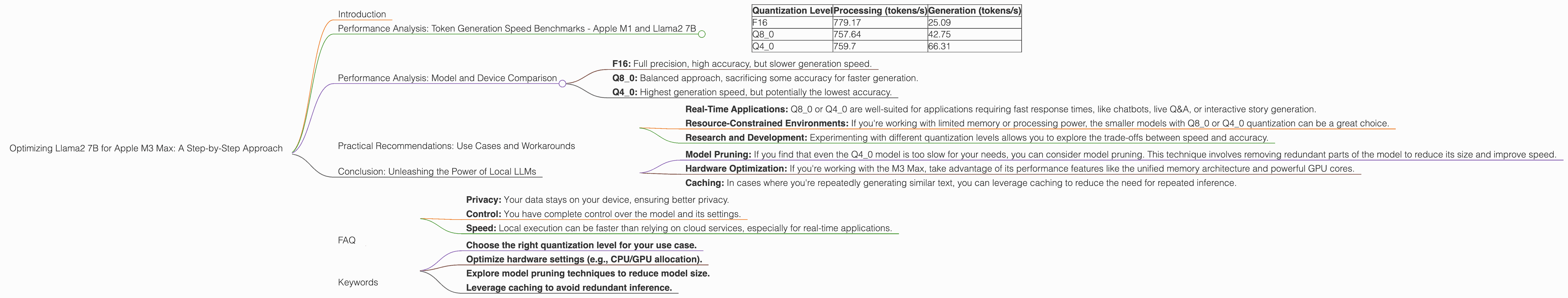

Performance Analysis: Token Generation Speed Benchmarks - Apple M3 Max and Llama2 7B

Let's get down to the nitty-gritty and analyze the raw performance numbers. The following table shows the prompt processing and token generation speeds for Llama2 7B on the M3 Max, measured in tokens per second (tokens/s):

| Quantization Level | Processing (tokens/s) | Generation (tokens/s) |
|---|---|---|
| F16 | 779.17 | 25.09 |
| Q8_0 | 757.64 | 42.75 |
| Q4_0 | 759.7 | 66.31 |

Key Observations:

- Processing Speed: Prompt processing stays nearly flat across all quantization levels, from 757.64 to 779.17 tokens/s (a spread of under 3%). Quantization has little effect on how quickly the M3 Max ingests a prompt.

- Generation Speed: Generation speed varies significantly with the quantization level. F16 is the slowest at 25.09 tokens/s, Q8_0 reaches 42.75 tokens/s (about 1.7x F16), and Q4_0 is the fastest at 66.31 tokens/s (about 2.6x F16). Fewer bits per weight means faster generation; the trade-off is between speed and accuracy.
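To make the relative gains concrete, the short sketch below divides each generation speed from the table by the F16 baseline. The function name `speedup_over_baseline` is made up for this illustration.

```python
# Generation speeds (tokens/s) copied from the benchmark table.
generation_tps = {"F16": 25.09, "Q8_0": 42.75, "Q4_0": 66.31}


def speedup_over_baseline(speeds, baseline="F16"):
    """Return each level's generation speed as a multiple of the baseline."""
    base = speeds[baseline]
    return {level: tps / base for level, tps in speeds.items()}


speedups = speedup_over_baseline(generation_tps)
for level, factor in speedups.items():
    print(f"{level}: {factor:.2f}x the F16 generation speed")
```

The ratios only describe this one benchmark; other models or prompt lengths may scale differently.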

Quantization Explained:

Think of quantization as compressing the model's internal representation to reduce its memory footprint. F16 stores each weight in 16 bits, Q8_0 in roughly 8 bits, and Q4_0 in roughly 4 bits. The lower the bit count, the smaller the model and, typically, the faster the inference, but accuracy may decrease.
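A rough back-of-the-envelope estimate shows how much the bit count matters for memory. The sketch below assumes exactly 7 billion weights (taking "7B" at face value) and the nominal 16, 8, and 4 bits per weight. Real model files will differ somewhat, since the true parameter count is not exactly 7 billion and quantized formats store some extra metadata.

```python
# Assumption: "7B" taken literally as 7 billion weights.
NOMINAL_PARAMS = 7_000_000_000

# Assumption: nominal bits per weight, ignoring any per-format overhead.
bits_per_weight = {"F16": 16, "Q8_0": 8, "Q4_0": 4}


def weight_size_gb(params, bits):
    """Approximate weight storage in gigabytes (1 GB = 1e9 bytes)."""
    return params * bits / 8 / 1e9


sizes = {
    level: weight_size_gb(NOMINAL_PARAMS, bits)
    for level, bits in bits_per_weight.items()
}
for level, gb in sizes.items():
    print(f"{level}: about {gb:.1f} GB of weights")
```

Under these assumptions, halving the bits per weight halves the memory the weights occupy.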

Analogies:

Imagine you're packing for a trip. You have a full-sized suitcase (F16), a carry-on bag (Q8_0), and a tiny backpack (Q4_0). The smaller the bag, the easier it is to travel, but the less you can carry. Similarly, more aggressively quantized models are smaller and faster but may lose some accuracy compared to the F16 original.

Performance Analysis: Model and Device Comparison

Ideally we would compare the M3 Max against other devices and other LLMs. No such data is available here, so this section compares the quantization levels of Llama2 7B on the M3 Max only.

Comparing Quantization Levels:

Looking at the token generation speeds for Llama2 7B on the M3 Max, we see a clear trend:

- F16: Unquantized 16-bit weights, the highest accuracy of the three, but the slowest generation speed.

- Q8_0: Balanced approach, sacrificing some accuracy for faster generation.

- Q4_0: Highest generation speed, but potentially the lowest accuracy.

Trade-offs:

The choice of quantization level depends on your specific use case. If accuracy is paramount, stick with F16. If you prioritize speed, Q8_0 or Q4_0 will be your allies.
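One way to turn this rule of thumb into code is to pick the highest-precision level that still meets a target generation speed. The sketch below uses the benchmark figures from this article; the helper `pick_quantization` and the example thresholds are hypothetical.

```python
# Levels ordered from highest to lowest precision, with the generation
# speeds (tokens/s) measured in this article's benchmark.
LEVELS = [("F16", 25.09), ("Q8_0", 42.75), ("Q4_0", 66.31)]


def pick_quantization(min_tokens_per_s):
    """Return the most precise level that meets the speed target, or None."""
    for level, tokens_per_s in LEVELS:
        if tokens_per_s >= min_tokens_per_s:
            return level
    return None  # no benchmarked level is fast enough


print(pick_quantization(20))   # F16
print(pick_quantization(40))   # Q8_0
print(pick_quantization(60))   # Q4_0
print(pick_quantization(100))  # None
```

Preferring the most precise level that is fast enough keeps accuracy loss to the minimum your speed requirement allows.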

Real-World Implications:

Imagine you're building a chat application. You want a fast and responsive experience for users, so you might opt for Q8_0 or Q4_0. However, if you're building a system for generating highly creative content, where response time matters less, you'd prioritize accuracy and choose F16.
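For a chat application, the number users feel is the total time for a reply. A simple estimate, assuming the benchmark speeds hold steady for the whole request, is prompt tokens divided by processing speed plus reply tokens divided by generation speed. The 500-token prompt and 200-token reply below are made-up example sizes.

```python
# (processing tokens/s, generation tokens/s) from this article's benchmark.
BENCH = {
    "F16": (779.17, 25.09),
    "Q8_0": (757.64, 42.75),
    "Q4_0": (759.70, 66.31),
}


def estimate_seconds(level, prompt_tokens, reply_tokens):
    """Estimate seconds to read the prompt and then generate the reply."""
    processing_tps, generation_tps = BENCH[level]
    return prompt_tokens / processing_tps + reply_tokens / generation_tps


# Hypothetical request: 500-token prompt, 200-token reply.
estimates = {level: estimate_seconds(level, 500, 200) for level in BENCH}
for level, seconds in estimates.items():
    print(f"{level}: about {seconds:.1f} s")
```

Because prompt processing is fast at every level, almost all of the difference comes from the generation term.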

Practical Recommendations: Use Cases and Workarounds

Ideal Use Cases:

- Real-Time Applications: Q8_0 or Q4_0 are well-suited for applications requiring fast response times, like chatbots, live Q&A, or interactive story generation.

- Resource-Constrained Environments: If you're working with limited memory or processing power, the smaller Q8_0 or Q4_0 versions of the model can be a great choice.

- Research and Development: Experimenting with different quantization levels allows you to explore the trade-offs between speed and accuracy.

Workarounds for Performance Limitations:

- Model Pruning: If you find that even the Q4_0 model is too slow for your needs, you can consider model pruning. This technique involves removing redundant parts of the model to reduce its size and improve speed.

- Hardware Optimization: On the M3 Max, make sure your inference runtime actually runs on the GPU cores and keeps the model in unified memory, rather than falling back to CPU-only execution.

- Caching: In cases where you're repeatedly generating similar text, you can leverage caching to reduce the need for repeated inference.

Conclusion: Unleashing the Power of Local LLMs

The Apple M3 Max offers a compelling platform for running LLMs locally, providing a balance of performance and portability. By understanding the performance characteristics of Llama2 7B and various quantization levels, you can choose the optimal configuration for your specific use case. Remember, there's no one-size-fits-all solution. Experiment, explore, and adapt to find the perfect balance between speed, accuracy, and resource consumption.

FAQ

What is an LLM?

A Large Language Model (LLM) is a type of artificial intelligence (AI) that's capable of understanding and generating human-like text. Think of it as a super-powered AI brain that knows how to use language effectively.

How does quantization work?

Quantization is a technique used to reduce the size of a model by representing its numbers with fewer bits. This can significantly improve inference speed but may lead to some loss of accuracy.
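As a toy illustration of the idea, the sketch below rounds a small block of made-up weights to signed integers with a shared scale factor, then converts them back and measures the error. It is a simplified scheme for intuition only and is not claimed to match the exact Q8_0 or Q4_0 formats.

```python
def quantize_block(values, bits):
    """Map floats to signed integers of the given width, sharing one scale."""
    qmax = 2 ** (bits - 1) - 1  # 127 for 8 bits, 7 for 4 bits
    peak = max(abs(v) for v in values)
    scale = peak / qmax if peak > 0 else 1.0
    codes = [round(v / scale) for v in values]
    return codes, scale


def dequantize_block(codes, scale):
    """Recover approximate floats from the integer codes."""
    return [code * scale for code in codes]


def max_error(values, bits):
    """Largest absolute difference after a quantize/dequantize round trip."""
    codes, scale = quantize_block(values, bits)
    restored = dequantize_block(codes, scale)
    return max(abs(v - r) for v, r in zip(values, restored))


# Made-up example weights.
weights = [0.12, -0.53, 0.91, -0.07, 0.33, -0.88, 0.45, 0.02]
error_8bit = max_error(weights, 8)
error_4bit = max_error(weights, 4)
print(f"8-bit max error: {error_8bit:.4f}")
print(f"4-bit max error: {error_4bit:.4f}")
```

The 4-bit round trip loses noticeably more detail than the 8-bit one, which is the accuracy cost that buys the smaller size.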

Why should I use a local LLM instead of a cloud service?

Running an LLM locally offers several advantages, including:

- Privacy: Your data stays on your device, ensuring better privacy.

- Control: You have complete control over the model and its settings.

- Speed: Local execution avoids network round trips, which can make it faster than a cloud service, especially for real-time applications.

What are the best practices for optimizing LLMs?

- Choose the right quantization level for your use case.

- Optimize hardware settings (e.g., CPU/GPU allocation).

- Explore model pruning techniques to reduce model size.

- Leverage caching to avoid redundant inference.

Keywords

Llama2 7B, Apple M3 Max, LLM, token generation speed, performance optimization, quantization, F16, Q8_0, Q4_0, processing speed, generation speed, use cases, workarounds, practical recommendations, real-time applications, resource-constrained environments, research and development, model pruning, hardware optimization, caching, AI, deep learning, natural language processing, NLP, inference, accuracy, speed, trade-offs, developers, geeks, AI enthusiasts, tech enthusiasts