Optimizing Llama2 7B for Apple M3: A Step-by-Step Approach

Introduction: Harnessing the Power of Local LLMs on Apple M3

Imagine having the smarts of a large language model right on your Apple M3-powered device. No more cloud dependence, no more network round trips, just fast text generation and insightful responses right at your fingertips. This is the promise of local LLMs, and Llama2 7B is a fantastic starting point. But how do you make the most of it on your Apple M3? That's where this deep dive comes in.

In this article, we'll dissect the performance of Llama2 7B on the Apple M3, exploring token generation speeds, quantized model variations, and practical tips for maximizing its potential. Whether you're a developer looking to build compelling apps, a researcher experimenting with AI, or just a tech enthusiast curious about LLMs, this guide is your roadmap to unleashing the power of Llama2 7B on your Apple M3. Buckle up, it's time to dive into the exciting world of local LLMs!

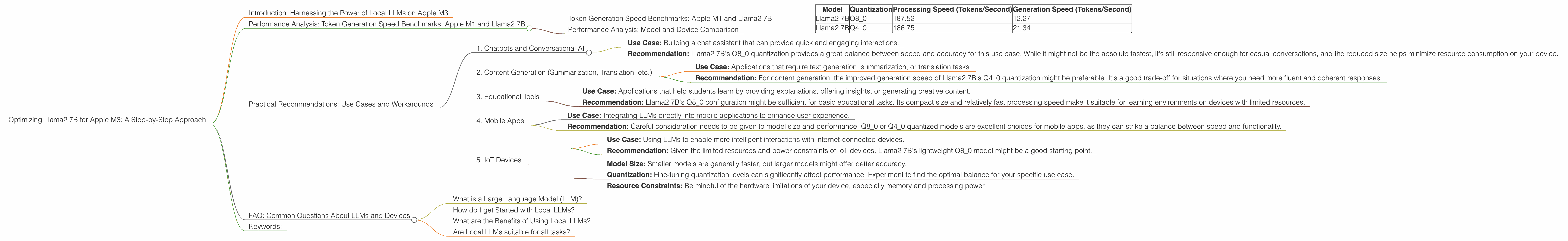

Performance Analysis: Token Generation Speed Benchmarks: Apple M3 and Llama2 7B

To understand the performance of Llama2 7B on the Apple M3, we need to dive into the nitty-gritty of its token generation speed. Think of tokens as the individual building blocks of text: whole words, pieces of words, or punctuation marks. How fast your model churns out these tokens determines how responsive it feels in use.

Token Generation Speed Benchmarks: Apple M3 and Llama2 7B

Let's start with the measured performance of Llama2 7B on the Apple M3 at two quantization levels. Our data is from the llama.cpp project and GPU Benchmarks on LLM Inference.

| Model | Quantization | Processing Speed (Tokens/Second) | Generation Speed (Tokens/Second) |
|---|---|---|---|
| Llama2 7B | Q8_0 | 187.52 | 12.27 |
| Llama2 7B | Q4_0 | 186.75 | 21.34 |

As you can see, Llama2 7B processes text at roughly 187 tokens per second on the Apple M3 under both quantization levels, so processing speed (how quickly the model reads its input) barely depends on the choice of quantization. Generation speed is a different story: Q4_0 produces 21.34 tokens per second against 12.27 for Q8_0, about 1.7 times faster. This is where the art of optimization comes in.
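To make these numbers easier to reason about, here is a minimal sketch that converts the table's throughput figures into milliseconds per token and computes the ratio between the two quantization levels. The dictionary layout and the helper name `ms_per_token` are our own choices for illustration; only the four numbers come from the benchmark table.

```python
# Throughput figures copied from the benchmark table (tokens/second).
BENCHMARKS = {
    "Q8_0": {"processing": 187.52, "generation": 12.27},
    "Q4_0": {"processing": 186.75, "generation": 21.34},
}


def ms_per_token(tokens_per_second: float) -> float:
    """Convert a throughput in tokens/second to milliseconds per token."""
    return 1000.0 / tokens_per_second


# How much faster Q4_0 generates tokens compared with Q8_0.
gen_speedup = BENCHMARKS["Q4_0"]["generation"] / BENCHMARKS["Q8_0"]["generation"]
# How the two levels compare when processing input (close to 1.0 means no real difference).
proc_ratio = BENCHMARKS["Q4_0"]["processing"] / BENCHMARKS["Q8_0"]["processing"]

for name, row in BENCHMARKS.items():
    print(f"{name}: {ms_per_token(row['generation']):.1f} ms per generated token")
print(f"Generation speedup Q4_0 vs Q8_0: {gen_speedup:.2f}x")
print(f"Processing ratio Q4_0 vs Q8_0: {proc_ratio:.3f}")
```

Per-token latency is often the more intuitive figure when you are judging how a streamed response will feel to a user.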

What is Quantization?

Think of it like compressing a video file. Quantization takes a large model, like Llama2 7B, and shrinks it down by reducing the number of bits used to represent the model's data. This makes the model smaller and faster, but it can sometimes affect accuracy. It's like trading a little precision for a significant boost in speed.
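The trade-off can be illustrated with a toy example. The sketch below is a deliberately simplified symmetric quantizer with a single shared scale, not the actual Q4_0 or Q8_0 formats used by llama.cpp (which, as far as we understand, work on small blocks of weights with a scale per block). The sample weights are made up for illustration.

```python
def quantize(values, bits):
    """Map floats to signed integers of the given bit width using one shared scale."""
    qmax = 2 ** (bits - 1) - 1  # 127 for 8 bits, 7 for 4 bits
    max_abs = max(abs(v) for v in values)
    scale = max_abs / qmax if max_abs else 1.0
    return [round(v / scale) for v in values], scale


def dequantize(quantized, scale):
    """Recover approximate floats from the stored integers."""
    return [q * scale for q in quantized]


def max_error(values, bits):
    """Largest absolute difference between original and restored values."""
    quantized, scale = quantize(values, bits)
    restored = dequantize(quantized, scale)
    return max(abs(a - b) for a, b in zip(values, restored))


# Hypothetical weights, chosen only to demonstrate the effect.
weights = [0.12, -0.53, 0.98, -0.07, 0.31, -0.88, 0.44, 0.02]

err8 = max_error(weights, 8)
err4 = max_error(weights, 4)
print(f"max error at 8 bits: {err8:.4f}")
print(f"max error at 4 bits: {err4:.4f}")
```

Fewer bits mean fewer representable values, so the restored weights drift further from the originals. That drift is the "little precision" being traded away.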

Performance Analysis: Model and Device Comparison

While the performance of Llama2 7B on an Apple M3 is impressive, how does it stack up against other models and devices?

Unfortunately, we don't have data for other LLMs or devices to compare. However, it's important to realize that the ideal setup depends on your specific use case. If you need the absolute fastest speed, you might look into models designed for high-performance hardware. For smaller devices and more resource-limited scenarios, a carefully chosen quantized build of a model like Llama2 7B can offer a balance of speed and efficiency.

Practical Recommendations: Use Cases and Workarounds

With the performance data in hand, let's explore how to leverage Llama2 7B on the Apple M3 for various use cases.

1. Chatbots and Conversational AI

Use Case: Building a chat assistant that can provide quick and engaging interactions.

Recommendation: Llama2 7B's Q8_0 quantization provides a good balance between speed and accuracy for this use case. At 12.27 tokens per second it is not the fastest option, but it's still responsive enough for casual conversations, and it is smaller than the unquantized model, which helps minimize resource consumption on your device.

2. Content Generation (Summarization, Translation, etc.)

Use Case: Applications that require text generation, summarization, or translation tasks.

Recommendation: For content generation, the higher generation speed of Llama2 7B's Q4_0 quantization (21.34 versus 12.27 tokens per second) might be preferable. It's a good trade-off for situations where you produce long outputs and can accept a small loss of precision in exchange for speed.
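How much the quantization choice matters depends on the shape of the workload. The sketch below estimates end-to-end time as input tokens divided by processing speed plus output tokens divided by generation speed. It assumes the benchmark throughput stays constant regardless of input length, which is a simplification, and the token counts for the two workloads are hypothetical.

```python
# Throughput figures from the benchmark table (tokens/second).
SPEEDS = {
    "Q8_0": {"processing": 187.52, "generation": 12.27},
    "Q4_0": {"processing": 186.75, "generation": 21.34},
}

# Hypothetical workloads: (input tokens, output tokens).
WORKLOADS = {
    "summarize (long input, short output)": (2000, 150),
    "draft (short input, long output)": (100, 800),
}


def estimate_seconds(quant: str, input_tokens: int, output_tokens: int) -> float:
    """Rough end-to-end time, assuming constant throughput (a simplification)."""
    row = SPEEDS[quant]
    return input_tokens / row["processing"] + output_tokens / row["generation"]


for label, (n_in, n_out) in WORKLOADS.items():
    q8 = estimate_seconds("Q8_0", n_in, n_out)
    q4 = estimate_seconds("Q4_0", n_in, n_out)
    print(f"{label}: Q8_0 ~{q8:.0f}s, Q4_0 ~{q4:.0f}s ({q8 / q4:.2f}x)")
```

Under these assumptions, the advantage of Q4_0 grows as the output gets longer, because generation time dominates the total.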

3. Educational Tools

Use Case: Applications that help students learn by providing explanations, offering insights, or generating creative content.

Recommendation: Llama2 7B's Q8_0 configuration might be sufficient for basic educational tasks. Its compact size and relatively fast processing speed make it suitable for learning environments on devices with limited resources.

4. Mobile Apps

Use Case: Integrating LLMs directly into mobile applications to enhance user experience.

Recommendation: Careful consideration needs to be given to model size and performance. Q8_0 or Q4_0 quantized models are sensible choices for mobile apps, as they can strike a balance between speed and functionality.

5. IoT Devices

Use Case: Using LLMs to enable more intelligent interactions with internet-connected devices.

Recommendation: Given the limited resources and power constraints of IoT devices, a quantized Llama2 7B might be a good starting point. Of the two variants measured here, Q4_0 is the smaller, while Q8_0 keeps more precision.

Important Considerations

- Model Size: Smaller models are generally faster, but larger models might offer better accuracy.

- Quantization: The choice of quantization level can significantly affect performance. Experiment to find the optimal balance for your specific use case.

- Resource Constraints: Be mindful of the hardware limitations of your device, especially memory and processing power.
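These considerations can be turned into a quick back-of-the-envelope check. The sketch below estimates the size of the model weights from the parameter count and the bits stored per weight, then tests whether they fit in a given amount of memory. The figures are assumptions, not measurements: we round the model to 7 billion parameters, assume roughly 4.5 and 8.5 bits per weight for Q4_0 and Q8_0 (the extra half bit accounting for per-block scale factors), and use a hypothetical 2 GiB of headroom for the operating system and runtime buffers.

```python
PARAMS = 7e9  # assumption: parameter count rounded to 7 billion

# Assumption: approximate bits stored per weight, including per-block scales.
BITS_PER_WEIGHT = {"Q4_0": 4.5, "Q8_0": 8.5}

GIB = 2 ** 30


def weights_gib(quant: str) -> float:
    """Approximate size of the model weights in GiB."""
    return PARAMS * BITS_PER_WEIGHT[quant] / 8 / GIB


def fits(quant: str, ram_gib: float, headroom_gib: float = 2.0) -> bool:
    """True if weights plus a hypothetical headroom fit in the given memory."""
    return weights_gib(quant) + headroom_gib <= ram_gib


for quant in BITS_PER_WEIGHT:
    size = weights_gib(quant)
    print(f"{quant}: ~{size:.1f} GiB, fits in 8 GiB: {fits(quant, 8)}, "
          f"fits in 16 GiB: {fits(quant, 16)}")
```

Treat the result as a first filter only. Actual memory use also depends on context length and on whatever else is running on the device.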

FAQ: Common Questions About LLMs and Devices

What is a Large Language Model (LLM)?

In simple terms, LLMs are a type of AI that can understand and generate human-like text. They have been trained on vast amounts of data, making them capable of tasks like writing, translating, summarizing, and answering questions. Think of them as being really good at the language-based tasks that humans are good at.

How do I get Started with Local LLMs?

There are resources and libraries available for running LLMs locally, like llama.cpp. These libraries provide the tools you need to load, run, and interact with LLMs on your device without relying on cloud services.
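As a starting point, here is a small sketch that assembles a command line for llama.cpp's command-line tool from Python. The binary name and location (`./llama-cli`), the model file path, and the default values are assumptions for illustration. The flags shown (`-m` for the model, `-p` for the prompt, `-n` for the number of tokens to generate, `-ngl` for layers offloaded to the GPU) reflect our understanding of the tool, so check its `--help` output for your build before relying on them.

```python
import shlex


def build_llama_cli_command(
    model_path: str,
    prompt: str,
    n_predict: int = 128,
    gpu_layers: int = 99,  # assumption: a large value to offload all layers
    binary: str = "./llama-cli",  # assumption: name and location vary by build
) -> list[str]:
    """Assemble the argument list for a single llama.cpp run (does not execute it)."""
    return [
        binary,
        "-m", model_path,
        "-p", prompt,
        "-n", str(n_predict),
        "-ngl", str(gpu_layers),
    ]


# Hypothetical model file name; use the path of the file you downloaded.
cmd = build_llama_cli_command(
    "models/llama-2-7b.Q4_0.gguf",
    "Explain quantization in one sentence.",
)
print(shlex.join(cmd))
```

Once the tool is built and a model file is in place, the list can be passed to `subprocess.run(cmd)` to start generation.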

What are the Benefits of Using Local LLMs?

The biggest advantage is that local LLMs avoid the network round trip to a remote server, so response times don't depend on connection quality and the model keeps working offline. This makes them a good fit for real-time applications and scenarios where latency is critical. Additionally, you have more control over privacy and data security since your data stays on your device.

Are Local LLMs suitable for all tasks?

Not necessarily. If you need a much larger model or require the highest accuracy, a cloud-based LLM might be more appropriate. However, for many use cases, especially ones with real-time requirements, a local LLM can provide an excellent balance of performance and efficiency.

Keywords:

Apple M3, Llama2 7B, Local LLM, Token Generation Speed, Quantization, Q8_0, Q4_0, Performance Benchmarks, Processing Speed, Generation Speed, Use Cases, Chatbots, Content Generation, Educational Tools, Mobile Apps, IoT Devices, Practical Recommendations, LLM Inference, Model Size, Model Optimization