Optimizing Llama2 7B for Apple M2 Ultra: A Step by Step Approach

Introduction

The world of Large Language Models (LLMs) is exploding, and with it comes the need to balance performance against cost. Running these models locally on your own hardware gives you control and privacy, but it takes careful optimization to get the most out of your gear. Today, we're diving into Llama2 7B on the Apple M2 Ultra, exploring its performance and potential for real-world applications.

Think of it as a high-performance race car; you have the engine (M2 Ultra), but you need to tune it for the specific race track (Llama2 7B) to achieve maximum speed and efficiency. This article will guide you through the process, providing actionable insights to unleash the full potential of this powerful combination.

Performance Analysis: Token Generation Speed Benchmarks

This section looks at the token generation speed of Llama2 7B on the Apple M2 Ultra at several quantization levels. Tokens are the basic units a language model reads and writes, typically whole words or pieces of words. Two speeds matter: how fast the model processes the prompt, and how fast it generates new tokens. Both affect how responsive an interactive application feels.

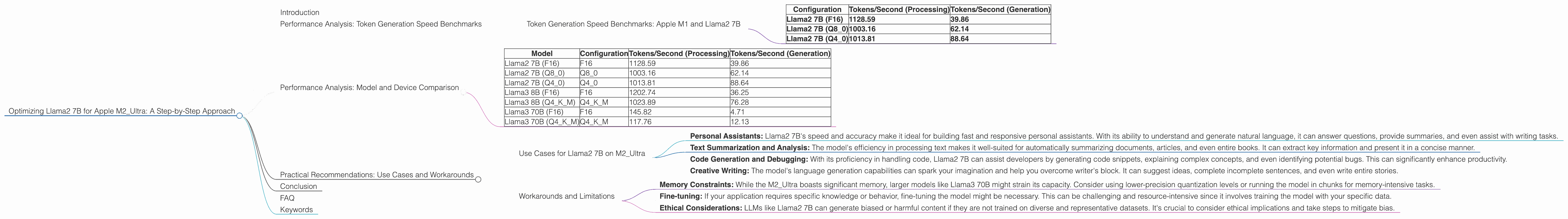

Token Generation Speed Benchmarks: Apple M2 Ultra and Llama2 7B

| Configuration | Tokens/Second (Processing) | Tokens/Second (Generation) |
|---|---|---|
| Llama2 7B (F16) | 1128.59 | 39.86 |
| Llama2 7B (Q8_0) | 1003.16 | 62.14 |
| Llama2 7B (Q4_0) | 1013.81 | 88.64 |

Note: The provided dataset doesn't include benchmarks for other devices, so this analysis focuses only on the M2 Ultra.

Understanding the Numbers:

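- F16: The model's weights are stored in 16-bit floating-point format. This is the unquantized baseline in these benchmarks: the highest fidelity of the three, but also the largest memory footprint.

- Q8_0: The weights are quantized to 8-bit integers. The labels follow the naming used by llama.cpp, where the _0 suffix identifies the quantization scheme (small blocks of weights sharing one scale factor) rather than a number of exponent bits. This roughly halves the memory footprint relative to F16, usually with very little loss in accuracy.

- Q4_0: The same scheme with 4-bit weights, the most aggressive quantization in this table. It needs the least memory and generates tokens fastest here, but it is the most likely of the three to affect accuracy.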
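To put rough numbers on the memory footprint of each format, here is a minimal sketch. It rests on two assumptions that are not part of the benchmark data: that Llama2 7B has about 6.74 billion parameters, and that Q8_0 and Q4_0 use the llama.cpp-style layout of 32 weights per block with one 16-bit scale per block. The results cover weights only and are estimates, not measured file sizes.

```python
# Approximate weight size per format for a 7B-class model.
# Assumptions (not from the benchmark data): ~6.74e9 parameters, and
# block quantization with 32 weights per block plus one 16-bit scale.

PARAMS = 6.74e9  # assumed parameter count for Llama2 7B


def bits_per_weight(weight_bits: int, block_size: int = 32, scale_bits: int = 16) -> float:
    """Effective bits per weight when every block also stores one scale."""
    return (weight_bits * block_size + scale_bits) / block_size


FORMATS = {
    "F16": 16.0,                 # plain 16-bit floats, no per-block scale
    "Q8_0": bits_per_weight(8),  # 8.5 bits per weight
    "Q4_0": bits_per_weight(4),  # 4.5 bits per weight
}


def size_gb(bpw: float, params: float = PARAMS) -> float:
    """Weight size in gigabytes (10^9 bytes)."""
    return params * bpw / 8 / 1e9


for name, bpw in FORMATS.items():
    print(f"{name}: {bpw:.1f} bits/weight, ~{size_gb(bpw):.1f} GB")
```

Under these assumptions, Q4_0 needs a little over a quarter of the memory that F16 does, which is why it is the usual choice when memory is tight.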

Key Takeaways:

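- Llama2 7B runs well on the M2 Ultra: prompt processing exceeds 1,000 tokens/second in every configuration, and generation ranges from about 40 to 89 tokens/second.

- Quantization has a large effect on generation speed and a small one on prompt processing. Generation rises from 39.86 tokens/second at F16 to 62.14 at Q8_0 and 88.64 at Q4_0, while prompt processing drops by roughly 10%. Q8_0 is a good compromise between speed and fidelity; Q4_0 suits memory-constrained scenarios or cases where generation speed matters most.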
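The takeaways above are easier to judge as ratios against the F16 baseline. The short sketch below computes them from the figures in the benchmark table; the dictionary layout and function name are illustrative choices, not part of any benchmark tool.

```python
# Tokens/second, copied from the Llama2 7B benchmark table.
BENCH = {
    "F16": {"processing": 1128.59, "generation": 39.86},
    "Q8_0": {"processing": 1003.16, "generation": 62.14},
    "Q4_0": {"processing": 1013.81, "generation": 88.64},
}


def relative_to_f16(config: str, phase: str) -> float:
    """Speed of `config` divided by the F16 speed for the same phase."""
    return BENCH[config][phase] / BENCH["F16"][phase]


for config in ("Q8_0", "Q4_0"):
    gen = relative_to_f16(config, "generation")
    proc = relative_to_f16(config, "processing")
    print(f"{config}: generation x{gen:.2f}, processing x{proc:.2f}")
```

Generation speeds up by roughly 1.6x at Q8_0 and 2.2x at Q4_0, while prompt processing falls to about 0.9x of the F16 speed in both cases.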

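A Real-World Analogy: Imagine a fast reader who is also a careful writer. They can take in a whole page at a glance (processing), but they write their reply one word at a time (generation). The same holds for LLMs: the prompt is processed in parallel, while output tokens are produced one after another, which is why generation is so much slower in tokens per second. Quantization helps mostly with the writing step, because each generated token requires reading the model's weights, and smaller weights are quicker to read.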
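To see how the two speeds combine into the wait a user actually experiences, here is a rough estimate. The token counts are hypothetical, and the calculation ignores model load time, sampling overhead, and the way throughput changes with context length, so treat it as a back-of-the-envelope sketch.

```python
def phase_times_s(prompt_tokens: int, output_tokens: int,
                  processing_tps: float, generation_tps: float) -> tuple[float, float]:
    """Return (seconds spent reading the prompt, seconds spent generating)."""
    prompt_s = prompt_tokens / processing_tps
    output_s = output_tokens / generation_tps
    return prompt_s, output_s


# Q4_0 speeds from the benchmark table; 512 and 256 tokens are made-up sizes.
prompt_s, output_s = phase_times_s(512, 256, 1013.81, 88.64)
print(f"prompt: {prompt_s:.2f} s, generation: {output_s:.2f} s, "
      f"total: {prompt_s + output_s:.2f} s")
```

Even though the prompt here is twice as long as the reply, generation accounts for most of the total time, which is why the generation column matters most for chat-style use.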

Performance Analysis: Model and Device Comparison

Now, let's take a step back and compare Llama2 7B with other models on the M2 Ultra. This comparison will help us understand its strengths and weaknesses relative to other LLMs.

| Model | Configuration | Tokens/Second (Processing) | Tokens/Second (Generation) |
|---|---|---|---|
| Llama2 7B | F16 | 1128.59 | 39.86 |
| Llama2 7B | Q8_0 | 1003.16 | 62.14 |
| Llama2 7B | Q4_0 | 1013.81 | 88.64 |
| Llama3 8B | F16 | 1202.74 | 36.25 |
| Llama3 8B | Q4_K_M | 1023.89 | 76.28 |
| Llama3 70B | F16 | 145.82 | 4.71 |
| Llama3 70B | Q4_K_M | 117.76 | 12.13 |

Note: The provided dataset doesn't include benchmarks for all model and device combinations, so some entries are missing or have been excluded.

Key Observations:

- Llama2 7B delivers competitive performance compared to other models on the M2 Ultra. At F16 it is close to Llama3 8B in both processing and generation, and its Q4_0 configuration has the fastest generation in the table at 88.64 tokens/second.

- Larger models (Llama3 70B) are far slower, roughly 8 times slower than Llama3 8B at F16 in both phases, but generally produce higher-quality output. This trade-off is a recurring theme in the LLM landscape.

- Quantization benefits vary across models and phases. For every model here, 4-bit quantization raises generation speed (by about 2.1x to 2.6x over F16) but lowers prompt processing speed (by about 10% to 19%).
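How much 4-bit quantization helps each model can be checked directly from the comparison table. The sketch below pairs each model's F16 row with its 4-bit row and prints the ratios. Note that the 4-bit formats differ between models (Q4_0 for Llama2, Q4_K_M for Llama3), so this is an indicative comparison rather than a like-for-like one.

```python
# (model, configuration, processing tokens/s, generation tokens/s),
# taken from the comparison table; the Q8_0 row is left out on purpose.
ROWS = [
    ("Llama2 7B", "F16", 1128.59, 39.86),
    ("Llama2 7B", "Q4_0", 1013.81, 88.64),
    ("Llama3 8B", "F16", 1202.74, 36.25),
    ("Llama3 8B", "Q4_K_M", 1023.89, 76.28),
    ("Llama3 70B", "F16", 145.82, 4.71),
    ("Llama3 70B", "Q4_K_M", 117.76, 12.13),
]


def four_bit_vs_f16(rows):
    """Return {model: (processing ratio, generation ratio)} of 4-bit over F16."""
    f16 = {model: (proc, gen) for model, cfg, proc, gen in rows if cfg == "F16"}
    ratios = {}
    for model, cfg, proc, gen in rows:
        if cfg != "F16":
            base_proc, base_gen = f16[model]
            ratios[model] = (proc / base_proc, gen / base_gen)
    return ratios


RATIOS = four_bit_vs_f16(ROWS)
for model, (proc, gen) in RATIOS.items():
    print(f"{model}: processing x{proc:.2f}, generation x{gen:.2f}")
```

The largest generation gain in this data belongs to the 70B model, which is also the one that loses the most prompt processing speed.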

Think of it like this: You have different car models for various purposes. A compact car (Llama2 7B) is quicker in the city, while a luxury SUV (Llama3 70B) is better for long trips, even if it's slower.

Practical Recommendations: Use Cases and Workarounds

Now that we have a clear understanding of Llama2 7B's performance on the M2 Ultra, let's explore its potential use cases and address some practical considerations.

Use Cases for Llama2 7B on M2 Ultra

- Personal Assistants: Llama2 7B's speed and accuracy make it ideal for building fast and responsive personal assistants. With its ability to understand and generate natural language, it can answer questions, provide summaries, and even assist with writing tasks.

- Text Summarization and Analysis: The model's fast prompt processing (over 1,000 tokens/second in the benchmarks above) makes it well-suited for summarizing documents and articles. Longer texts such as entire books need to be split into sections and summarized piece by piece.

- Code Generation and Debugging: Llama2 7B can assist developers by generating code snippets, explaining complex concepts, and pointing out potential bugs, which can enhance productivity.

- Creative Writing: The model's language generation capabilities can spark your imagination and help you overcome writer's block. It can suggest ideas, complete incomplete sentences, and even write entire stories.

Workarounds and Limitations

- Memory Constraints: While the M2 Ultra offers a large pool of unified memory, larger models like Llama3 70B can still strain its capacity, especially at F16. Consider a lower-precision quantization level, or break memory-intensive workloads into smaller chunks.

- Fine-tuning: If your application requires specific knowledge or behavior, fine-tuning the model might be necessary. This can be challenging and resource-intensive since it involves training the model with your specific data.

- Ethical Considerations: LLMs like Llama2 7B can generate biased or harmful content if they are not trained on diverse and representative datasets. It's crucial to consider ethical implications and take steps to mitigate bias.
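For the memory constraint in the first item of this list, a quick check of which precision fits a given memory budget can save a failed model load. The sketch below is an estimate under stated assumptions: a 70-billion-parameter model, approximate bits per weight for each format (the Q4_K_M figure in particular is a rough average), a hypothetical 8 GB reserve for the context cache and the rest of the system, and example memory budgets of 64, 128, and 192 GB. None of these numbers come from the benchmark data.

```python
def weights_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate weight size in GB: billions of parameters times bytes each."""
    return params_billion * bits_per_weight / 8


def fits(params_billion: float, bits_per_weight: float,
         memory_gb: float, reserve_gb: float = 8.0) -> bool:
    """True if the weights plus a hypothetical reserve fit in memory_gb."""
    return weights_gb(params_billion, bits_per_weight) + reserve_gb <= memory_gb


# Highest precision first; the bits-per-weight values are approximations.
CANDIDATES = [("F16", 16.0), ("Q8_0", 8.5), ("Q4_K_M", 4.85)]


def best_format(params_billion: float, memory_gb: float):
    """Pick the highest-precision format that fits, or None if nothing does."""
    for name, bpw in CANDIDATES:
        if fits(params_billion, bpw, memory_gb):
            return name
    return None


for memory_gb in (64, 128, 192):
    print(f"{memory_gb} GB -> {best_format(70, memory_gb)}")
```

With these assumptions a 70B model at F16 only fits the largest budget, while a 4-bit build fits the smallest one. Adjust the reserve to match your context length and whatever else is running on the machine.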

Conclusion

Optimizing Llama2 7B for the Apple M2 Ultra is a rewarding journey that unlocks the potential of this powerful LLM for various applications. By understanding the performance benefits, considering trade-offs, and embracing practical recommendations, developers can leverage this combination to create innovative and impactful solutions.

Remember, the world of LLMs is constantly evolving, so stay informed about the latest advancements and adapt your strategies accordingly. As with any powerful tool, responsible use and ethical considerations are paramount.

FAQ

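Q: What is the best way to run Llama2 7B on the M2 Ultra?

A: The most efficient setup depends on your specific needs. If generation speed or memory is the priority, Q4_0 is the fastest and smallest option in these benchmarks (88.64 tokens/second). If you want to stay as close as possible to the original weights, use F16, which also has the fastest prompt processing but the slowest generation (39.86 tokens/second). Q8_0 sits in between. Be aware that more aggressive quantization can impact accuracy.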

Q: What is quantization and how does it work?

A: Quantization is a technique for compressing the model's weights by reducing the number of bits used to represent them. This leads to smaller model sizes and potentially faster inference. However, it can also reduce accuracy. Imagine a picture: a full-color image uses more bits per pixel than a black-and-white photo.
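As a concrete illustration, here is a minimal sketch of symmetric 8-bit block quantization: a block of 32 weights is stored as small integers plus one shared scale. This is a simplified example in the spirit of the Q8_0 format, not the code any particular library uses, and the random weights are made up for the demonstration.

```python
import random

BLOCK = 32  # weights per block (illustrative choice)


def quantize_block(values):
    """Map floats to integers in [-127, 127] plus one shared scale."""
    amax = max(abs(v) for v in values)
    scale = amax / 127 if amax > 0 else 1.0
    codes = [round(v / scale) for v in values]
    return scale, codes


def dequantize_block(scale, codes):
    """Recover approximate floats from the integer codes."""
    return [scale * c for c in codes]


random.seed(0)
weights = [random.gauss(0.0, 0.02) for _ in range(BLOCK)]  # made-up weights

scale, codes = quantize_block(weights)
restored = dequantize_block(scale, codes)
max_err = max(abs(a - b) for a, b in zip(weights, restored))

print(f"scale={scale:.6f}, max abs error={max_err:.6f}")
```

The error per weight is bounded by half the scale, so blocks whose weights are small in magnitude are reproduced more precisely. Using fewer bits widens the gap between representable values, which is where the accuracy loss comes from.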

Q: How can I fine-tune Llama2 7B for my specific needs?

A: Fine-tuning involves training the model with your specific data to improve its performance on a specific task. This requires a significant amount of data and computational resources. There are various libraries and tools available for fine-tuning LLMs.

Q: What are some ethical considerations when using LLMs?

A: LLMs can be biased or generate offensive content if they are not trained on diverse and representative datasets. It's crucial to consider the ethical implications and take steps to mitigate bias and harmful outputs.

Q: What are the future trends in the local LLM landscape?

A: Expect to see even more powerful LLMs with better performance and efficiency. The availability of optimized hardware and specialized libraries will further accelerate local LLM adoption.

Keywords

Llama2, Llama2 7B, M2 Ultra, Apple Silicon, LLM, Large Language Model, Token Generation Speed, Performance Benchmarks, Quantization, F16, Q8_0, Q4_0, Processing, Generation, Local LLMs, Use Cases, Workarounds, Ethical Considerations, AI, Machine Learning