Optimizing Llama2 7B for Apple M2 Pro: A Step-by-Step Approach

Introduction

The world of Large Language Models (LLMs) is constantly evolving, with new models and advancements appearing frequently. One of the key challenges in using LLMs is optimizing their performance on local devices. This article looks at the performance of the Llama2 7B model on the Apple M2 Pro and offers a step-by-step guide to getting the most out of it. Imagine running cutting-edge AI models right on your personal computer, with lower latency, better data privacy, and smoother user experiences. That's what we're unlocking here!

Performance Analysis: Token Generation Speed Benchmarks: Apple M2 Pro and Llama2 7B

The heart of any LLM's performance lies in its ability to generate tokens, the building blocks of language. Let's examine how the Llama2 7B model performs on the M2 Pro processor in terms of token generation speed. We'll explore different quantization strategies and their impact on performance. Quantization is like putting a language model on a diet, making it smaller and faster while retaining some of its power.

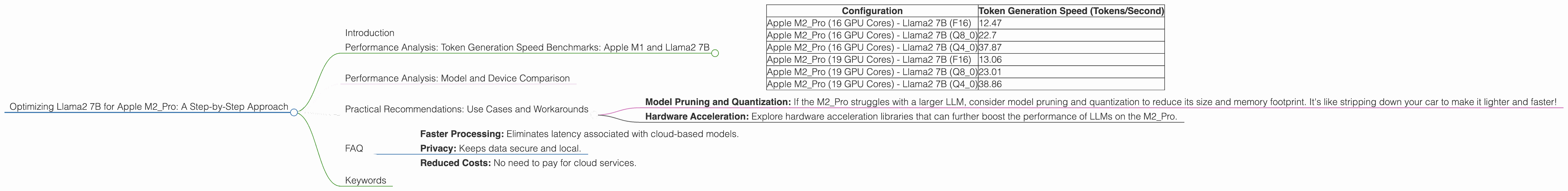

| Configuration | Token Generation Speed (Tokens/Second) |
|---|---|
| Apple M2 Pro (16 GPU Cores) - Llama2 7B (F16) | 12.47 |
| Apple M2 Pro (16 GPU Cores) - Llama2 7B (Q8_0) | 22.7 |
| Apple M2 Pro (16 GPU Cores) - Llama2 7B (Q4_0) | 37.87 |
| Apple M2 Pro (19 GPU Cores) - Llama2 7B (F16) | 13.06 |
| Apple M2 Pro (19 GPU Cores) - Llama2 7B (Q8_0) | 23.01 |
| Apple M2 Pro (19 GPU Cores) - Llama2 7B (Q4_0) | 38.86 |

What's the takeaway? The M2 Pro handles Llama2 7B at very usable speeds. The F16 configuration delivers a decent baseline of about 12 to 13 tokens per second, and quantization adds substantial gains: Q8_0 is roughly 1.8x faster than F16, and Q4_0 is roughly 3x faster. Imagine the difference - it's like going from a bicycle to a motorcycle! Moving from 16 to 19 GPU cores, by contrast, adds only about 1 to 5 percent. These numbers showcase the potential of running LLMs locally.
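The relative gains can be recomputed directly from the benchmark table. A minimal sketch in Python, using only the reported numbers; the dictionary layout and the function name are illustrative choices, not part of any benchmark tool:

```python
# Token generation speeds (tokens/second) copied from the benchmark table.
# Keys are GPU core counts; the layout is an illustrative choice.
SPEEDS = {
    16: {"F16": 12.47, "Q8_0": 22.7, "Q4_0": 37.87},
    19: {"F16": 13.06, "Q8_0": 23.01, "Q4_0": 38.86},
}


def speedup_over_f16(gpu_cores: int) -> dict[str, float]:
    """Return each format's speed relative to F16 on the same chip."""
    base = SPEEDS[gpu_cores]["F16"]
    return {fmt: round(tps / base, 2) for fmt, tps in SPEEDS[gpu_cores].items()}


if __name__ == "__main__":
    for cores in SPEEDS:
        print(cores, "GPU cores:", speedup_over_f16(cores))
```

On both chips the ratios come out close to 1.8x for the 8-bit format and 3x for the 4-bit format, so the quantization level matters far more here than the three extra GPU cores.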

Performance Analysis: Model and Device Comparison

While the M2 Pro handles Llama2 7B well, the right processor depends heavily on the specific LLM and on factors like memory capacity and bandwidth. For example, the M2 Pro might not be the optimal choice for a much larger LLM like Llama2 70B. Think of it as choosing the right tool for the job: a small hammer might be perfect for a nail, but not for a big, heavy plank!
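A rough back-of-the-envelope check shows why model size matters so much. The sketch below estimates the size of the weights alone under several assumptions that are not from the benchmark data: nominal parameter counts of 7 and 70 billion, effective bits per weight of 16, 8.5, and 4.5 (assuming the quantized formats store 32 weights per block plus one 16-bit scale), and a hypothetical 32 GB memory budget. It ignores the KV cache, the operating system, and other runtime overhead.

```python
# Assumed effective bits per weight. The quantized values assume blocks of
# 32 weights plus one 16-bit scale each; treat them as estimates.
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}


def weights_gb(n_params: float, fmt: str) -> float:
    """Approximate size of the weights alone, in decimal gigabytes."""
    return n_params * BITS_PER_WEIGHT[fmt] / 8 / 1e9


def fits(n_params: float, fmt: str, memory_gb: float) -> bool:
    """True if the weights alone are smaller than the memory budget."""
    return weights_gb(n_params, fmt) < memory_gb


if __name__ == "__main__":
    budget_gb = 32  # hypothetical budget; substitute your machine's memory
    for name, n_params in [("7B", 7e9), ("70B", 70e9)]:
        for fmt in BITS_PER_WEIGHT:
            size = weights_gb(n_params, fmt)
            ok = fits(n_params, fmt, budget_gb)
            print(f"{name} {fmt}: ~{size:.1f} GB, fits in {budget_gb} GB: {ok}")
```

Under these assumptions, even a 4-bit 70B model needs roughly 39 GB for its weights, which is more than the 32 GB budget before any working memory is counted.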

Unfortunately, we don't have data for other LLMs or devices, so we can't directly compare the M2 Pro's Llama2 7B results with other combinations.

Practical Recommendations: Use Cases and Workarounds

So, how can you leverage these insights? Here are some practical recommendations tailored to the capabilities of the M2 Pro and the Llama2 7B model:

1. Text Generation and Summarization: The M2 Pro's performance with Q4_0 quantization is particularly well-suited for tasks like generating creative text, summarizing documents, or generating code snippets.

2. Chatbots and Conversational AI: Leverage the Llama2 7B model on the M2 Pro to build highly interactive chatbots, capable of understanding context and providing engaging responses.

3. Text Classification and Sentiment Analysis: The model's speed and accuracy make it a good choice for classifying text into categories or understanding the sentiment expressed in text, useful for tasks like customer feedback analysis.

4. Edge Computing: The ability to run Llama2 7B locally on the M2 Pro opens up opportunities for edge computing applications, where real-time responses are essential, such as in healthcare or industrial settings.

Workarounds:

- Model Pruning and Quantization: If the M2 Pro struggles with a larger LLM, consider model pruning and quantization to reduce its size and memory footprint. It's like stripping down your car to make it lighter and faster!

- Hardware Acceleration: Explore hardware acceleration libraries that can further boost the performance of LLMs on the M2 Pro.

FAQ

Q: What's the difference between F16, Q8_0, and Q4_0?

A: These refer to different precision levels for storing the model's weights. Think of it as compressing the model's data. F16 (half-precision floating point) is a good starting point, while Q8_0 (8-bit quantization) and Q4_0 (4-bit quantization) reduce the model's size and memory usage, leading to faster processing at some cost in accuracy.
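To make the compression idea concrete, here is a toy sketch of block quantization in Python. It is a simplified illustration, not the actual storage format of any library: it assumes one shared scale per block and no zero point, and the function names and sample values are made up for the example.

```python
def quantize_block(values: list[float], bits: int) -> tuple[float, list[int]]:
    """Map floats to signed integers that share one scale (no zero point)."""
    qmax = 2 ** (bits - 1) - 1  # 127 for 8 bits, 7 for 4 bits
    largest = max(abs(v) for v in values)
    scale = largest / qmax if largest > 0 else 1.0
    return scale, [round(v / scale) for v in values]


def dequantize_block(scale: float, quants: list[int]) -> list[float]:
    """Recover approximate floats from the integers and the shared scale."""
    return [q * scale for q in quants]


def max_error(values: list[float], bits: int) -> float:
    """Largest absolute round-trip error for one block at a given bit width."""
    scale, quants = quantize_block(values, bits)
    restored = dequantize_block(scale, quants)
    return max(abs(a - b) for a, b in zip(values, restored))


if __name__ == "__main__":
    block = [0.12, -0.53, 0.97, -0.08, 0.44, -0.71, 0.30, 0.05]  # made-up weights
    print("8-bit max error:", max_error(block, 8))
    print("4-bit max error:", max_error(block, 4))
```

Fewer bits mean fewer distinct integer levels, so each stored value lands further from the original. That is the accuracy cost paid for the smaller size.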

Q: How do I choose the right quantization level for my project?

A: It depends largely on the specific LLM and your use case. The trade-off is between accuracy and speed: lower-bit formats are smaller and faster but lose some precision. Experiment with different levels to find the sweet spot for your needs. You might even discover a new technique or approach!
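One way to run that experiment is to time the same prompt at each quantization level. The sketch below is a generic timing helper in Python. `generate` is a hypothetical callable standing in for whatever runtime you use, assumed to take a prompt and a token limit and return the generated tokens; `fake_generate` exists only so the example runs on its own.

```python
import time
from typing import Callable, Sequence


def tokens_per_second(generate: Callable[[str, int], Sequence], prompt: str,
                      max_tokens: int = 128, runs: int = 3) -> float:
    """Average throughput of `generate` over several timed runs."""
    total_tokens = 0
    total_seconds = 0.0
    for _ in range(runs):
        start = time.perf_counter()
        tokens = generate(prompt, max_tokens)
        total_seconds += time.perf_counter() - start
        total_tokens += len(tokens)
    return total_tokens / total_seconds


def fake_generate(prompt: str, max_tokens: int) -> list[str]:
    """Stand-in for a real model call (hypothetical, for illustration only)."""
    time.sleep(0.01)
    return ["tok"] * max_tokens


if __name__ == "__main__":
    rate = tokens_per_second(fake_generate, "Hello", max_tokens=64)
    print(f"{rate:.0f} tokens/second")
```

This helper times the whole call, so prompt processing is included. A more careful measurement would time prompt processing and token generation separately and compare output quality alongside speed.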

Q: Can I run Llama2 7B on other Apple devices?

A: Yes, you can run Llama2 7B on other Apple devices, but performance will vary with the processor. It's like racing your car on different tracks: each track presents unique challenges!

Q: What are the advantages of running LLMs locally?

A: Running LLMs locally offers benefits like:

- Faster Processing: Eliminates latency associated with cloud-based models.

- Privacy: Keeps data secure and local.

- Reduced Costs: No need to pay for cloud services.

Keywords

Apple M2 Pro, Llama2 7B, LLM, Token Generation Speed, Quantization, F16, Q8_0, Q4_0, Performance, Optimization, Text Generation, Summarization, Chatbots, Conversational AI, Text Classification, Sentiment Analysis, Edge Computing, Hardware Acceleration, Model Pruning