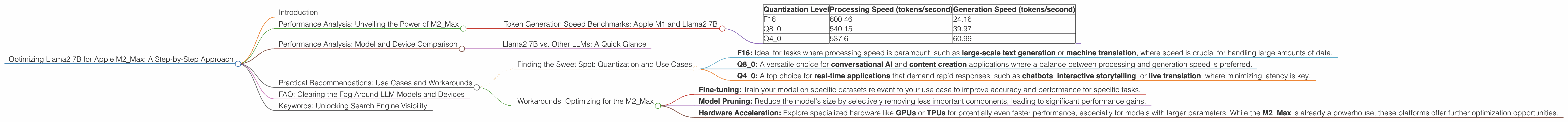

Optimizing Llama2 7B for Apple M2 Max: A Step by Step Approach

Introduction

The world of large language models (LLMs) is exploding, with new models emerging daily. These LLMs, like the mighty Llama2 7B, offer incredible capabilities: generating text, translating languages, writing different kinds of creative content, and answering your questions in an informative way. But harnessing the power of these models requires a bit of technical prowess.

This guide dives into the practical aspects of running Llama2 7B on an Apple M2 Max chip, focusing on performance optimization, a crucial concern for developers and enthusiasts who want to get the most out of their hardware. We'll look at quantization, token generation speed, and practical use cases to help you make the most of Llama2 7B on your M2 Max.

Performance Analysis: Unveiling the Power of the M2 Max

Token Generation Speed Benchmarks: Apple M2 Max and Llama2 7B

The bedrock of any LLM's performance is its token speed. Two figures matter here: processing speed, which is how many prompt tokens the model can ingest per second, and generation speed, which is how many new tokens it can produce per second. Generation speed is the one that reflects how quickly the model appears to "think" and write. Let's explore how different quantization levels affect both on the M2 Max:

| Quantization Level | Processing Speed (tokens/second) | Generation Speed (tokens/second) |
|---|---|---|
| F16 | 600.46 | 24.16 |
| Q8_0 | 540.15 | 39.97 |
| Q4_0 | 537.60 | 60.99 |

Note: These numbers are based on the benchmark data provided.

Observations:

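- F16 (half-precision floating point): While offering the highest processing speed, it has the lowest generation speed. A likely reason is that generating each token requires reading all of the model's weights from memory, and F16 weights take two bytes each, so there is more data to move per token than with the quantized formats.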

- Q8_0 (8-bit quantization): A happy medium! Q8_0 gives up about 10% of F16's processing speed (540.15 vs. 600.46 tokens/second) but generates about 65% faster (39.97 vs. 24.16 tokens/second), making it a strong contender for practical applications.

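- Q4_0 (4-bit quantization): Q4_0 boasts the highest generation speed, about 2.5 times that of F16, with a processing speed nearly identical to Q8_0. It shows the trade-off most clearly: a little prompt processing speed is exchanged for much faster generation.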

Think of it this way: F16 is like a car with a high top speed (processing speed) that takes forever to pull away from a stop (generation speed). Q4_0 is like a car that might not have the highest top speed, but it accelerates quickly and gets going, offering a more responsive experience.
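To make the comparison concrete, here is a small Python sketch that expresses each quantization level's speed as a multiple of the F16 figure. The numbers are copied from the table above; the function name `relative_to_f16` is just an illustrative choice.

```python
# Benchmark figures copied from the table above (tokens/second on the M2 Max).
BENCHMARKS = {
    "F16": {"processing": 600.46, "generation": 24.16},
    "Q8_0": {"processing": 540.15, "generation": 39.97},
    "Q4_0": {"processing": 537.60, "generation": 60.99},
}


def relative_to_f16(metric: str) -> dict[str, float]:
    """Return each level's speed for `metric` as a multiple of the F16 figure."""
    baseline = BENCHMARKS["F16"][metric]
    return {level: row[metric] / baseline for level, row in BENCHMARKS.items()}


if __name__ == "__main__":
    for metric in ("processing", "generation"):
        for level, ratio in relative_to_f16(metric).items():
            print(f"{metric:>10} {level:<5} {ratio:.2f}x of F16")
```

On these figures, Q4_0 generates roughly 2.5 times as fast as F16 while processing prompts at about 90% of F16's rate.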

Performance Analysis: Model and Device Comparison

Llama2 7B vs. Other LLMs: A Quick Glance

Unfortunately, we don't have benchmark data for other LLMs on the M2 Max, so this article sticks to Llama2 7B.

Remember: Performance can vary greatly depending on the model's architecture, task, and the specific device.

Practical Recommendations: Use Cases and Workarounds

Finding the Sweet Spot: Quantization and Use Cases

- F16: Best suited to tasks where prompt processing dominates and the output is short, such as classifying or scoring long documents, or where you want to avoid any quantization loss. For long outputs, its low generation speed makes it the slowest of the three.

- Q8_0: A versatile choice for conversational AI and content creation applications where a balance between processing and generation speed is preferred.

- Q4_0: A top choice for real-time applications that demand rapid responses, such as chatbots, interactive storytelling, or live translation, where minimizing latency is key.
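One way to check these recommendations against your own workload is to estimate the end-to-end time for a request from the two benchmark columns. The sketch below assumes time is simply prompt tokens divided by processing speed plus output tokens divided by generation speed; it ignores model loading and any slowdown at long context lengths, and the workload sizes are made-up examples.

```python
# Benchmark figures from the table earlier in this article:
# (processing tokens/second, generation tokens/second) on the M2 Max.
BENCHMARKS = {
    "F16": (600.46, 24.16),
    "Q8_0": (540.15, 39.97),
    "Q4_0": (537.60, 60.99),
}


def estimated_seconds(level: str, prompt_tokens: int, output_tokens: int) -> float:
    """Rough request time: prompt ingestion time plus generation time."""
    processing, generation = BENCHMARKS[level]
    return prompt_tokens / processing + output_tokens / generation


def fastest_level(prompt_tokens: int, output_tokens: int) -> str:
    """Return the quantization level with the lowest estimated request time."""
    return min(
        BENCHMARKS,
        key=lambda level: estimated_seconds(level, prompt_tokens, output_tokens),
    )


if __name__ == "__main__":
    # Hypothetical workloads: a chat turn and a long-document classification.
    for name, prompt, output in [("chat", 200, 300), ("classify", 4000, 10)]:
        best = fastest_level(prompt, output)
        print(f"{name}: {best} at ~{estimated_seconds(best, prompt, output):.1f} s")
```

Under this simple model, a chat-style request favors Q4_0, while F16 only comes out ahead when the prompt is long and the output is very short.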

Workarounds: Optimizing for the M2 Max

- Fine-tuning: Train your model on datasets relevant to your use case to improve accuracy on those tasks. Note that fine-tuning changes output quality, not token speed.

- Model Pruning: Reduce the model's size by selectively removing less important components. A smaller model can run faster and use less memory, though usually at some cost in accuracy.

- Hardware Acceleration: Explore specialized hardware like dedicated GPUs or TPUs for potentially even faster performance, especially for models with larger parameter counts. While the M2 Max is already a powerhouse, these platforms offer further optimization opportunities.

FAQ: Clearing the Fog Around LLM Models and Devices

Q: What is quantization and why is it important?

A: Quantization is a way of compressing a model's weights by storing them with fewer bits. Imagine you have a photo with millions of colors. Quantization takes those millions of colors and groups them into a smaller set of colors, making the file smaller at the cost of some fine detail. For a model, this reduces memory usage and the amount of data read per token, which is why the quantized versions generate text faster.
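As a rough illustration of the idea, the following Python sketch quantizes one block of weights to 8-bit integers with a single shared scale, then reconstructs approximate values. It assumes a simple symmetric scheme; real formats such as Q8_0 and Q4_0 differ in details like block size and how the scale is stored, so treat this as a teaching sketch rather than the actual format.

```python
def quantize_block(weights: list[float]) -> tuple[float, list[int]]:
    """Symmetric 8-bit quantization: one float scale plus integer codes in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    # Avoid dividing by zero when every weight in the block is zero.
    scale = max_abs / 127 if max_abs > 0 else 1.0
    codes = [round(w / scale) for w in weights]
    return scale, codes


def dequantize_block(scale: float, codes: list[int]) -> list[float]:
    """Reconstruct approximate weights from the scale and integer codes."""
    return [scale * c for c in codes]


if __name__ == "__main__":
    weights = [0.12, -0.50, 0.33, 0.0]  # made-up example values
    scale, codes = quantize_block(weights)
    restored = dequantize_block(scale, codes)
    for original, code, approx in zip(weights, codes, restored):
        print(f"{original:+.4f} -> code {code:+4d} -> {approx:+.4f}")
```

Each weight is stored as a small integer instead of a 16-bit float, and the reconstruction error per weight is at most half of the scale.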

Q: Will the M2 Max be able to run larger LLMs like Llama2 70B?

A: It depends! Whether it runs at all comes down mainly to whether the quantized weights fit in your machine's unified memory. If they do, expect much lower token speeds than with the 7B model, since there are ten times as many weights to process for every token. GPU resources and the efficiency of the model's architecture also play a role in determining performance.
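A back-of-the-envelope check is to estimate the size of the weights from the parameter count and the bits stored per weight. The sketch below makes several assumptions that are not from the benchmark data: roughly 16, 8.5, and 4.5 bits per weight for F16, Q8_0, and Q4_0 (the extra half bit approximating per-block scale overhead), nominal parameter counts of 7 and 70 billion, a hypothetical 32 GB machine, and a hypothetical rule that only about 75% of memory is available to the model. It also ignores the context cache and other runtime memory.

```python
# Assumed bits stored per weight, including approximate per-block overhead.
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}


def weight_gigabytes(parameters: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights in gigabytes (10^9 bytes)."""
    return parameters * bits_per_weight / 8 / 1e9


def fits_in_memory(
    parameters: float,
    bits_per_weight: float,
    memory_gb: float,
    usable_fraction: float = 0.75,  # hypothetical headroom for the OS and runtime
) -> bool:
    """Return True if the weights fit within the usable share of memory."""
    return weight_gigabytes(parameters, bits_per_weight) <= memory_gb * usable_fraction


if __name__ == "__main__":
    memory_gb = 32  # illustrative figure; substitute your own machine's memory
    for label, parameters in [("7B", 7e9), ("70B", 70e9)]:
        for level, bits in BITS_PER_WEIGHT.items():
            size = weight_gigabytes(parameters, bits)
            verdict = "fits" if fits_in_memory(parameters, bits, memory_gb) else "too large"
            print(f"{label} {level:<5} ~{size:6.1f} GB  {verdict} in {memory_gb} GB")
```

Under these assumptions, every 7B variant fits comfortably, while a 70B model needs aggressive quantization and a machine with considerably more memory.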

Q: Can I optimize Llama2 7B for other devices like the M1 or Intel CPUs?

A: You certainly can! The principles of quantization, model pruning, and fine-tuning apply across different hardware platforms. However, the optimal settings may differ, and you might need to experiment with different configurations for each device to find the best performance.

Keywords: Unlocking Search Engine Visibility

Apple M2 Max, Llama2 7B, Token Generation Speed, Quantization, F16, Q8_0, Q4_0, Model Pruning, Fine-tuning, LLM Performance Optimization, Device-Specific Optimization, Conversational AI, Content Creation, Real-time Applications, GPU Acceleration, TPU Acceleration, Inference Speed, Processing Speed, Local LLM, On-Device LLM, LLM Benchmarking, Text Generation, Language Translation.