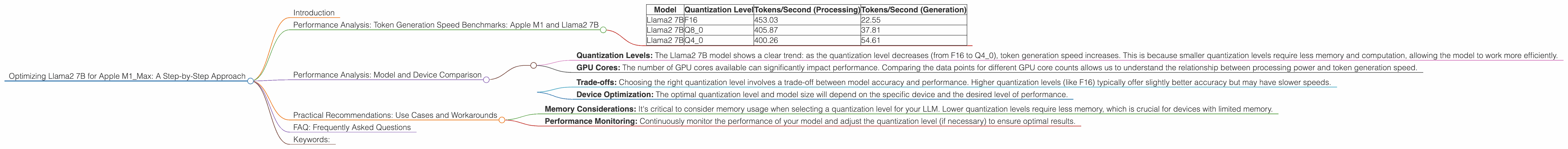

Optimizing Llama2 7B for Apple M1 Max: A Step-by-Step Approach

Introduction

The world of Large Language Models (LLMs) is buzzing with excitement. These models are changing how we interact with information and technology. But running LLMs locally requires careful optimization, especially on powerful yet resource-constrained devices like the Apple M1 Max.

This article dives into the nitty-gritty of optimizing the Llama2 7B model for the M1 Max chip, providing a detailed guide for developers and enthusiasts who want to get the most out of a local setup. We'll explore the performance characteristics of different quantization levels, analyze throughput in terms of tokens per second, and offer practical recommendations for real-world applications. So buckle up, it's time for a deep dive into local LLM optimization!

Performance Analysis: Token Generation Speed Benchmarks: Apple M1 Max and Llama2 7B

The magic of LLMs lies in their ability to generate text, translate languages, write different kinds of creative content, and answer your questions in an informative way. But how efficiently do they operate? To understand this, we need to measure throughput in tokens per second, where a token is a word or part of a word. The benchmarks report two figures: processing speed (how quickly the model reads the prompt) and generation speed (how quickly it produces new tokens).
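As a rough illustration of how such a figure is computed, throughput is simply the number of tokens divided by the elapsed wall-clock time. The sketch below assumes a hypothetical `generate(prompt)` callable that returns a list of tokens; it stands in for whatever runtime you use and is not a real library API.

```python
import time


def tokens_per_second(num_tokens, elapsed_seconds):
    """Throughput = tokens handled / wall-clock seconds."""
    if elapsed_seconds <= 0:
        raise ValueError("elapsed_seconds must be positive")
    return num_tokens / elapsed_seconds


def time_generation(generate, prompt):
    """Time one call to a hypothetical generate(prompt) -> list of tokens."""
    start = time.perf_counter()
    tokens = generate(prompt)  # assumption: returns the generated tokens as a list
    elapsed = time.perf_counter() - start
    return tokens_per_second(len(tokens), elapsed)
```

In practice you would average over several runs, since a single timing can be noisy.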

| Model | Quantization Level | Tokens/Second (Processing) | Tokens/Second (Generation) |
|---|---|---|---|
| Llama2 7B | F16 | 453.03 | 22.55 |
| Llama2 7B | Q8_0 | 405.87 | 37.81 |
| Llama2 7B | Q4_0 | 400.26 | 54.61 |

Remember: These benchmark results are for an M1 Max with 24 GPU cores. Performance may differ on other configurations of the M1 Max.
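To make the numbers in the table easier to compare, here is a small sketch that expresses each quantization level relative to F16. The figures are copied from the table; the dictionary layout and function name are just illustrative choices.

```python
# Benchmark figures from the table above (tokens/second, 24-core M1 Max).
BENCHMARKS = {
    "F16": {"processing": 453.03, "generation": 22.55},
    "Q8_0": {"processing": 405.87, "generation": 37.81},
    "Q4_0": {"processing": 400.26, "generation": 54.61},
}


def relative_to_f16(metric):
    """Return each level's throughput as a multiple of the F16 figure."""
    base = BENCHMARKS["F16"][metric]
    return {level: round(row[metric] / base, 2) for level, row in BENCHMARKS.items()}


print(relative_to_f16("generation"))  # {'F16': 1.0, 'Q8_0': 1.68, 'Q4_0': 2.42}
print(relative_to_f16("processing"))  # {'F16': 1.0, 'Q8_0': 0.9, 'Q4_0': 0.88}
```

In other words, Q4_0 generates tokens roughly 2.4 times as fast as F16, while prompt processing is about 12% slower.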

Performance Analysis: Model and Device Comparison

It's important to remember that the Apple M1 Max is a powerful chip, but it's not the only game in town. Comparing performance across different device architectures can be tricky, but we can draw some general insights by looking at the available data.

Data Analysis:

The M1 Max is a multi-core chip; the configuration benchmarked here has 24 GPU cores. Llama2 7B, for its part, can be run at several precision levels: F16 (16-bit floating point) and the quantized Q8_0 and Q4_0 formats. Quantization is a technique that reduces the size of the LLM by storing its weights in smaller, lower-precision number formats.
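To give a feel for what "converting numbers into smaller formats" means, here is a toy sketch of symmetric block quantization: a group of weights shares one scale factor, and each weight is stored as a small integer. This is a simplified illustration under my own assumptions, not the exact layout used by the Q8_0 or Q4_0 formats.

```python
def quantize_block(values, bits=4):
    """Map floats to small signed integers that share a single scale factor."""
    qmax = 2 ** (bits - 1) - 1               # largest integer magnitude, 7 for 4 bits
    max_abs = max(abs(v) for v in values)
    scale = max_abs / qmax if max_abs else 1.0
    quants = [round(v / scale) for v in values]
    return scale, quants


def dequantize_block(scale, quants):
    """Recover approximate floats from the stored integers."""
    return [q * scale for q in quants]


weights = [0.7, -0.3, 0.12, 0.0]
scale, quants = quantize_block(weights, bits=4)
restored = dequantize_block(scale, quants)
print(quants)    # small integers in the range -7..7
print(restored)  # close to the original weights, but not identical
```

The gap between `weights` and `restored` is the accuracy cost of quantization: fewer bits mean a coarser grid and larger rounding errors.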

Key Observations:

- Quantization Levels: The Llama2 7B model shows a clear trend: as precision drops from F16 to Q8_0 to Q4_0, token generation speed rises from 22.55 to 37.81 to 54.61 tokens/second. Lower-precision weights take up less memory, so less data has to be read for each generated token. Prompt processing moves the other way, but only modestly: from 453.03 tokens/second at F16 to 400.26 at Q4_0.

- GPU Cores: The number of GPU cores available can significantly impact performance. The figures above cover only the 24-core configuration, so they cannot show how speed scales with core count; that would require comparable results from other GPU core counts.

Practical Implications:

- Trade-offs: Choosing the right quantization level involves a trade-off between model accuracy and performance. Higher-precision formats (like F16) typically offer slightly better accuracy but generate tokens more slowly.

- Device Optimization: The optimal quantization level and model size will depend on the specific device and the desired level of performance.
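One way to turn this trade-off into a decision rule is to pick the highest-precision format that still meets a minimum generation speed. The sketch below uses the generation figures from the benchmark table; the function name and the idea of a fixed speed target are illustrative assumptions, not something prescribed by the benchmarks.

```python
# Generation speed in tokens/second from the benchmark table (24-core M1 Max).
GENERATION_TPS = {"F16": 22.55, "Q8_0": 37.81, "Q4_0": 54.61}

# Ordered from highest precision to lowest.
PRECISION_ORDER = ["F16", "Q8_0", "Q4_0"]


def pick_level(min_tokens_per_second):
    """Return the highest-precision level that meets the speed target, or None."""
    for level in PRECISION_ORDER:
        if GENERATION_TPS[level] >= min_tokens_per_second:
            return level
    return None  # no benchmarked level is fast enough


print(pick_level(20))  # F16
print(pick_level(30))  # Q8_0
print(pick_level(50))  # Q4_0
```

If the function returns `None`, the target is out of reach for this model on this hardware, and a smaller model would be the next thing to try.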

Practical Recommendations: Use Cases and Workarounds

Now that we've delved into the world of token generation speeds and quantization levels, it's time to put this knowledge into practice. Let's explore some real-world use cases and their corresponding optimization strategies.

1. Text Summarization:

Imagine you're dealing with a mountain of documents and need a quick digest of the key information. By using your optimized Llama2 7B model on the M1 Max, you can efficiently summarize the content, saving you precious time.

Recommendation: The Q8_0 quantization level provides a good balance between speed and accuracy for text summarization use cases.

2. Chatbots:

Chatbots are becoming increasingly popular, engaging users in natural conversations. For a smooth and responsive chatbot experience, you need a fast and efficient LLM.

Recommendation: You can use the Q4_0 quantization level to prioritize speed for chatbots, while maintaining reasonable accuracy.
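To see what these speeds mean for responsiveness, here is a back-of-the-envelope estimate of how long one chat turn takes: time to process the prompt plus time to generate the reply. It uses the benchmark figures from earlier in the article and assumes throughput stays constant regardless of prompt length, which is a simplification.

```python
# Benchmark figures from the table earlier in the article (tokens/second).
BENCHMARKS = {
    "F16": {"processing": 453.03, "generation": 22.55},
    "Q8_0": {"processing": 405.87, "generation": 37.81},
    "Q4_0": {"processing": 400.26, "generation": 54.61},
}


def estimated_latency_seconds(level, prompt_tokens, reply_tokens):
    """Rough time for one turn: read the prompt, then generate the reply."""
    row = BENCHMARKS[level]
    return prompt_tokens / row["processing"] + reply_tokens / row["generation"]


# Example turn: a 400-token prompt and a 200-token reply (illustrative sizes).
for level in BENCHMARKS:
    seconds = estimated_latency_seconds(level, prompt_tokens=400, reply_tokens=200)
    print(f"{level}: {seconds:.1f} s")
```

For this example the estimate comes out at roughly 4.7 seconds with Q4_0 versus roughly 9.8 seconds with F16, and generation time dominates in both cases.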

3. Creative Writing:

Let's get creative! LLMs are incredibly powerful for generating different types of creative content like poems, stories, and even scripts.

Recommendation: Opt for higher precision (F16) to achieve a slightly higher level of accuracy and finesse in your creative writing outputs.

4. Language Translation:

Need to translate a document or website on the fly? Your local LLM can do the job!

Recommendation: Consider using the Q4_0 quantization level for efficient translation, especially when dealing with longer texts.

5. Code Generation:

Many developers are exploring LLMs for code generation and debugging.

Recommendation: Use F16 for code generation when accuracy matters most, especially for tasks involving complex code structures. Keep in mind that it is the slowest option in the benchmarks above (22.55 tokens/second), so step down to Q8_0 if responsiveness becomes a problem.

Important Considerations:

- Memory Considerations: It's critical to consider memory usage when selecting a quantization level for your LLM. Lower-bit formats such as Q4_0 require less memory, which is crucial for devices with limited memory.

- Performance Monitoring: Continuously monitor the performance of your model and adjust the quantization level (if necessary) to ensure optimal results.
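As a rough guide to the memory side of this, the sketch below estimates the size of the model weights at each level. The inputs are my assumptions rather than figures from the benchmarks: about 6.74 billion parameters for Llama2 7B, and 16, 8.5, and 4.5 bits per weight for F16, Q8_0, and Q4_0 (the block formats store a scale alongside each group of weights, hence the extra half bit). It covers weights only, not the context cache or runtime overhead.

```python
# Assumptions, not taken from the benchmark table:
PARAMS = 6.74e9  # approximate parameter count of Llama2 7B
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}


def weight_size_gib(level, params=PARAMS):
    """Approximate size of the model weights in GiB (weights only)."""
    total_bytes = params * BITS_PER_WEIGHT[level] / 8
    return total_bytes / 2**30


for level in BITS_PER_WEIGHT:
    print(f"{level}: about {weight_size_gib(level):.1f} GiB")
```

Under these assumptions the weights take about 12.6 GiB at F16, 6.7 GiB at Q8_0, and 3.5 GiB at Q4_0. Real files can differ slightly, so treat these as estimates.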

FAQ: Frequently Asked Questions

Q: What are LLMs?

A: LLMs are powerful AI models trained on vast amounts of text data, enabling them to understand and generate human-like text. They can perform tasks like translation, summarization, and even creative writing.

Q: What is quantization?

A: Quantization is a technique that reduces the size of an LLM by converting its numbers into smaller formats. This process can improve performance by reducing memory usage and computation, but it can also slightly decrease the model's accuracy.

Q: Why is token generation speed important?

A: Token generation speed determines how quickly the LLM can generate text. It's crucial for applications that require real-time responses or fast processing, such as chatbots and language translation tools.

Q: How do I choose the right quantization level for my LLM?

A: The optimal quantization level depends on your application's specific requirements for accuracy and speed. For tasks requiring high accuracy, consider using F16. For speed-critical applications, choose Q8_0 or Q4_0.

Q: Can I run LLMs on smaller devices?

A: Yes, you can! LLMs are constantly evolving, and there are now models with smaller sizes and reduced memory footprints suitable for running on less powerful devices.

Keywords:

Llama2 7B, Apple M1 Max, LLM, token generation speed, quantization, F16, Q8_0, Q4_0, performance analysis, optimization, benchmarks, use cases, text summarization, chatbots, creative writing, language translation, code generation, memory considerations, performance monitoring.