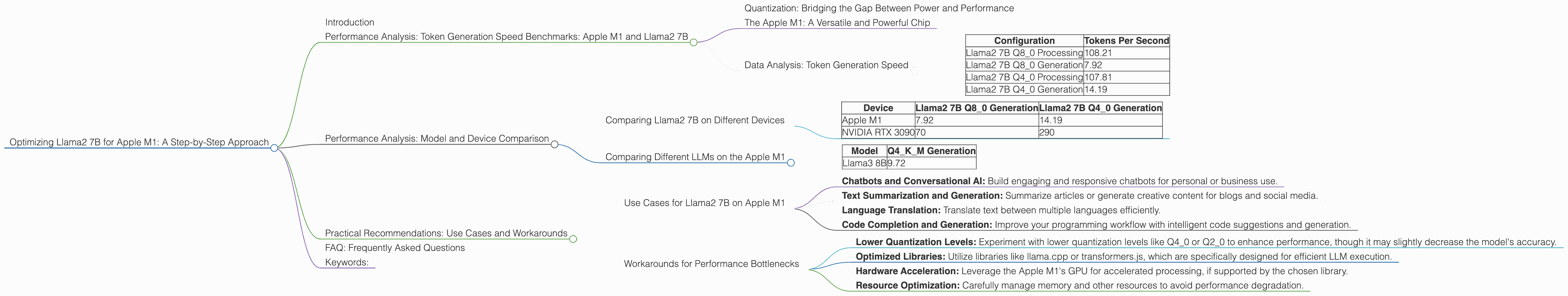

Optimizing Llama2 7B for Apple M1: A Step by Step Approach

Introduction

The rise of Large Language Models (LLMs) has reshaped artificial intelligence. These models can generate human-like text, translate languages, produce creative writing, and answer questions. But running them locally can be a challenge, especially on modest hardware such as a machine built around the Apple M1 chip.

This article delves into the performance of the Llama2 7B model on the Apple M1 processor, exploring various quantization levels and their impact on token generation speed. We'll provide practical recommendations for optimizing your setup and tackle common challenges faced by developers who want to harness the power of LLMs on their local machine.

Performance Analysis: Token Generation Speed Benchmarks: Apple M1 and Llama2 7B

The token generation speed is a crucial metric for evaluating LLM performance. It tells us how quickly the model can process text and generate new tokens (words or subwords). We'll analyze the Llama2 7B model's performance across different quantization levels on the Apple M1 processor.
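As a rough illustration of how a tokens-per-second figure can be obtained, here is a minimal timing sketch. The `generate` callable and the `fake_generate` stand-in are hypothetical placeholders, not part of any benchmark tool used for the numbers in this article; a real measurement would wrap an actual model call.

```python
import time
from typing import Callable


def tokens_per_second(
    generate: Callable[[str, int], int], prompt: str, max_tokens: int
) -> float:
    """Time one call to `generate` and return generated tokens per second.

    `generate` is a hypothetical callable: it runs the model on `prompt`
    and returns the number of tokens it actually produced.
    """
    start = time.perf_counter()
    produced = generate(prompt, max_tokens)
    elapsed = time.perf_counter() - start
    if elapsed <= 0:
        raise ValueError("elapsed time must be positive")
    return produced / elapsed


def fake_generate(prompt: str, max_tokens: int) -> int:
    """Stand-in for a real model: sleeps about 1 ms per requested token."""
    time.sleep(0.001 * max_tokens)
    return max_tokens
```

Calling `tokens_per_second(fake_generate, "Hello", 50)` exercises the harness without a model. For stable numbers on real hardware you would likely average several runs and discard the first as warm-up.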

Quantization: Bridging the Gap Between Power and Performance

Quantization reduces the size of a model by storing its weights at lower numerical precision, while preserving most of its accuracy. Think of it like converting a high-resolution image to a lower-resolution version. The quality might slightly decrease, but the file size becomes significantly smaller. In the case of LLMs, this means the model fits into less memory and less data has to be read for each token, enabling faster processing on devices with limited resources like the Apple M1.
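To make the idea concrete, here is a simplified sketch of block-wise 8-bit quantization in the spirit of llama.cpp's Q8_0 format, which groups weights into blocks of 32 that share one scale. It is an illustration under assumptions, not the library's implementation: the function names are made up for this example, and the scale is kept as a Python float rather than a 16-bit value.

```python
from typing import List, Tuple

BLOCK_SIZE = 32  # weights per block, matching the Q8_0 block size


def quantize_block_q8(weights: List[float]) -> Tuple[float, List[int]]:
    """Map one block of floats to (scale, int8 codes) using symmetric rounding."""
    max_abs = max(abs(w) for w in weights)
    # One shared scale per block; fall back to 1.0 for an all-zero block.
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    codes = [max(-127, min(127, round(w / scale))) for w in weights]
    return scale, codes


def dequantize_block(scale: float, codes: List[int]) -> List[float]:
    """Recover approximate floats from the scale and the integer codes."""
    return [scale * c for c in codes]


# Example block: 32 made-up weights between -1 and 1.
example_weights = [((-1) ** i) * i / 31 for i in range(BLOCK_SIZE)]
example_scale, example_codes = quantize_block_q8(example_weights)
restored = dequantize_block(example_scale, example_codes)
max_error = max(abs(a - b) for a, b in zip(example_weights, restored))
```

Each restored weight differs from the original by at most half a quantization step (`example_scale / 2`), which is the "slightly lower quality" in the image analogy. A 4-bit format follows the same pattern with far fewer integer levels per weight, so the step and the error are larger.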

The Apple M1: A Versatile and Powerful Chip

The Apple M1 chip is a powerhouse for its size, designed to deliver high performance with low power consumption. It features a custom GPU and a powerful neural engine, making it a suitable candidate for running LLMs locally.

Data Analysis: Token Generation Speed

Here's a breakdown of the Llama2 7B model's performance on the Apple M1, measured in tokens per second:

| Configuration | Tokens per second |
|---|---|
| Llama2 7B Q8_0 Processing | 108.21 |
| Llama2 7B Q8_0 Generation | 7.92 |
| Llama2 7B Q4_0 Processing | 107.81 |
| Llama2 7B Q4_0 Generation | 14.19 |

Key Observations:

- Q8_0 vs. Q4_0 Quantization: Prompt processing speed is nearly identical at both levels (108.21 vs. 107.81 tokens/s), but Q4_0 generates tokens about 1.8x faster than Q8_0 (14.19 vs. 7.92 tokens/s).

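- Processing vs. Generation: Generation is far slower than prompt processing (roughly 8 to 14 tokens/s versus about 108 tokens/s). Prompt tokens can be processed together as a batch, whereas generation produces one token at a time, and each new token requires another pass over the model weights. Generation therefore tends to be limited by memory bandwidth rather than raw compute, which also helps explain why the smaller Q4_0 weights generate faster than Q8_0.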
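The benchmark figures above can be turned into rough end-to-end estimates. The sketch below uses only the four measured speeds from the table; the helper names and the example prompt and output lengths are arbitrary choices for illustration, and real response times will vary with context length and system load.

```python
# Measured speeds from the table above, in tokens per second.
BENCHMARKS = {
    "Q8_0": {"processing": 108.21, "generation": 7.92},
    "Q4_0": {"processing": 107.81, "generation": 14.19},
}


def response_time_seconds(quant: str, prompt_tokens: int, output_tokens: int) -> float:
    """Estimate total time: read the prompt, then generate the output."""
    speeds = BENCHMARKS[quant]
    prompt_time = prompt_tokens / speeds["processing"]
    generation_time = output_tokens / speeds["generation"]
    return prompt_time + generation_time


def generation_speedup(fast: str, slow: str) -> float:
    """Ratio of generation speeds between two quantization levels."""
    return BENCHMARKS[fast]["generation"] / BENCHMARKS[slow]["generation"]


# Example: a 512-token prompt followed by a 256-token answer.
q4_seconds = response_time_seconds("Q4_0", 512, 256)
q8_seconds = response_time_seconds("Q8_0", 512, 256)
```

For this example the estimate comes to about 23 seconds with Q4_0 and about 37 seconds with Q8_0, with generation accounting for most of the time in both cases.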

Note: F16 (unquantized half-precision) results for Llama2 7B are not available for the Apple M1.

Performance Analysis: Model and Device Comparison

While the Apple M1 proves capable of running Llama2 7B, let's compare its performance with other devices and models to get a broader perspective.

Comparing Llama2 7B on Different Devices

Let's compare the Apple M1's performance with a more powerful GPU like the NVIDIA RTX 3090:

| Device | Llama2 7B Q8_0 Generation (tokens/s) | Llama2 7B Q4_0 Generation (tokens/s) |
|---|---|---|
| Apple M1 | 7.92 | 14.19 |
| NVIDIA RTX 3090 | 70 | 290 |

As expected, the NVIDIA RTX 3090 significantly outperforms the Apple M1: generation is roughly 9x faster at Q8_0 and about 20x faster at Q4_0. A discrete GPU of this class is the better fit for larger models and throughput-sensitive tasks.

Comparing Different LLMs on the Apple M1

Let's see how the Llama2 7B compares to another popular LLM, Llama3 8B, on the same device:

| Model | Q4_K_M Generation (tokens/s) |
|---|---|
| Llama3 8B | 9.72 |

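Llama3 8B generates 9.72 tokens/s at Q4_K_M on the Apple M1, which is slower than Llama2 7B at Q4_0 (14.19 tokens/s). The two runs use different quantization formats and Llama3 8B is the larger model, so this is not a like-for-like comparison, but it shows that model size and architecture affect throughput even on the same hardware.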

Practical Recommendations: Use Cases and Workarounds

The analysis shows that the Apple M1 can run Llama2 7B at usable speeds with Q8_0 and, especially, Q4_0 quantization. It is a reasonable choice for applications that value low power consumption and efficient resource use over raw throughput.

Use Cases for Llama2 7B on Apple M1

- Chatbots and Conversational AI: Build engaging and responsive chatbots for personal or business use.

- Text Summarization and Generation: Summarize articles or generate creative content for blogs and social media.

- Language Translation: Translate text between multiple languages efficiently.

- Code Completion and Generation: Improve your programming workflow with intelligent code suggestions and generation.

Workarounds for Performance Bottlenecks

- Lower-Bit Quantization: If Q8_0 is too slow, experiment with a lower-bit format such as Q4_0, or a 2-bit format such as llama.cpp's Q2_K. Expect faster generation and a smaller memory footprint, at some cost in accuracy.

- Optimized Libraries: Utilize libraries like llama.cpp or transformers.js, which are specifically designed for efficient LLM execution.

- Hardware Acceleration: Leverage the Apple M1's GPU for accelerated processing, if supported by the chosen library.

- Resource Optimization: Carefully manage memory and other resources to avoid performance degradation.
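As one way to combine these workarounds, the sketch below loads a quantized model through the llama-cpp-python bindings for llama.cpp with GPU offload enabled. It assumes that package is installed with Metal support and that you already have a GGUF model file. The helper names are made up for this example, and the parameter values are starting points rather than tuned settings.

```python
from typing import Any, Dict


def build_llama_kwargs(
    model_path: str, use_gpu: bool = True, context_tokens: int = 2048
) -> Dict[str, Any]:
    """Collect constructor arguments for llama_cpp.Llama.

    n_gpu_layers=-1 asks llama-cpp-python to offload all layers to the GPU;
    0 keeps everything on the CPU. A smaller n_ctx reduces memory use.
    """
    return {
        "model_path": model_path,
        "n_gpu_layers": -1 if use_gpu else 0,
        "n_ctx": context_tokens,
    }


def load_model(model_path: str, use_gpu: bool = True):
    """Load the model; llama_cpp is imported lazily so this file imports without it."""
    from llama_cpp import Llama  # assumption: llama-cpp-python is installed

    return Llama(**build_llama_kwargs(model_path, use_gpu))
```

With a model on disk, usage would look something like `llm = load_model("path/to/llama-2-7b.Q4_0.gguf")` followed by `llm("Summarize: ...", max_tokens=64)`, where the file path is a placeholder for wherever your model lives. Comparing `use_gpu=True` against `use_gpu=False` on your own machine is a quick way to check whether offload actually helps.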

FAQ: Frequently Asked Questions

Q: What is quantization, and how does it work?

A: Quantization is a technique that reduces the precision of model weights and activations, resulting in a smaller file size and faster processing. This is like converting a high-resolution image to a lower-resolution version. The quality might be slightly less, but the file size becomes significantly smaller, allowing the model to fit in limited memory spaces.

Q: What are the trade-offs between different quantization levels?

A: Formats with fewer bits per weight, like Q4_0, produce a smaller model and faster generation (14.19 vs. 7.92 tokens/s for Q8_0 in the benchmarks above) but may lose some accuracy. Formats with more bits per weight, like Q8_0 or unquantized F16, stay closer to the original model's accuracy but are larger and slower to generate.

Q: How can I choose the right quantization level for my application?

A: Consider the trade-off between performance and accuracy. If speed or memory is the constraint, opt for a lower-bit format such as Q4_0. If accuracy is critical and the model still fits in memory, choose a higher-bit format such as Q8_0.
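A back-of-the-envelope size estimate can guide this choice. The sketch below assumes about 6.74 billion parameters for Llama2 7B and the llama.cpp block layouts, where Q8_0 costs about 8.5 bits per weight and Q4_0 about 4.5 bits per weight once the per-block scale is included. It covers weights only, so the memory budget you pass in should leave room for the context cache and the rest of the system. The function names are illustrative.

```python
from typing import Optional

LLAMA2_7B_PARAMS = 6.74e9  # assumption: approximate parameter count

# Approximate storage cost per weight, including per-block scales.
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}


def weights_size_gb(n_params: float, fmt: str) -> float:
    """Estimated size of the weights in gigabytes (10**9 bytes)."""
    return n_params * BITS_PER_WEIGHT[fmt] / 8 / 1e9


def pick_format(n_params: float, budget_gb: float) -> Optional[str]:
    """Return the highest-precision format whose weights fit in the budget."""
    by_precision = sorted(BITS_PER_WEIGHT, key=BITS_PER_WEIGHT.get, reverse=True)
    for fmt in by_precision:
        if weights_size_gb(n_params, fmt) <= budget_gb:
            return fmt
    return None  # nothing fits; consider a smaller model
```

Under these assumptions the weights come to roughly 13.5 GB at F16, 7.2 GB at Q8_0, and 3.8 GB at Q4_0, so a budget of 6 GB for weights would select Q4_0.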

Q: Where can I find resources to learn more about LLMs and quantization?

A: Explore resources like the Hugging Face website (https://huggingface.co/), the Transformers library documentation (https://huggingface.co/docs/transformers/), and online articles and tutorials on LLM quantization.

Keywords:

Llama2 7B, Apple M1, LLM, token generation speed, quantization, Q8_0, Q4_0, performance analysis, benchmarks, GPU, neural engine, use case, workarounds, conversational AI, chatbots, text summarization, language translation, code completion, resources, Hugging Face, Transformers.