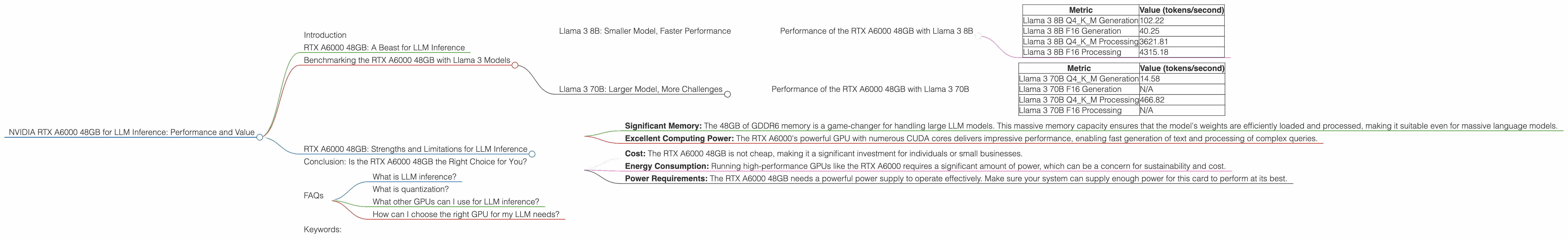

NVIDIA RTX A6000 48GB for LLM Inference: Performance and Value

Introduction

The world of large language models (LLMs) is buzzing with excitement. These powerful AI models can generate human-like text, translate languages, write creative content, and answer your questions in an informative way. But running LLMs locally can be a challenge, because they need powerful hardware with plenty of memory to handle the massive computational demands. That's where the NVIDIA RTX A6000 48GB comes in!

This article dives into the performance and value of the RTX A6000 48GB for running LLM inference locally, specifically focusing on the popular Llama 3 series. We'll explore its capabilities, analyze its performance metrics, and highlight its strengths and limitations. Buckle up, folks!

RTX A6000 48GB: A Beast for LLM Inference

The RTX A6000 48GB is a workstation graphics card aimed at professionals and power users, but it's also a beast when it comes to local LLM inference. Packed with 48GB of GDDR6 memory and 10,752 CUDA cores, it offers the horsepower and, just as importantly, the memory capacity needed to run models that simply don't fit on most consumer cards.

Benchmarking the RTX A6000 48GB with Llama 3 Models

The benchmarks below cover the Llama 3 series, developed by Meta and now among the most widely used open-weight models. We'll look at two of them: Llama 3 8B and Llama 3 70B (we'll leave Llama 2 aside for this article). All figures are throughput in tokens per second, so higher is better.

Llama 3 8B: Smaller Model, Faster Performance

Let's start with the smaller Llama 3 8B model, which is a great choice for developers and enthusiasts. It's known for its impressive performance and relatively low resource requirements.

Performance of the RTX A6000 48GB with Llama 3 8B

| Metric | Speed (tokens/second) |
|---|---|
| Llama 3 8B Q4_K_M Generation | 102.22 |
| Llama 3 8B F16 Generation | 40.25 |
| Llama 3 8B Q4_K_M Processing | 3621.81 |
| Llama 3 8B F16 Processing | 4315.18 |

Breaking Down the Numbers

- Q4_K_M Generation: This is the speed at which the model generates new text with its weights quantized to roughly 4 bits each. Q4_K_M is a llama.cpp quantization format: a 4-bit "k-quant" in its medium (M) variant. Quantization shrinks the model, so less data has to be read from memory for every generated token. Think of it as using a smaller dictionary with only the essential words needed for a conversation. The RTX A6000 48GB delivers an outstanding 102.22 tokens/second with this configuration.

- F16 Generation: This configuration keeps the weights in standard 16-bit floating point, with no quantization. The model is several times larger in memory, and generation drops to 40.25 tokens/second, roughly 2.5 times slower than Q4_K_M. That is still a respectable speed for interactive use.

- Q4_K_M Processing: Processing here refers to how fast the model reads its input (the prompt) before it starts generating. With the Q4_K_M format, the RTX A6000 works through 3621.81 prompt tokens per second.

- F16 Processing: With F16 weights, prompt processing reaches 4315.18 tokens/second, about 19% faster than Q4_K_M. So quantization speeds up generation but not prompt processing here, most likely because prompt processing is limited by compute rather than memory traffic and quantized weights have to be unpacked before use.
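To get a feel for what these numbers mean in practice, here is a minimal sketch that combines the two speeds into a rough response time. The throughput values come from the table above; the function name and the request size (a 1,000-token prompt with a 200-token answer) are made up for illustration, and real latency will also include overheads this ignores.

```python
# Throughput figures (tokens/second) copied from the Llama 3 8B table above.
# The dictionary keys are just labels for the two weight formats.
PROMPT_TPS = {"Q4_K_M": 3621.81, "F16": 4315.18}
GENERATION_TPS = {"Q4_K_M": 102.22, "F16": 40.25}


def estimate_latency_s(fmt: str, prompt_tokens: int, output_tokens: int) -> float:
    """Rough end-to-end time: prompt processing plus token generation."""
    prefill_s = prompt_tokens / PROMPT_TPS[fmt]
    decode_s = output_tokens / GENERATION_TPS[fmt]
    return prefill_s + decode_s


if __name__ == "__main__":
    # Hypothetical request: 1,000-token prompt, 200-token answer.
    for fmt in ("Q4_K_M", "F16"):
        seconds = estimate_latency_s(fmt, prompt_tokens=1000, output_tokens=200)
        print(f"{fmt}: ~{seconds:.1f} s")
```

For this hypothetical request the estimate comes out at roughly 2.2 seconds for the 4-bit model and 5.2 seconds for F16. Generation dominates in both cases, which is why the generation figure usually matters more for chat-style use.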

Llama 3 70B: Larger Model, More Challenges

The Llama 3 70B is a giant of the LLM world. This model is much larger than the 8B model, offering impressive text generation capabilities. But bigger models require significantly more resources, which puts pressure on your hardware.

Performance of the RTX A6000 48GB with Llama 3 70B

| Metric | Speed (tokens/second) |
|---|---|
| Llama 3 70B Q4_K_M Generation | 14.58 |
| Llama 3 70B F16 Generation | N/A |
| Llama 3 70B Q4_K_M Processing | 466.82 |
| Llama 3 70B F16 Processing | N/A |

Understanding the Results

- Q4_K_M Generation: The RTX A6000 can run the Llama 3 70B model in the Q4_K_M configuration, but generation drops to 14.58 tokens/second, about 7 times slower than the 8B model in the same format. Prompt processing falls by a similar factor, from 3621.81 to 466.82 tokens/second.

- F16 Generation and Processing: No F16 results are available for the Llama 3 70B model on this hardware. The most likely reason is memory: 70 billion weights at 2 bytes each come to around 140GB, far more than the card's 48GB, so the unquantized model would not fit on a single RTX A6000 at all rather than merely running slower.
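A quick back-of-the-envelope check makes the memory picture concrete. The sketch below estimates the size of the weights alone from the parameter count and the bits stored per weight. The 4.8 bits-per-weight figure for Q4_K_M is an assumed average, not an official number, and the function names are hypothetical. The key-value cache and other runtime buffers need extra room on top of this.

```python
def weight_size_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate size of the weights alone, in decimal gigabytes."""
    return params_billion * bits_per_weight / 8


def fits_in_vram(params_billion: float, bits_per_weight: float,
                 vram_gb: float = 48.0) -> bool:
    """True if the weights alone fit; ignores KV cache and runtime overhead."""
    return weight_size_gb(params_billion, bits_per_weight) < vram_gb


if __name__ == "__main__":
    # Assumption: Q4_K_M averages roughly 4.8 bits per weight.
    for name, params, bits in [
        ("Llama 3 8B F16", 8, 16),
        ("Llama 3 8B Q4_K_M", 8, 4.8),
        ("Llama 3 70B F16", 70, 16),
        ("Llama 3 70B Q4_K_M", 70, 4.8),
    ]:
        size = weight_size_gb(params, bits)
        verdict = "fits" if fits_in_vram(params, bits) else "does not fit"
        print(f"{name}: ~{size:.0f} GB, {verdict} in 48 GB")
```

Under these assumptions the 70B model needs about 140GB at F16 but only around 42GB at Q4_K_M, which squeezes into 48GB with little room to spare for long contexts.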

RTX A6000 48GB: Strengths and Limitations for LLM Inference

The RTX A6000 48GB is a powerful tool for LLM inference, but it's not without its limitations. Let's explore its strengths and weaknesses.

Strengths:

- Significant Memory: The 48GB of GDDR6 memory is a game-changer for handling large models. It is enough to hold the entire quantized Llama 3 70B model on a single card, something GPUs with 24GB or less cannot do without splitting the model or offloading part of it to system memory.

- Excellent Computing Power: With 10,752 CUDA cores, the RTX A6000 processes prompts at several thousand tokens per second on Llama 3 8B and generates text at over 100 tokens/second with the quantized 8B model.
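One caveat on raw computing power: when generating one token at a time, the GPU typically has to read every weight from memory for each token, so memory bandwidth often sets the ceiling rather than the CUDA cores. The sketch below compares the measured generation speeds against that simple ceiling. It assumes NVIDIA's published 768 GB/s peak memory bandwidth for the RTX A6000; the weight sizes are rough estimates (2 bytes per weight for F16, an assumed 4.8 bits per weight for the 4-bit format), and the function name is hypothetical.

```python
A6000_BANDWIDTH_GB_S = 768.0  # NVIDIA's published peak memory bandwidth


def bandwidth_bound_tps(weights_gb: float,
                        bandwidth_gb_s: float = A6000_BANDWIDTH_GB_S) -> float:
    """Upper bound on tokens/second if every token reads all weights once."""
    return bandwidth_gb_s / weights_gb


# (label, assumed weight size in GB, measured generation speed from the tables)
RUNS = [
    ("Llama 3 8B F16", 16.0, 40.25),
    ("Llama 3 8B Q4_K_M", 4.8, 102.22),
    ("Llama 3 70B Q4_K_M", 42.0, 14.58),
]

if __name__ == "__main__":
    for label, weights_gb, measured_tps in RUNS:
        ceiling = bandwidth_bound_tps(weights_gb)
        share = measured_tps / ceiling
        print(f"{label}: ceiling ~{ceiling:.0f} tok/s, "
              f"measured {measured_tps} tok/s ({share:.0%} of ceiling)")
```

With these assumed sizes, all three measured speeds land between roughly 60% and 85% of the bandwidth ceiling, which suggests that a faster memory system would help generation speed more than extra cores would.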

Limitations:

- Cost: The RTX A6000 48GB is not cheap, making it a significant investment for individuals or small businesses.

- Energy Consumption: The RTX A6000 is rated at up to 300W of board power. Running it under load for hours at a time adds up on the electricity bill and can be a concern for sustainability.

- Power Requirements: Your system needs a power supply with enough headroom for a 300W card on top of the CPU and other components, plus adequate case airflow. Without that, the card cannot perform at its best.
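To put the power draw in perspective, here is a small sketch that turns watts and tokens per second into energy and electricity cost per token. It assumes the card draws its full rated 300W while generating, which makes it an upper bound for the GPU alone and ignores the rest of the system. The electricity price of 0.15 per kWh is a placeholder, so substitute your own rate.

```python
JOULES_PER_KWH = 3.6e6


def joules_per_token(board_power_w: float, tokens_per_second: float) -> float:
    """Energy per generated token, assuming constant power draw."""
    return board_power_w / tokens_per_second


def cost_per_million_tokens(board_power_w: float, tokens_per_second: float,
                            price_per_kwh: float) -> float:
    """Electricity cost to generate one million tokens."""
    total_joules = joules_per_token(board_power_w, tokens_per_second) * 1_000_000
    return total_joules / JOULES_PER_KWH * price_per_kwh


if __name__ == "__main__":
    POWER_W = 300.0        # rated maximum board power
    PRICE_PER_KWH = 0.15   # hypothetical electricity price
    for label, tps in [("Llama 3 8B Q4_K_M", 102.22),
                       ("Llama 3 70B Q4_K_M", 14.58)]:
        energy = joules_per_token(POWER_W, tps)
        cost = cost_per_million_tokens(POWER_W, tps, PRICE_PER_KWH)
        print(f"{label}: ~{energy:.1f} J/token, ~{cost:.2f} per million tokens")
```

Because the power draw is treated as fixed, energy per token scales inversely with speed: the 70B model costs about 7 times more electricity per token than the 8B model.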

Conclusion: Is the RTX A6000 48GB the Right Choice for You?

The RTX A6000 48GB is a powerful tool for local LLM inference, particularly when working with larger models like Llama 3 70B in quantized form. Its 48GB of memory lets the whole model live on one card, and it generates text at 14.58 tokens/second with the 70B model and over 100 tokens/second with the quantized 8B model.

However, it's crucial to consider the cost and energy consumption before making a decision. If you prioritize performance and need to run large models locally, the RTX A6000 48GB can be a worthwhile investment. For users with a tighter budget or who prioritize energy efficiency, alternative options might be more suitable.

FAQs

What is LLM inference?

LLM inference is the process of using a pre-trained language model to generate text, translate languages, answer questions, and perform other tasks. It's like having a smart assistant that can understand your questions and generate relevant responses.

What is quantization?

Quantization is a technique used to reduce the size of a language model by storing its weights at lower precision, for example about 4 bits per weight instead of 16. Think of it as replacing detailed, complex words with simpler words while retaining the essential meaning. This makes the model smaller and usually faster at generating text, at the cost of a small loss in accuracy.
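The idea can be shown with a toy example. The sketch below rounds a small block of weights to 4-bit integers that share one scale factor, then converts them back. This is a deliberately simplified round-to-nearest scheme for illustration only; it is not the more elaborate method that formats like Q4_K_M use, and the function names and sample weights are made up.

```python
def quantize_block(weights: list[float]) -> tuple[float, list[int]]:
    """Symmetric 4-bit quantization: integers in [-8, 7] plus one shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    quantized = [max(-8, min(7, round(w / scale))) for w in weights]
    return scale, quantized


def dequantize_block(scale: float, quantized: list[int]) -> list[float]:
    """Recover approximate weights from the integers and the scale."""
    return [scale * q for q in quantized]


if __name__ == "__main__":
    # Hypothetical weights, chosen only for illustration.
    weights = [0.12, -0.50, 0.33, 0.07, -0.21, 0.44, -0.02, 0.29]
    scale, quantized = quantize_block(weights)
    restored = dequantize_block(scale, quantized)
    worst = max(abs(a - b) for a, b in zip(weights, restored))
    print("4-bit integers:", quantized)
    print(f"largest rounding error: {worst:.4f} (scale = {scale:.4f})")
```

Each weight now takes 4 bits plus a share of the scale, instead of 16 bits, and the rounding error stays within half a scale step. Real formats refine this basic trade-off to lose less accuracy.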

What other GPUs can I use for LLM inference?

There are many other GPUs suitable for LLM inference, including the NVIDIA GeForce RTX 4090, the AMD Radeon RX 7900 XTX, and the AMD Radeon PRO W7900. These GPUs offer different memory sizes, performance levels, and price points to suit various needs and budgets.

How can I choose the right GPU for my LLM needs?

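Consider the size of the LLM you plan to run, the type of tasks you want to perform, and your budget. Memory is usually the first constraint, because the model's weights have to fit on the card. For smaller models like Llama 3 8B, a mid-range GPU might be sufficient, especially with quantization. For larger models like Llama 3 70B, you need a card with enough memory to hold the quantized weights, which is where a 48GB GPU like the RTX A6000 comes in.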

Keywords:

NVIDIA RTX A6000, LLM Inference, Llama 3, 8B, 70B, GPU, Graphics Card, Performance, Value, Quantization, Tokens per second, Memory, CUDA cores, Processing Speed, Generation Speed, Costs, Energy Consumption, LLM models, AI, Machine Learning.