NVIDIA RTX 6000 Ada 48GB for LLM Inference: Performance and Value

Introduction

Imagine a world where you can run powerful large language models (LLMs) directly on your local machine! Well, that world is getting closer, thanks to the incredible advances in GPUs like the NVIDIA RTX 6000 Ada 48GB. This beast of a card is packed with performance and memory, making it an ideal choice for running and experimenting with LLMs like Llama, especially if you're a developer, researcher, or enthusiast who likes to tinker with these cutting-edge models.

In this article, we'll dive deep into the performance of the RTX 6000 Ada 48GB for LLM inference, specifically looking at Llama models. We'll explore different configurations, look at the numbers, and analyze the potential benefits (and limitations).

Are you ready to unleash the power of LLMs locally? Let's get started!

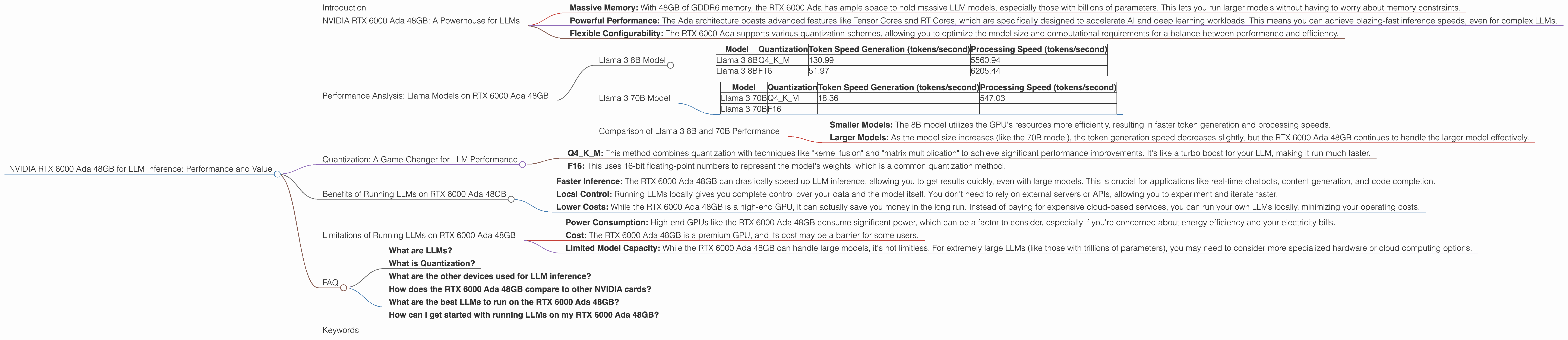

NVIDIA RTX 6000 Ada 48GB: A Powerhouse for LLMs

The NVIDIA RTX 6000 Ada 48GB is a high-end graphics card designed for demanding workloads such as deep learning and scientific computing. Here's why it's so well-suited for LLMs:

- Massive Memory: With 48GB of GDDR6 memory, the RTX 6000 Ada can hold models with tens of billions of parameters entirely on the GPU, provided the weights are quantized where necessary. Keeping the whole model in VRAM avoids the slowdown that comes from offloading layers to system RAM.

- Powerful Performance: The Ada architecture includes fourth-generation Tensor Cores, which accelerate the matrix multiplications at the heart of AI and deep learning workloads. (The card's RT Cores target ray tracing and do not contribute to LLM inference.) This translates into fast inference, even for large LLMs.

- Flexible Configurability: Inference software such as llama.cpp can run quantized models on the RTX 6000 Ada, letting you trade a little accuracy for a smaller memory footprint and faster token generation.
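To get a feel for what fits in 48GB, here is a rough back-of-the-envelope sketch. The parameter counts (about 8.0 billion and 70.6 billion) and the figure of roughly 4.85 bits per weight for Q4_K_M are assumptions for illustration, not numbers from the benchmarks in this article, and the estimate covers the weights only.

```python
# Rough estimate of the VRAM needed just for model weights.
# Assumptions (not from the article): ~8.0e9 and ~70.6e9 parameters for
# Llama 3 8B and 70B, 16 bits per weight for F16, and roughly 4.85 bits
# per weight for Q4_K_M. The KV cache and runtime buffers come on top.

GPU_MEMORY_GB = 48  # RTX 6000 Ada

MODELS = {"Llama 3 8B": 8.0e9, "Llama 3 70B": 70.6e9}
FORMATS = {"F16": 16.0, "Q4_K_M": 4.85}


def weight_size_gb(n_params: float, bits_per_weight: float) -> float:
    """Return the approximate weight size in gigabytes (10^9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


def fits_table():
    """List (model, format, size in GB, fits on the card) for every combination."""
    rows = []
    for model, n_params in MODELS.items():
        for fmt, bits in FORMATS.items():
            size = weight_size_gb(n_params, bits)
            rows.append((model, fmt, size, size < GPU_MEMORY_GB))
    return rows


for model, fmt, size, fits in fits_table():
    print(f"{model:12s} {fmt:7s} ~{size:6.1f} GB  fits in 48 GB: {fits}")
```

Under these assumptions, the only combination that does not fit is the 70B model in F16. Real usage will be somewhat higher than the weight size, because the context (KV cache) also lives in GPU memory.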

Performance Analysis: Llama Models on RTX 6000 Ada 48GB

To understand the performance of the RTX 6000 Ada 48GB for LLMs, we'll analyze its capability in running various Llama models with different quantization levels. We'll focus on two crucial metrics:

- Token Speed Generation: This measures how fast the model can create new tokens (words or pieces of words) during inference, directly impacting the speed of text generation.

- Processing Speed: This measures how fast the model can read input tokens. The figures below are far higher than the generation speeds, which suggests they reflect prompt processing, where all input tokens are handled in parallel, rather than end-to-end throughput.

Here's a breakdown of Llama 3 performance on the RTX 6000 Ada 48GB:

Llama 3 8B Model

| Model | Quantization | Token Speed Generation (tokens/second) | Processing Speed (tokens/second) |
|---|---|---|---|
| Llama 3 8B | Q4_K_M | 130.99 | 5560.94 |
| Llama 3 8B | F16 | 51.97 | 6205.44 |

Observations:

- Q4_K_M Quantization: This configuration generates about 131 tokens per second, far faster than anyone can read, and processes input at roughly 5,560 tokens per second.

- F16 (unquantized): F16 stores the weights as 16-bit floats rather than quantizing them. It generates about 52 tokens per second, so Q4_K_M is roughly 2.5 times faster at generation. This is likely because the smaller Q4_K_M weights mean less data has to be read from GPU memory for each token. Processing speed, by contrast, is about 12% higher with F16 (6205.44 vs. 5560.94 tokens/second).

Key Takeaway: For the Llama 3 8B model, the RTX 6000 Ada 48GB performs well with both formats, and Q4_K_M gives the fastest text generation. Either configuration is fast enough for interactive, real-time applications.
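To see what these numbers mean for a single request, here is a minimal sketch that estimates response time from the two metrics. It assumes that "Processing Speed" is the rate at which prompt tokens are read and that the two phases simply run one after the other; the request size is a made-up example.

```python
# Speeds for Llama 3 8B from the table above (tokens/second).
# Assumption: "processing" is the rate at which prompt tokens are read.
SPEEDS = {
    "Q4_K_M": {"processing": 5560.94, "generation": 130.99},
    "F16": {"processing": 6205.44, "generation": 51.97},
}


def response_time_s(fmt: str, prompt_tokens: int, output_tokens: int) -> float:
    """Estimate the seconds needed to read the prompt and generate the answer."""
    speeds = SPEEDS[fmt]
    return (prompt_tokens / speeds["processing"]
            + output_tokens / speeds["generation"])


# Hypothetical request: a 2,000-token prompt and a 500-token answer.
for fmt in SPEEDS:
    print(f"{fmt}: {response_time_s(fmt, 2000, 500):.1f} s")
```

For typical chat-style requests the generation term dominates, which is why the lower F16 generation speed matters more than its slightly higher processing speed.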

Llama 3 70B Model

| Model | Quantization | Token Speed Generation (tokens/second) | Processing Speed (tokens/second) |
|---|---|---|---|
| Llama 3 70B | Q4_K_M | 18.36 | 547.03 |
| Llama 3 70B | F16 | N/A | N/A |

Observations:

- Q4_K_M Quantization: At 18.36 tokens per second, generation is about seven times slower than with the 8B model, but still fast enough for interactive use. Processing speed drops to about 547 tokens per second.

- F16: No F16 data is available, most likely because the model does not fit. At 16 bits per weight, a 70B-parameter model needs roughly 140GB for the weights alone, which far exceeds the card's 48GB.

Key Takeaway: The RTX 6000 Ada 48GB can run the Llama 3 70B model on a single card, but only thanks to quantization such as Q4_K_M. The performance remains usable, considering that the model is roughly nine times larger than the 8B version.

Comparison of Llama 3 8B and 70B Performance

Comparing the performance of the two Llama models, we see a clear trend:

- Smaller Models: The 8B model is much faster on both metrics, since far less data has to be read and computed for every token.

- Larger Models: Moving to the 70B model cuts token generation speed by about 7x (130.99 to 18.36 tokens/second) and processing speed by about 10x (5560.94 to 547.03 tokens/second), roughly in line with the increase in parameter count. The RTX 6000 Ada 48GB still runs the larger model at a usable speed.

This comparison highlights the GPU's capability to handle a range of LLM sizes, but it also shows that performance falls roughly in proportion to model size.
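The size of the gap is easy to compute from the two 4-bit rows in the tables above. This small sketch uses only the measured numbers from this article.

```python
# Measured speeds from the 4-bit rows in the tables above (tokens/second).
LLAMA3_8B = {"generation": 130.99, "processing": 5560.94}
LLAMA3_70B = {"generation": 18.36, "processing": 547.03}


def slowdown(small: dict, large: dict) -> dict:
    """Return how many times slower the large model is, per metric."""
    return {metric: small[metric] / large[metric] for metric in small}


ratios = slowdown(LLAMA3_8B, LLAMA3_70B)
for metric, ratio in ratios.items():
    print(f"{metric}: 70B is {ratio:.1f}x slower than 8B")
```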

Quantization: A Game-Changer for LLM Performance

Quantization is like a secret weapon in the world of LLMs. It's a technique that reduces the size of the model's weights, making them lighter and faster to compute. Think of it like compressing a large image file to make it smaller without sacrificing too much quality.
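To make the idea concrete, here is a deliberately simplified sketch of 4-bit quantization on one small block of weights. It is an illustration of the general principle only and is not the actual storage format llama.cpp uses; the weight values are made up.

```python
# Simplified block-wise 4-bit quantization: one float scale per block plus
# a signed integer code in [-8, 7] per weight. Illustration only; this is
# NOT the real layout of any llama.cpp quantization format.

def quantize_block(weights):
    """Return (scale, codes) for one block of float weights."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    codes = [max(-8, min(7, round(w / scale))) for w in weights]
    return scale, codes


def dequantize_block(scale, codes):
    """Rebuild approximate float weights from the scale and the 4-bit codes."""
    return [scale * c for c in codes]


weights = [0.12, -0.53, 0.91, -0.07, 0.33, -0.88, 0.02, 0.47]  # made-up values
scale, codes = quantize_block(weights)
restored = dequantize_block(scale, codes)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("codes:", codes)
print("max round-trip error:", round(max_error, 4))
```

Each weight now needs only 4 bits plus a share of the per-block scale, and the round-trip error stays within half a quantization step. That is the "smaller file, slightly less detail" trade-off from the image analogy.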

- Q4_K_M: One of the "k-quant" formats used by llama.cpp. It stores most weights with about 4 bits each (slightly more on average, because some tensors are kept at higher precision), which shrinks the model to less than a third of its F16 size at a small cost in accuracy.

- F16: This uses 16-bit floating-point numbers to represent the model's weights. Strictly speaking it is not quantization; it is a half-precision format that serves as the unquantized baseline.

The RTX 6000 Ada 48GB can run both Q4_K_M and F16 models, giving you the flexibility to choose the right balance between speed, memory use, and accuracy. For example, if you need the fastest token generation, or the model would not otherwise fit in memory, Q4_K_M is the way to go. If the model fits in F16 and you want to preserve its full accuracy, F16 might be a better option.
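One way to see why smaller weights generate tokens faster: producing each new token requires reading essentially all of the model's weights from GPU memory, so memory bandwidth sets a ceiling on generation speed. The sketch below assumes a memory bandwidth of 960 GB/s (the figure from NVIDIA's spec sheet), about 8.0 billion parameters for Llama 3 8B, and roughly 4.85 bits per weight for the 4-bit format. These are assumptions for illustration, and the model ignores the KV cache and compute time.

```python
MEMORY_BANDWIDTH_GB_S = 960.0  # assumed: spec-sheet bandwidth of the card
N_PARAMS = 8.0e9               # assumed parameter count for Llama 3 8B

BITS_PER_WEIGHT = {"F16": 16.0, "Q4_K_M": 4.85}  # 4-bit value is approximate
MEASURED_TOKENS_PER_S = {"F16": 51.97, "Q4_K_M": 130.99}  # from the 8B table


def generation_ceiling(bits_per_weight: float) -> float:
    """Upper bound on tokens/second if each token reads all weights once."""
    bytes_per_token = N_PARAMS * bits_per_weight / 8
    return MEMORY_BANDWIDTH_GB_S * 1e9 / bytes_per_token


for fmt, bits in BITS_PER_WEIGHT.items():
    ceiling = generation_ceiling(bits)
    measured = MEASURED_TOKENS_PER_S[fmt]
    print(f"{fmt}: ceiling ~{ceiling:.0f} tok/s, measured {measured} tok/s")
```

Under these assumptions the measured F16 speed sits close to its bandwidth ceiling, which supports the idea that generation is limited mainly by how many bytes must be read per token.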

Benefits of Running LLMs on RTX 6000 Ada 48GB

- Faster Inference: The RTX 6000 Ada 48GB can drastically speed up LLM inference, allowing you to get results quickly, even with large models. This is crucial for applications like real-time chatbots, content generation, and code completion.

- Local Control: Running LLMs locally gives you complete control over your data and the model itself. You don't need to rely on external servers or APIs, allowing you to experiment and iterate faster.

- Lower Costs: While the RTX 6000 Ada 48GB is a high-end GPU, it can save you money in the long run if you use it heavily. Instead of paying per token or per hour for cloud-based services, you pay once for the hardware and then mainly for electricity.

Limitations of Running LLMs on RTX 6000 Ada 48GB

- Power Consumption: High-end GPUs like the RTX 6000 Ada 48GB consume significant power, which can be a factor to consider, especially if you're concerned about energy efficiency and your electricity bills.

- Cost: The RTX 6000 Ada 48GB is a premium GPU, and its cost may be a barrier for some users.

- Limited Model Capacity: While the RTX 6000 Ada 48GB can handle large models, it's not limitless. Llama 3 70B already requires 4-bit quantization to fit, and models with hundreds of billions of parameters need multiple GPUs, more specialized hardware, or cloud computing options.
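For the power consumption point, a quick estimate helps put the running cost in perspective. This sketch assumes the card draws its listed maximum board power of 300 W under load; the usage pattern and the electricity price are hypothetical placeholders that you should replace with your own.

```python
BOARD_POWER_W = 300  # assumed: the card's listed maximum board power


def energy_cost(hours: float, price_per_kwh: float,
                load_fraction: float = 1.0) -> float:
    """Electricity cost of running the GPU for `hours` at a given load."""
    kwh = BOARD_POWER_W / 1000 * load_fraction * hours
    return kwh * price_per_kwh


# Hypothetical usage: 8 hours a day for 30 days at a price of 0.15 per kWh.
monthly = energy_cost(hours=8 * 30, price_per_kwh=0.15)
print(f"Estimated GPU electricity cost: {monthly:.2f} per month")
```

This covers the GPU only. The rest of the workstation adds to the total, and real inference workloads often draw less than the maximum board power.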

FAQ

What are LLMs?

LLMs are a type of artificial intelligence (AI) model that can understand and generate human-like text. They are trained on massive amounts of data and can perform a wide range of tasks, such as translating languages, writing different kinds of creative content, and answering your questions in an informative way.

What is Quantization?

Imagine you have a picture that's too big for your phone. You can make it smaller by reducing the number of pixels in the image. Quantization is like that for LLMs! It reduces the size of the model's "brain" by using fewer numbers (bits) to represent the information. This makes it easier to store and run the model on your computer, without losing too much accuracy.

What are the other devices used for LLM inference?

Besides the RTX 6000 Ada 48GB, other common devices used for LLM inference include CPUs, other GPUs (like the RTX 4090), and specialized AI accelerators. The choice of device depends on factors like model size, performance requirements, and budget.

How does the RTX 6000 Ada 48GB compare to other NVIDIA cards?

The RTX 6000 Ada 48GB is a professional workstation card. Consumer cards like the RTX 4090 use the same Ada architecture and can offer similar speed on models that fit in their memory, but the RTX 4090 has 24GB of VRAM, half the 48GB of the RTX 6000 Ada. That extra capacity is what allows larger models, such as Llama 3 70B with 4-bit quantization, to run on a single card.

What are the best LLMs to run on the RTX 6000 Ada 48GB?

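Many open-weight LLMs can be run on the RTX 6000 Ada 48GB, including Llama models and the smaller BLOOM variants. Models whose weights have not been released, such as GPT-3, cannot be run locally. The best choice depends on your needs and preferences.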

How can I get started with running LLMs on my RTX 6000 Ada 48GB?

There are resources like the "llama.cpp" project that provide open-source code and tools for running LLMs on your local machine. You can find tutorials and guides online to help you set up your development environment and get started with LLM inference.
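As a starting point, llama.cpp ships a benchmarking tool called llama-bench that reports prompt processing and token generation speeds. The sketch below only builds the command line. The binary location and the model file name are hypothetical and depend on how you built llama.cpp and which GGUF file you downloaded, and the flags shown are the ones documented at the time of writing, so check `llama-bench --help` for your version.

```python
import shlex


def build_bench_command(model_path: str, prompt_tokens: int = 512,
                        gen_tokens: int = 128, gpu_layers: int = 99,
                        binary: str = "./llama-bench") -> list[str]:
    """Build an argument list for llama.cpp's llama-bench tool."""
    return [
        binary,
        "-m", model_path,          # GGUF model file
        "-p", str(prompt_tokens),  # length of the prompt-processing test
        "-n", str(gen_tokens),     # length of the token-generation test
        "-ngl", str(gpu_layers),   # number of layers to offload to the GPU
    ]


# Hypothetical model path; replace it with a GGUF file you have downloaded.
cmd = build_bench_command("models/llama-3-8b.Q4_K_M.gguf")
print(shlex.join(cmd))
# To actually run it: import subprocess; subprocess.run(cmd, check=True)
```

Setting the GPU layer count higher than the number of layers in the model is a common way to offload everything, which is what you want on a 48GB card when the model fits.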

Keywords

NVIDIA RTX 6000 Ada 48GB, LLM Inference, Llama Models, Token Speed Generation, Processing Speed, Quantization, Q4_K_M, F16, GPU Performance, Local Inference, Cost, Power Consumption, AI, Deep Learning, Developers, Researchers, Geeks.