NVIDIA RTX 5000 Ada 32GB vs. NVIDIA RTX A6000 48GB for LLMs: Which is Faster in Token Generation Speed? Benchmark Analysis

Introduction

The world of Large Language Models (LLMs) is exploding. These powerful AI models can generate human-like text, translate languages, write different kinds of creative content, and answer your questions in an informative way. But running these models locally requires powerful hardware.

Two popular choices for running LLMs are the NVIDIA RTX 5000 Ada 32GB and the NVIDIA RTX A6000 48GB. Both are high-performance GPUs, but which one reigns supreme when it comes to token generation speed? We'll dive deep into their performance to help you make an informed decision.

Understanding Token Generation Speed

Tokens are the units of text an LLM reads and writes. A token can be a whole word, part of a word, or a punctuation mark, so think of tokens as the building blocks of a sentence. Token generation speed, measured in tokens per second, is how quickly a GPU produces new output tokens. A higher token generation speed means faster responses from your LLM.
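To make the metric concrete, here is a minimal sketch of how tokens per second relates to response time. The function names and the sample numbers (512 tokens in 5 seconds, a 300-token reply) are illustrative assumptions, not benchmark values.

```python
def tokens_per_second(token_count: int, elapsed_seconds: float) -> float:
    """Throughput: how many tokens were produced per second of wall time."""
    if elapsed_seconds <= 0:
        raise ValueError("elapsed_seconds must be positive")
    return token_count / elapsed_seconds


def seconds_to_generate(token_count: int, rate: float) -> float:
    """Time needed to produce token_count tokens at a given tokens/second rate."""
    if rate <= 0:
        raise ValueError("rate must be positive")
    return token_count / rate


# Hypothetical run: 512 tokens generated in 5 seconds.
rate = tokens_per_second(512, 5.0)
print(f"{rate:.1f} tokens/second")
print(f"{seconds_to_generate(300, rate):.2f} s for a 300-token reply")
```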

Comparing NVIDIA RTX 5000 Ada 32GB and NVIDIA RTX A6000 48GB for Token Generation

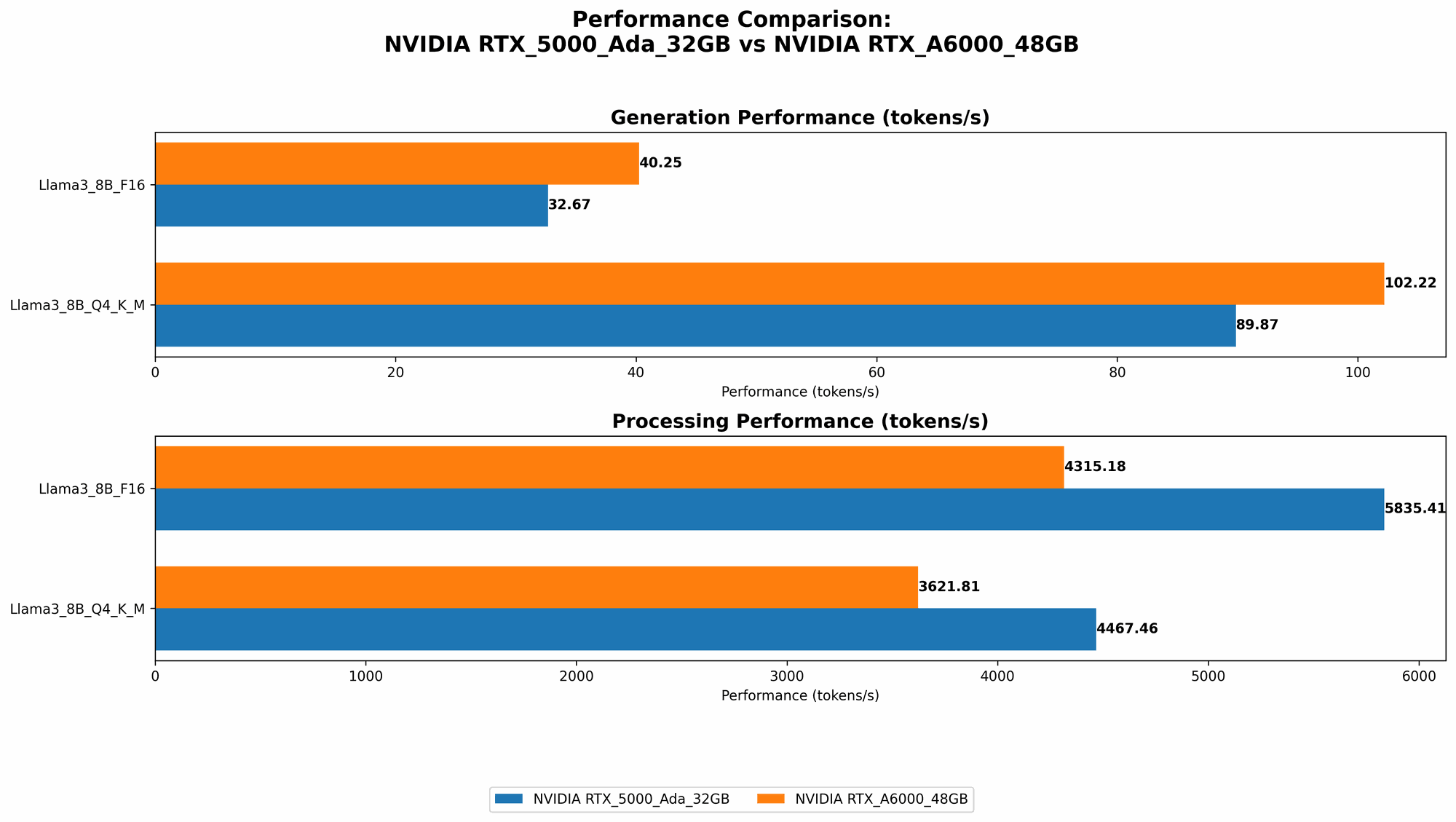

1. Llama 3 8B Model Comparison

Let's start by comparing the two GPUs on the Llama 3 8B model, which is a smaller but still powerful LLM. We'll analyze both the Q4KM (4-bit quantized) and F16 (half-precision floating point) versions:

| Model | RTX 5000 Ada 32GB (tokens/second) | RTX A6000 48GB (tokens/second) |
|---|---|---|
| Llama 3 8B Q4KM Generation | 89.87 | 102.22 |
| Llama 3 8B F16 Generation | 32.67 | 40.25 |

Results: The RTX A6000 48GB outperforms the RTX 5000 Ada 32GB on the Llama 3 8B model in both formats. The A6000 generates about 14% more tokens per second in the Q4KM version (102.22 vs. 89.87) and about 23% more in the F16 version (40.25 vs. 32.67).
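The percentage gap can be recomputed directly from the table. The sketch below uses the four published numbers; the dictionary layout and the `percent_faster` helper are illustrative choices.

```python
# Tokens/second values copied from the Llama 3 8B table above.
results = {
    "Llama 3 8B Q4KM": {"RTX 5000 Ada 32GB": 89.87, "RTX A6000 48GB": 102.22},
    "Llama 3 8B F16": {"RTX 5000 Ada 32GB": 32.67, "RTX A6000 48GB": 40.25},
}


def percent_faster(fast: float, slow: float) -> float:
    """Relative speed advantage of `fast` over `slow`, in percent."""
    return (fast / slow - 1.0) * 100.0


for model, rates in results.items():
    gain = percent_faster(rates["RTX A6000 48GB"], rates["RTX 5000 Ada 32GB"])
    print(f"{model}: A6000 is {gain:.1f}% faster")
```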

2. Llama 3 70B Model Comparison

Now let's move on to the bigger Llama 3 70B model. It's important to note that data for Llama 3 70B F16 on both GPUs is not available in the benchmark datasets used for this analysis.

| Model | RTX 5000 Ada 32GB (tokens/second) | RTX A6000 48GB (tokens/second) |
|---|---|---|
| Llama 3 70B Q4KM Generation | N/A | 14.58 |

Results: No Llama 3 70B Q4KM result is available for the RTX 5000 Ada 32GB, so the two GPUs cannot be compared directly in this scenario. The RTX A6000 48GB generates 14.58 tokens per second on this model, roughly one-seventh of its Llama 3 8B Q4KM rate, which is still a usable speed given the much larger model size.
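The benchmark data does not say why the 70B result is missing for the 32GB card. One plausible explanation is memory capacity, and a rough back-of-the-envelope check can be sketched as follows. The bits-per-weight figure for Q4KM (4.8) is an approximate assumption, the parameter counts are rounded to 8 and 70 billion, and the estimate covers weights only, ignoring the KV cache and runtime overhead.

```python
# Assumed average storage cost per weight; the Q4KM figure is approximate.
ASSUMED_BITS_PER_WEIGHT = {"F16": 16.0, "Q4KM": 4.8}
VRAM_GB = {"RTX 5000 Ada 32GB": 32, "RTX A6000 48GB": 48}


def weight_size_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights in GB (1 GB = 1e9 bytes)."""
    return params_billion * bits_per_weight / 8.0


def fits(params_billion: float, bits_per_weight: float, vram_gb: float) -> bool:
    """True if the weights alone are smaller than the card's memory."""
    return weight_size_gb(params_billion, bits_per_weight) < vram_gb


for params in (8, 70):
    for fmt, bits in ASSUMED_BITS_PER_WEIGHT.items():
        size = weight_size_gb(params, bits)
        for gpu, vram in VRAM_GB.items():
            verdict = "fits" if fits(params, bits, vram) else "does not fit"
            print(f"Llama 3 {params}B {fmt} (~{size:.0f} GB) {verdict} on {gpu}")
```

Under these assumptions a 4-bit 70B model needs more than 32GB for its weights alone, and a 70B F16 model exceeds both cards, which would be consistent with the gaps in the tables.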

Performance Analysis: Strengths & Weaknesses

NVIDIA RTX 5000 Ada 32GB:

Strengths:

- Power efficiency: The Ada architecture used in the RTX 5000 Ada 32GB boasts improved power efficiency compared to previous generations, making it a more budget-friendly option for prolonged use.

- Compact size: It has a smaller form factor compared to the A6000, making it ideal for workstations with limited space.

Weaknesses:

- No data for larger models: The benchmark has no Llama 3 70B result for this card, so its token generation speed on models of that size is unverified here.

- Lower memory capacity: The 32GB of memory can be restrictive when running models demanding larger memory footprints.

NVIDIA RTX A6000 48GB:

Strengths:

- High performance for larger models: It is the only one of the two cards with a Llama 3 70B Q4KM result in this benchmark (14.58 tokens/second), and it was also the faster card on Llama 3 8B.

- Generous memory: The 48GB of memory is a significant advantage when dealing with demanding models and complex tasks.

Weaknesses:

- Higher power consumption: It consumes more power compared to the RTX 5000 Ada 32GB, potentially leading to higher electricity costs.

- Large form factor: Its size can be a challenge for workstations with limited space.

Practical Recommendations for Choosing the Right GPU

- For smaller models (e.g., Llama 3 8B) and budget-conscious needs, the RTX 5000 Ada 32GB is a suitable choice. It offers a balance of performance and power efficiency, although the A6000 was still faster on Llama 3 8B in this benchmark.

- For larger models (e.g., Llama 3 70B) and demanding tasks, the RTX A6000 48GB is the clear winner. It is the only card of the two with a 70B result in this benchmark, and its 48GB of memory leaves more room for large models.

- Consider your specific requirements: Think about the size and complexity of the LLM models you'll be running and the specific use cases you have in mind.

Token Generation Speed: A Visual Analogy

Think of it like this: You need to build a massive skyscraper using tiny blocks.

- The RTX 5000 Ada 32GB is a skilled builder with a decent-sized toolbox. It can efficiently build a smaller skyscraper, but it might struggle with the larger, more complex buildings.

- The RTX A6000 48GB is a super-powered construction crew with a massive warehouse of tools. They can effortlessly build even the tallest skyscrapers due to their powerful technology.

Quantization: A Simplified Explanation

When we talk about LLMs, "quantization" is a technique that shrinks the model's size without sacrificing too much accuracy. Think of it as compressing a large file to make it smaller, but still retaining most of the original content.

- Q4KM (written Q4_K_M in llama.cpp) is a 4-bit quantized format. The model is much smaller and generates tokens faster, at the cost of some accuracy.

- F16 stores the weights as 16-bit half-precision floating point numbers with no quantization. The model is larger and slower than Q4KM but keeps more of the original accuracy.
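To illustrate the idea, here is a toy sketch of symmetric 4-bit quantization in plain Python. It is not the actual Q4KM scheme, which uses a more elaborate block-wise layout; the function names and the sample weights are assumptions chosen for illustration. Each weight is mapped to an integer in the range -7 to 7 and a single shared scale is stored alongside.

```python
def quantize_4bit(weights):
    """Map floats to integers in [-7, 7] using one shared scale factor."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    quantized = [max(-7, min(7, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate floats from the integers and the scale."""
    return [q * scale for q in quantized]


weights = [0.12, -0.53, 0.98, -0.05, 0.33]  # made-up sample values
quantized, scale = quantize_4bit(weights)
restored = dequantize(quantized, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("quantized:", quantized)
print("restored: ", [round(r, 2) for r in restored])
print(f"largest rounding error: {max_error:.3f}")
```

The restored values are close to the originals but not identical, which is the accuracy cost that quantization trades for a smaller model.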

FAQ: Frequently Asked Questions

Q1: Which GPU is better overall?

A1: There's no single "best" GPU. It depends on your specific requirements. In this benchmark the RTX A6000 48GB was faster on Llama 3 8B and is the only card with a Llama 3 70B result, while the RTX 5000 Ada 32GB is the more power-efficient option for smaller models.

Q2: Can I run LLMs on my CPU?

A2: It's possible, but CPUs will be significantly slower for LLM tasks, especially with larger models. GPUs are designed for parallel processing, making them ideal for these computationally intensive tasks.

Q3: What about other GPUs?

A3: There are many other GPUs available, each with its own unique strengths and weaknesses. You can find benchmarks and comparisons online to help you choose the best GPU for your specific needs.

Q4: Is there a difference between token generation and processing?

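A4: Yes. Prompt processing is the phase in which the model reads the input prompt, and all prompt tokens can be handled in parallel. Token generation is the phase in which the model writes the output, one token at a time. The numbers in this article measure token generation only, and both phases contribute to how long a full response takes.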
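A rough way to see how the two phases add up, with prompt processing meaning the reading of the input and token generation meaning the writing of the output, is sketched below. The generation rate is the A6000's Llama 3 70B Q4KM figure from the table above. The prompt processing rate of 400 tokens/second is a hypothetical placeholder, not a measured value, and the function name is illustrative.

```python
def response_latency_seconds(prompt_tokens: int, output_tokens: int,
                             prompt_rate: float, generation_rate: float) -> float:
    """Total time = time to read the prompt + time to write the output."""
    if prompt_rate <= 0 or generation_rate <= 0:
        raise ValueError("rates must be positive")
    return prompt_tokens / prompt_rate + output_tokens / generation_rate


GENERATION_RATE = 14.58          # tokens/second, from the 70B table above
HYPOTHETICAL_PROMPT_RATE = 400.0  # tokens/second, placeholder assumption

total = response_latency_seconds(1000, 200, HYPOTHETICAL_PROMPT_RATE, GENERATION_RATE)
print(f"Estimated time for a 1000-token prompt and 200-token reply: {total:.1f} s")
```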

Keywords

NVIDIA RTX 5000 Ada 32GB, NVIDIA RTX A6000 48GB, LLM, Large Language Model, Token Generation Speed, GPU, Llama 3 8B, Llama 3 70B, Q4KM, F16 Quantization, Benchmark Analysis, Performance Comparison, AI, Deep Learning, Natural Language Processing, Text Generation, Model Inference, GPU Benchmark, Machine Learning, Compute Power