NVIDIA RTX 5000 Ada 32GB vs. NVIDIA RTX 4000 Ada 20GB x4 for LLMs: Which is Faster in Token Generation Speed? Benchmark Analysis

Introduction

In the world of large language models (LLMs), processing power is king. The ability to generate tokens quickly and efficiently is crucial for a seamless user experience. To deliver a smooth and responsive interaction, we need powerful GPUs that are specifically designed to handle the computational demands of LLMs.

This article compares the token generation speed of two NVIDIA GPU configurations, a single RTX 5000 Ada 32GB and four RTX 4000 Ada 20GB cards (x4), when running Llama 3 models. The comparison should help you choose the better configuration for your use case.

Comparison of NVIDIA RTX 5000 Ada 32GB and NVIDIA RTX 4000 Ada 20GB x4 for Token Generation Speed

The Contenders:

- NVIDIA RTX 5000 Ada 32GB: A powerful single GPU with 32GB of memory.

- NVIDIA RTX 4000 Ada 20GB x4: A multi-GPU setup with four RTX 4000 Ada GPUs, each with 20GB of memory, totaling 80GB.

The comparison covers token generation speed only, measured on Llama 3 8B and Llama 3 70B. The results are specific to these two GPU configurations and these models, so they should not be generalized to other hardware without further testing.

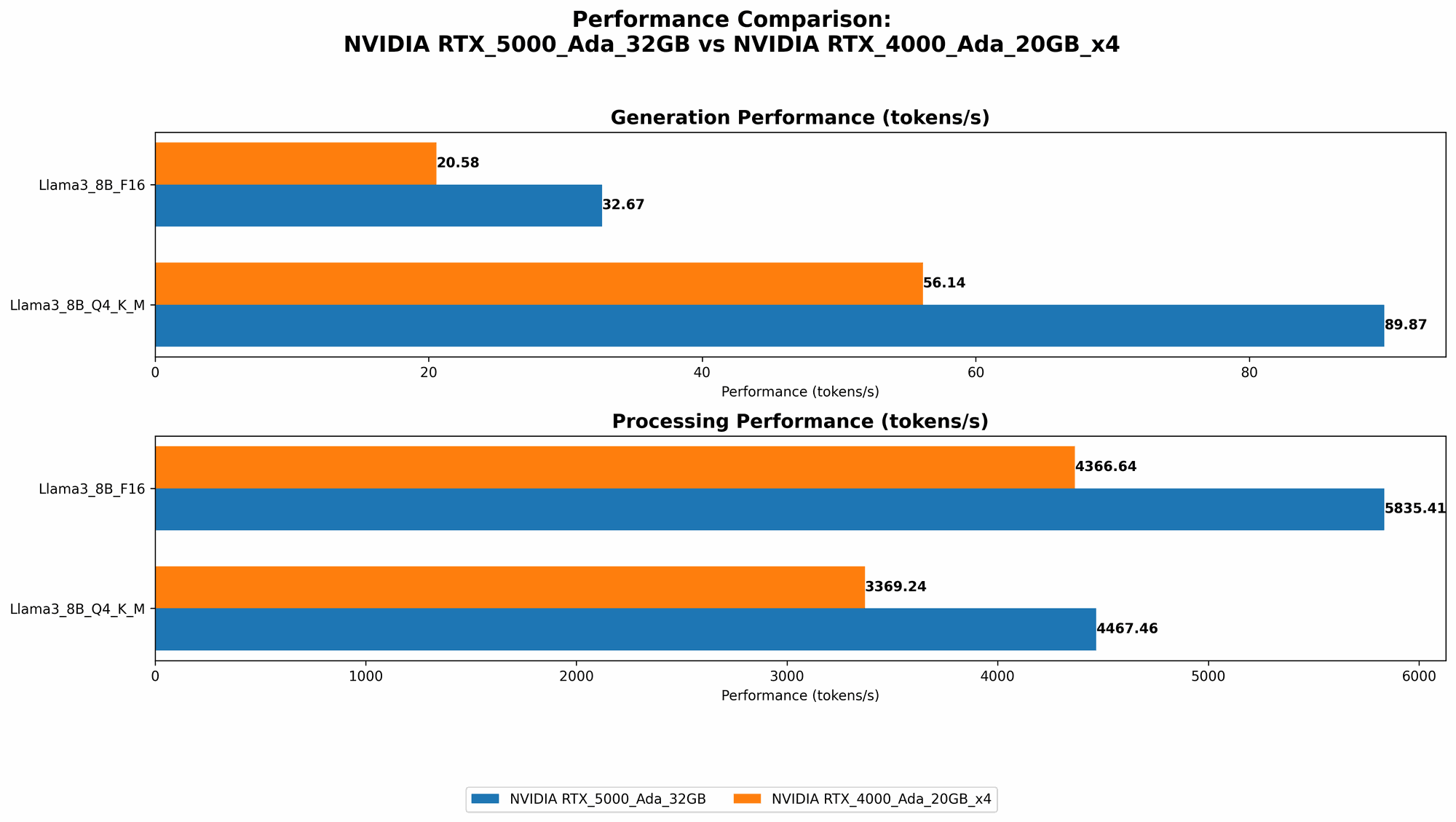

Benchmark Analysis: Token Generation Speed

The table below summarizes the token generation speed, measured in tokens per second, for each GPU configuration and LLM model.

| GPU Configuration | LLM Model | Token Generation Speed (tokens/second) |
|---|---|---|
| RTX 5000 Ada 32GB | Llama 3 8B (Q4_K_M) | 89.87 |
| RTX 5000 Ada 32GB | Llama 3 8B (F16) | 32.67 |
| RTX 4000 Ada 20GB x4 | Llama 3 8B (Q4_K_M) | 56.14 |
| RTX 4000 Ada 20GB x4 | Llama 3 8B (F16) | 20.58 |
| RTX 4000 Ada 20GB x4 | Llama 3 70B (Q4_K_M) | 7.33 |

Performance Analysis:

- Llama 3 8B: The RTX 5000 Ada 32GB is clearly faster than the RTX 4000 Ada 20GB x4 configuration for both the Q4_K_M (4-bit quantized) and F16 (half precision) models. This is likely due to its higher memory bandwidth and to running on a single GPU, which avoids the overhead of passing data between cards.

- Llama 3 70B: The RTX 4000 Ada 20GB x4 configuration runs Llama 3 70B in Q4_K_M format at 7.33 tokens/second. The RTX 5000 Ada 32GB does not have enough VRAM to hold this larger model, so it has no result in the table.
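
To put a number on the Llama 3 8B gap, the short sketch below divides the single-GPU result by the four-GPU result for each format. It uses only the figures from the table; the dictionary layout and the `speedup` helper are illustrative names, not part of any benchmark tool.

```python
# Tokens/second copied from the benchmark table above.
BENCHMARKS = {
    ("RTX 5000 Ada 32GB", "Llama 3 8B", "Q4_K_M"): 89.87,
    ("RTX 5000 Ada 32GB", "Llama 3 8B", "F16"): 32.67,
    ("RTX 4000 Ada 20GB x4", "Llama 3 8B", "Q4_K_M"): 56.14,
    ("RTX 4000 Ada 20GB x4", "Llama 3 8B", "F16"): 20.58,
    ("RTX 4000 Ada 20GB x4", "Llama 3 70B", "Q4_K_M"): 7.33,
}


def speedup(model: str, quant: str) -> float:
    """Return how many times faster the single RTX 5000 Ada is than the x4 setup."""
    single = BENCHMARKS[("RTX 5000 Ada 32GB", model, quant)]
    multi = BENCHMARKS[("RTX 4000 Ada 20GB x4", model, quant)]
    return single / multi


for quant in ("Q4_K_M", "F16"):
    print(f"Llama 3 8B {quant}: {speedup('Llama 3 8B', quant):.2f}x")
# prints:
# Llama 3 8B Q4_K_M: 1.60x
# Llama 3 8B F16: 1.59x
```

The ratio is nearly identical for both formats, which suggests the gap comes from the hardware rather than from the quantization format.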

Key Takeaways:

- Single GPU vs. Multi-GPU: The single RTX 5000 Ada 32GB outperforms the four-GPU RTX 4000 Ada 20GB x4 configuration on Llama 3 8B, showing that one powerful GPU can be the more efficient solution when the model fits in its memory.

- Memory Bandwidth: Memory bandwidth plays a significant role in token generation speed. The RTX 5000 Ada 32GB excels here: it is roughly 1.6x faster than the RTX 4000 Ada 20GB x4 on Llama 3 8B in both the Q4_K_M and F16 formats.

- Model Size: The RTX 4000 Ada 20GB x4 configuration is better suited to larger models like Llama 3 70B, particularly in the quantized Q4_K_M format, which reduces memory demands.

Comparing the CUDA Cores and Memory Bandwidth

Let's delve deeper into the underlying aspects of the GPUs that contribute to their performance.

CUDA Cores: The Brains of the Operation

The CUDA cores are the processing units that carry out the computations needed for LLM inference. A single RTX 5000 Ada 32GB has more CUDA cores than a single RTX 4000 Ada 20GB. However, the RTX 4000 Ada 20GB x4 configuration combines four cards, which gives it the higher total CUDA core count and, on paper, more raw processing power. The benchmarks above show that this extra compute does not translate into faster token generation for a model that already fits on one card.

Memory Bandwidth: The Data Highway

Memory bandwidth is crucial for LLM inference because it determines how quickly the model weights can be read from VRAM for each generated token. The RTX 5000 Ada 32GB has significantly higher memory bandwidth than a single RTX 4000 Ada 20GB, which contributes to its faster token generation on smaller models. The RTX 4000 Ada 20GB x4, however, has the larger total memory capacity (80GB versus 32GB).
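
A rough way to check the bandwidth explanation is to assume that generating one token requires reading every weight once, so tokens/second cannot exceed bandwidth divided by model size. The sketch below applies that rule of thumb to Llama 3 8B in F16. Several inputs are assumptions rather than benchmark data: the bandwidth figures (576 GB/s and 360 GB/s, taken from NVIDIA's published specifications and worth verifying against the datasheets), the parameter count of about 8.03 billion, and the idea that the four-card setup processes layers one card at a time, so its effective bandwidth is that of a single card.

```python
def max_tokens_per_second(bandwidth_gb_s: float, model_size_gb: float) -> float:
    """Upper bound on generation speed if every weight is read once per token."""
    return bandwidth_gb_s / model_size_gb


# Assumption: Llama 3 8B has about 8.03 billion parameters, 2 bytes each in F16.
PARAMS_BILLIONS = 8.03
MODEL_GB = PARAMS_BILLIONS * 2

# Assumption: per-card memory bandwidth from NVIDIA's spec sheets (not benchmark data).
ASSUMED_BANDWIDTH_GB_S = {
    "RTX 5000 Ada 32GB": 576.0,
    "RTX 4000 Ada 20GB x4": 360.0,  # treated as one card's bandwidth
}

# Measured F16 results from the benchmark table.
MEASURED_TOKENS_PER_S = {
    "RTX 5000 Ada 32GB": 32.67,
    "RTX 4000 Ada 20GB x4": 20.58,
}

for gpu, bandwidth in ASSUMED_BANDWIDTH_GB_S.items():
    bound = max_tokens_per_second(bandwidth, MODEL_GB)
    measured = MEASURED_TOKENS_PER_S[gpu]
    print(f"{gpu}: bound {bound:.1f} tok/s, measured {measured} tok/s")
```

Under these assumptions both measured speeds land just below their estimated bounds, which is consistent with token generation being limited by memory bandwidth rather than by compute.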

Understanding Quantization: Why Less is More

Quantization is a technique that reduces the memory footprint of LLMs by representing model weights with fewer bits. In our case, we're comparing models in the Q4_K_M format, which stores each weight in roughly 4 bits (slightly more on average, because the format also stores scaling data) instead of the 16 bits used by F16. This significantly reduces memory requirements while maintaining reasonable accuracy.
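
The effect on memory can be estimated with simple arithmetic: parameters times bits per weight, divided by 8 to get bytes. The sketch below is a back-of-the-envelope estimate only. It assumes nominal parameter counts of 8 and 70 billion and exactly 4 bits for the quantized format, and it ignores the KV cache and other runtime overhead, so real files and real memory use will be somewhat larger.

```python
def weights_gb(params_billions: float, bits_per_weight: float) -> float:
    """Approximate size of the weights alone, in GB."""
    return params_billions * bits_per_weight / 8


# Assumption: nominal sizes and bit widths, no runtime overhead.
MODELS = {"Llama 3 8B": 8.0, "Llama 3 70B": 70.0}
FORMATS = {"F16": 16, "4-bit": 4}
VRAM_GB = {"RTX 5000 Ada 32GB": 32, "RTX 4000 Ada 20GB x4": 80}

for model, params in MODELS.items():
    for fmt, bits in FORMATS.items():
        size = weights_gb(params, bits)
        fits = [gpu for gpu, vram in VRAM_GB.items() if size < vram]
        print(f"{model} {fmt}: ~{size:.0f} GB, fits on: {fits or 'neither'}")
```

Even at a nominal 4 bits, the 70B weights come to about 35 GB, more than the 32GB on the RTX 5000 Ada but well within the 80GB total of the four-card setup.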

Quantization for the Less-Technical: Imagine a bookshelf

Think of a bookshelf with 200 books, each representing a weight in the model. Each book is 16 pages long (the pages represent bits). To save space on the shelf, we rewrite every book as a 4-page summary (4 bits). The shelf now holds nearly the same information in a quarter of the space. Some fine detail is lost in the summaries, which is the small accuracy cost of quantization.
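
For readers who want to see the "summary" step in code, here is a toy 4-bit quantizer. It is a deliberately simplified scheme (one shared scale, integer codes from -8 to 7) chosen for illustration; it is not the algorithm used by Q4_K_M, and the sample weights are made up.

```python
def quantize_4bit(weights: list[float]) -> tuple[list[int], float]:
    """Map floats to 4-bit integer codes in [-8, 7] using one shared scale."""
    scale = max(abs(w) for w in weights) / 7 or 1.0  # avoid a zero scale
    codes = [max(-8, min(7, round(w / scale))) for w in weights]
    return codes, scale


def dequantize(codes: list[int], scale: float) -> list[float]:
    """Recover approximate floats from the 4-bit codes."""
    return [c * scale for c in codes]


weights = [0.12, -0.53, 0.98, -0.05, 0.31]  # hypothetical example weights
codes, scale = quantize_4bit(weights)
restored = dequantize(codes, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("codes:   ", codes)
print("restored:", [round(r, 2) for r in restored])
print("largest rounding error:", round(max_error, 3))
```

The restored values are close to the originals but not identical, and the error never exceeds half of the scale step. That bounded rounding error is the detail lost in the bookshelf analogy.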

Practical Recommendations: Choosing the Right GPU

Based on the benchmark results and the analysis of the GPUs' specifications, here are recommendations for selecting the best GPU for your LLM use case:

- For smaller LLMs (e.g., Llama 3 8B) and high-speed token generation: The NVIDIA RTX 5000 Ada 32GB is the better choice. Its high memory bandwidth and single-GPU design delivered about 1.6x the token generation speed of the four-card setup in these benchmarks.

- For larger LLMs (e.g., Llama 3 70B) and memory-intensive workloads: The NVIDIA RTX 4000 Ada 20GB x4 is the more suitable option. Its 80GB of combined memory lets it load models that do not fit on the single 32GB card, at the cost of lower speed on small models.

FAQ: Solving the Common Questions

How much do these GPUs cost?

GPU prices change constantly, but you can expect a single RTX 5000 Ada 32GB to cost more than a single RTX 4000 Ada 20GB. However, since the RTX 4000 Ada 20GB x4 configuration requires four cards, its total cost is likely to be higher.

Can I run these GPUs on a standard desktop computer?

While these GPUs can be used in a desktop, they are more often found in specialized workstations or high-performance computing (HPC) systems. They need an adequate power supply and cooling, and the four-GPU configuration also needs a system with enough slots and power for all four cards.

What are the limitations of each GPU?

The RTX 5000 Ada 32GB is limited in its ability to handle larger LLMs by its 32GB memory capacity. The RTX 4000 Ada 20GB x4, on the other hand, can be challenging to set up and manage because the model has to be split across four GPUs.

Do I need to know a lot about deep learning to use these GPUs?

Basic knowledge of deep learning concepts is beneficial, but not essential. There are many resources and libraries available to help you get started with running LLMs on these GPUs, including frameworks like PyTorch and TensorFlow, and llama.cpp, the project that defines the Q4_K_M quantization format.

Keywords

NVIDIA RTX 5000 Ada 32GB, NVIDIA RTX 4000 Ada 20GB x4, LLM, Large Language Model, Token Generation Speed, Benchmark, GPU, Llama 3, 8B, 70B, CUDA Cores, Memory Bandwidth, Quantization, Q4_K_M, F16, Performance, Recommendation, Comparison