NVIDIA RTX 5000 Ada 32GB for LLM Inference: Performance and Value

Introduction

Large language models (LLMs) are being adopted quickly, and running them demands capable hardware. One option for local LLM inference is the NVIDIA RTX 5000 Ada 32GB, a workstation graphics card whose 32GB of memory and strong throughput make it a compelling choice for developers and researchers working with LLMs.

This article explores the performance of the RTX 5000 Ada 32GB when running LLMs, focusing on the popular Llama family. We'll look at quantization, discuss the pros and cons of using this card for LLM inference, and summarize what it can and cannot do.

Whether you're a seasoned developer or a curious tech enthusiast, this article has something to offer!

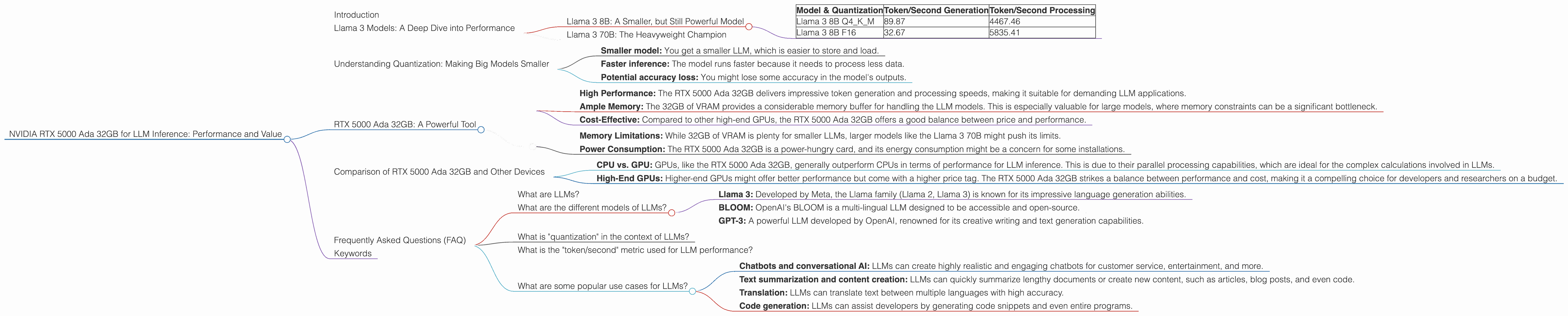

Llama 3 Models: A Deep Dive into Performance

Let's dive into the heart of the matter – how does the RTX 5000 Ada 32GB perform with the popular Llama 3 family of LLMs? We'll focus on two key metrics:

- Token/second generation: This measures how fast the card can generate text, which is crucial for conversational AI and other applications where real-time response is essential.

- Token/second processing: This metric assesses how quickly the card can read input text (the prompt) before it starts generating, which matters for tasks with long inputs such as sentiment analysis and text summarization.

Llama 3 8B: A Smaller, but Still Powerful Model

The Llama 3 8B model represents a good starting point for exploration. This model, while smaller than its 70B counterpart, still offers impressive capabilities.

Let's examine the performance of the RTX 5000 Ada 32GB with the Llama 3 8B model:

| Model & Quantization | Token/Second Generation | Token/Second Processing |
|---|---|---|
| Llama 3 8B Q4KM | 89.87 | 4467.46 |
| Llama 3 8B F16 | 32.67 | 5835.41 |

Key Observations:

- Quantization Makes a Difference: The two rows in the table use different numeric formats for the model's weights. F16 stores each weight as a 16-bit floating-point number and is effectively the unquantized baseline, while Q4KM (written Q4_K_M in llama.cpp) is a quantized format that uses roughly 4 to 5 bits per weight at the cost of some accuracy. The Q4KM version generates tokens about 2.75 times faster than F16 (89.87 vs. 32.67 tokens per second).

- Processing Speed: The RTX 5000 Ada 32GB processes input text at several thousand tokens per second in both formats, which suits tasks that require fast text analysis. Note that F16 is actually faster than Q4KM here (5835.41 vs. 4467.46 tokens per second), the opposite of the generation result. A plausible reason is that processing many tokens at once is limited by compute rather than by memory traffic, so smaller weights bring no advantage.
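The ratios behind these observations can be checked with a few lines of Python. This is a minimal sketch that uses only the four figures from the table; the dictionary layout and the `ratio` helper are illustrative names, not part of any benchmark tool.

```python
# Throughput figures copied from the table above (tokens per second).
results = {
    "Q4KM": {"generation": 89.87, "processing": 4467.46},
    "F16": {"generation": 32.67, "processing": 5835.41},
}


def ratio(metric: str, a: str = "Q4KM", b: str = "F16") -> float:
    """Return how many times faster format `a` is than format `b`."""
    return results[a][metric] / results[b][metric]


print(f"generation: Q4KM is {ratio('generation'):.2f}x F16")
print(f"processing: Q4KM is {ratio('processing'):.2f}x F16")
```

The generation ratio comes out at about 2.75, while the processing ratio is below 1, meaning F16 is the faster format for processing.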

Llama 3 70B: The Heavyweight Champion

The Llama 3 70B model is a behemoth, with 70 billion parameters that allow it to generate highly complex and nuanced text.

Unfortunately, the data we have doesn't include numbers for the RTX 5000 Ada 32GB with the Llama 3 70B model. This is likely due to the model's size: at 16-bit precision, 70 billion parameters need about 140GB for the weights alone, and even a 4-bit version is larger than the card's 32GB of memory. That 32GB is still a significant amount for LLM inference, but it is not enough to hold this model on a single card.

What does this mean? While the RTX 5000 Ada 32GB might not fit the Llama 3 70B model entirely in its memory, it might still run it by keeping only some of the model's layers on the GPU and the rest in system memory, or by employing techniques like "model parallelism" to distribute the computation across multiple GPUs.
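A rough back-of-the-envelope calculation shows why memory is the likely obstacle. The sketch below estimates the size of the weights alone as parameters times bits per weight. The nominal parameter counts and the figure of about 4.85 bits per weight for Q4KM are assumptions used for illustration, and the estimate ignores the KV cache and other runtime buffers.

```python
def weight_size_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights in gigabytes (10^9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


VRAM_GB = 32  # memory on the RTX 5000 Ada 32GB

# Assumed values: nominal parameter counts, and ~4.85 bits per weight
# as a rough average for Q4KM (an approximation, not a measured figure).
for name, n_params in [("Llama 3 8B", 8e9), ("Llama 3 70B", 70e9)]:
    for fmt, bits in [("F16", 16.0), ("Q4KM", 4.85)]:
        size = weight_size_gb(n_params, bits)
        verdict = "fits" if size < VRAM_GB else "does not fit"
        print(f"{name} {fmt}: ~{size:.1f} GB of weights, {verdict} in {VRAM_GB} GB")
```

Under these assumptions both 8B variants fit comfortably, while the 70B model exceeds 32GB in either format.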

The lack of data for the Llama 3 70B on the RTX 5000 Ada 32GB doesn't mean this card is unusable for these large models, but it suggests further exploration is required.
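As one way to frame that exploration, the sketch below estimates how many layers of a model could stay on the GPU if the rest were kept in system memory. It is a simplification: it assumes roughly 42.4GB of 4-bit weights (70 billion parameters at about 4.85 bits each) split evenly across 80 layers, and a hypothetical 4GB reserve for the KV cache and runtime buffers. The `layers_on_gpu` helper is illustrative, not a function from any library.

```python
def layers_on_gpu(model_gb: float, n_layers: int,
                  vram_gb: float, reserve_gb: float) -> int:
    """Estimate how many equally sized layers fit in the VRAM left after a reserve."""
    per_layer_gb = model_gb / n_layers
    usable_gb = max(vram_gb - reserve_gb, 0.0)
    return min(n_layers, int(usable_gb // per_layer_gb))


# Assumptions: ~42.4 GB of 4-bit weights spread evenly over 80 layers,
# with 4 GB held back for the KV cache and runtime buffers.
n = layers_on_gpu(model_gb=42.4, n_layers=80, vram_gb=32.0, reserve_gb=4.0)
print(f"about {n} of 80 layers fit on the GPU")
```

Layers left in system memory run much more slowly, so real throughput would need to be measured rather than inferred from this estimate.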

Understanding Quantization: Making Big Models Smaller

Quantization, as mentioned earlier, is a crucial technique for making large models more manageable. Imagine you have a library with millions of books, each representing a parameter in an LLM. You can't fit all the books in one room, so you replace each one with a condensed edition that keeps the essentials but takes up less space.

This is similar to quantization. We "compress" the model by representing its parameters with fewer bits, reducing its overall size. This allows us to run LLMs on devices with limited memory, like the RTX 5000 Ada 32GB.

Quantization comes with tradeoffs:

- Smaller model: You get a smaller LLM, which is easier to store and load.

- Faster generation: Producing each token requires reading the model's weights from memory, so smaller weights usually mean more tokens per second.

- Potential accuracy loss: You might lose some accuracy in the model's outputs.

The Q4KM quantization used in our examples makes the Llama 3 8B model significantly smaller, leading to faster token generation speeds on the RTX 5000 Ada 32GB.
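To make the idea concrete, here is a toy example of quantizing a small block of weights to 4-bit integers with one shared scale. It is a deliberately simplified symmetric scheme for illustration and is not the actual Q4KM algorithm, which is more elaborate. The sample weights are made up.

```python
def quantize_block(weights: list[float], bits: int = 4) -> tuple[float, list[int]]:
    """Map floats to signed integers of `bits` bits plus one shared scale."""
    qmax = 2 ** (bits - 1) - 1  # 7 for 4-bit values
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    q = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return scale, q


def dequantize_block(scale: float, q: list[int]) -> list[float]:
    """Recover approximate floats from the integers and the shared scale."""
    return [scale * v for v in q]


# Made-up sample weights for illustration.
weights = [0.12, -0.53, 0.98, -0.07, 0.33, -0.91, 0.45, 0.02]
scale, q = quantize_block(weights)
restored = dequantize_block(scale, q)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print(q)
print(f"largest rounding error: {max_error:.3f}")
```

Each weight now needs only 4 bits plus a share of the scale, and the rounding error is the accuracy cost mentioned above.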

RTX 5000 Ada 32GB: A Powerful Tool

The RTX 5000 Ada 32GB is a powerful card for LLM inference, especially for smaller models like the Llama 3 8B. Its impressive token/second performance and processing capabilities underscore its potential for projects requiring real-time text generation and analysis.
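To see what these rates mean for a single request, the two metrics can be combined into a simple latency estimate: time to read the prompt plus time to generate the reply. This is a rough sketch that ignores overheads such as model loading and sampling. The prompt and reply lengths are hypothetical.

```python
def response_time_s(prompt_tokens: int, output_tokens: int,
                    processing_tps: float, generation_tps: float) -> float:
    """Estimate seconds to read a prompt and then generate a reply."""
    return prompt_tokens / processing_tps + output_tokens / generation_tps


# Rates for Llama 3 8B Q4KM from the table; the token counts are made up.
t = response_time_s(1000, 200, processing_tps=4467.46, generation_tps=89.87)
print(f"estimated response time: {t:.2f} s")
```

For a 1,000-token prompt and a 200-token reply this works out to roughly 2.4 seconds, almost all of it spent on generation.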

The Pros:

- High Performance: The RTX 5000 Ada 32GB delivers impressive token generation and processing speeds, making it suitable for demanding LLM applications.

- Ample Memory: The 32GB of VRAM leaves considerable headroom for the model weights and working memory. This is especially valuable for larger models, where memory constraints can be a significant bottleneck.

- Cost-Effective: Compared to other high-end GPUs, the RTX 5000 Ada 32GB offers a good balance between price and performance.

The Cons:

- Memory Limitations: While 32GB of VRAM is plenty for smaller LLMs, larger models like the Llama 3 70B need more than that even at 4-bit precision.

- Power Consumption: The RTX 5000 Ada 32GB is rated for about 250W of board power, so power delivery and cooling might be a concern for some installations.

Comparison of RTX 5000 Ada 32GB and Other Devices

While we're focusing on the RTX 5000 Ada 32GB, it's natural to wonder how it stacks up against other popular options for running LLMs locally. Unfortunately, a direct comparison with other devices is not possible due to the lack of comprehensive benchmark data.

However, some general observations can be made:

- CPU vs. GPU: GPUs, like the RTX 5000 Ada 32GB, generally outperform CPUs for LLM inference. This is due to their parallel processing capabilities and high memory bandwidth, which suit the large matrix calculations involved in LLMs.

- High-End GPUs: Higher-end GPUs might offer better performance but come with a higher price tag. The RTX 5000 Ada 32GB strikes a balance between performance and cost, making it a compelling choice for developers and researchers on a budget.

It's essential to carefully consider your LLM workload, budget, and power consumption requirements when choosing the appropriate GPU.

Frequently Asked Questions (FAQ)

What are LLMs?

LLMs are a type of artificial intelligence model capable of understanding and generating human-like text. Think of them as sophisticated language processing systems that can write stories, translate languages, and even answer your questions.

What are the different models of LLMs?

There are many different LLMs, each with its own strengths and weaknesses. Some popular examples include:

- Llama 3: Developed by Meta, the Llama family (Llama 2, Llama 3) is known for its impressive language generation abilities.

- BLOOM: Developed by the BigScience research collaboration, BLOOM is a multilingual LLM designed to be openly accessible.

- GPT-3: A powerful LLM developed by OpenAI, renowned for its creative writing and text generation capabilities.

What is "quantization" in the context of LLMs?

Quantization is a technique used to reduce the size of an LLM by lowering the precision of its parameters. This allows for faster inference and the ability to run LLMs on devices with limited memory.

What is the "token/second" metric used for LLM performance?

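The "token/second" metric measures how many tokens an LLM can process or generate per second. A token is a unit of text that is often a word or a piece of a word, so the token count is not the same as the word count. A higher token/second rate indicates faster performance.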
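Measuring the metric yourself comes down to dividing a token count by elapsed time. The sketch below shows the idea with a stand-in function. `fake_generate` is hypothetical and only simulates a model call; in practice you would replace it with a call to whatever inference library you use and return the number of tokens it produced.

```python
import time
from typing import Callable


def tokens_per_second(generate: Callable[[], int]) -> float:
    """Run `generate`, which returns the number of tokens produced, and time it."""
    start = time.perf_counter()
    n_tokens = generate()
    elapsed = time.perf_counter() - start
    return n_tokens / elapsed


def fake_generate() -> int:
    # Stand-in for a real model call: pretend 50 tokens took 0.1 s.
    time.sleep(0.1)
    return 50


print(f"{tokens_per_second(fake_generate):.0f} tokens/s")
```

Generation and prompt processing should be timed separately, since the two rates can differ by orders of magnitude.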

What are some popular use cases for LLMs?

LLMs have many applications in various fields, including:

- Chatbots and conversational AI: LLMs can create highly realistic and engaging chatbots for customer service, entertainment, and more.

- Text summarization and content creation: LLMs can quickly summarize lengthy documents or create new content, such as articles, blog posts, and even code.

- Translation: LLMs can translate text between multiple languages with high accuracy.

- Code generation: LLMs can assist developers by generating code snippets and even entire programs.

Keywords

LLM inference, RTX 5000 Ada 32GB, NVIDIA, GPU, Llama 3, Llama 8B, Llama 70B, quantization, Q4KM, F16, token/second, performance, processing, benchmark, LLM models, AI, deep learning, conversational AI, chatbot, code generation, text summarization, translation.