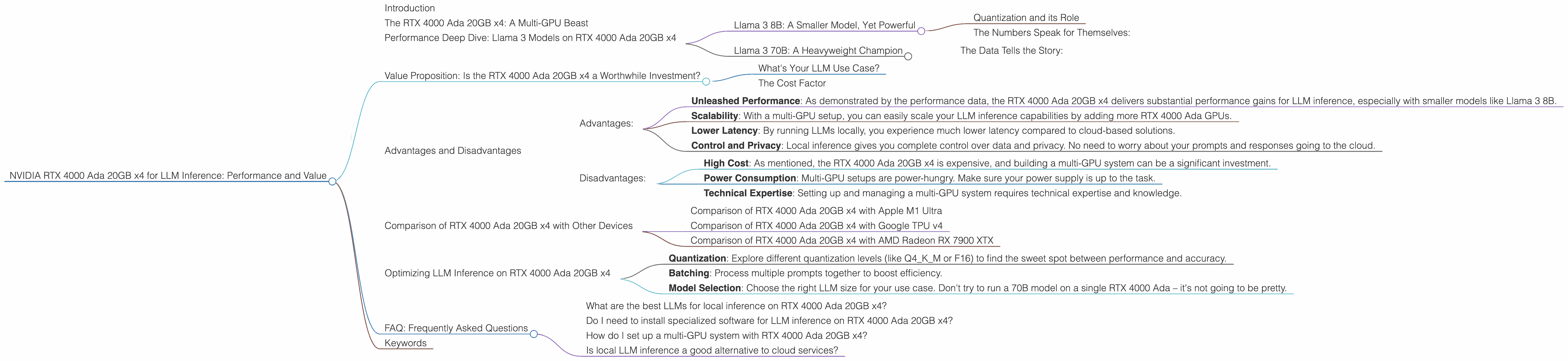

NVIDIA RTX 4000 Ada 20GB x4 for LLM Inference: Performance and Value

Introduction

The world of Large Language Models (LLMs) is exploding. From ChatGPT to Bard and everything in between, these powerful AI systems are revolutionizing the way we interact with computers. But with great power comes… well, a lot of computational power. Running LLMs locally requires a hefty hardware setup. For those looking to build a custom AI powerhouse, the NVIDIA RTX 4000 Ada 20GB x4 configuration emerges as a compelling contender. In this article, we'll deep dive into the performance and value of this NVIDIA card quartet for LLM inference, using real-world data to guide our exploration.

The RTX 4000 Ada 20GB x4: A Multi-GPU Beast

Imagine this: you're a developer who wants to run LLMs locally, not just play the latest games (although, you totally can!). You crave control and flexibility, and you want to push the boundaries of LLM inference. For you, a set of four RTX 4000 Ada cards, each with 20GB of GDDR6 memory for a total of 80GB of VRAM, is a dream machine.

But why the x4 setup? Well, for LLMs, the idea is to throw more silicon at the problem. Combining four of these GPUs in a single system pools their memory, so a model too large for one 20GB card can be split across all four. It also adds processing power for the computationally intensive work of LLM inference, although speed does not scale linearly with the number of cards. Think of it like having four brains working together: a true AI dream team!

Performance Deep Dive: Llama 3 Models on RTX 4000 Ada 20GB x4

Let's get down to brass tacks. We'll use the Llama 3 family of open-source LLMs as our test subjects and look at how the RTX 4000 Ada 20GB x4 performs, with the help of real data.

Llama 3 8B: A Smaller Model, Yet Powerful

The Llama 3 8B model, despite being smaller than its larger counterparts, still packs a punch. Imagine it as a super-intelligent AI chihuahua – small, but mighty. On the RTX 4000 Ada 20GB x4, it displays impressive performance.

Quantization and its Role

Before diving into the numbers, let's quickly address a key concept: quantization. Think of it like a diet for LLMs – it reduces the size of the models, making them leaner and faster. Quantizing LLM weights reduces the precision of individual numbers, but in turn, allows for faster computation and less memory consumption. Imagine compressing a high-resolution image into a lower resolution – you lose some quality, but gain speed and space.
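To make the "diet" concrete, here is a minimal sketch of the arithmetic: weight memory is roughly the parameter count times the bits stored per weight. The 4.8 bits-per-weight figure for Q4_K_M is an assumed average for illustration, not an official number, and the helper names `weight_gb` and `fits` are made up for this example. The estimate covers weights only; the KV cache and runtime buffers need extra room on top.

```python
# Rough weight-memory estimate: parameters x bits per weight.
# Bits-per-weight values: F16 is exact (two bytes per parameter);
# the Q4_K_M value is an ASSUMED average, since real files mix tensor types.
BITS_PER_WEIGHT = {
    "F16": 16.0,
    "Q4_K_M": 4.8,
}


def weight_gb(params_billions: float, fmt: str) -> float:
    """Approximate size of the weights in GB (1 GB = 1e9 bytes)."""
    total_bits = params_billions * 1e9 * BITS_PER_WEIGHT[fmt]
    return total_bits / 8 / 1e9


def fits(params_billions: float, fmt: str, vram_gb: float) -> bool:
    """True if the weights alone fit in vram_gb (ignores KV cache and buffers)."""
    return weight_gb(params_billions, fmt) <= vram_gb


for size in (8, 70):
    for fmt in BITS_PER_WEIGHT:
        print(
            f"Llama 3 {size}B {fmt}: ~{weight_gb(size, fmt):.1f} GB, "
            f"one 20GB card: {fits(size, fmt, 20)}, "
            f"four cards (80GB): {fits(size, fmt, 80)}"
        )
```

Under these assumptions, the 8B model fits on a single card in either format, while the 70B model only fits across the four cards once it is quantized.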

The Numbers Speak for Themselves:

| Model | Generation Speed (tokens/second) | Processing Speed (tokens/second) |
|---|---|---|
| Llama 3 8B Q4_K_M | 56.14 | 3369.24 |
| Llama 3 8B F16 | 20.58 | 4366.64 |

Generation speed is the rate at which the model produces new tokens in response to your prompt. A token is a chunk of text, often a word or a piece of a word. Think of it as how fast your LLM can spit out text. Processing speed is the rate at which the model reads the tokens of your prompt before it starts answering, often called prompt processing. Think of it as how fast your LLM reads your question.

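Comparing the results: with Q4_K_M quantization (a mostly 4-bit format), the Llama 3 8B model generates 56.14 tokens per second on the RTX 4000 Ada 20GB x4, while the F16 version manages 20.58 tokens/second. That makes the quantized model about 2.7 times faster at generation. Generation is typically limited by how quickly the weights can be read from memory, so smaller weights help directly. Processing speed tells a different story: the F16 model reaches 4366.64 tokens/second against 3369.24 for Q4_K_M, about 1.3 times faster. Prompt processing is typically limited by compute rather than memory bandwidth, and F16 weights can be used without being dequantized first.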
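To see what the two metrics mean for a single request, here is a rough sketch that adds the time spent reading the prompt to the time spent generating the answer. The workload (a 1,000-token prompt and a 200-token answer) is hypothetical, the helper name `request_seconds` is made up for this example, and the sketch assumes the benchmark rates hold at those lengths, which real runs may not match exactly.

```python
def request_seconds(prompt_tokens: int, output_tokens: int,
                    processing_tps: float, generation_tps: float) -> float:
    """Estimate one request's duration: prompt phase plus generation phase."""
    return prompt_tokens / processing_tps + output_tokens / generation_tps


# Throughput figures for Llama 3 8B from the table above (tokens/second).
LLAMA3_8B = {
    "Q4_K_M": {"processing": 3369.24, "generation": 56.14},
    "F16": {"processing": 4366.64, "generation": 20.58},
}

# Hypothetical workload: 1,000-token prompt, 200-token answer.
for fmt, tps in LLAMA3_8B.items():
    total = request_seconds(1000, 200, tps["processing"], tps["generation"])
    print(f"{fmt}: about {total:.1f} s per request")
```

For a chat-style workload like this one, generation dominates the total, so the quantized model finishes well ahead even though F16 reads the prompt faster.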

Llama 3 70B: A Heavyweight Champion

Now, let's step up to the Llama 3 70B model – a behemoth of a model with 70 billion parameters. This LLM is like a super-powered AI dinosaur, with vast knowledge and capabilities.

The Data Tells the Story:

| Model | Generation Speed (tokens/second) | Processing Speed (tokens/second) |
|---|---|---|
| Llama 3 70B Q4_K_M | 7.33 | 306.44 |

Even with 80GB of VRAM across four cards, the Llama 3 70B model cannot run at F16 precision on this setup. At two bytes per parameter, 70 billion parameters need about 140GB for the weights alone. It's like trying to stuff a giant elephant into a small car: it just won't work. Fortunately, Q4_K_M quantization shrinks the weights to roughly 40GB, which lets the model run across the four GPUs.

Comparison: Going from the Llama 3 8B Q4_K_M model to the Llama 3 70B Q4_K_M model, generation speed drops from 56.14 tokens/second to 7.33 tokens/second, about 7.7 times slower. Processing speed drops from 3369.24 tokens/second to 306.44 tokens/second, about 11 times slower. A drop of this size is expected, since the 70B model has nearly nine times as many parameters. At 7.33 tokens/second, the RTX 4000 Ada 20GB x4 still delivers a respectable pace for interactive use, though long prompts take noticeably longer to process.
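The comparison above comes down to a few divisions, sketched here so you can rerun it with your own measurements. The throughput numbers are the Q4_K_M results from the tables; the parameter counts are the nominal 8 and 70 billion from the model names, used as an approximation.

```python
# Q4_K_M results from the tables above (tokens/second).
# params_b uses the nominal sizes from the model names (an approximation).
RESULTS = {
    "8B": {"params_b": 8, "generation": 56.14, "processing": 3369.24},
    "70B": {"params_b": 70, "generation": 7.33, "processing": 306.44},
}


def slowdown(metric: str) -> float:
    """How many times slower the 70B model is than the 8B model."""
    return RESULTS["8B"][metric] / RESULTS["70B"][metric]


generation_slowdown = slowdown("generation")
processing_slowdown = slowdown("processing")
size_ratio = RESULTS["70B"]["params_b"] / RESULTS["8B"]["params_b"]

print(f"Generation: {generation_slowdown:.1f}x slower")
print(f"Processing: {processing_slowdown:.1f}x slower")
print(f"Model size: {size_ratio:.2f}x larger")
```

Generation slows down a little less than the model grows, while prompt processing slows down a little more.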

Value Proposition: Is the RTX 4000 Ada 20GB x4 a Worthwhile Investment?

Now, the all-important question: is the RTX 4000 Ada 20GB x4 a worthwhile investment for LLM inference? The answer – it depends!

What's Your LLM Use Case?

If you're a developer who needs to run smaller LLM models locally, like the Llama 3 8B, the RTX 4000 Ada 20GB x4 provides excellent performance, although a model of that size does not need all 80GB of VRAM. However, if your focus is on larger models like the Llama 3 70B, the RTX 4000 Ada 20GB x4 might not be the most efficient choice. F16 weights for the 70B model do not fit in 80GB at all, and even with Q4_K_M quantization generation slows to 7.33 tokens/second.

The Cost Factor

Don't forget, the RTX 4000 Ada 20GB x4 isn't cheap. It's a premium GPU, and you'll need four of them. However, if you're committed to running LLMs locally and demand top-notch performance, the RTX 4000 Ada 20GB x4 could be the right solution for you.

Advantages and Disadvantages

Let's break down the pros and cons of the RTX 4000 Ada 20GB x4 for LLM inference.

Advantages:

- Unleashed Performance: As demonstrated by the performance data, the RTX 4000 Ada 20GB x4 delivers substantial performance gains for LLM inference, especially with smaller models like Llama 3 8B.

- Scalability: A multi-GPU setup lets you scale your LLM inference capacity by adding more RTX 4000 Ada GPUs, as long as the motherboard has free PCIe slots and the power supply has headroom.

- Lower Latency: Running LLMs locally removes the network round trip to a cloud service, which can shorten the wait before a response starts.

- Control and Privacy: Local inference gives you complete control over data and privacy. No need to worry about your prompts and responses going to the cloud.

Disadvantages:

- High Cost: As mentioned, the RTX 4000 Ada 20GB x4 is expensive, and building a multi-GPU system can be a significant investment.

- Power Consumption: Multi-GPU setups are power-hungry. Make sure your power supply is up to the task.

- Technical Expertise: Setting up and managing a multi-GPU system requires technical expertise and knowledge.
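For the power consumption point above, a back-of-the-envelope power supply estimate can help. This is only a sketch: the 130 W per-card figure should be checked against NVIDIA's datasheet for the RTX 4000 Ada, while the 250 W allowance for the rest of the system and the 30% headroom are assumptions picked for illustration. The function name `recommended_psu_watts` is made up for this example.

```python
import math


def recommended_psu_watts(gpu_count: int, gpu_watts: float,
                          rest_of_system_watts: float,
                          headroom: float = 1.3) -> int:
    """Sum the component draw, apply headroom, round up to the next 50 W."""
    peak = gpu_count * gpu_watts + rest_of_system_watts
    return math.ceil(peak * headroom / 50) * 50


# ASSUMED inputs: 130 W per GPU (verify against the datasheet),
# 250 W for CPU, drives and fans, and 30% headroom.
print(recommended_psu_watts(gpu_count=4, gpu_watts=130, rest_of_system_watts=250))
```

Swap in the measured or rated draw of your own components before trusting the result.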

Comparison of RTX 4000 Ada 20GB x4 with Other Devices

Let's compare the RTX 4000 Ada 20GB x4 with some other popular devices for LLM inference.

Comparison of RTX 4000 Ada 20GB x4 with Apple M1 Ultra

The Apple M1 Ultra is a general-purpose system-on-chip whose unified memory, shared between the CPU and GPU, makes it a popular option for running LLMs locally. While it's a formidable contender, the RTX 4000 Ada 20GB x4 generally outperforms it in terms of raw processing power, especially when it comes to larger models and higher-precision computations.

Comparison of RTX 4000 Ada 20GB x4 with Google TPU v4

Google's TPU v4 is a specialized AI accelerator optimized for machine learning tasks. It offers impressive performance, particularly with large-scale models, but it is available only through Google Cloud rather than as hardware you can install in your own machine. The RTX 4000 Ada 20GB x4 is the more practical option for local inference, and its flexibility with various software frameworks makes it a versatile choice for developers.

Comparison of RTX 4000 Ada 20GB x4 with AMD Radeon RX 7900 XTX

The AMD Radeon RX 7900 XTX is a high-end GPU that delivers strong performance for both gaming and AI. However, a single RX 7900 XTX has 24GB of VRAM against the 80GB of the four-card setup, and the RTX 4000 Ada 20GB x4 generally offers better performance for LLM inference, especially with software that is optimized for NVIDIA's Tensor Cores.

Optimizing LLM Inference on RTX 4000 Ada 20GB x4

To get the most out of your RTX 4000 Ada 20GB x4 for LLM inference, consider these optimization tips:

- Quantization: Compare quantized formats such as Q4_K_M against unquantized F16 to find the sweet spot between performance and accuracy.

- Batching: Process multiple prompts together to boost efficiency.

- Model Selection: Choose the right LLM size for your use case. Don't try to run a 70B model on a single RTX 4000 Ada: even at Q4_K_M it needs roughly twice the card's 20GB of VRAM, and it's not going to be pretty.
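The batching tip above can be sketched as a small helper that groups prompts before they are handed to the inference backend. The helper name `batches` and the batch size of 2 are made up for illustration; how a batch is actually submitted depends on the software you use.

```python
from typing import Iterator, List


def batches(prompts: List[str], batch_size: int) -> Iterator[List[str]]:
    """Yield consecutive groups of at most batch_size prompts."""
    if batch_size < 1:
        raise ValueError("batch_size must be at least 1")
    for start in range(0, len(prompts), batch_size):
        yield prompts[start:start + batch_size]


prompts = [f"Question {i}" for i in range(1, 6)]
for group in batches(prompts, batch_size=2):
    # A real setup would pass each group to the inference backend in one call.
    print(group)
```

Larger batches usually raise total throughput at the cost of more memory per step, so the right size is something to find by experiment.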

FAQ: Frequently Asked Questions

What are the best LLMs for local inference on RTX 4000 Ada 20GB x4?

Smaller models like Llama 3 8B are a great starting point for local inference on the RTX 4000 Ada 20GB x4. Larger models like Llama 3 70B need quantization such as Q4_K_M to fit; at F16 precision the 70B weights alone exceed the 80GB of combined VRAM.

Do I need to install specialized software for LLM inference on RTX 4000 Ada 20GB x4?

Yes, you'll need software like "llama.cpp" (https://github.com/ggerganov/llama.cpp) to run LLMs on the RTX 4000 Ada 20GB x4. This software takes advantage of the GPU's capabilities for fast inference.
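As a rough idea of what a llama.cpp run across four GPUs can look like, the sketch below builds a command line without executing it. It assumes a recent llama.cpp build where the binary is called `llama-cli`; the model path is a placeholder, and the helper name `llama_cli_command` is made up for this example. Check the flags against the help output of your own build.

```python
from typing import List


def llama_cli_command(model_path: str, prompt: str, gpu_count: int = 4,
                      max_tokens: int = 128) -> List[str]:
    """Build (but do not run) a llama.cpp command that offloads the model
    to the GPUs and splits it evenly across gpu_count cards."""
    even_split = ",".join(["1"] * gpu_count)
    return [
        "llama-cli",                    # ASSUMED binary name (recent builds)
        "-m", model_path,               # path to a GGUF model file
        "-ngl", "99",                   # offload up to 99 layers to the GPUs
        "--tensor-split", even_split,   # share of the model per GPU
        "-n", str(max_tokens),          # number of tokens to generate
        "-p", prompt,                   # the prompt text
    ]


# Placeholder path: point this at a model file you have downloaded.
command = llama_cli_command("models/llama-3-8b.Q4_K_M.gguf", "Hello!")
print(" ".join(command))
```

To actually run it, pass the list to `subprocess.run(command, check=True)` on a machine where llama.cpp is built with GPU support.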

How do I set up a multi-GPU system with RTX 4000 Ada 20GB x4?

Setting up a multi-GPU system requires attention to motherboard compatibility, power supply, and drivers. You'll need a motherboard with sufficient PCIe slots, a high-wattage power supply, and the latest NVIDIA drivers to ensure smooth operation.
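Once the cards and drivers are installed, it is worth confirming that the system sees all four GPUs. The sketch below parses the CSV output of `nvidia-smi`; the helper names `parse_gpus` and `detect_gpus` are made up for this example, and the sample text is a hypothetical illustration of the output format, not a captured log.

```python
import subprocess
from typing import List, Tuple

# Query each GPU's name and total memory in MiB, one GPU per line.
QUERY = ["nvidia-smi", "--query-gpu=name,memory.total",
         "--format=csv,noheader,nounits"]


def parse_gpus(csv_text: str) -> List[Tuple[str, int]]:
    """Parse 'name, memory_mib' lines into (name, MiB) tuples."""
    gpus = []
    for line in csv_text.strip().splitlines():
        name, memory_mib = (field.strip() for field in line.rsplit(",", 1))
        gpus.append((name, int(memory_mib)))
    return gpus


def detect_gpus() -> List[Tuple[str, int]]:
    """Run nvidia-smi (requires the NVIDIA driver) and parse its output."""
    result = subprocess.run(QUERY, capture_output=True, text=True, check=True)
    return parse_gpus(result.stdout)


# HYPOTHETICAL sample output for four cards; real values may differ.
sample = "NVIDIA RTX 4000 Ada Generation, 20475\n" * 4
gpus = parse_gpus(sample)
print(f"{len(gpus)} GPUs, {sum(mib for _, mib in gpus)} MiB total")
```

On the real machine, call `detect_gpus()` instead of parsing the sample, and check that the count and total memory match what you installed.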

Is local LLM inference a good alternative to cloud services?

Local inference can be advantageous for control, privacy, and reduced latency, but it requires a substantial investment in hardware. Cloud services offer scalability and convenience but come with potential costs, latency, and privacy concerns.

Keywords

LLM, Large Language Model, NVIDIA RTX 4000 Ada, GPU, Inference, Performance, Value, Local Inference, Llama 3, Quantization, Processing Speed, Generation Speed, Tokens per Second, Multi-GPU, Optimization, Cost, Power Consumption, Apple M1 Ultra, Google TPU v4, AMD Radeon RX 7900 XTX.