NVIDIA RTX 4000 Ada 20GB vs. NVIDIA RTX A6000 48GB for LLMs: Which is Faster in Token Generation Speed? Benchmark Analysis

Introduction

In the world of large language models (LLMs), speed is king. Whether you're a developer building a chatbot or a researcher exploring the boundaries of AI, the ability to generate text quickly is crucial. This is where powerful GPUs come into play, and two popular contenders for LLM inference are the NVIDIA RTX 4000 Ada 20GB and the NVIDIA RTX A6000 48GB.

This article will delve into a head-to-head comparison of these two GPUs, focusing on their token generation speed for various LLM models. We'll break down the performance, analyze their strengths and weaknesses, and provide practical recommendations for different use cases. Join us as we explore the exciting world of LLM inference and discover which GPU reigns supreme in the quest for speed!

Performance Comparison of NVIDIA RTX 4000 Ada 20GB and NVIDIA RTX A6000 48GB

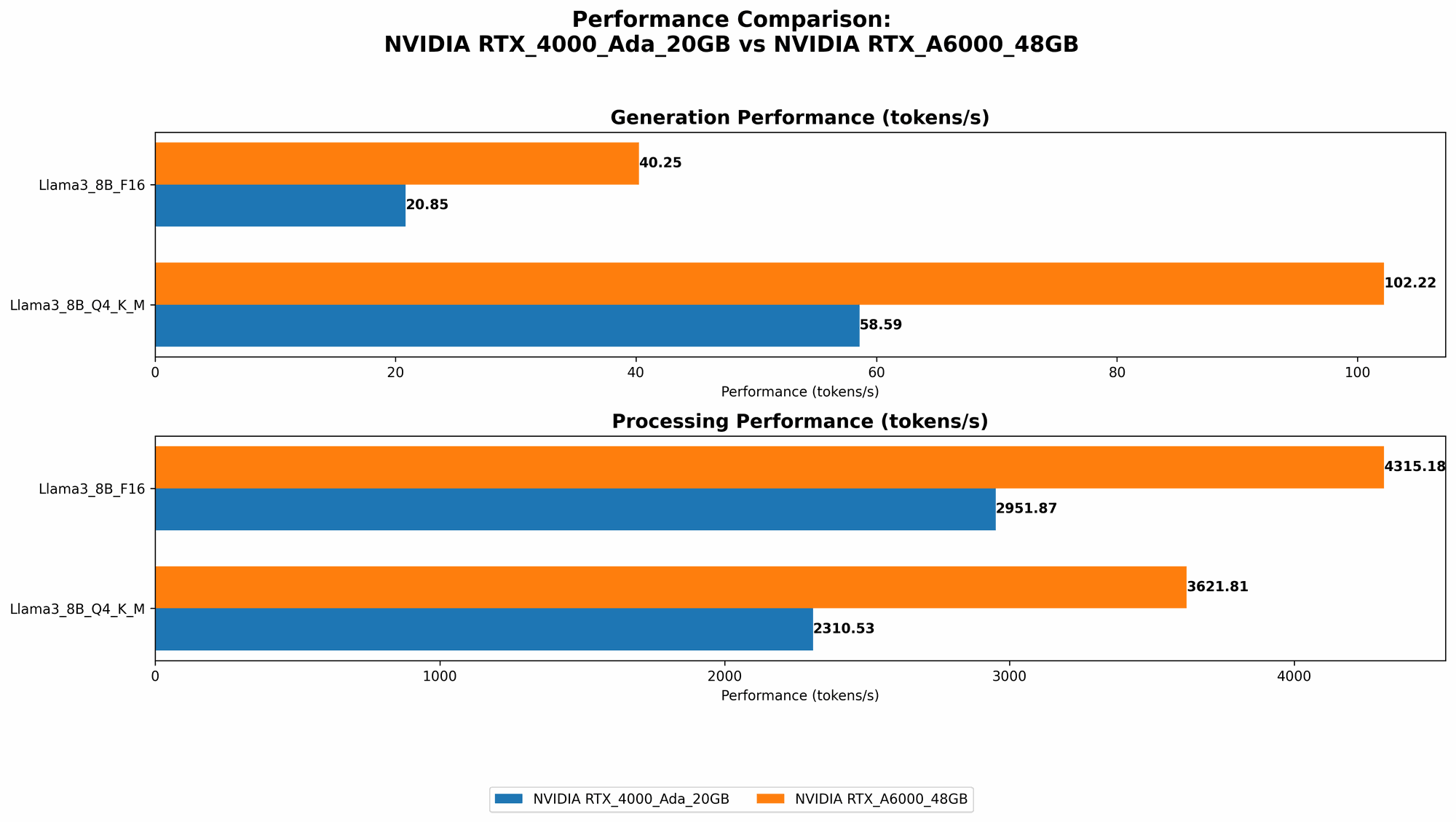

To understand the performance difference between the RTX 4000 Ada 20GB and the RTX A6000 48GB, we'll analyze their token generation speed, measured in tokens per second (tokens/s). We'll consider two popular LLMs, Llama3 8B and Llama3 70B, each in two formats: 4-bit quantized (Q4_K_M) and 16-bit floating point (F16).

NVIDIA RTX 4000 Ada 20GB Performance

The RTX 4000 Ada 20GB offers solid performance for the Llama3 8B model, but no results are available for the larger Llama3 70B model.

Here's a breakdown of its performance:

- Llama3 8B Q4_K_M: The RTX 4000 Ada 20GB generates around 58.59 tokens/s for the Llama3 8B model with 4-bit quantization (Q4) using the K_M method.

- Llama3 8B F16: For the same model in 16-bit floating-point (F16) format, the RTX 4000 Ada 20GB generates around 20.85 tokens/s.

- Llama3 70B: No data is available for the Llama3 70B model on this GPU. At 4 bits per weight, 70 billion parameters already need about 35GB, so the model most likely does not fit in 20GB of memory.

The table below summarizes the performance of the RTX 4000 Ada 20GB:

| LLM Model | Format | Tokens/s |
|---|---|---|
| Llama3 8B | Q4_K_M | 58.59 |
| Llama3 8B | F16 | 20.85 |
| Llama3 70B | Q4_K_M | N/A |
| Llama3 70B | F16 | N/A |

NVIDIA RTX A6000 48GB Performance

The RTX A6000 48GB, with more than twice the memory of the RTX 4000 Ada 20GB, delivers higher throughput on Llama3 8B and is the only one of the two GPUs with results for Llama3 70B.

Here's a breakdown of its performance:

- Llama3 8B Q4_K_M: The RTX A6000 48GB achieves 102.22 tokens/s for the Llama3 8B model with Q4_K_M quantization.

- Llama3 8B F16: In F16 format, the RTX A6000 48GB achieves 40.25 tokens/s.

- Llama3 70B Q4_K_M: This is where the RTX A6000 48GB shines. It generates around 14.58 tokens/s for the larger Llama3 70B model with Q4_K_M quantization.

- Llama3 70B F16: No data is available for Llama3 70B in F16 format on this GPU. At 16 bits per weight the model needs roughly 140GB for its weights alone, far more than 48GB.

The table below summarizes the performance of the RTX A6000 48GB:

| LLM Model | Format | Tokens/s |
|---|---|---|
| Llama3 8B | Q4_K_M | 102.22 |
| Llama3 8B | F16 | 40.25 |
| Llama3 70B | Q4_K_M | 14.58 |
| Llama3 70B | F16 | N/A |
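To put these throughput numbers in perspective, the following sketch converts tokens/s into average time per token and the time needed to generate a full reply. It uses only the figures from the two tables; the 500-token reply length is a hypothetical value chosen for illustration, and prompt processing time is ignored.

```python
# Tokens/s figures taken from the two benchmark tables above.
RESULTS = {
    ("RTX 4000 Ada 20GB", "Llama3 8B Q4_K_M"): 58.59,
    ("RTX 4000 Ada 20GB", "Llama3 8B F16"): 20.85,
    ("RTX A6000 48GB", "Llama3 8B Q4_K_M"): 102.22,
    ("RTX A6000 48GB", "Llama3 8B F16"): 40.25,
    ("RTX A6000 48GB", "Llama3 70B Q4_K_M"): 14.58,
}

RESPONSE_TOKENS = 500  # hypothetical reply length, chosen for illustration


def ms_per_token(tokens_per_s: float) -> float:
    """Average time to generate one token, in milliseconds."""
    return 1000.0 / tokens_per_s


def seconds_for_reply(tokens_per_s: float, n_tokens: int = RESPONSE_TOKENS) -> float:
    """Time to generate n_tokens at a constant rate (prompt processing ignored)."""
    return n_tokens / tokens_per_s


if __name__ == "__main__":
    for (gpu, model), tps in RESULTS.items():
        print(f"{gpu:18} {model:18} {ms_per_token(tps):6.1f} ms/token  "
              f"{seconds_for_reply(tps):5.1f} s per {RESPONSE_TOKENS} tokens")
```

At these rates, a 500-token reply would take roughly 4.9 s with Llama3 8B Q4_K_M on the RTX A6000 48GB, about 8.5 s on the RTX 4000 Ada 20GB, and about 34 s with Llama3 70B Q4_K_M on the RTX A6000 48GB.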

Performance Analysis: NVIDIA RTX 4000 Ada 20GB vs. NVIDIA RTX A6000 48GB

Now let's look more closely at the results and at what makes the RTX A6000 48GB the stronger choice for larger LLMs:

Token Generation Speed: A Clear Winner Emerges

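When it comes to token generation speed, the RTX A6000 48GB outperforms the RTX 4000 Ada 20GB in every configuration tested on both cards. For Llama3 8B, it is about 1.7x faster with Q4_K_M (102.22 vs. 58.59 tokens/s) and about 1.9x faster with F16 (40.25 vs. 20.85 tokens/s). The gap widens further for Llama3 70B: the RTX A6000 48GB runs the Q4_K_M model at 14.58 tokens/s, while no result is available for the RTX 4000 Ada 20GB. A likely contributor is memory bandwidth: generating each token requires reading the model's weights from memory, and the RTX A6000 48GB offers about 768 GB/s versus about 360 GB/s on the RTX 4000 Ada 20GB.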
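One way to check the "roughly twice as fast" intuition is to compute the speedups directly and compare them with the ratio of the two cards' peak memory bandwidths. The bandwidth values in this sketch come from NVIDIA's published specifications rather than from the benchmark, and the idea that decode speed roughly tracks bandwidth is a common rule of thumb, not something these measurements prove.

```python
# Tokens/s for Llama3 8B, taken from the benchmark tables in this article.
RTX_4000_ADA = {"Q4_K_M": 58.59, "F16": 20.85}
RTX_A6000 = {"Q4_K_M": 102.22, "F16": 40.25}

# Peak memory bandwidth in GB/s (assumption: NVIDIA spec-sheet values).
BANDWIDTH_GBPS = {"RTX 4000 Ada 20GB": 360.0, "RTX A6000 48GB": 768.0}


def speedups(fast: dict, slow: dict) -> dict:
    """Ratio of tokens/s for every format measured on both GPUs."""
    return {fmt: fast[fmt] / slow[fmt] for fmt in fast if fmt in slow}


if __name__ == "__main__":
    for fmt, ratio in speedups(RTX_A6000, RTX_4000_ADA).items():
        print(f"Llama3 8B {fmt}: RTX A6000 48GB is {ratio:.2f}x faster")
    bw_ratio = BANDWIDTH_GBPS["RTX A6000 48GB"] / BANDWIDTH_GBPS["RTX 4000 Ada 20GB"]
    print(f"Memory-bandwidth ratio: {bw_ratio:.2f}x")
```

This prints speedups of about 1.74x (Q4_K_M) and 1.93x (F16) against a bandwidth ratio of about 2.13x, so both measured gaps sit somewhat below what bandwidth alone would suggest.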

Memory Considerations: The Power of 48GB

One of the key factors behind the RTX A6000 48GB's advantage is its 48GB of GDDR6 memory. Larger LLMs like Llama3 70B need a lot of memory just to hold their parameters: even at 4-bit quantization, 70 billion weights take up roughly 35 to 40GB, which fits on the RTX A6000 48GB but not within the 20GB of the RTX 4000 Ada 20GB. A model that does not fit must be split across devices or partially offloaded to system memory, which slows generation considerably, or it fails to load at all. This is most likely why no 70B results are available for the RTX 4000 Ada 20GB.
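A rough way to see which configurations can fit is to estimate the size of the weights alone as parameters times bits per weight. This sketch assumes approximate parameter counts (8.03B and 70.6B), an average of roughly 4.85 bits per weight for Q4_K_M, and treats the nominal 20GB and 48GB capacities as GiB. It ignores the KV cache, activations, and runtime overhead, all of which need additional memory, so a model that "fits" here may still be tight in practice.

```python
# Rough weight-memory estimate; all constants are approximations (assumptions).
PARAMS = {"Llama3 8B": 8.03e9, "Llama3 70B": 70.6e9}   # approximate parameter counts
BITS_PER_WEIGHT = {"F16": 16.0, "Q4_K_M": 4.85}        # Q4_K_M value is an average estimate
GPU_MEMORY_GIB = {"RTX 4000 Ada 20GB": 20, "RTX A6000 48GB": 48}


def weights_gib(model: str, fmt: str) -> float:
    """Size of the weights alone in GiB (excludes KV cache, activations, overhead)."""
    return PARAMS[model] * BITS_PER_WEIGHT[fmt] / 8 / 2**30


def fits(model: str, fmt: str, gpu: str) -> bool:
    """True if the weights alone are smaller than the GPU's memory."""
    return weights_gib(model, fmt) < GPU_MEMORY_GIB[gpu]


if __name__ == "__main__":
    for model in PARAMS:
        for fmt in BITS_PER_WEIGHT:
            size = weights_gib(model, fmt)
            verdict = ", ".join(
                f"{gpu}: {'fits' if fits(model, fmt, gpu) else 'too large'}"
                for gpu in GPU_MEMORY_GIB
            )
            print(f"{model} {fmt}: {size:6.1f} GiB -> {verdict}")
```

Under these assumptions Llama3 8B needs about 4.5 GiB (Q4_K_M) or 15 GiB (F16), Llama3 70B needs about 40 GiB in Q4_K_M, which fits on the RTX A6000 48GB but not on the RTX 4000 Ada 20GB, and about 131 GiB in F16, which fits on neither. This is consistent with the N/A entries in the benchmark tables.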

Quantization and its Impact: A Balancing Act

Both GPUs handle both formats: 4-bit quantized weights (Q4_K_M) and 16-bit floating-point weights (F16). Quantization is a technique for shrinking an LLM by storing its weights with fewer bits. Going from 16 bits to roughly 4 bits per weight cuts the weight memory by about a factor of four, which is what lets larger models run on GPUs with limited memory, like the RTX 4000 Ada 20GB. In the benchmarks above, Q4_K_M is also roughly 2.5 to 2.8 times faster than F16 on the same GPU. The tradeoff is that Q4 quantization can cost some accuracy, which may show up as a slight drop in the quality of the generated text.

Here's an analogy: imagine describing a color using a limited palette of paints. 16-bit (F16) gives you a wide palette, allowing for precise shades. 4-bit (Q4) gives you a much smaller palette, so some shades can only be approximated. In exchange, each color takes far less space to record, so many more of them fit in the same amount of room.

Therefore, choosing between Q4 and F16 depends on your priorities. If you need the maximum speed and memory efficiency, Q4 is the way to go. If accuracy takes precedence, F16 might be a better option.
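To make the idea of quantization concrete, here is a minimal sketch of symmetric 4-bit block quantization: each block of weights is stored as small integers in the range -8 to 7 plus one shared scale. This is a simplified illustration in the spirit of llama.cpp's basic Q4_0 format (32-weight blocks with a 16-bit scale); the Q4_K_M format used in the benchmarks is a more elaborate "k-quant" scheme, and the function names and synthetic weights here are hypothetical.

```python
import random


def quantize_q4(block):
    """Symmetric 4-bit quantization: integers in [-8, 7] plus one scale per block."""
    amax = max(abs(w) for w in block)
    scale = amax / 7 if amax > 0 else 1.0  # map the largest magnitude to +/-7
    q = [max(-8, min(7, round(w / scale))) for w in block]
    return q, scale


def dequantize_q4(q, scale):
    """Recover approximate weights from the 4-bit integers and the block scale."""
    return [v * scale for v in q]


def bits_per_weight(block_size, scale_bits=16, weight_bits=4):
    """Storage cost per weight, including the shared per-block scale."""
    return (block_size * weight_bits + scale_bits) / block_size


if __name__ == "__main__":
    random.seed(0)
    block = [random.gauss(0.0, 0.02) for _ in range(32)]  # synthetic weights
    q, scale = quantize_q4(block)
    restored = dequantize_q4(q, scale)
    max_err = max(abs(a - b) for a, b in zip(block, restored))
    print(f"scale={scale:.5f}  max abs error={max_err:.5f}")
    print(f"bits/weight: {bits_per_weight(32):.2f} (vs. 16 for F16)")
```

Rounding keeps each reconstructed weight within half a scale step of the original, and with 32-weight blocks the storage cost works out to 4.5 bits per weight instead of 16. That rounding error is the accuracy cost described above; the smaller footprint is the memory and speed benefit.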

Practical Recommendations and Use Cases

Now that we've analyzed the performance of the RTX 4000 Ada 20GB and the RTX A6000 48GB, let's discuss when to use each GPU based on your specific needs:

NVIDIA RTX 4000 Ada 20GB: Ideal for Smaller Models

- Well suited to smaller LLMs like Llama3 8B: If you mainly run models of this size or smaller, the RTX 4000 Ada 20GB can be a cost-effective and efficient choice, delivering about 59 tokens/s on Llama3 8B with Q4_K_M.

- Suitable for developers with budget constraints: It is the more affordable of the two cards, which makes it appealing to individuals and small teams with limited budgets.

- Good for experimentation and prototyping: If you're experimenting with LLMs or building LLM-based applications, the RTX 4000 Ada 20GB may be sufficient for initial development and testing.

NVIDIA RTX A6000 48GB: For Large-Scale LLM Inference

- The go-to GPU for larger models: If you need to run models with tens of billions of parameters, such as Llama3 70B with 4-bit quantization, the RTX A6000 48GB is the clear choice of the two thanks to its larger memory and higher memory bandwidth. Note that even 48GB is not enough for Llama3 70B in F16.

- Well suited to production environments: The RTX A6000 48GB's higher throughput helps deliver faster response times for production-level LLM inference.

- Suitable for high-performance research: Researchers working with larger models will benefit from the RTX A6000 48GB's ability to hold models that do not fit on a 20GB card.

Key Takeaways

Here are some key takeaways from our comparison:

- For larger LLMs, the RTX A6000 48GB is the superior choice: Its larger memory and higher bandwidth let it run 70B-class models with 4-bit quantization, and it is about 1.7x to 1.9x faster on Llama3 8B.

- The RTX 4000 Ada 20GB is a cost-effective solution for smaller models: Its performance is sufficient for LLMs like Llama3 8B or smaller.

- The weight format plays a crucial role: Choosing between Q4 quantization and F16 depends on your specific application and the tradeoff between speed, memory use, and accuracy.

- Both GPUs are capable cards for LLM inference, within the memory limits of each.

FAQ: Frequently Asked Questions

What is Q4 and F16 quantization?

Quantization is a technique used to reduce the size of an LLM by representing its weights with fewer bits. F16 stores each weight as a 16-bit floating-point number, while Q4 formats use about 4 bits per weight (plus a small amount of per-block scaling data). This allows LLMs to run on devices with limited memory, like the RTX 4000 Ada 20GB. However, Q4 quantization can come with a slight loss in accuracy, which may lead to a decrease in the quality of the generated text.

What is the difference between a CPU and a GPU?

CPUs (Central Processing Units) are designed for general-purpose computing tasks, like running operating systems and applications. GPUs (Graphics Processing Units) are optimized for parallel processing, making them ideal for tasks like machine learning and deep learning, which involve complex mathematical computations.

How do I choose the right GPU for my LLM?

Consider the size of your LLM, your budget, and your performance requirements. If you're running large models such as Llama3 70B, the RTX A6000 48GB is the better choice. If you're working with smaller models and have a limited budget, the RTX 4000 Ada 20GB might be sufficient.

What are some other popular GPUs for LLM inference?

Some additional popular GPUs for LLM inference include:

- NVIDIA A100: A powerful GPU designed for high-performance computing and AI workloads.

- NVIDIA H100: NVIDIA's Hopper-generation data center GPU and the successor to the A100, designed for large-scale AI and high-performance computing.

- AMD MI250X: A powerful GPU designed for AI and high-performance computing.

Keywords

LLM, Large Language Model, Token Generation Speed, GPU, RTX 4000 Ada 20GB, RTX A6000 48GB, Llama3 8B, Llama3 70B, Quantization, Q4, F16, Inference, Performance, Benchmark, Memory, Speed, Accuracy, Use Cases, Recommendation, AI, Machine Learning, Deep Learning.