NVIDIA RTX 4000 Ada 20GB vs. NVIDIA RTX 5000 Ada 32GB for LLMs: Which is Faster in Token Generation Speed? Benchmark Analysis

Introduction

Large language models (LLMs) are advancing quickly, driven by progress in deep learning and increasingly capable hardware. Running these models locally requires a capable GPU, and choosing one can be difficult. This article compares two NVIDIA workstation GPUs, the RTX 4000 Ada 20GB and the RTX 5000 Ada 32GB, on token generation speed for Llama 3 models. We look at the strengths and weaknesses of each card to help you choose hardware for your LLM projects.

Imagine you want to build your own AI chatbot or experiment with creative text generation using LLMs. The GPU you choose determines how quickly responses are generated and which models and precisions fit in memory. This guide gives you the information to pick the right hardware for your LLM needs.

Performance Analysis: Token Generation Speed Comparison

This analysis focuses on token generation speed for two Llama 3 models, Llama 3 8B and Llama 3 70B, running on the RTX 4000 Ada 20GB and RTX 5000 Ada 32GB GPUs. We examine two formats: Q4_K_M (a 4-bit "k-quant" format from llama.cpp, where the M denotes the medium variant) and F16 (16-bit floating-point precision).

Understanding Quantization for Non-Technical Readers

Think of quantization as a way to make an LLM smaller so it runs more efficiently on a GPU. It's like using a smaller toolbox with fewer tools but still getting the job done! F16 is the full-size toolbox, storing each weight as a 16-bit number, while Q4_K_M is a much smaller, streamlined toolbox that stores each weight in roughly 4 to 5 bits.
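To make the toolbox analogy concrete, here is a minimal sketch of 4-bit quantization on a handful of made-up weights. It is a toy illustration only: it uses one shared scale per block and is not the actual Q4_K_M scheme used by llama.cpp, which is more elaborate. The function names and sample values are assumptions chosen for this example.

```python
def quantize_block(values, bits=4):
    """Map a block of floats to small signed integers sharing one scale."""
    qmax = 2 ** (bits - 1) - 1  # 7 for signed 4-bit codes
    max_abs = max(abs(v) for v in values)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    # Round each value to the nearest representable step and clamp to range.
    codes = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return scale, codes


def dequantize_block(scale, codes):
    """Recover approximate floats from the integer codes."""
    return [scale * c for c in codes]


# Hypothetical weights, not taken from any real model.
weights = [0.12, -0.53, 0.33, 0.07, -0.21, 0.48, -0.02, 0.29]

scale, codes = quantize_block(weights)
restored = dequantize_block(scale, codes)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("4-bit codes:", codes)
print(f"largest round-trip error: {max_error:.4f}")
```

Each weight now needs only 4 bits plus a share of the block's scale, at the cost of a small rounding error. That trade of size against precision is the idea behind quantized formats.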

RTX 4000 Ada 20GB vs. RTX 5000 Ada 32GB: Token Generation Speed Showdown

The following table presents the token generation speeds in tokens/second (measured with the llama.cpp library) for the specified LLM models and quantization modes:

| GPU Model | LLM Model | Quantization Mode | Tokens/second |
|---|---|---|---|
| RTX 4000 Ada 20GB | Llama 3 8B | Q4_K_M | 58.59 |
| RTX 4000 Ada 20GB | Llama 3 8B | F16 | 20.85 |
| RTX 5000 Ada 32GB | Llama 3 8B | Q4_K_M | 89.87 |
| RTX 5000 Ada 32GB | Llama 3 8B | F16 | 32.67 |
| RTX 4000 Ada 20GB | Llama 3 70B | Q4_K_M | N/A |
| RTX 4000 Ada 20GB | Llama 3 70B | F16 | N/A |
| RTX 5000 Ada 32GB | Llama 3 70B | Q4_K_M | N/A |
| RTX 5000 Ada 32GB | Llama 3 70B | F16 | N/A |

Observations and Insights:

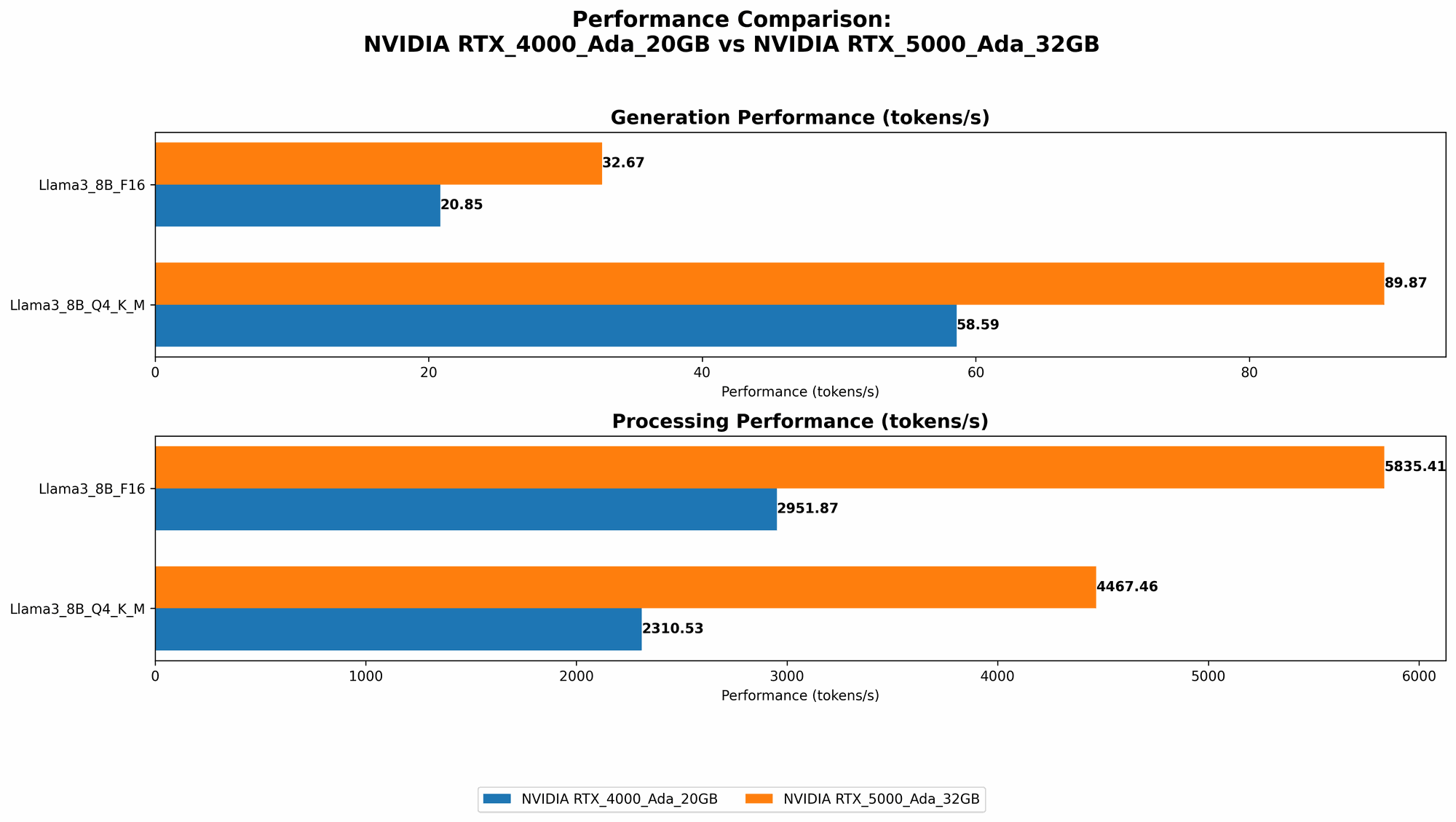

- RTX 5000 Ada 32GB outperforms RTX 4000 Ada 20GB: For Llama 3 8B, the RTX 5000 Ada 32GB is about 53% faster in Q4_K_M (89.87 vs. 58.59 tokens/second) and about 57% faster in F16 (32.67 vs. 20.85 tokens/second). Since these runs fit in memory on both cards, the gap most likely reflects the larger card's higher memory bandwidth and processing power rather than its extra capacity.

- Q4_K_M significantly faster than F16: On both GPUs, Q4_K_M generates tokens roughly 2.8 times faster than F16 (about 2.81x on the RTX 4000 Ada 20GB and 2.75x on the RTX 5000 Ada 32GB). The quantized weights are much smaller, so less data has to be read from GPU memory for each generated token.

- Llama 3 70B performance unavailable: The available data contains no Llama 3 70B results for either GPU. A model of this size generally needs more memory than either card provides, so running it locally may call for a larger GPU, multiple GPUs, or specialized AI accelerators.
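The relative speedups behind these observations can be recomputed directly from the table. The sketch below hard-codes the four measured Llama 3 8B values; the dictionary layout and variable names are illustrative choices, not part of the benchmark tooling.

```python
# Tokens/second for Llama 3 8B, copied from the table above.
results = {
    ("RTX 4000 Ada 20GB", "Q4_K_M"): 58.59,
    ("RTX 4000 Ada 20GB", "F16"): 20.85,
    ("RTX 5000 Ada 32GB", "Q4_K_M"): 89.87,
    ("RTX 5000 Ada 32GB", "F16"): 32.67,
}

small, large = "RTX 4000 Ada 20GB", "RTX 5000 Ada 32GB"

# How much faster the larger card is, per quantization mode.
gpu_speedup = {
    mode: results[(large, mode)] / results[(small, mode)]
    for mode in ("Q4_K_M", "F16")
}

# How much faster Q4_K_M is than F16, per card.
quant_speedup = {
    gpu: results[(gpu, "Q4_K_M")] / results[(gpu, "F16")]
    for gpu in (small, large)
}

for mode, ratio in gpu_speedup.items():
    print(f"{large} vs {small} ({mode}): {ratio:.2f}x")
for gpu, ratio in quant_speedup.items():
    print(f"Q4_K_M vs F16 on {gpu}: {ratio:.2f}x")
```

The GPU-to-GPU ratio is nearly the same in both modes (about 1.5x), while the choice of quantization has the larger effect (about 2.8x).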

Let's delve deeper into the performance aspects of each GPU, highlighting their strengths and weaknesses.

RTX 4000 Ada 20GB: A Solid Budget Choice

The RTX 4000 Ada 20GB is a good option for developers and enthusiasts looking for a balance between performance and price. Its 20GB of memory is sufficient for smaller models such as Llama 3 8B, and it reached 58.59 tokens/second with Q4_K_M in the benchmark above.

Strengths of RTX 4000 Ada 20GB:

- Value for money: This GPU provides a solid performance-to-price ratio, making it an attractive option for budget-conscious users.

- Sufficient for smaller LLMs: It handles Llama 3 8B comfortably, especially with the Q4_K_M quantization mode.

- Energy efficient: The Ada architecture improves efficiency by reducing power consumption while maintaining solid performance.

Weaknesses of RTX 4000 Ada 20GB:

- Limited by memory: 20GB is not enough for larger models like Llama 3 70B, and the table above has no 70B result for this card.

- Slower token generation: While still capable, the RTX 4000 Ada 20GB generates tokens at roughly 65% of the speed of the RTX 5000 Ada 32GB on Llama 3 8B.

RTX 5000 Ada 32GB: The Performance Powerhouse

The RTX 5000 Ada 32GB is the higher-end option of the two, suited to those who prioritize performance over cost. Its 32GB of memory leaves more room for larger models, and it posted the fastest token generation speeds in this comparison.

Strengths of RTX 5000 Ada 32GB:

- Large memory: 32GB provides headroom for models that do not fit in 20GB. Note, however, that no Llama 3 70B result was recorded on this card either, so 70B-class models likely remain out of reach on a single card.

- Faster token generation: The RTX 5000 Ada 32GB delivers the highest token generation speeds in this comparison (89.87 tokens/second with Q4_K_M and 32.67 with F16 on Llama 3 8B), making it the better fit for demanding tasks.

- Advanced architecture: The Ada architecture offers significant improvements over previous generations in performance and efficiency.

Weaknesses of RTX 5000 Ada 32GB:

- High cost: As the higher-end card, its price is significantly higher than that of the RTX 4000 Ada 20GB.

- Potential overkill: For smaller LLMs or limited workloads, the RTX 5000 Ada 32GB might be overkill and unnecessarily expensive.

Practical Recommendations for Use Cases

Here are recommendations for selecting the right GPU based on your LLM project and budget:

- Smaller LLM Models & Budget Constraints: If you're experimenting with Llama 3 8B or similar models on a tighter budget, the RTX 4000 Ada 20GB offers a good balance of performance and affordability. Use the Q4_K_M quantization mode for the fastest token generation.

- Larger LLM Models & Maximum Performance: If you want the fastest token generation or need more than 20GB of memory, the RTX 5000 Ada 32GB is the better choice of the two. Keep in mind that the benchmark data includes no Llama 3 70B results for either card, so plan on different hardware for models of that size.

FAQ: Your LLM and GPU Questions Answered

Q: What is the best GPU for running LLMs locally?

A: The "best" GPU depends on the model you want to run and your budget. For smaller models such as Llama 3 8B, the RTX 4000 Ada 20GB provides good value for money. For more memory headroom and faster token generation, the RTX 5000 Ada 32GB is the better choice of the two.

Q: What is the difference between F16 and Q4_K_M quantization?

A: Quantization reduces the size of a model's weights, which lowers memory use and speeds up token generation. F16 stores weights in 16-bit floating-point precision and serves as the unquantized baseline here, while Q4_K_M is a 4-bit k-quant format from llama.cpp (the M marks the medium variant). Q4_K_M is smaller and faster, but it may slightly reduce the accuracy of the model.

Q: Can I run Llama 3 70B on the RTX 4000 Ada 20GB?

A: It's highly unlikely. At F16 the weights of a 70-billion-parameter model take about 140GB, and even at 4 to 5 bits per weight they need roughly 35 to 45GB, which exceeds both the 20GB of the RTX 4000 Ada and the 32GB of the RTX 5000 Ada. This matches the table above, where no Llama 3 70B result is available for either card. Fitting such a model entirely in GPU memory requires a card or multi-GPU setup with well over 32GB.
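A rough way to check whether a model can fit is to multiply its parameter count by the bits stored per weight. The sketch below does this for the models and cards in this article. The parameter counts are the nominal values from the model names, and the 4.8 bits per weight used for Q4_K_M is an assumed approximate average, not a measured figure. The estimate covers weights only and ignores the KV cache and runtime overhead, so real requirements are higher.

```python
# Approximate average bits stored per weight. The Q4_K_M value is an
# assumption for illustration; the real average varies by model.
BITS_PER_WEIGHT = {"Q4_K_M": 4.8, "F16": 16.0}

# Nominal parameter counts taken from the model names.
PARAMS = {"Llama 3 8B": 8e9, "Llama 3 70B": 70e9}

# Memory capacity of each card in gigabytes.
VRAM_GB = {"RTX 4000 Ada 20GB": 20, "RTX 5000 Ada 32GB": 32}


def weight_memory_gb(n_params, bits_per_weight):
    """Memory needed for the weights alone, in decimal gigabytes."""
    return n_params * bits_per_weight / 8 / 1e9


def fits(model, fmt, gpu):
    """True if the weights alone are smaller than the card's memory."""
    needed = weight_memory_gb(PARAMS[model], BITS_PER_WEIGHT[fmt])
    return needed < VRAM_GB[gpu]


for model in PARAMS:
    for fmt in BITS_PER_WEIGHT:
        size = weight_memory_gb(PARAMS[model], BITS_PER_WEIGHT[fmt])
        verdict = {gpu: fits(model, fmt, gpu) for gpu in VRAM_GB}
        print(f"{model} {fmt}: ~{size:.1f} GB, fits: {verdict}")
```

Under these assumptions, Llama 3 8B fits on both cards in either format, while Llama 3 70B fits on neither, which is consistent with the N/A rows in the benchmark table.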

Q: Is there a difference between token generation and processing?

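A: Yes. Token generation is the speed at which the model produces new tokens (words or word fragments), one at a time, after the prompt has been read; it is the figure reported in this article. Prompt processing is the speed at which the model reads the input prompt, which is handled in parallel across the prompt's tokens and is therefore usually much faster per token.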
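Both figures are computed the same way, as tokens divided by elapsed seconds, but over different phases of a run. The sketch below shows the arithmetic with made-up timings; the `RunTiming` class and its numbers are hypothetical and do not come from the benchmark.

```python
from dataclasses import dataclass


@dataclass
class RunTiming:
    """Token counts and wall-clock durations for one inference run."""
    prompt_tokens: int
    prompt_seconds: float
    generated_tokens: int
    generation_seconds: float


def tokens_per_second(tokens, seconds):
    """Throughput for one phase of the run."""
    if seconds <= 0:
        raise ValueError("elapsed time must be positive")
    return tokens / seconds


# Hypothetical timings for illustration only.
run = RunTiming(
    prompt_tokens=512,
    prompt_seconds=0.25,
    generated_tokens=128,
    generation_seconds=2.0,
)

prompt_tps = tokens_per_second(run.prompt_tokens, run.prompt_seconds)
generation_tps = tokens_per_second(run.generated_tokens, run.generation_seconds)

print(f"prompt processing: {prompt_tps:.1f} tokens/second")
print(f"token generation:  {generation_tps:.1f} tokens/second")
```

Reporting the two phases separately avoids mixing the fast, parallel prompt phase with the slower, sequential generation phase in a single number.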

Q: How can I choose the right GPU for my LLM needs?

A: Consider the size of the LLM model you plan to run, your budget, and the importance of token generation speed. If you need to run large models and prioritize performance, invest in the more powerful GPU. If you're working with smaller models and budget is a constraint, a more affordable option will suffice.

Keywords

NVIDIA RTX 4000 Ada 20GB, RTX 5000 Ada 32GB, Llama 3 8B, Llama 3 70B, LLM, token generation speed, quantization, Q4_K_M, F16, GPU, benchmark analysis, performance comparison, deep learning, AI, machine learning, chatbot, text generation, natural language processing, NLP.