NVIDIA RTX 4000 Ada 20GB vs. NVIDIA RTX 3090 24GB x2 for LLMs: Which Is Faster at Token Generation? Benchmark Analysis

Introduction

In the world of Large Language Models (LLMs), speed is king. A faster inference speed means a smoother user experience, making LLMs more accessible and useful. But with a vast array of hardware options available, choosing the right device for optimal performance can be a daunting task.

Today, we dive into a head-to-head comparison between two popular GPU choices for running LLMs: a single NVIDIA RTX 4000 Ada 20GB and a pair of NVIDIA RTX 3090 24GB cards, with the model split across both GPUs. We'll analyze their token generation speed, explore their strengths and weaknesses, and provide practical recommendations for different LLM use cases.

Think of it as a GPU drag race – who will win the token generation championship? Buckle up, folks, it's going to be a thrilling ride!

Comparison of NVIDIA RTX 4000 Ada 20GB and NVIDIA 3090 24GB x2 for Llama 3 Models

This benchmark delves into the performance of these devices running Llama 3 models in different quantization settings.

For this comparison, the data covers only the Llama 3 8B and Llama 3 70B models. Results for other models, such as the earlier Llama 7B or Llama 70B, are not available.

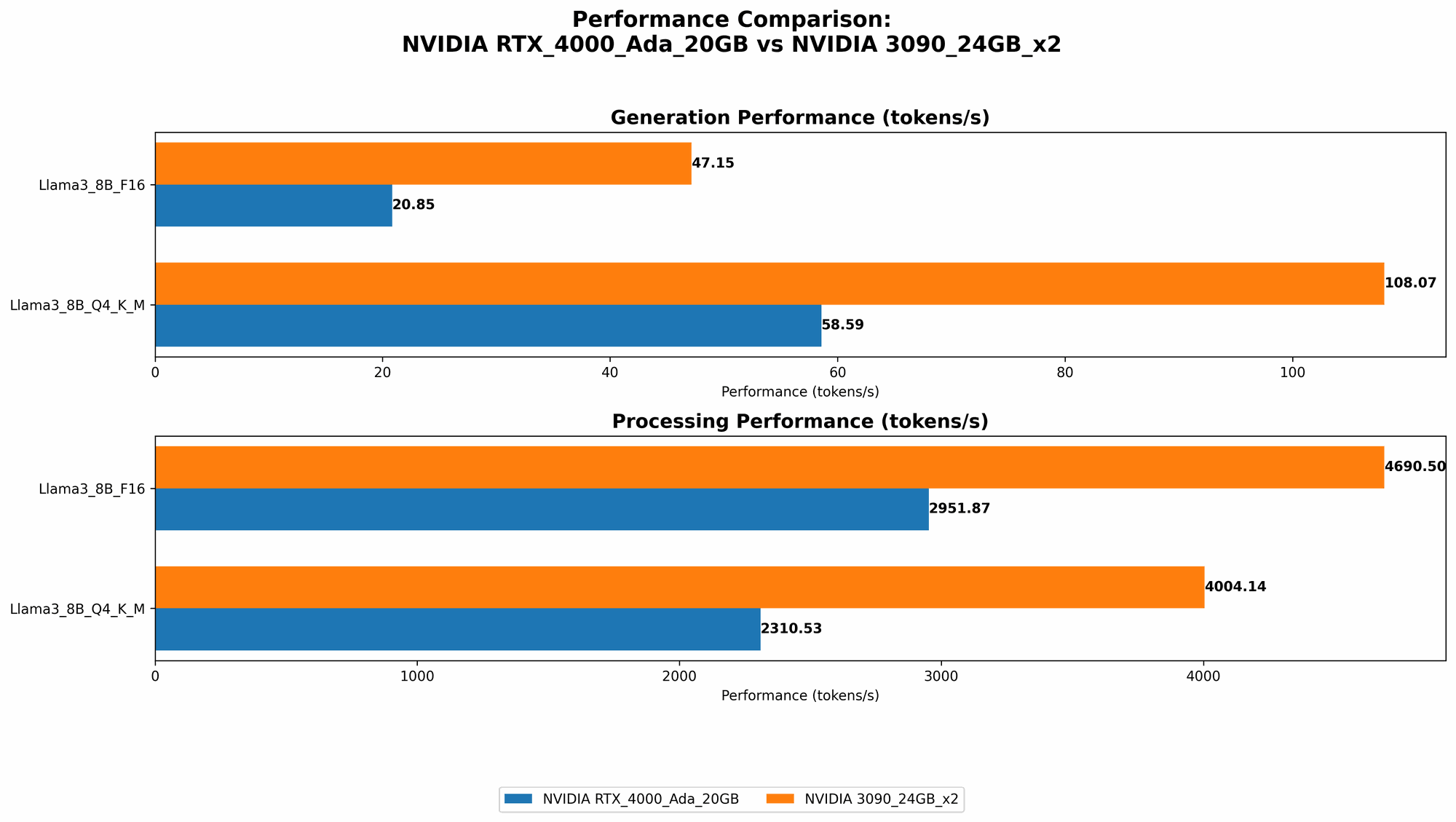

Token Generation Speed Comparison: NVIDIA RTX 4000 Ada 20GB vs. NVIDIA 3090 24GB x2

| Model | Quantization | RTX 4000 Ada 20GB (tokens/s) | RTX 3090 24GB x2 (tokens/s) |
|---|---|---|---|
| Llama 3 8B | Q4_K_M | 58.59 | 108.07 |
| Llama 3 8B | F16 | 20.85 | 47.15 |
| Llama 3 70B | Q4_K_M | N/A | 16.29 |
| Llama 3 70B | F16 | N/A | N/A |

Key Takeaways:

- For Llama 3 8B, the RTX 3090 x2 configuration clearly outperforms the RTX 4000 Ada 20GB in token generation speed: about 1.8x faster with Q4_K_M quantization (108.07 vs. 58.59 tokens/s) and about 2.3x faster at unquantized F16 (47.15 vs. 20.85 tokens/s).

- For Llama 3 70B, only the 3090 x2 configuration has a result: 16.29 tokens/s at Q4_K_M. No 70B result is reported for the RTX 4000 Ada 20GB, which is consistent with its 20 GB of VRAM being too small to hold the model's weights even at Q4_K_M. Neither setup has an F16 result for the 70B model, whose weights alone take roughly 140 GB at 16 bits per parameter.
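The ratios behind these takeaways are simple divisions of the table's numbers. A minimal Python sketch, with the results hard-coded from the benchmark table (`RESULTS` and `speedup` are illustrative names, not part of any tool):

```python
from typing import Dict, Optional, Tuple

# Token generation speeds in tokens/second, copied from the benchmark table.
# Each value is (RTX 4000 Ada 20GB, RTX 3090 24GB x2); None means no result was reported.
RESULTS: Dict[Tuple[str, str], Tuple[Optional[float], Optional[float]]] = {
    ("Llama 3 8B", "Q4_K_M"): (58.59, 108.07),
    ("Llama 3 8B", "F16"): (20.85, 47.15),
    ("Llama 3 70B", "Q4_K_M"): (None, 16.29),
    ("Llama 3 70B", "F16"): (None, None),
}


def speedup(rtx4000: Optional[float], dual_3090: Optional[float]) -> Optional[float]:
    """How many times faster the dual-3090 setup is; None if either result is missing."""
    if rtx4000 is None or dual_3090 is None:
        return None
    return dual_3090 / rtx4000


for (model, quant), (single, dual) in RESULTS.items():
    ratio = speedup(single, dual)
    label = f"{ratio:.2f}x" if ratio is not None else "n/a (missing result)"
    print(f"{model} {quant}: {label}")
```

Running it prints 1.84x for the 8B Q4_K_M row and 2.26x for the 8B F16 row; both 70B rows print n/a because the RTX 4000 Ada has no result to compare against.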

Performance Analysis of NVIDIA RTX 4000 Ada 20GB

Strengths:

- Cost-effective for smaller models. The RTX 4000 Ada 20GB offers a competitive price point and performs well with smaller models (58.59 tokens/s on Llama 3 8B at Q4_K_M), making it a viable option for budget-conscious users.

- Lower power consumption. The RTX 4000 Ada 20GB is rated at 130 W, while each RTX 3090 is rated at 350 W (700 W for the pair), making the RTX 4000 Ada the more energy-efficient choice for long-term use.
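One way to compare efficiency is energy per generated token: power divided by tokens per second. The Python sketch below assumes each setup draws its full rated board power (130 W for the RTX 4000 Ada, 350 W per RTX 3090) for the whole run. That assumption is ours, not a measurement, so the results are rough upper bounds; the names are illustrative.

```python
# Rated board power in watts. Actual draw while generating text is usually lower,
# so treat the results as rough upper bounds, not measurements.
RATED_POWER_W = {
    "RTX 4000 Ada 20GB": 130.0,
    "RTX 3090 24GB x2": 2 * 350.0,  # two cards at 350 W each
}

# Llama 3 8B Q4_K_M generation speeds (tokens/second) from the benchmark table.
Q4_K_M_8B_TOKENS_PER_S = {
    "RTX 4000 Ada 20GB": 58.59,
    "RTX 3090 24GB x2": 108.07,
}


def joules_per_token(power_watts: float, tokens_per_second: float) -> float:
    """Energy per generated token, assuming the GPUs draw power_watts the whole time."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    # Watts are joules per second, so dividing by tokens per second gives joules per token.
    return power_watts / tokens_per_second


for device, power in RATED_POWER_W.items():
    energy = joules_per_token(power, Q4_K_M_8B_TOKENS_PER_S[device])
    print(f"{device}: {energy:.2f} J/token (upper bound)")
```

Under this assumption the RTX 4000 Ada spends about 2.2 J per token versus about 6.5 J for the 3090 pair. When a model is split layer by layer, each 3090 sits partly idle, so the real gap is likely smaller; reading actual power draw with `nvidia-smi` during a run gives a better estimate.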

Weaknesses:

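- Limited capacity for larger models. With 20 GB of VRAM, the RTX 4000 Ada 20GB cannot hold Llama 3 70B entirely on the GPU, even at Q4_K_M, which is why the benchmark has no 70B result for it. Running such a model would mean offloading part of it to system RAM, which typically slows generation considerably.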
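Whether a model fits comes down to simple arithmetic: parameters times bits per weight, divided by 8 bits per byte. The Python sketch below estimates weight memory only, using nominal parameter counts (8B and 70B) and an assumed average of about 4.8 bits per weight for Q4_K_M; the real figure varies by model, and the KV cache and runtime buffers need extra room on top. The helper names are hypothetical.

```python
# Approximate bits stored per weight. F16 is exact; the Q4_K_M figure is an assumed
# average, since llama.cpp mixes block formats and the real value varies by model.
BITS_PER_WEIGHT = {"F16": 16.0, "Q4_K_M": 4.8}

# Total VRAM of each setup in GB.
VRAM_GB = {"RTX 4000 Ada 20GB": 20.0, "RTX 3090 24GB x2": 48.0}


def weight_memory_gb(params_billion: float, bits_per_weight: float) -> float:
    """Memory for the weights alone: parameters x bits / 8 bits per byte, in GB."""
    return params_billion * bits_per_weight / 8


def weights_fit(params_billion: float, quant: str, vram_gb: float) -> bool:
    """True if the weights alone fit; the KV cache and runtime buffers need extra room."""
    return weight_memory_gb(params_billion, BITS_PER_WEIGHT[quant]) < vram_gb


for model, params in (("Llama 3 8B", 8.0), ("Llama 3 70B", 70.0)):
    for quant, bits in BITS_PER_WEIGHT.items():
        need = weight_memory_gb(params, bits)
        verdicts = ", ".join(
            f"{gpu}: {'fits' if weights_fit(params, quant, vram) else 'too large'}"
            for gpu, vram in VRAM_GB.items()
        )
        print(f"{model} {quant}: ~{need:.0f} GB of weights ({verdicts})")
```

The estimates line up with the table's gaps: Llama 3 70B at Q4_K_M (about 42 GB) is too large for 20 GB but fits in 48 GB, and 70B at F16 (about 140 GB) is too large for both setups.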

Use Case Recommendations:

- Ideal for smaller models: The RTX 4000 Ada 20GB is well suited to smaller models like Llama 3 8B or even smaller variants like Llama 7B, offering a good balance of performance and cost.

- Resource-constrained environments: Its lower power consumption and compact form factor make it suitable for deployments with limited power or space.

Performance Analysis of NVIDIA 3090 24GB x2

Strengths:

- Exceptional performance for large models. The 3090 x2 configuration is the only one of the two that runs Llama 3 70B in this benchmark, generating 16.29 tokens/s at Q4_K_M.

- Scalability for demanding tasks. With 48 GB of combined VRAM, it has headroom for more demanding LLM tasks, such as generating longer texts (the model's key/value cache grows with every token of context) or fine-tuning models, potentially using distributed training techniques to spread the work across both GPUs.
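Longer texts need more memory because the model keeps a key/value (KV) cache entry for every token in the context. A rough Python estimate, assuming Llama 3's published shapes (8B: 32 layers; 70B: 80 layers; both with 8 key/value heads of dimension 128, thanks to grouped-query attention) and a 16-bit cache; `kv_cache_bytes` is a hypothetical helper:

```python
from typing import Dict, Tuple

# (layers, key/value heads, head dimension) for each model, per Llama 3's published configs.
LLAMA3_SHAPES: Dict[str, Tuple[int, int, int]] = {
    "Llama 3 8B": (32, 8, 128),
    "Llama 3 70B": (80, 8, 128),
}


def kv_cache_bytes(n_layers: int, n_kv_heads: int, head_dim: int,
                   n_tokens: int, bytes_per_value: int = 2) -> int:
    """KV cache size: one key and one value vector per layer, per KV head, per token."""
    per_token = 2 * n_layers * n_kv_heads * head_dim * bytes_per_value  # 2 = key + value
    return per_token * n_tokens


for model, (layers, kv_heads, head_dim) in LLAMA3_SHAPES.items():
    size = kv_cache_bytes(layers, kv_heads, head_dim, n_tokens=8192)
    print(f"{model}, 8,192-token context: {size / 2**30:.1f} GiB of KV cache")
```

This gives 1.0 GiB for Llama 3 8B and 2.5 GiB for Llama 3 70B at an 8,192-token context. The cache grows linearly with context length and comes on top of the weights, which is where the 3090 pair's extra 28 GB of VRAM helps.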

Weaknesses:

- Higher cost and power consumption. The investment in two high-end GPUs comes with a significant cost and power consumption overhead compared to the RTX 4000 Ada 20GB.

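- More complex setup and configuration. LLM inference does not use SLI; instead, the inference software (for example, llama.cpp) must be configured to split the model across both GPUs. The system also needs a power supply, motherboard, and case that can accommodate two large 350 W cards.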
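Splitting a model across two cards comes down to deciding which GPU holds which layers. llama.cpp, for example, has a `--tensor-split` option that takes per-GPU proportions. The Python sketch below only illustrates the idea behind a layer-wise split; `assign_layers` is a hypothetical helper, not llama.cpp's actual code. It assumes Llama 3 70B's 80 transformer layers and two identical 24 GB cards.

```python
from typing import List, Sequence


def assign_layers(n_layers: int, split: Sequence[float]) -> List[range]:
    """Give each GPU a contiguous run of layers in proportion to its share of `split`.

    Hypothetical helper for illustration; it is not llama.cpp's implementation.
    """
    total = sum(split)
    if n_layers < 0 or total <= 0 or any(share < 0 for share in split):
        raise ValueError("need a non-negative layer count and non-negative shares with a positive sum")
    ranges: List[range] = []
    start = 0
    cumulative = 0.0
    for i, share in enumerate(split):
        cumulative += share
        # The last GPU always ends at n_layers, so rounding can never drop a layer.
        end = n_layers if i == len(split) - 1 else round(n_layers * cumulative / total)
        ranges.append(range(start, end))
        start = end
    return ranges


# Two identical 24 GB cards get an even split of Llama 3 70B's 80 layers.
for gpu, layers in enumerate(assign_layers(80, [24, 24])):
    print(f"GPU {gpu}: layers {layers.start}-{layers.stop - 1} ({len(layers)} layers)")
```

With a layer split, each token passes through GPU 0's layers and then GPU 1's, so the cards mostly work one after the other rather than simultaneously. Unequal proportions (for example `[3, 1]`) are useful when the cards have different amounts of free memory.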

Use Case Recommendations:

- High-performance computing: When speed is paramount, especially for large models, the 3090 x2 configuration provides the horsepower needed for demanding tasks like generating long texts, exploring complex prompts, or fine-tuning models.

- Production deployments: For mission-critical applications where performance is key, the 3090 x2 configuration offers the throughput and memory headroom needed for production environments.

- Research and experimentation: Researchers and experimenters pushing the boundaries of LLM performance will benefit from the powerful capabilities of the 3090 x2 configuration.

Conclusion

The choice between the NVIDIA RTX 4000 Ada 20GB and the NVIDIA 3090 24GB x2 for running LLMs ultimately depends on your specific needs and priorities.

For smaller models and budget-conscious users, the RTX 4000 Ada 20GB provides a cost-effective solution. For demanding tasks with larger models, the 3090 x2 configuration offers exceptional performance, but at a higher cost and power consumption.

Analyzing your requirements for model size, performance, and budget is crucial in making the right choice.

Note: The performance data presented in this article may vary depending on various factors such as the specific LLM model, configuration, and optimization techniques used. It is always recommended to conduct your own benchmark tests to assess the optimal performance for your specific use case.
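If you run your own tests, a basic measurement is the number of generated tokens divided by wall-clock time, repeated a few times. Below is a minimal Python sketch under stated assumptions: `generate` is a hypothetical callable that yields one item per generated token, which you would wrap around your own inference library's streaming call.

```python
import statistics
import time
from typing import Callable, Iterable


def tokens_per_second(
    generate: Callable[[], Iterable[object]],
    runs: int = 3,
    warmup: int = 1,
    clock: Callable[[], float] = time.perf_counter,
) -> float:
    """Median generation speed over several timed runs.

    `generate` is a hypothetical callable that yields one item per generated token.
    """
    if runs < 1:
        raise ValueError("runs must be at least 1")
    for _ in range(warmup):
        # Untimed runs let caches and GPU clocks settle before measuring.
        for _ in generate():
            pass
    speeds = []
    for _ in range(runs):
        start = clock()
        n_tokens = sum(1 for _ in generate())
        elapsed = clock() - start
        if elapsed <= 0:
            raise ValueError("clock did not advance; cannot compute a rate")
        speeds.append(n_tokens / elapsed)
    # The median is less sensitive than the mean to a single slow outlier run.
    return statistics.median(speeds)
```

Note that this timing includes prompt processing unless your `generate` wrapper excludes it. llama.cpp's `llama-bench` tool reports prompt processing and token generation speeds separately, which makes its generation numbers easier to compare with figures like the ones in this article.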

FAQs

What are LLMs and why are they important?

LLMs, or Large Language Models, are powerful AI models trained on massive amounts of text data. They can understand and generate human-like text: translating languages, writing different kinds of creative content, and answering your questions in an informative way. Think of them as a superpower for text: they can do things with language that we never thought possible before!

What is quantization and how does it affect performance?

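Think of quantization as a form of "data compression" for LLMs. It reduces the precision of the numbers used to represent the model's weights, making the model smaller and faster to run, but it can also slightly reduce the accuracy of its outputs. In the table above, F16 is the unquantized 16-bit baseline, while Q4_K_M stores most weights in roughly 4 to 5 bits; on the RTX 4000 Ada, that makes Llama 3 8B about 2.8x faster (58.59 vs. 20.85 tokens/s). It's a trade: a little bit of detail for a lot more speed!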
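To make the trade-off concrete, here is a toy Python version of the simplest scheme, symmetric 4-bit "absmax" quantization of one small block of weights. It is a simplified stand-in chosen for illustration: llama.cpp's Q4_K_M is a more elaborate k-quant format with larger blocks and extra per-block parameters, and the weights below are made up.

```python
from typing import List, Sequence, Tuple


def quantize_block(weights: Sequence[float], bits: int = 4) -> Tuple[float, List[int]]:
    """Symmetric absmax quantization: one float scale plus one small integer per weight."""
    qmax = 2 ** (bits - 1) - 1  # 7 for 4-bit signed integers
    absmax = max(abs(w) for w in weights)
    scale = absmax / qmax if absmax > 0 else 1.0
    # Round each weight to the nearest step and clamp to the signed range [-qmax - 1, qmax].
    q = [max(-qmax - 1, min(qmax, round(w / scale))) for w in weights]
    return scale, q


def dequantize_block(scale: float, q: Sequence[int]) -> List[float]:
    """Recover approximate weights by multiplying the integers back by the scale."""
    return [scale * v for v in q]


weights = [0.12, -0.5, 0.33, 0.07, -0.21, 0.49, -0.03, 0.0]  # made-up example weights
scale, q = quantize_block(weights)
restored = dequantize_block(scale, q)
worst = max(abs(a - b) for a, b in zip(weights, restored))
print(q)
print(f"largest error: {worst:.4f} (at most half a step, {scale / 2:.4f})")
```

Each weight shrinks from 16 bits to a 4-bit integer plus a share of one scale per block, and the rounding error per weight is at most half a quantization step. That error is the "little bit of detail" being traded away.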

Which device is suitable for production environments?

For production deployments, the NVIDIA RTX 3090 24GB x2 configuration provides the throughput and memory headroom needed, especially for demanding LLM tasks. But remember, the RTX 4000 Ada 20GB could be a decent option for less demanding tasks or for deployments with budget constraints.

Can I use an RTX 4000 Ada 20GB for research and experimentation?

You can certainly use the RTX 4000 Ada 20GB for research, especially if you are exploring smaller LLM models. While it may not be the top choice for pushing the boundaries of LLM performance, it's a good starting point for exploring and experimenting with smaller models.

Keywords

LLM, Large Language Model, NVIDIA RTX 4000 Ada 20GB, NVIDIA 3090 24GB, Token Generation Speed, Benchmark Analysis, Llama 3, Quantization, Performance, Cost-Effectiveness, Power Consumption, Use Case Recommendations, Production Deployment, Research and Experimentation.