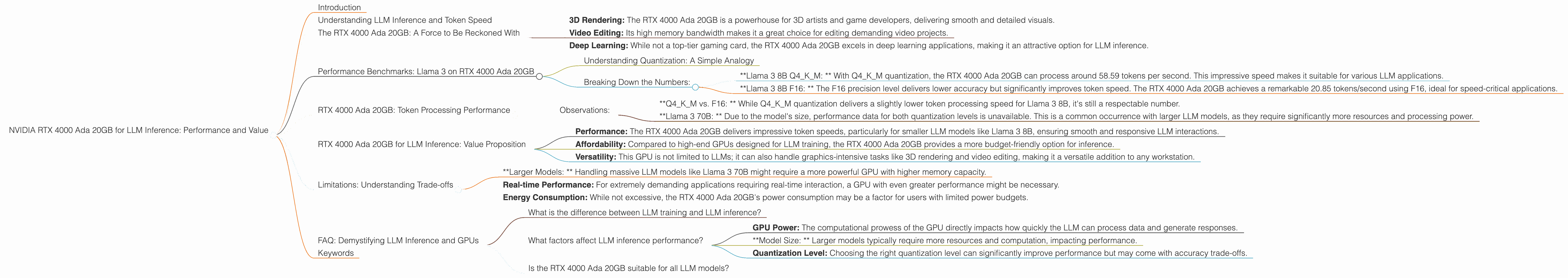

NVIDIA RTX 4000 Ada 20GB for LLM Inference: Performance and Value

Introduction

Large language models (LLMs) are advancing quickly, and so is the demand for hardware that can run them. If you're a developer or tech enthusiast exploring how to run LLMs locally, you've probably come across the NVIDIA RTX 4000 Ada 20GB. Although this GPU is designed for professional 3D graphics, it is also a solid choice for LLM inference, offering a good balance of performance and affordability.

In this article, we'll explore the RTX 4000 Ada 20GB's capabilities for LLM inference, analyze its measured performance on Llama 3 models, and weigh its value proposition. We'll use benchmark data to paint a clear picture of this GPU's strengths and limitations, helping you determine if it's the right fit for your LLM projects.

Understanding LLM Inference and Token Speed

Before diving into the RTX 4000 Ada 20GB, let's briefly clarify LLM inference. Inference is the process of using an already trained LLM to generate outputs such as text, code, summaries, or translations. Think of it like asking a trained expert a question and receiving an informed response.

One crucial metric for evaluating LLM inference performance is token speed. Imagine tokens as the building blocks of language, like words or parts of words. Token speed represents how many tokens per second (tokens/second) a GPU can process, which directly impacts the speed of your LLM's responses.
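To make the link between token speed and response time concrete, here is a minimal sketch. The function name, the 500-token reply length, and the two rates are illustrative assumptions, not measurements; the calculation also assumes a steady generation rate.

```python
def generation_time_seconds(num_tokens: int, tokens_per_second: float) -> float:
    """Return the time needed to generate num_tokens at a steady rate."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return num_tokens / tokens_per_second


# Hypothetical 500-token reply at two illustrative generation rates.
for rate in (20.0, 60.0):
    seconds = generation_time_seconds(500, rate)
    print(f"{rate:.0f} tokens/s -> {seconds:.1f} s")
```

Tripling the token speed cuts the wait for the same reply to a third, which is why tokens/second is the headline number in most inference benchmarks.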

The RTX 4000 Ada 20GB: A Force to Be Reckoned With

The RTX 4000 Ada 20GB is a mid-range professional workstation GPU, a workhorse if you will, that performs well across a range of applications, including:

- 3D Rendering: The RTX 4000 Ada 20GB is well suited to 3D artists and game developers, delivering smooth and detailed visuals.

- Video Editing: Its 20GB of memory makes it a good choice for editing demanding video projects.

- Deep Learning: Although it is not designed as a gaming card, the RTX 4000 Ada 20GB handles deep learning workloads well, making it an attractive option for LLM inference.

Performance Benchmarks: Llama 3 on RTX 4000 Ada 20GB

Let's examine the RTX 4000 Ada 20GB's performance using real-world data. We'll focus on the Llama 3 family of LLMs, which are renowned for their high quality and versatility.

Here's a rundown of the RTX 4000 Ada 20GB's token speeds for various Llama 3 models and configurations:

| LLM Model | Quantization | Token Speed (Tokens / Second) |
|---|---|---|
| Llama 3 8B | Q4_K_M | 58.59 |
| Llama 3 8B | F16 | 20.85 |
| Llama 3 70B | Q4_K_M | N/A (data not available) |
| Llama 3 70B | F16 | N/A (data not available) |

Understanding Quantization: A Simple Analogy

Quantization is a technique used to reduce the size of LLMs, making them easier to fit in memory and faster to run. Imagine you have a giant library filled with books on every topic under the sun. To make it easier to navigate, you can organize the books into smaller categories, like "Fiction," "Science," and "History."

Similarly, quantization stores a model's weights at lower numeric precision, for example about 4 bits per weight instead of 16, rounding each weight into one of a small number of "categories." This trades a small amount of accuracy for a much smaller model, which can significantly improve generation speed.
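The idea can be sketched with a simple symmetric 4-bit scheme. This is a deliberately simplified illustration and not the Q4_K_M format itself, which is more elaborate; the function names and the sample weights are made up for the example.

```python
def quantize_block(values, bits=4):
    """Map floats to signed integers that share a single scale factor."""
    qmax = 2 ** (bits - 1) - 1  # 7 for 4-bit signed values
    max_abs = max(abs(v) for v in values)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    # Round each value to the nearest step and clamp to the allowed range.
    quantized = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return quantized, scale


def dequantize_block(quantized, scale):
    """Recover approximate floats from the integers and the shared scale."""
    return [q * scale for q in quantized]


# Made-up weights standing in for one small block of a model.
weights = [0.12, -0.53, 0.97, -0.08, 0.40, -0.91, 0.33, 0.05]
q, scale = quantize_block(weights)
restored = dequantize_block(q, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print("quantized:", q)
print(f"scale={scale:.4f}, max rounding error={max_error:.4f}")
```

Each weight now needs only 4 bits plus a share of one scale value, and the rounding error per weight is at most half a step. That small error is the accuracy cost quantization pays for the size reduction.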

Breaking Down the Numbers:

- Llama 3 8B Q4_K_M: With Q4_K_M quantization, the RTX 4000 Ada 20GB generates around 58.59 tokens per second, fast enough for interactive use such as chat and code assistance.

- Llama 3 8B F16: F16 keeps the weights at 16-bit precision, which preserves accuracy but makes the model larger and slower to run. The RTX 4000 Ada 20GB reaches 20.85 tokens/second with F16, roughly a third of the Q4_K_M rate, so F16 suits cases where output fidelity matters more than speed.

RTX 4000 Ada 20GB: Token Processing Performance

Let's shift our focus to token processing speed, which measures how quickly the GPU works through the tokens of your prompt before it starts generating a reply. The higher this value, the sooner the model begins to respond, especially with long prompts.

Here's what the data reveals:

| LLM Model | Quantization | Token Processing Speed (Tokens / Second) |
|---|---|---|
| Llama 3 8B | Q4_K_M | 2310.53 |
| Llama 3 8B | F16 | 2951.87 |
| Llama 3 70B | Q4_K_M | N/A (data not available) |
| Llama 3 70B | F16 | N/A (data not available) |

Observations:

- Q4_K_M vs. F16: For Llama 3 8B, Q4_K_M processes tokens about 22% slower than F16 (2310.53 vs. 2951.87 tokens/second), the reverse of the generation results, but it is still a respectable number.

- Llama 3 70B: No performance data is available for either quantization level. The likely reason is memory: at F16 a 70B-parameter model needs roughly 140GB for its weights alone, and even a 4-bit version needs about 40GB, both well beyond this card's 20GB.
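The two tables can be combined into a rough end-to-end latency estimate. The sketch below assumes the token processing figure applies to reading the prompt and the token speed figure applies to generating the reply; the 1000-token prompt and 300-token reply are hypothetical, and real latency also depends on factors not modeled here.

```python
def estimate_latency(prompt_tokens, output_tokens, prompt_tps, generation_tps):
    """Rough latency in seconds: time to read the prompt plus time to generate."""
    prompt_time = prompt_tokens / prompt_tps
    generation_time = output_tokens / generation_tps
    return prompt_time + generation_time


# Llama 3 8B figures from the two tables above (tokens/second).
benchmarks = {
    "Q4_K_M": {"prompt_tps": 2310.53, "generation_tps": 58.59},
    "F16": {"prompt_tps": 2951.87, "generation_tps": 20.85},
}

# Hypothetical request: 1000-token prompt, 300-token reply.
for name, speeds in benchmarks.items():
    total = estimate_latency(1000, 300, **speeds)
    print(f"{name}: about {total:.1f} s")
```

Under these assumptions generation dominates the total, so Q4_K_M finishes well ahead of F16 despite its slower prompt processing.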

RTX 4000 Ada 20GB for LLM Inference: Value Proposition

The RTX 4000 Ada 20GB offers a compelling value proposition for those looking to run LLMs locally:

- Performance: The RTX 4000 Ada 20GB delivers impressive token speeds, particularly for smaller LLM models like Llama 3 8B, ensuring smooth and responsive LLM interactions.

- Affordability: Compared to high-end GPUs designed for LLM training, the RTX 4000 Ada 20GB provides a more budget-friendly option for inference.

- Versatility: This GPU is not limited to LLMs; it can also handle graphics-intensive tasks like 3D rendering and video editing, making it a versatile addition to any workstation.

Limitations: Understanding Trade-offs

While the RTX 4000 Ada 20GB is a powerful card for LLM inference, it's important to be aware of its limitations:

- Larger Models: Massive models like Llama 3 70B need more memory than this card's 20GB, so they call for a GPU (or several GPUs) with higher memory capacity.

- Real-time Performance: For extremely demanding applications requiring real-time interaction, a GPU with even greater performance might be necessary.

- Energy Consumption: While not excessive, the RTX 4000 Ada 20GB's power consumption may be a factor for users with limited power budgets.
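The memory limit on larger models can be checked with back-of-the-envelope arithmetic. This sketch counts weights only and ignores the KV cache and runtime overhead, so real requirements are higher. The nominal parameter counts (8 and 70 billion) and the figure of roughly 4.8 bits per weight for Q4_K_M are assumptions for illustration.

```python
VRAM_GB = 20  # memory on the RTX 4000 Ada 20GB


def weight_memory_gb(params_billion, bits_per_weight):
    """Approximate size of the model weights in GB (weights only)."""
    return params_billion * bits_per_weight / 8


# Assumed bits per weight: 16 for F16, about 4.8 for Q4_K_M (approximate).
configs = [
    ("Llama 3 8B", 8, "Q4_K_M", 4.8),
    ("Llama 3 8B", 8, "F16", 16),
    ("Llama 3 70B", 70, "Q4_K_M", 4.8),
    ("Llama 3 70B", 70, "F16", 16),
]

for model, params, quant, bits in configs:
    size = weight_memory_gb(params, bits)
    verdict = "fits" if size < VRAM_GB else "does not fit"
    print(f"{model} {quant}: ~{size:.0f} GB of weights, {verdict} in {VRAM_GB} GB")
```

By this estimate both 8B variants fit, with F16 leaving little headroom, while neither 70B variant comes close.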

FAQ: Demystifying LLM Inference and GPUs

What is the difference between LLM training and LLM inference?

LLM training is the process of creating an LLM model by feeding it massive amounts of data. Imagine teaching a student a subject by providing them with countless textbooks and exercises. Once trained, the LLM model can then be used for inference, where it responds to prompts and provides outputs. Think of this as the student using their gained knowledge to answer questions or solve problems.

What factors affect LLM inference performance?

Several factors influence LLM inference performance, including:

- GPU Power: The computational prowess of the GPU directly impacts how quickly the LLM can process data and generate responses.

- Model Size: Larger models typically require more resources and computation, impacting performance.

- Quantization Level: Choosing the right quantization level can significantly improve performance but may come with accuracy trade-offs.

Is the RTX 4000 Ada 20GB suitable for all LLM models?

While the RTX 4000 Ada 20GB performs admirably for smaller LLM models like Llama 3 8B, it may not be ideal for larger, more demanding models. For those, you might want to consider a more powerful GPU.
