NVIDIA L40S 48GB vs. NVIDIA A100 PCIe 80GB for LLMs: Which is Faster in Token Generation Speed? Benchmark Analysis

Introduction

Large language models (LLMs) are spreading fast, and with them come questions about the best hardware for running them. For developers and enthusiasts exploring local LLM deployment, two popular choices are the NVIDIA L40S 48GB and the NVIDIA A100 PCIe 80GB. Both are powerful data-center GPUs, but which one is faster at token generation? This article walks through a benchmark comparison of the two GPUs running Llama3 models, focusing on generation speed.

Imagine you're building a chatbot that needs to generate responses in real-time. The speed at which your GPU can spit out tokens (the building blocks of text) directly impacts user experience. A faster GPU means snappier responses and a more fluid interaction.
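To make that concrete, here is a rough sketch of how generation speed translates into waiting time. It uses the Llama3 8B Q4_K_M rates reported later in this article; the 200-token reply length is a made-up example value, and the estimate ignores prompt processing and any network or application overhead.

```python
def reply_seconds(num_tokens: int, tokens_per_second: float) -> float:
    """Time to generate num_tokens at a steady generation rate."""
    return num_tokens / tokens_per_second


# Hypothetical reply length, chosen only for illustration.
REPLY_TOKENS = 200

# Llama3 8B Q4_K_M generation rates from the benchmark tables in this article.
RATES = {
    "NVIDIA L40S 48GB": 113.6,
    "NVIDIA A100 PCIe 80GB": 138.31,
}

for gpu, rate in RATES.items():
    print(f"{gpu}: {reply_seconds(REPLY_TOKENS, rate):.2f} s for {REPLY_TOKENS} tokens")
```

For short replies from a small model the gap is a fraction of a second; it grows with longer outputs and larger models.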

The sections below compare the NVIDIA L40S 48GB and the NVIDIA A100 PCIe 80GB to help you choose a GPU for your LLM projects.

Comparing NVIDIA L40S 48GB and NVIDIA A100 PCIe 80GB for Llama3 Models

Let's dive into the numbers! We'll analyze the token generation speeds of these GPUs across different Llama3 models. Note: The results are based on benchmarks using llama.cpp and data provided by ggerganov and XiongjieDai.

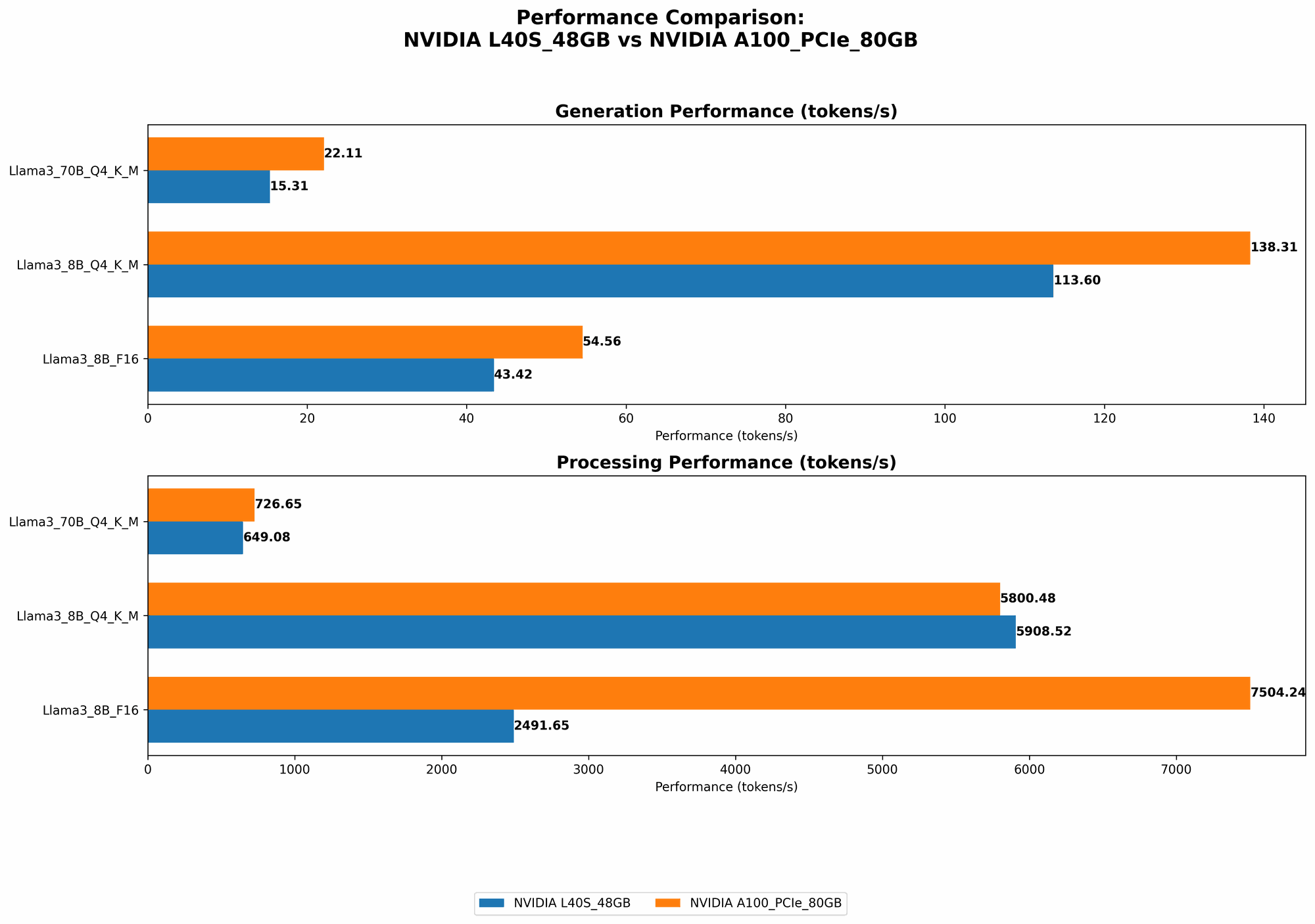

Token Generation Speed: Llama3 8B Models

- Llama3 8B Q4_K_M: This configuration is the model quantized to roughly 4 bits per weight (llama.cpp's Q4_K_M format).

- Llama3 8B F16: This configuration uses 16-bit floating point representation.

| GPU | Llama3 8B Q4_K_M generation (tokens/second) | Llama3 8B F16 generation (tokens/second) |
|---|---|---|
| NVIDIA L40S 48GB | 113.6 | 43.42 |
| NVIDIA A100 PCIe 80GB | 138.31 | 54.56 |

The A100 PCIe 80GB comes out on top for both the Q4_K_M and F16 configurations of Llama3 8B. It generates tokens about 22% faster than the L40S 48GB with Q4_K_M (138.31 vs. 113.6 tokens/second) and about 26% faster with F16 (54.56 vs. 43.42 tokens/second).
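If you want to check the relative speedup yourself, the arithmetic is a simple ratio. The sketch below uses the Llama3 8B numbers from the table above; the function and variable names are just illustrative.

```python
def speedup_percent(baseline: float, candidate: float) -> float:
    """How much faster candidate is than baseline, in percent."""
    return (candidate / baseline - 1.0) * 100.0


# (L40S 48GB, A100 PCIe 80GB) generation rates in tokens/second,
# copied from the Llama3 8B table above.
LLAMA3_8B = {
    "Q4_K_M": (113.6, 138.31),
    "F16": (43.42, 54.56),
}

for config, (l40s, a100) in LLAMA3_8B.items():
    print(f"Llama3 8B {config}: A100 is {speedup_percent(l40s, a100):.1f}% faster")
```

The same function works for any other pair of rows in the benchmark.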

Token Generation Speed: Llama3 70B Models

- Llama3 70B Q4_K_M: The model quantized to roughly 4 bits per weight

- Llama3 70B F16: Using 16-bit floating point representation

| GPU | Llama3 70B Q4_K_M generation (tokens/second) | Llama3 70B F16 generation (tokens/second) |
|---|---|---|
| NVIDIA L40S 48GB | 15.31 | N/A |
| NVIDIA A100 PCIe 80GB | 22.11 | N/A |

The A100 PCIe 80GB again emerges as the faster GPU for Llama3 70B Q4_K_M generation. It is about 44% faster than the L40S 48GB (22.11 vs. 15.31 tokens/second). F16 generation results for Llama3 70B are not available, most likely because of memory constraints: at 16 bits per parameter the 70B weights alone need roughly 140 GB, more than either card provides.
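A back-of-the-envelope estimate shows why the F16 column is empty. The sketch below counts only the weights, using nominal parameter counts (8B and 70B) and nominal bit widths. These are simplifying assumptions: real Q4_K_M files are somewhat larger than the nominal 4-bit figure because they also store scaling data, and inference needs extra memory for the KV cache and activations.

```python
def weights_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate size of the weights alone, in GB (1 GB = 1e9 bytes)."""
    return params_billion * bits_per_weight / 8


def fits(gpu_memory_gb: float, model_gb: float) -> bool:
    """True if the weights alone fit; ignores KV cache and activations."""
    return model_gb <= gpu_memory_gb


GPUS_GB = {"NVIDIA L40S 48GB": 48, "NVIDIA A100 PCIe 80GB": 80}

# Nominal bit widths; treat the 4-bit figure as a lower bound for Q4_K_M.
CONFIGS = {
    "Llama3 8B F16": weights_gb(8, 16),
    "Llama3 70B Q4 (nominal)": weights_gb(70, 4),
    "Llama3 70B F16": weights_gb(70, 16),
}

for name, size in CONFIGS.items():
    for gpu, memory in GPUS_GB.items():
        verdict = "fits" if fits(memory, size) else "does not fit"
        print(f"{name} (~{size:.0f} GB) {verdict} on {gpu}")
```

Under these assumptions only the 70B F16 configuration fails on both cards, which matches the N/A entries in the table.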

Performance Analysis: Breaking Down the Numbers

Let's decipher the results and understand the factors behind the observed performance differences:

- Memory Bandwidth: The A100 PCIe 80GB has much higher memory bandwidth than the L40S 48GB (about 1,935 GB/s vs. 864 GB/s according to NVIDIA's published specifications). This matters because generating each token requires streaming the model's weights from GPU memory, so token generation tends to be limited by memory bandwidth rather than raw compute. Think of it like a wider highway for data movement, which lets the A100 feed its processing units faster.

- GPU Cores: Core count does not explain the gap. The L40S 48GB actually has more CUDA cores than the A100 PCIe 80GB (18,176 vs. 6,912 per NVIDIA's spec sheets), yet it generates tokens more slowly. Single-stream token generation is limited mainly by how fast the weights can be read from memory, so the extra cores bring little benefit in this workload.

- Power Consumption: The faster card is not the hungrier one on paper. NVIDIA rates the A100 PCIe 80GB at 300 W maximum power and the L40S 48GB at 350 W. Actual draw during inference depends on the workload, so measure it on your own setup if the electricity bill matters.
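The memory bandwidth point can be turned into a rough ceiling: if every generated token requires reading all the weights once, generation speed cannot exceed bandwidth divided by model size. The sketch below applies that simplified model to Llama3 8B F16. The bandwidth figures (864 GB/s and 1,935 GB/s) are taken from NVIDIA's public spec sheets and the 16 GB model size is a nominal 8B parameters times 2 bytes; both are assumptions that do not come from the benchmark itself.

```python
def ceiling_tokens_per_second(bandwidth_gb_s: float, model_gb: float) -> float:
    """Upper bound on tokens/second if each token reads all weights once."""
    return bandwidth_gb_s / model_gb


# Assumed peak memory bandwidth in GB/s (vendor spec sheets, not measured).
BANDWIDTH_GB_S = {"NVIDIA L40S 48GB": 864.0, "NVIDIA A100 PCIe 80GB": 1935.0}

# Measured Llama3 8B F16 generation rates from the benchmark (tokens/second).
MEASURED_F16 = {"NVIDIA L40S 48GB": 43.42, "NVIDIA A100 PCIe 80GB": 54.56}

# Assumed weight size: nominal 8B parameters * 2 bytes each.
MODEL_GB = 16.0

for gpu, bandwidth in BANDWIDTH_GB_S.items():
    ceiling = ceiling_tokens_per_second(bandwidth, MODEL_GB)
    share = MEASURED_F16[gpu] / ceiling * 100.0
    print(f"{gpu}: ceiling {ceiling:.1f} tok/s, measured {MEASURED_F16[gpu]} ({share:.0f}% of ceiling)")
```

Both cards land below their ceilings, and the A100 by a wider margin, so bandwidth is only part of the story. Software efficiency and other overheads also shape the measured numbers.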

Strengths and Weaknesses

NVIDIA L40S 48GB

Strengths:

- Delivers interactive speeds on Llama3 8B (113.6 tokens/second with Q4_K_M).

- Offers a good balance between price and performance for smaller models.

Weaknesses:

- Lower memory bandwidth than the A100 PCIe 80GB, which holds back token generation speed.

- 48 GB of memory leaves less headroom for larger models and long contexts.

NVIDIA A100 PCIe 80GB

Strengths:

- Higher memory bandwidth, leading to faster token generation speeds.

- 80 GB of memory comfortably holds large quantized models such as Llama3 70B Q4_K_M.

Weaknesses:

- Still cannot run Llama3 70B in F16 on a single card; the benchmark has no result for that configuration.

- Can be more expensive than the L40S 48GB.

Practical Use Cases and Recommendations

- Smaller Models: For smaller Llama3 models like Llama3 8B, the L40S 48GB is a decent choice. It trails the A100 PCIe 80GB by roughly 22 to 26% in generation speed but can offer a good performance-to-price ratio.

- Larger Models: When working with larger models such as Llama3 70B, the A100 PCIe 80GB is the winner. Its higher memory bandwidth and 80 GB of memory give it the edge (22.11 vs. 15.31 tokens/second with Q4_K_M).

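- Budget-Conscious: If purchase price is the main concern, the L40S 48GB may be the more budget-friendly option, so check current pricing. Do not assume it draws less power, though: its rated maximum power (350 W) is higher than the A100 PCIe 80GB's (300 W).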

- Performance-Driven: If speed is paramount, the A100 PCIe 80GB will get you there faster.

Conclusion

Choosing the right GPU for your LLM projects requires careful consideration of factors like model size, performance requirements, and budget constraints. In this head-to-head comparison, the NVIDIA A100 PCIe 80GB emerged as the winner in token generation speed, especially for larger Llama3 models. While the L40S 48GB provides a good balance for smaller models, the A100's faster generation makes it the go-to choice for ambitious LLM projects.

FAQ: Answering Your Burning Questions

What is quantization?

Quantization is a technique used to compress LLMs, making them smaller and faster to run. Think of it like compressing a large movie file into a smaller version to fit on your phone! The process involves converting the model's numbers (which typically use 16 or 32 bits to represent a value) into a smaller number of bits (like 4 or 8 bits). This reduces the size of the model and speeds up inference, usually at the cost of a small loss in accuracy.
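To illustrate the idea, here is a minimal sketch of symmetric 4-bit quantization for one small block of weights. It is a teaching example only and is not the Q4_K_M scheme that llama.cpp uses, which is more elaborate; the weight values and function names are made up for the demonstration.

```python
def quantize_block(values, bits=4):
    """Map floats to signed integer codes that share one scale factor."""
    qmax = 2 ** (bits - 1) - 1  # 7 for 4 bits; codes range from -8 to 7
    max_abs = max(abs(v) for v in values)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    codes = [max(-qmax - 1, min(qmax, round(v / scale))) for v in values]
    return scale, codes


def dequantize_block(scale, codes):
    """Recover approximate floats from the integer codes."""
    return [scale * c for c in codes]


# Made-up example weights.
weights = [0.12, -0.53, 0.98, -0.07, 0.31, -0.86, 0.44, 0.02]

scale, codes = quantize_block(weights)
restored = dequantize_block(scale, codes)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("codes:", codes)
print("restored:", [round(r, 3) for r in restored])
print("largest rounding error:", round(max_error, 4))
```

Each weight is now stored as a small integer plus one shared scale per block, which is where the memory savings come from. The rounding error is the price paid for the smaller size.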

How does memory bandwidth impact performance?

Memory bandwidth refers to the rate at which a GPU can transfer data between its memory and its processing units. Imagine a conveyor belt moving data; a faster conveyor belt (higher memory bandwidth) means more data can be transferred quickly, leading to improved performance.

What are GPU cores?

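GPU cores are the processing units within a GPU that perform calculations. Think of them like individual workers on a team; more workers (GPU cores) can handle more tasks in parallel. More cores only speed things up when the workload is limited by computation, though. Token generation is often limited by how fast data arrives from memory, in which case extra cores sit idle waiting for work.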

Is the NVIDIA A100 PCIe 80GB always the best choice?

It depends! While the A100 PCIe 80GB excels in generation speed, its potentially higher cost might make it less suitable for budget-constrained projects. The L40S 48GB offers a good balance for smaller models and may be a more practical choice for certain applications.

Keywords:

NVIDIA L40S 48GB, NVIDIA A100 PCIe 80GB, LLM, Llama3, token generation speed, benchmark analysis, GPU, memory bandwidth, GPU cores, performance, quantization, F16, Q4_K_M, inference, large language models.