NVIDIA L40S 48GB for LLM Inference: Performance and Value

Introduction

The world of Large Language Models (LLMs) is exploding, and with it, the need for powerful hardware to run them locally. But how do you choose the right GPU for your LLM needs? Enter the NVIDIA L40S 48GB, a powerhouse designed to handle the demanding computations of LLM inference.

This article dives deep into the L40S 48GB's performance for two Llama 3 model sizes, comparing the speed of a 4-bit quantized format (Q4KM) against unquantized 16-bit weights (F16) and exploring the real-world implications of these numbers. Whether you're a developer building a custom LLM application or simply curious about the evolving landscape of AI hardware, this guide will shed light on the L40S 48GB's capabilities and help you decide if it's the right fit for your LLM journey.

NVIDIA L40S 48GB: A Hardware Overview

The NVIDIA L40S 48GB is a data-center GPU built on the Ada Lovelace architecture and aimed at AI and graphics workloads. It packs 48GB of GDDR6 memory with ECC and about 864 GB/s of memory bandwidth. Its 142 Streaming Multiprocessors (SMs) provide 18,176 CUDA cores, alongside fourth-generation Tensor Cores that accelerate the matrix math at the heart of LLM inference.

Think of the L40S 48GB as a super-powered brain, optimized to fuel the cognitive fire of LLMs. It's like having a dedicated team of super-fast mathematicians working tirelessly to unravel the mysteries of language, churning out responses and insights at an exhilarating pace.

Quantization: Bridging the Gap Between Power and Efficiency

LLMs rely on billions of numeric weights, and storing every one of them at full precision is expensive in both memory and time. That's where quantization steps in. It's a technique that stores the model's weights (and, in some schemes, its activations) at lower precision, making the model smaller and faster at the cost of some accuracy.

Think of it like using a simplified map instead of a detailed one. You still get to your destination, but you lose some of the finer details.

We'll explore two popular precision formats:

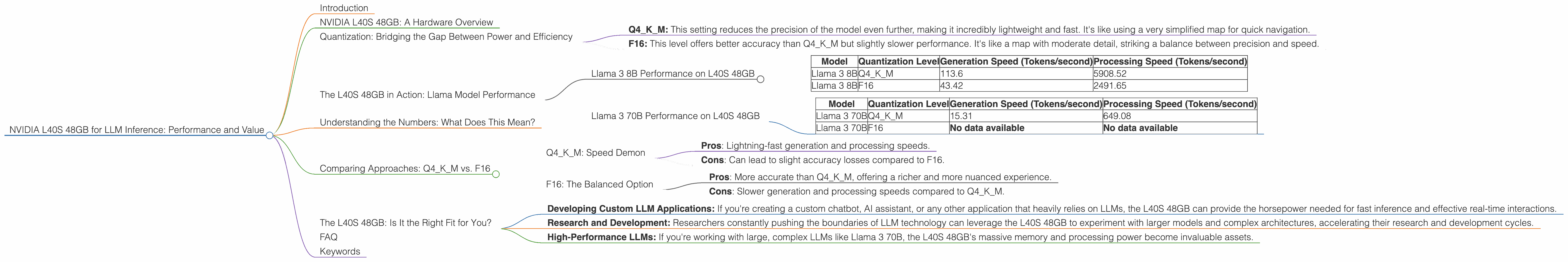

- Q4KM: A 4-bit quantization format (written Q4_K_M in llama.cpp) that stores each weight in roughly 4 to 5 bits, making the model much smaller and faster to run. It's like using a very simplified map for quick navigation.

- F16: 16-bit floating point, the unquantized half-precision baseline. It preserves more accuracy than Q4KM but needs roughly three times the memory and runs noticeably slower. It's like a map with much finer detail that takes longer to read.
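To make the idea concrete, here is a minimal sketch of quantizing one small block of weights to 4-bit integers with a single shared scale. It is a deliberately simplified illustration, not the actual Q4KM scheme, which uses a more elaborate block layout; the function names and the sample weights are hypothetical.

```python
def quantize_block(weights, bits=4):
    """Symmetric quantization of one block of floats to signed `bits`-bit integers."""
    qmax = 2 ** (bits - 1) - 1                      # 7 for 4-bit values
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / qmax if max_abs else 1.0      # one shared scale per block
    quantized = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize_block(quantized, scale):
    """Recover approximate floats from the integers and the shared scale."""
    return [v * scale for v in quantized]


# Hypothetical block of weights, chosen only for illustration.
block = [0.12, -0.53, 0.98, -0.07, 0.31, -0.88, 0.45, 0.02]

q, scale = quantize_block(block)
restored = dequantize_block(q, scale)
max_err = max(abs(a - b) for a, b in zip(block, restored))

print("4-bit integers:", q)
print(f"scale = {scale:.4f}, largest rounding error = {max_err:.4f}")
```

Each weight now needs only 4 bits plus a share of the block's scale, and the rounding error is bounded by half the scale. That bounded error is the "lost detail" in the map analogy.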

The L40S 48GB in Action: Llama Model Performance

Let's dive into the heart of the matter: how does the L40S 48GB perform with different Llama models and quantization levels? We'll focus on two popular models: Llama 3 8B and Llama 3 70B.

Llama 3 8B Performance on L40S 48GB

| Model | Quantization Level | Generation Speed (Tokens/second) | Prompt Processing Speed (Tokens/second) |
|---|---|---|---|
| Llama 3 8B | Q4KM | 113.6 | 5908.52 |
| Llama 3 8B | F16 | 43.42 | 2491.65 |
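The gap between the two rows is easier to judge as a ratio. The short sketch below computes the speedup from the table's figures; the dictionary layout and function name are just one illustrative way to hold the numbers.

```python
# Tokens/second for Llama 3 8B on the L40S 48GB, copied from the table above.
results = {
    "Q4KM": {"generation": 113.6, "processing": 5908.52},
    "F16": {"generation": 43.42, "processing": 2491.65},
}


def speedup(metric):
    """How many times faster Q4KM is than F16 for the given metric."""
    return results["Q4KM"][metric] / results["F16"][metric]


for metric in ("generation", "processing"):
    print(f"{metric}: Q4KM is {speedup(metric):.2f}x faster than F16")
```

On these figures Q4KM generates about 2.6 times faster and processes prompts about 2.4 times faster than F16.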

Key Observations:

- Q4KM Reigns Supreme: The L40S 48GB achieves 113.6 tokens/second generation speed with Llama 3 8B under the Q4KM quantization level. A token is usually a word fragment rather than a whole word, so this works out to somewhat fewer than 113.6 words per second, but it is still far faster than anyone can read.

- F16 Offers Fidelity: F16 runs the weights without quantization error, but it pays for that in speed. The L40S 48GB manages 43.42 tokens/second generation speed at this precision, about 2.6 times slower than Q4KM.

Llama 3 70B Performance on L40S 48GB

| Model | Quantization Level | Generation Speed (Tokens/second) | Prompt Processing Speed (Tokens/second) |
|---|---|---|---|
| Llama 3 70B | Q4KM | 15.31 | 649.08 |
| Llama 3 70B | F16 | No data available | No data available |

Key Observations:

- Q4KM Still Competitive: The L40S 48GB can still handle the massive Llama 3 70B model with decent performance, achieving 15.31 tokens/second generation speed under Q4KM quantization.

- F16 Data Missing: We don't have data for the F16 level with Llama 3 70B on the L40S 48GB. The most likely reason is memory: at 16 bits per weight, 70 billion parameters need about 140GB for the weights alone, nearly three times the card's 48GB.
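The memory arithmetic behind this can be sketched as follows. The parameter counts are rounded to 8 and 70 billion, and the 4.85 bits per weight used for Q4KM is an assumed approximate average, not a figure from the benchmark. The estimate covers the weights only and ignores the KV cache and activations, which need additional memory.

```python
GPU_MEMORY_GB = 48  # L40S capacity


def weight_footprint_gb(params_billion, bits_per_weight):
    """Approximate size of the weights alone, in GB (1 GB = 1e9 bytes)."""
    return params_billion * bits_per_weight / 8


# (label, bits per weight); the Q4KM value is an assumed approximate average.
formats = (("F16", 16), ("Q4KM", 4.85))

for model, params_billion in (("Llama 3 8B", 8), ("Llama 3 70B", 70)):
    for label, bits in formats:
        size = weight_footprint_gb(params_billion, bits)
        verdict = "fits" if size <= GPU_MEMORY_GB else "does not fit"
        print(f"{model} {label}: ~{size:.1f} GB of weights, {verdict} in {GPU_MEMORY_GB} GB")
```

Under these assumptions, Llama 3 70B is the only case that changes outcome with the format: it exceeds the card's memory at F16 and fits, with little room to spare, under Q4KM.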

Understanding the Numbers: What Does This Mean?

So, these token-per-second numbers are great... but what do they actually mean? They tell us about the speed at which the model generates text and how efficiently it processes the input.

To put it in simpler terms, imagine you're a writer, and your goal is to churn out a novel. The generation speed is like your writing speed, while the processing speed is how quickly your brain can comprehend and analyze the plot, characters, and setting.

Higher generation speeds mean the model can generate text faster, leading to quicker responses and smoother conversational flows.

Faster processing speeds mean the model gets through your prompt sooner, which shortens the wait before the first token of the response appears. This matters most with long inputs, such as documents or extended chat histories.
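The two speeds combine into a rough response time: the prompt is processed first, then the output is generated token by token. The sketch below applies that to the benchmark figures, assuming a single request at a time and a hypothetical workload of a 1,000-token prompt with a 200-token reply. Real latency also depends on context length, batching, and the serving software.

```python
def response_time_s(prompt_tokens, output_tokens, processing_tps, generation_tps):
    """Rough end-to-end time: prompt processing followed by token generation."""
    prefill = prompt_tokens / processing_tps
    decode = output_tokens / generation_tps
    return prefill + decode


# (processing tokens/s, generation tokens/s) from the tables in this article.
benchmarks = {
    "Llama 3 8B Q4KM": (5908.52, 113.6),
    "Llama 3 8B F16": (2491.65, 43.42),
    "Llama 3 70B Q4KM": (649.08, 15.31),
}

PROMPT_TOKENS = 1000   # hypothetical workload
OUTPUT_TOKENS = 200    # hypothetical workload

for name, (processing_tps, generation_tps) in benchmarks.items():
    total = response_time_s(PROMPT_TOKENS, OUTPUT_TOKENS, processing_tps, generation_tps)
    print(f"{name}: about {total:.1f} s")
```

For this workload, generation dominates the total in every configuration, which is why generation speed is usually the number to watch for chat-style use.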

Comparing Approaches: Q4KM vs. F16

Q4KM: Speed Demon

- Pros: The fastest generation and processing speeds, plus a memory footprint small enough to run Llama 3 70B on this card.

- Cons: Can lead to slight accuracy losses compared to F16.

F16: The Balanced Option

- Pros: More accurate than Q4KM, offering a richer and more nuanced experience.

- Cons: Slower generation and processing speeds compared to Q4KM, and much higher memory use.

In a nutshell:

Q4KM is like a speed demon, offering incredibly fast results but with a slight compromise on accuracy. F16 is like a marathon runner, taking roughly 2.6 times longer to generate on Llama 3 8B but delivering the model's full-precision output.

The best choice depends on your specific needs. If speed is paramount, Q4KM is your go-to option. If you prioritize accuracy and a more nuanced experience, F16 should be your choice.

The L40S 48GB: Is It the Right Fit for You?

Now, let's talk about the elephant in the room - cost. The L40S 48GB isn't cheap. But considering its impressive performance, it can offer a tangible return on investment for developers and businesses working with large language models.

Here's a scenario: imagine you're building a chatbot application. Your users expect quick responses, and you need a powerful LLM to handle complex conversations. With the L40S 48GB, Llama 3 8B responds far faster than users can read, and even a demanding model like Llama 3 70B generates about 15 tokens per second under Q4KM, which is still workable for interactive chat.

Here's how the L40S 48GB shines in specific scenarios:

- Developing Custom LLM Applications: If you're creating a custom chatbot, AI assistant, or any other application that heavily relies on LLMs, the L40S 48GB can provide the horsepower needed for fast inference and effective real-time interactions.

- Research and Development: Researchers constantly pushing the boundaries of LLM technology can leverage the L40S 48GB to experiment with larger models and complex architectures, accelerating their research and development cycles.

- High-Performance LLMs: If you're working with large, complex LLMs like Llama 3 70B, the L40S 48GB's massive memory and processing power become invaluable assets.
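To put the cost question in numbers, one simple approach is to convert an hourly GPU cost into a cost per million generated tokens. The sketch below does that with the generation speeds measured above. The hourly cost is a made-up placeholder, not a real price for the L40S, so substitute your own rental rate or amortized purchase cost. It also assumes one request at a time; serving several requests in a batch would raise throughput and lower the cost per token.

```python
def cost_per_million_tokens(hourly_cost_usd, tokens_per_second):
    """Cost of generating one million tokens at a steady single-stream rate."""
    tokens_per_hour = tokens_per_second * 3600
    return hourly_cost_usd / tokens_per_hour * 1_000_000


# Placeholder only: NOT an actual L40S price. Replace with your own figure.
HYPOTHETICAL_HOURLY_COST_USD = 1.00

# Generation speeds (tokens/second) from the benchmark tables.
generation_tps = {
    "Llama 3 8B Q4KM": 113.6,
    "Llama 3 8B F16": 43.42,
    "Llama 3 70B Q4KM": 15.31,
}

for name, tps in generation_tps.items():
    cost = cost_per_million_tokens(HYPOTHETICAL_HOURLY_COST_USD, tps)
    print(f"{name}: ${cost:.2f} per million tokens at ${HYPOTHETICAL_HOURLY_COST_USD:.2f}/hour")
```

Because cost per token scales inversely with speed, the choice of model and quantization level affects the bill as much as it affects responsiveness.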

FAQ

Q: What other hardware is available for LLM inference?

A: While the L40S 48GB is a powerful option, it's not the only player in the game. Other data-center GPUs like the NVIDIA A100 and H100 are common choices for LLM inference, and server CPUs such as the AMD EPYC can run quantized models too, typically at lower speeds. The choice depends on your specific model, budget, and performance requirements.

Q: How does the L40S 48GB compare to other GPUs for LLM inference?

A: The L40S 48GB is a competitive option, offering strong compute and substantial memory capacity. Memory bandwidth is critical for LLM inference because the model's weights have to be streamed from memory for each generated token, and here the L40S's GDDR6 memory (about 864 GB/s) trails HBM-based GPUs such as the A100 and H100. Its performance also varies depending on the specific LLM model and the chosen quantization level.
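To see why memory bandwidth matters for generation speed, consider a common back-of-the-envelope model: when generating one token at a time, the GPU has to read roughly all of the weights once per token, so bandwidth divided by weight size gives a rough ceiling on tokens per second. The sketch below compares that ceiling with the measured speeds. The 864 GB/s figure is the L40S's published memory bandwidth; the weight sizes are approximations that assume rounded parameter counts and about 4.85 bits per weight for Q4KM.

```python
L40S_BANDWIDTH_GB_PER_S = 864  # published L40S memory bandwidth


def decode_ceiling_tps(weights_gb, bandwidth_gb_per_s=L40S_BANDWIDTH_GB_PER_S):
    """Rough upper bound on single-stream tokens/second if weights are read once per token."""
    return bandwidth_gb_per_s / weights_gb


# (approximate weight size in GB, measured generation tokens/s from this article)
cases = {
    "Llama 3 8B F16": (16.0, 43.42),
    "Llama 3 8B Q4KM": (4.85, 113.6),
    "Llama 3 70B Q4KM": (42.4, 15.31),
}

for name, (weights_gb, measured_tps) in cases.items():
    ceiling = decode_ceiling_tps(weights_gb)
    share = measured_tps / ceiling
    print(f"{name}: ceiling ~{ceiling:.0f} tok/s, measured {measured_tps} tok/s ({share:.0%} of ceiling)")
```

The measured speeds all sit below their estimated ceilings, which is consistent with generation being limited largely by memory bandwidth. It also shows why a smaller quantized model generates faster on the same card.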

Q: Is the L40S 48GB suitable for everyone?

A: The L40S 48GB is a high-performance GPU with a high price tag. It's ideal for developers working with large LLMs or those who require substantial computational power. However, if you're working with smaller LLMs or have a limited budget, other GPUs may be more suitable.

Q: What are the trade-offs between different quantization levels?

A: Quantization levels are like a balancing act. You can sacrifice some accuracy for faster speeds with Q4KM, or prioritize accuracy with F16, but accept slower performance.

Q: What is the future of LLM hardware?

A: The field of AI hardware is constantly evolving. Expect even more powerful and specialized hardware to emerge, pushing the boundaries of what's possible with LLMs.
