NVIDIA A40 48GB for LLM Inference: Performance and Value

Introduction

Imagine a single card, small enough to slot into a standard server, that can process massive amounts of data in the blink of an eye. That's the power of a modern GPU, and in this article we're delving into the NVIDIA A40_48GB, a data-center GPU whose large memory makes it well suited to accelerating large language models (LLMs).

For those unfamiliar with LLMs, they are the driving force behind conversational AIs like ChatGPT, Bard, and others. Think of them as the brains behind these assistants: models trained to comprehend and generate human-like text.

This article is your guide to the A40_48GB's capabilities for running LLMs. We'll examine its performance with the Llama3 8B and Llama3 70B models and explore how its raw power translates into real-world speed. Get ready to dive into the fascinating world of LLM inference, where performance meets practicality!

A40_48GB: Powerhouse for LLMs

The NVIDIA A40_48GB is a formidable GPU, boasting 48GB of GDDR6 memory with ECC and 10,752 CUDA cores to handle the computational demands of complex AI tasks. Its large memory makes it a prime candidate for LLM inference: feeding a trained LLM a text prompt and receiving its generated response.

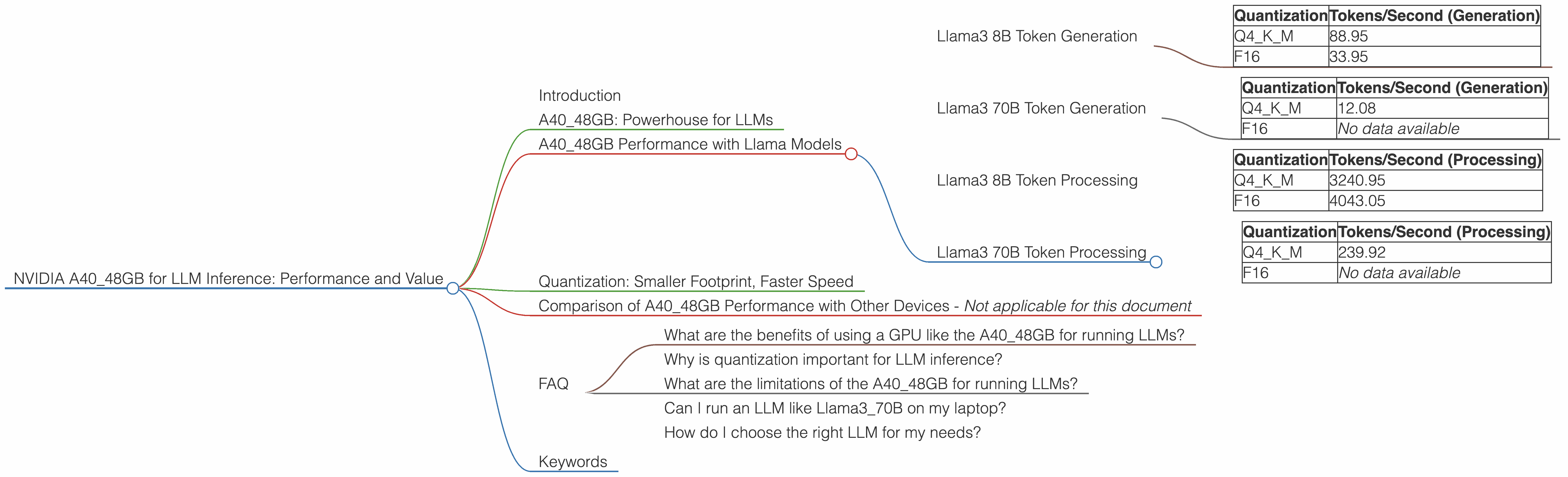

A40_48GB Performance with Llama Models

In this section, we'll explore how the A40_48GB performs with two Llama3 models, looking at two throughput figures: token generation (how fast new tokens are produced) and token processing (how fast the input prompt is ingested).
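
The two figures are measured differently: the prompt is processed in one batched pass, while new tokens are generated one step at a time. A minimal sketch in Python, assuming a hypothetical inference engine that exposes a `prefill` call (ingest the whole prompt) and a `decode_step` call (produce one new token); neither name comes from a real library, and the article does not say which tool produced its numbers:

```python
import time
from typing import Callable, Sequence

Clock = Callable[[], float]


def prefill_tps(prefill: Callable[[Sequence[int]], None],
                prompt: Sequence[int],
                clock: Clock = time.perf_counter) -> float:
    """Prompt-processing throughput: the whole prompt is handled in one call."""
    start = clock()
    prefill(prompt)  # hypothetical engine call that ingests all prompt tokens
    return len(prompt) / (clock() - start)


def decode_tps(decode_step: Callable[[], int],
               n_new: int,
               clock: Clock = time.perf_counter) -> float:
    """Generation throughput: one decode step per new token."""
    start = clock()
    for _ in range(n_new):
        decode_step()  # hypothetical engine call that returns one new token id
    return n_new / (clock() - start)
```

Passing a fake `clock` (for example `iter([0.0, 0.5]).__next__`) makes the arithmetic easy to check, while the default `time.perf_counter` times real calls. Real benchmarks usually also add warm-up runs and average several repetitions.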

Llama3 8B Token Generation

The A40_48GB delivers strong performance when running the Llama3 8B model. Here's a breakdown of generation speed at two quantization levels:

| Quantization | Tokens/Second (Generation) |
|---|---|
| Q4_K_M | 88.95 |
| F16 | 33.95 |

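What does this mean? Higher tokens per second means faster generation. Q4_K_M is a 4-bit quantization scheme that shrinks the model's weights to roughly a third of their 16-bit size with only a modest loss of accuracy, while F16 stores every weight as a standard 16-bit floating-point number. Because generating each new token requires reading essentially all of the model's weights from GPU memory, smaller weights translate directly into faster generation.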

In practice, Q4_K_M on the A40_48GB generates text about 2.6× faster than F16 (88.95 vs. 33.95 tokens/s). This is like having a turbocharged engine for your LLM: responses arrive noticeably sooner.
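
A rough estimate shows why quantization speeds up generation so much. If generation is limited purely by memory bandwidth, each new token costs one full read of the weights, so tokens/s can be at most bandwidth divided by weight size. The sketch below assumes NVIDIA's published A40 bandwidth of about 696 GB/s, about 8.03 billion parameters for Llama3 8B, and an average of roughly 4.85 bits per weight for Q4_K_M; all three are approximations rather than figures from this article, and the estimate ignores KV-cache reads and other overheads.

```python
# Assumptions (approximate, not taken from the article's benchmark):
A40_BANDWIDTH_GB_S = 696.0        # NVIDIA's published A40 memory bandwidth
LLAMA3_8B_PARAMS = 8.03e9         # approximate parameter count of Llama3 8B
BITS_PER_WEIGHT = {"F16": 16.0, "Q4_K_M": 4.85}  # Q4_K_M value is an average

# Measured generation throughput from the table above (tokens/s).
MEASURED_TG = {"F16": 33.95, "Q4_K_M": 88.95}


def weight_bytes(params: float, bits: float) -> float:
    """Total size of the weights in bytes."""
    return params * bits / 8


def decode_ceiling_tps(params: float, bits: float, bandwidth_gb_s: float) -> float:
    """Upper bound on tokens/s if each new token must stream all weights once."""
    return bandwidth_gb_s * 1e9 / weight_bytes(params, bits)


for quant, bits in BITS_PER_WEIGHT.items():
    ceiling = decode_ceiling_tps(LLAMA3_8B_PARAMS, bits, A40_BANDWIDTH_GB_S)
    measured = MEASURED_TG[quant]
    print(f"{quant}: ceiling ~{ceiling:.0f} tok/s, measured {measured} tok/s "
          f"({measured / ceiling:.0%} of ceiling)")
```

Under these assumptions the ceilings come out near 43 tokens/s for F16 and 143 tokens/s for Q4_K_M. Both measured figures sit below their bound, and the ceiling ratio (about 3.3×) is in the same ballpark as the measured 2.6× speedup.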

Llama3 70B Token Generation

The A40_48GB can also run the much larger Llama3 70B model, though generation is predictably slower: with almost nine times as many parameters as the 8B model, every generated token requires reading far more weight data.

| Quantization | Tokens/Second (Generation) |
|---|---|
| Q4_K_M | 12.08 |
| F16 | No data available |

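The missing F16 figure is most likely a matter of capacity: at 16 bits per weight, a 70B model needs roughly 140GB for its weights alone, far more than the card's 48GB. Q4_K_M brings the weights down to a little over 40GB, which is what makes running Llama3 70B on a single A40_48GB possible at all. At 12.08 tokens/s, it generates about 7.4× more slowly than Llama3 8B at the same quantization.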
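
Whether a configuration fits on the card at all comes down to simple memory arithmetic: weight size ≈ parameters × bits per weight ÷ 8. A minimal Python check, assuming approximate parameter counts (8.03 billion and 70.6 billion), the same rough average of 4.85 bits per weight for Q4_K_M, and a hypothetical 2GB allowance for the KV cache, activations and runtime overhead (real usage grows with context length):

```python
GPU_MEMORY_GB = 48.0
OVERHEAD_GB = 2.0  # assumed allowance for KV cache, activations, CUDA context

PARAMS = {"Llama3 8B": 8.03e9, "Llama3 70B": 70.6e9}  # approximate counts
BITS = {"F16": 16.0, "Q4_K_M": 4.85}                 # Q4_K_M value is an average


def weights_gb(params: float, bits: float) -> float:
    """Size of the weights in gigabytes (10**9 bytes)."""
    return params * bits / 8 / 1e9


def fits(params: float, bits: float,
         budget_gb: float = GPU_MEMORY_GB,
         overhead_gb: float = OVERHEAD_GB) -> bool:
    """True if the weights plus the assumed overhead fit in GPU memory."""
    return weights_gb(params, bits) + overhead_gb <= budget_gb


for model, params in PARAMS.items():
    for quant, bits in BITS.items():
        status = "fits" if fits(params, bits) else "does not fit"
        print(f"{model} {quant}: ~{weights_gb(params, bits):.1f} GB of weights, {status}")
```

Under these assumptions only Llama3 70B at F16 fails to fit (about 141GB of weights), while Q4_K_M brings the same model to roughly 43GB.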

Llama3 8B Token Processing

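Token processing (also called prompt or context processing) is the step in which the model reads the entire input prompt before producing its first output token. Because all prompt tokens can be processed in parallel, throughput here is far higher than for generation. The A40_48GB shines in this area with the Llama3 8B model.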

| Quantization | Tokens/Second (Processing) |
|---|---|
| Q4_K_M | 3240.95 |
| F16 | 4043.05 |

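Interestingly, F16 is about 25% faster than Q4_K_M here (4043.05 vs. 3240.95 tokens/s), the reverse of the generation results. One likely explanation is that prompt processing pushes many tokens through each pass over the weights, so it is limited mainly by compute rather than memory bandwidth; smaller weights then save little, while unpacking quantized values adds extra work.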
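
One way to make the prefill/generation difference concrete is arithmetic intensity: how many floating-point operations the GPU performs per byte of weights it reads. The sketch uses the standard estimate that multiplying a d_out × d_in weight matrix by n token vectors costs about 2·n·d_out·d_in FLOPs while reading d_out·d_in·b bytes (b = bytes per weight), so intensity is roughly 2n/b. It ignores activation and KV-cache traffic, and the 512-token prompt is an illustrative assumption, not the benchmark's actual prompt length.

```python
def weight_intensity(n_tokens: int, bytes_per_weight: float) -> float:
    """Approximate FLOPs per byte of weight traffic for one matmul.

    2 * n_tokens * d_out * d_in FLOPs divided by d_out * d_in * bytes_per_weight
    bytes: the matrix dimensions cancel, leaving 2 * n_tokens / bytes_per_weight.
    """
    return 2 * n_tokens / bytes_per_weight


F16_BYTES = 2.0
Q4_K_M_BYTES = 4.85 / 8  # approximate average bytes per weight (assumed)

for label, n in [("decode (1 token)", 1), ("prefill (512 tokens)", 512)]:
    print(f"{label}: F16 ~{weight_intensity(n, F16_BYTES):.0f} FLOP/byte, "
          f"Q4_K_M ~{weight_intensity(n, Q4_K_M_BYTES):.0f} FLOP/byte")
```

Decoding a single token does only a few FLOPs per byte of weights, while a 512-token prefill does hundreds or more. A GPU can perform far more than a few operations in the time it takes to fetch one byte from memory, so decoding mostly waits on memory and prefill mostly waits on arithmetic.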

Llama3 70B Token Processing

As with token generation, the larger Llama3 70B model processes prompts far more slowly on the A40_48GB than the 8B model does: about 13.5× slower at Q4_K_M.

| Quantization | Tokens/Second (Processing) |
|---|---|
| Q4_K_M | 239.92 |
| F16 | No data available |

Even so, roughly 240 tokens/s of prompt processing for a 70B model on a single card is a usable rate for many interactive and batch workloads.
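
The two throughput figures combine into an end-to-end estimate for a single request: prompt tokens divided by the processing rate, plus output tokens divided by the generation rate. A minimal sketch using the measured rates from this article; the request size is hypothetical, and the calculation assumes the rates stay constant and ignores batching and other overheads.

```python
# Measured throughput from the tables above: (processing tok/s, generation tok/s)
RATES = {
    "Llama3 8B Q4_K_M": (3240.95, 88.95),
    "Llama3 8B F16": (4043.05, 33.95),
    "Llama3 70B Q4_K_M": (239.92, 12.08),
}


def request_latency_s(prompt_tokens: int, new_tokens: int,
                      pp_tps: float, tg_tps: float) -> float:
    """Time to ingest the prompt plus time to generate the reply.

    Assumes both rates stay constant; in practice they drift as the context grows.
    """
    return prompt_tokens / pp_tps + new_tokens / tg_tps


# Hypothetical request: a 1,000-token prompt and a 250-token answer.
for name, (pp, tg) in RATES.items():
    print(f"{name}: ~{request_latency_s(1000, 250, pp, tg):.1f} s")
```

Under these assumptions the 70B model needs roughly 25 seconds, most of it spent generating, and Llama3 8B at F16 ends up slower overall than at Q4_K_M despite its faster prompt processing.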

Quantization: Smaller Footprint, Faster Speed

Quantization compresses an LLM by storing its weights at lower precision, for example 4 bits instead of 16. Think of it as squeezing a large file into a smaller format without sacrificing too much quality. This lets models run on devices with less memory, such as your personal computer, and lets larger models fit on a single GPU like the A40_48GB.
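
The core idea can be shown with a deliberately simplified scheme: split the weights into blocks of 32, store one scale per block, and round each weight to a small integer. This sketch uses symmetric 4-bit absmax rounding to integers in [-7, 7]; it is not the actual Q4_K_M format, which organizes weights into larger super-blocks with per-sub-block scales and minimums and keeps some tensors at higher precision.

```python
from typing import List, Tuple

BLOCK_SIZE = 32  # weights per block (chosen for illustration)


def quantize_block(block: List[float]) -> Tuple[float, List[int]]:
    """Symmetric absmax quantization: one float scale plus ints in [-7, 7]."""
    amax = max(abs(x) for x in block)
    scale = amax / 7 if amax > 0 else 1.0
    q = [max(-7, min(7, round(x / scale))) for x in block]
    return scale, q


def dequantize_block(scale: float, q: List[int]) -> List[float]:
    """Reconstruct approximate weights from the scale and integer codes."""
    return [scale * v for v in q]


def quantize(weights: List[float], block_size: int = BLOCK_SIZE) -> List[Tuple[float, List[int]]]:
    """Quantize a flat weight list block by block (last block may be shorter)."""
    return [quantize_block(weights[i:i + block_size])
            for i in range(0, len(weights), block_size)]


def dequantize(blocks: List[Tuple[float, List[int]]]) -> List[float]:
    """Concatenate the reconstructed blocks back into a flat list."""
    out: List[float] = []
    for scale, q in blocks:
        out.extend(dequantize_block(scale, q))
    return out
```

If each block's scale were stored as a 16-bit float, this layout would cost (32 × 4 + 16) / 32 = 4.5 bits per weight instead of 16, and rounding to the nearest code keeps every reconstructed weight within half a quantization step of the original.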

On the A40_48GB, quantization clearly pays off for token generation: Q4_K_M is about 2.6× faster than F16 with Llama3 8B. For prompt processing the picture is reversed, with F16 about 25% faster. With larger models, the biggest benefit is capacity: Q4_K_M is what lets Llama3 70B fit on the card at all.

FAQ

What are the benefits of using a GPU like the A40_48GB for running LLMs?

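GPUs like the A40_48GB are designed for massively parallel processing, which suits the large matrix multiplications at the heart of LLM inference. They also offer much higher memory bandwidth than typical CPU systems, which matters because token generation is largely limited by how fast weights can be read. Together, these give GPUs a significant speed advantage over CPUs for both token generation and processing.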

Why is quantization important for LLM inference?

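Quantization reduces the memory footprint of LLMs, allowing them to run on devices with less memory and letting larger models fit on a single GPU; on the A40_48GB, Llama3 70B runs only in quantized form. This is particularly important for users with limited resources, as it allows them to experiment with larger models. It can also speed up token generation, as the Llama3 8B results show.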

What are the limitations of the A40_48GB for running LLMs?

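The A40_48GB is a powerful GPU, but it still has limits. Its 48GB of memory caps the size of model it can hold (Llama3 70B fits only when quantized), and its memory bandwidth largely caps token-generation speed, which is why Llama3 70B at Q4_K_M produces about 12 tokens/s. Larger models, especially those with many parameters, can push the limits of even the most powerful GPUs.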

Can I run an LLM like Llama3 70B on my laptop?

It's possible, but demanding. Even at Q4_K_M, Llama3 70B needs more than 40GB for its weights alone, more than typical laptop GPUs provide, so much of the model would have to run from system RAM, which is far slower. A smaller model such as Llama3 8B, combined with quantization, is a much more practical fit for a laptop. Either way, don't expect the kind of performance you would see on a dedicated server with an A40_48GB.

How do I choose the right LLM for my needs?

The choice of LLM depends on the specific tasks you want to perform. Smaller models are typically faster and require less memory, while larger models offer more accuracy and capability.

Keywords

LLM inference, NVIDIA A40_48GB, GPU acceleration, Llama3 8B, Llama3 70B, token generation, token processing, quantization, Q4_K_M, F16, performance, value, speed, AI, ChatGPT, Bard, conversational AI, text generation, natural language processing, deep learning.