NVIDIA A100 SXM 80GB for LLM Inference: Performance and Value

Introduction

Imagine having a super-powered brain for your Large Language Model (LLM) applications, capable of generating text, translating languages, answering questions, and even writing poetry. That's the magic of powerful GPUs like the NVIDIA A100 SXM 80GB, which are built to handle the heavy computations behind LLMs.

This article looks at how the A100 SXM 80GB performs at LLM inference, the process that turns a trained model into actual output. We'll compare results across Llama 3, a popular open-source LLM family, and explain how the choice of quantization and precision affects performance. So, strap in, tech enthusiasts, and let's dive into the world of supercharged LLM inference!

A100 SXM 80GB: A Beast of a GPU

The NVIDIA A100 SXM 80GB is a powerhouse in the world of GPUs, pairing 80GB of HBM2e memory with roughly 2TB/s of memory bandwidth (NVIDIA lists 2,039GB/s). That combination makes it a strong match for the memory-intensive workloads of LLMs. High bandwidth matters for inference in particular, because generating each token means reading the model's weights from memory, so faster memory translates into faster text output.

Llama 3 LLM: A Popular Choice

Llama 3, a family of open-source LLMs developed by Meta, is a popular choice for various applications. Its models, including the 8B and 70B parameter versions benchmarked here, offer a trade-off between output quality and resource consumption.

Llama 3 Performance with A100 SXM 80GB

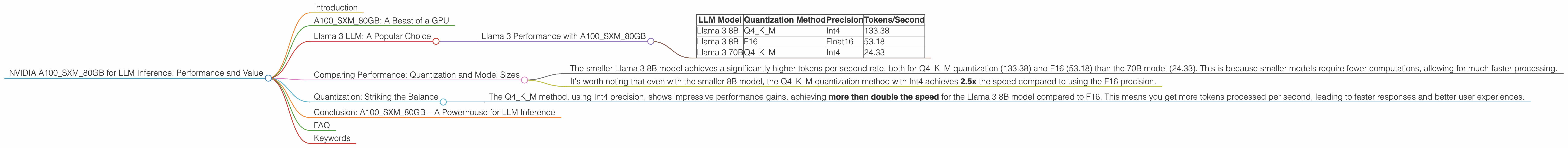

The benchmark results below were measured on the A100 SXM 80GB. We'll focus on Llama 3 models and how their performance varies with the quantization method and precision used:

| LLM Model | Quantization Method | Precision | Tokens/Second |
|---|---|---|---|
| Llama 3 8B | Q4KM | Int4 | 133.38 |
| Llama 3 8B | F16 | Float16 | 53.18 |
| Llama 3 70B | Q4KM | Int4 | 24.33 |

- Q4KM: This quantization method (usually written Q4_K_M, a 4-bit format from the llama.cpp ecosystem) compresses the model's weights to roughly 4 bits each, resulting in a smaller memory footprint and usually faster inference. The table labels this precision Int4, although the format also stores some scaling data, so the effective size is slightly above 4 bits per weight.

- F16: This stores each weight as a 16-bit floating-point number (Float16). It preserves more precision than 4-bit quantization, but the model takes several times as much memory, and inference is slower as a result.
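To make the memory side of this concrete, here is a rough back-of-the-envelope sketch in Python. It estimates weight storage only (no KV cache or activations). The 4.8 bits per weight used for Q4KM is an assumption for illustration, not a figure from the benchmark, and `weight_size_gb` and `fits_in_memory` are hypothetical helper names.

```python
GPU_MEMORY_GB = 80  # capacity of the A100 SXM 80GB

def weight_size_gb(params_billion, bits_per_weight):
    """Approximate weight storage in GB (1 GB = 1e9 bytes)."""
    return params_billion * bits_per_weight / 8

def fits_in_memory(params_billion, bits_per_weight, memory_gb=GPU_MEMORY_GB):
    """True if the weights alone are smaller than the GPU's memory."""
    return weight_size_gb(params_billion, bits_per_weight) < memory_gb

# (label, parameters in billions, bits per weight)
# 4.8 bits for Q4KM is an assumed effective size, not a measured one.
CONFIGS = [
    ("Llama 3 8B Q4KM", 8, 4.8),
    ("Llama 3 8B F16", 8, 16),
    ("Llama 3 70B Q4KM", 70, 4.8),
    ("Llama 3 70B F16", 70, 16),
]

for label, params, bits in CONFIGS:
    size = weight_size_gb(params, bits)
    verdict = "fits" if fits_in_memory(params, bits) else "does not fit"
    print(f"{label}: ~{size:.1f} GB of weights, {verdict} in {GPU_MEMORY_GB} GB")
```

Under these assumptions, Llama 3 70B at F16 would need about 140 GB for its weights alone, which is consistent with the table having no F16 row for the 70B model.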

Intuition Check: Imagine giving a dog instructions for a trick. Quantization (like Q4KM) is like boiling the instructions down to a few short commands: the dog reacts faster, though it may miss a little nuance. F16 is like spelling out every detail: more precise, but slower to get through.

Comparing Performance: Quantization and Model Sizes

A100 SXM 80GB: Llama 3 8B vs. 70B

- The smaller Llama 3 8B model achieves a significantly higher tokens-per-second rate with both Q4KM quantization (133.38) and F16 (53.18) than the 70B model does with Q4KM (24.33). This is because a smaller model has fewer weights to read from memory and fewer computations to perform for each generated token.

- It's worth noting that even with the smaller 8B model, Q4KM quantization achieves about 2.5x the speed of F16 (133.38 vs. 53.18 tokens per second).
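One hedged way to see why model size matters so much: when generating one token at a time, the GPU has to read roughly all of the model's weights from memory for each token, so memory bandwidth puts a ceiling on speed. The sketch below computes that ceiling. It assumes NVIDIA's published figure of about 2,039 GB/s for this card and an effective 4.8 bits per weight for Q4KM, and it ignores the KV cache and every other overhead, so read the results as an upper bound rather than a prediction. `max_tokens_per_second` is a hypothetical helper.

```python
BANDWIDTH_GB_S = 2039.0  # assumed: NVIDIA's published A100 SXM 80GB memory bandwidth

def max_tokens_per_second(weights_gb, bandwidth_gb_s=BANDWIDTH_GB_S):
    """Upper bound on tokens/s if every token requires one full read of the weights."""
    return bandwidth_gb_s / weights_gb

# (label, approximate weight size in GB, measured tokens/s from the table)
# Weight sizes use params_billion * bits_per_weight / 8, with 4.8 bits assumed for Q4KM.
RUNS = [
    ("Llama 3 8B Q4KM", 8 * 4.8 / 8, 133.38),
    ("Llama 3 8B F16", 8 * 16 / 8, 53.18),
    ("Llama 3 70B Q4KM", 70 * 4.8 / 8, 24.33),
]

for label, weights_gb, measured in RUNS:
    ceiling = max_tokens_per_second(weights_gb)
    share = measured / ceiling
    print(f"{label}: ceiling ~{ceiling:.0f} tok/s, measured {measured} tok/s ({share:.0%} of ceiling)")
```

The measured rates all sit below their ceilings, and the ceilings shrink as the weights grow, which matches the ordering in the table.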

Thinking Like a Developer: Smaller models are like a car with a smaller engine. They can be faster in the city, but might not be as powerful for long journeys. Bigger models are like a truck. They can haul more, but require more resources for speed.

Quantization: Striking the Balance

Quantization is a powerful technique for optimizing LLM models. It compresses the weights of the models, reducing their size and potentially leading to faster inference speeds. Think of it as using a smaller backpack for your journey!
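To give a feel for what compressing the weights means, here is a deliberately simplified sketch of 4-bit quantization in plain Python. It is not the Q4KM algorithm, which uses a more elaborate block-wise scheme. It simply rounds each weight to one of 16 integer levels using a single shared scale. The function names and sample weights are made up for illustration.

```python
def quantize_4bit(weights):
    """Map floats to integers in [-8, 7] (16 levels) using one shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    codes = [max(-8, min(7, round(w / scale))) for w in weights]
    return codes, scale

def dequantize(codes, scale):
    """Recover approximate float weights from the 4-bit codes."""
    return [c * scale for c in codes]

weights = [0.12, -0.53, 0.98, -0.05, 0.31]  # made-up sample values
codes, scale = quantize_4bit(weights)
restored = dequantize(codes, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("4-bit codes:", codes)
print("restored weights:", [round(r, 3) for r in restored])
print("largest rounding error:", round(max_error, 3))
```

Each weight now needs only 4 bits plus a share of the scale, at the cost of a small rounding error per weight. That is the basic trade that real quantization formats refine.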

- The Q4KM method, using Int4 precision, shows impressive performance gains, achieving more than double the speed for the Llama 3 8B model compared to F16. This means you get more tokens processed per second, leading to faster responses and better user experiences.
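As a quick illustration of what those rates mean for response time, the arithmetic below converts the table's tokens-per-second figures into the time needed to generate a reply. The 500-token reply length is an arbitrary assumption, and the calculation ignores prompt processing time, which the table does not report.

```python
# Tokens/second figures taken from the benchmark table
TOKENS_PER_SECOND = {
    "Llama 3 8B Q4KM": 133.38,
    "Llama 3 8B F16": 53.18,
    "Llama 3 70B Q4KM": 24.33,
}

REPLY_TOKENS = 500  # assumed reply length, for illustration only

def generation_seconds(tokens, tokens_per_second):
    """Time to generate `tokens` tokens at a steady rate."""
    return tokens / tokens_per_second

for label, rate in TOKENS_PER_SECOND.items():
    seconds = generation_seconds(REPLY_TOKENS, rate)
    print(f"{label}: ~{seconds:.1f} s for {REPLY_TOKENS} tokens")

speedup = TOKENS_PER_SECOND["Llama 3 8B Q4KM"] / TOKENS_PER_SECOND["Llama 3 8B F16"]
print(f"8B Q4KM vs. 8B F16 speedup: {speedup:.2f}x")
```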

Analogy Time: Imagine you're trying to fit as many bags of chips into a backpack as possible. Quantization is like using smaller bags, allowing you to pack more, even though there might be a bit less volume in each bag.

Conclusion: A100 SXM 80GB, a Powerhouse for LLM Inference

The NVIDIA A100 SXM 80GB proves to be a powerful tool for accelerating LLM inference. Its memory capacity and bandwidth let it run models as large as Llama 3 70B (with Q4KM quantization) on a single card, while smaller models like Llama 3 8B reach well over 100 tokens per second when quantized.

By understanding the trade-offs between model size, quantization techniques, and precision, we can optimize LLM performance and unlock the true potential of these powerful models. The A100 SXM 80GB, coupled with clever optimization techniques, paves the way for the future of LLM applications.

FAQ

Q: What is LLM inference?

A: LLM inference is the process of using a trained LLM to generate outputs like text, translate languages, answer questions, or create content. It's essentially the "thinking" part of the LLM, where it takes an input and produces a relevant output.

Q: What is quantization and how does it affect performance?

A: Quantization is a method for compressing the weights of a neural network, which can reduce its size and potentially improve inference performance. It does this by reducing the number of bits used to represent the weights, leading to less memory usage and faster processing.

Q: What are the trade-offs between model size and performance?

A: Larger LLMs have more parameters, which can lead to more accurate and nuanced outputs but also require more resources for inference. Smaller LLMs, while less powerful, can run faster and consume less memory.

Q: Can I use a cheaper GPU for LLM inference?

A: Yes, but expect lower performance. A less powerful GPU typically has less memory bandwidth, so it generates fewer tokens per second and responses arrive more slowly. It usually has less memory too, so larger models may need heavier quantization or may not fit at all.

Keywords

LLM, Large Language Model, Inference, NVIDIA, A100SXM80GB, GPU, Llama 3, 70B, 8B, Q4KM, Quantization, Float16, Int4, Performance, Tokens/Second, Text Generation, Translation, Answer Questions, Speed, Optimization, AI, Machine Learning.