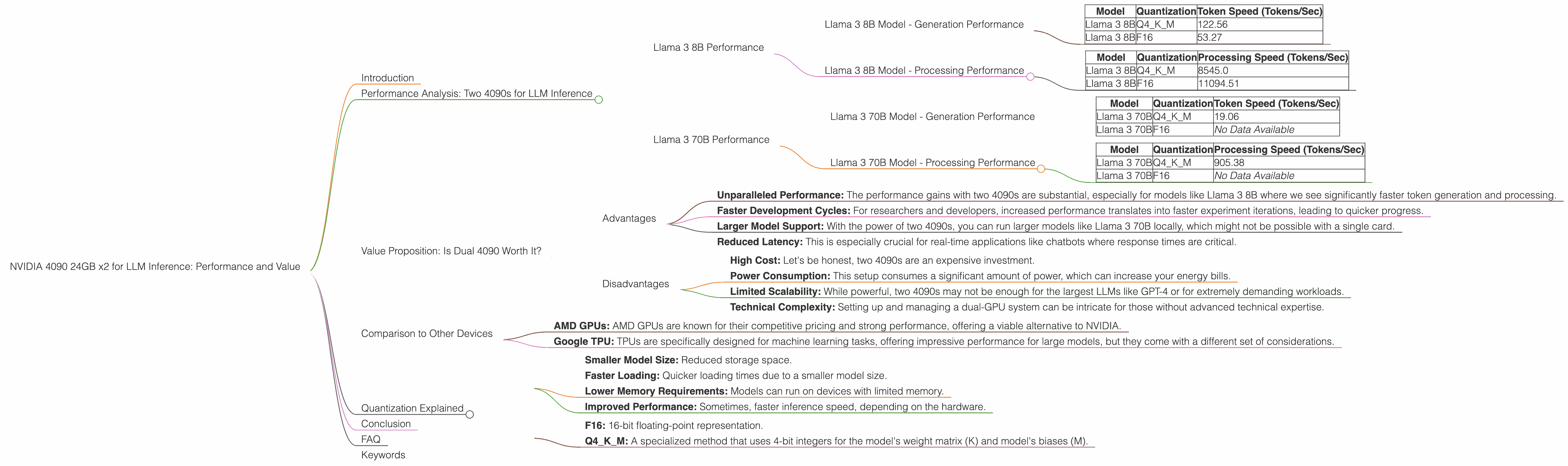

NVIDIA 4090 24GB x2 for LLM Inference: Performance and Value

Introduction

The world of large language models (LLMs) is booming, and with it the need for powerful hardware to handle their computationally demanding workloads. One popular choice for LLM inference is the NVIDIA GeForce RTX 4090, a high-end graphics card known for its raw processing power. But what about using two 4090s in tandem for even greater performance?

That's what we'll explore in this article. We'll dive into the performance and value proposition of using two NVIDIA 4090 24GB cards for running LLM inference, focusing on the popular Llama 3 models. We'll be looking at what makes this setup a potential game-changer for researchers, developers, and anyone looking to run LLMs locally.

Performance Analysis: Two 4090s for LLM Inference

This section analyzes the performance of two NVIDIA 4090 24GB cards running Llama 3 models. We examine the tokens per second (tokens/sec) achieved in two phases: processing (reading the input prompt, where many tokens are handled in parallel) and generation (producing output text one token at a time), across different models and quantization levels.

Important Notes:

- We'll only be focusing on the performance for the NVIDIA 4090 24GB x2 configuration in this article.

- This data is based on currently available benchmarks from the llama.cpp community.

- Some combinations may not have data available.

Llama 3 8B Performance

The Llama 3 8B model is a popular choice for researchers and developers due to its manageable size while still exhibiting impressive performance.

Llama 3 8B Model - Generation Performance

| Model | Quantization | Generation Speed (tokens/sec) |
|---|---|---|
| Llama 3 8B | Q4_K_M | 122.56 |
| Llama 3 8B | F16 | 53.27 |

What does this mean?

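The table shows that, with two 4090 24GB cards, the Q4_K_M version of Llama 3 8B generates over 122 tokens per second, about 2.3 times the speed of the unquantized F16 version (122.56 / 53.27 ≈ 2.30). Q4_K_M is a 4-bit quantization format that shrinks the model's memory footprint while keeping output quality close to the original. Generation is largely limited by how quickly the weights can be read from GPU memory, so smaller weights translate into faster generation. Note that the table contains no single-card baseline, so it does not show how much the second card contributes; an 8B model already fits on one 24 GB card in either format.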
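To make the comparison concrete, here is a minimal sketch that computes the ratio between the two generation speeds. The numbers are the ones from the table; the `speedup` helper is just an illustrative name.

```python
# Generation speeds for Llama 3 8B on two RTX 4090s (tokens/sec), from the table.
gen_speed = {"Q4_K_M": 122.56, "F16": 53.27}


def speedup(fast: float, slow: float) -> float:
    """Return how many times faster `fast` is than `slow`."""
    return fast / slow


ratio = speedup(gen_speed["Q4_K_M"], gen_speed["F16"])
print(f"Q4_K_M generates {ratio:.2f}x as fast as F16")
```

The result is closer to 2.3x than to 2x, which is worth knowing when deciding whether the small quality loss of 4-bit quantization is acceptable.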

Llama 3 8B Model - Processing Performance

| Model | Quantization | Processing Speed (tokens/sec) |
|---|---|---|
| Llama 3 8B | Q4_K_M | 8545.0 |
| Llama 3 8B | F16 | 11094.51 |

What does this mean?

Prompt processing is far faster than generation, because the GPUs can work on many prompt tokens in parallel. With Q4_K_M quantization, the dual 4090s process 8545 tokens per second, while F16 reaches 11094.51 tokens per second, about 30% more. Unlike generation, this phase is limited mainly by compute rather than by memory bandwidth, so the smaller quantized weights bring no advantage here, and converting them back to higher precision during the computation likely adds some overhead.
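Processing and generation speeds together give a rough idea of how long a single request takes. The sketch below is a back-of-the-envelope estimate only: it assumes the benchmark speeds hold for the chosen prompt and output lengths and ignores model loading, sampling, and other overhead. The request size (2000 prompt tokens, 500 output tokens) is a made-up example, and `request_seconds` is an illustrative helper.

```python
# Llama 3 8B speeds on two RTX 4090s (tokens/sec), from the tables in this section.
SPEEDS = {
    "Q4_K_M": {"prompt": 8545.0, "generate": 122.56},
    "F16": {"prompt": 11094.51, "generate": 53.27},
}


def request_seconds(quant: str, prompt_tokens: int, output_tokens: int) -> float:
    """Estimate request time as prompt-processing time plus generation time."""
    speed = SPEEDS[quant]
    return prompt_tokens / speed["prompt"] + output_tokens / speed["generate"]


# Hypothetical request: a 2000-token prompt and a 500-token answer.
for quant in SPEEDS:
    seconds = request_seconds(quant, prompt_tokens=2000, output_tokens=500)
    print(f"{quant}: about {seconds:.2f} s")
```

For a request like this, generation dominates the total time, so the faster prompt processing of F16 does not make up for its slower generation.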

Llama 3 70B Performance

The Llama 3 70B model is a much larger, more complex LLM, capable of generating higher quality outputs. However, its size also presents significant challenges in terms of memory consumption and processing demands.

Llama 3 70B Model - Generation Performance

| Model | Quantization | Generation Speed (tokens/sec) |
|---|---|---|
| Llama 3 70B | Q4_K_M | 19.06 |
| Llama 3 70B | F16 | No Data Available |

What does this mean?

The dual 4090 24GB setup can run the Llama 3 70B model with Q4_K_M quantization, achieving 19.06 tokens per second. No F16 data is available for this model and configuration, which is expected: at 2 bytes per parameter, the F16 weights of a 70B model take roughly 140 GB, far more than the 48 GB the two cards provide together.

Llama 3 70B Model - Processing Performance

| Model | Quantization | Processing Speed (tokens/sec) |
|---|---|---|
| Llama 3 70B | Q4_K_M | 905.38 |
| Llama 3 70B | F16 | No Data Available |

What does this mean?

As with generation, no F16 processing data is available for Llama 3 70B on two 4090s, since the F16 weights (roughly 140 GB) do not fit in 48 GB of GPU memory. With Q4_K_M quantization, the setup processes 905.38 tokens per second.
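A quick size estimate helps explain which model and quantization combinations can run on this setup at all. The sketch below is a rough calculation under stated assumptions: the figure of about 4.8 bits per weight for Q4_K_M is an approximation rather than an exact specification, parameter counts are rounded to 8 and 70 billion, and the estimate covers the weights only, not the KV cache or other runtime buffers.

```python
GPU_MEMORY_GB = 2 * 24  # two RTX 4090 cards with 24 GB each

# Approximate storage per weight in bits.
# The Q4_K_M value is an assumed average, not an exact figure.
BITS_PER_WEIGHT = {"F16": 16.0, "Q4_K_M": 4.8}


def weight_gb(params_billion: float, quant: str) -> float:
    """Approximate size of the weights in GB (1 GB = 1e9 bytes)."""
    return params_billion * BITS_PER_WEIGHT[quant] / 8


for params in (8, 70):
    for quant in BITS_PER_WEIGHT:
        size = weight_gb(params, quant)
        verdict = "fits" if size < GPU_MEMORY_GB else "does not fit"
        print(f"Llama 3 {params}B {quant}: ~{size:.0f} GB, {verdict} in {GPU_MEMORY_GB} GB")
```

Under these assumptions, the 70B model at Q4_K_M lands a little above 40 GB: too large for one 24 GB card, but within reach of two, leaving only a few GB for context.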

Value Proposition: Is Dual 4090 Worth It?

So, is the investment in two NVIDIA 4090s worth it for LLM inference? The answer depends on your use case and budget.

Advantages

Here's a breakdown of the key advantages of utilizing two 4090s:

- High Performance: On Llama 3 8B, the two cards reach over 120 tokens per second for generation and several thousand tokens per second for prompt processing.

- Faster Development Cycles: For researchers and developers, increased performance translates into faster experiment iterations, leading to quicker progress.

- Larger Model Support: With 48 GB of combined memory, you can run larger models like Llama 3 70B locally. Even at 4 bits per weight, a 70B model needs about 35 GB for its weights alone, which exceeds a single card's 24 GB.

- Reduced Latency: Higher tokens per second means shorter response times, which is especially important for real-time applications like chatbots.

Disadvantages

- High Cost: Let's be honest, two 4090s are an expensive investment.

- Power Consumption: This setup consumes a significant amount of power, which can increase your energy bills.

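- Limited Scalability: While powerful, two 4090s offer 48 GB of memory in total, which is not enough for the largest open-weight LLMs or for extremely demanding workloads. Proprietary models such as GPT-4 cannot be run locally at all, since their weights are not publicly available.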

- Technical Complexity: Setting up and managing a dual-GPU system can be intricate for those without advanced technical expertise.

Comparison to Other Devices

While this article focuses on the capabilities of two NVIDIA 4090 24GB cards, it's worth noting that other high-end GPUs can also be used for LLM inference.

- AMD GPUs: AMD GPUs are known for their competitive pricing and strong performance, offering a viable alternative to NVIDIA.

- Google TPU: TPUs are specifically designed for machine learning tasks, offering impressive performance for large models, but they come with a different set of considerations.

Important Note: This article doesn't compare the performance of other GPUs to the dual 4090 setup. Our focus is on understanding the performance and value proposition of this specific configuration for LLM inference.

Quantization Explained

Quantization is a technique commonly used with LLMs to reduce the size of the model's weights, the core parameters that define the model's behavior. It converts the parameters, which are typically stored as 16-bit or 32-bit floating-point numbers, into lower-precision representations such as 8-bit or 4-bit integers, usually with a shared scale factor for each small block of weights.

Why is this useful? Here's a simple analogy:

Imagine you have a huge library filled with books. Each book represents a parameter in the LLM. Now, imagine you want to move this library to a smaller space. Quantization is like taking those heavy books and replacing them with lighter versions.

What are the benefits of Quantization?

- Smaller Model Size: Reduced storage space.

- Faster Loading: Quicker loading times due to a smaller model size.

- Lower Memory Requirements: Models can run on devices with limited memory.

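- Improved Performance: Often faster token generation, since less data has to be read from memory for each token. In the Llama 3 8B results above, the 4-bit model generates about 2.3 times as fast as F16, although F16 is faster at prompt processing.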

Common Quantization Levels:

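- F16: 16-bit floating-point representation. In the benchmarks above it serves as the unquantized baseline.

- Q4_K_M: One of llama.cpp's "k-quant" formats. It stores most weights in roughly 4 bits each, grouped into blocks with shared scale factors. The "M" stands for the medium variant, which keeps some tensors at higher precision to limit the quality loss.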

While quantization can bring significant advantages, it can also sometimes lead to a slight reduction in model accuracy.
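The basic idea can be illustrated with a toy example. The sketch below quantizes one small block of weights to signed 4-bit integers with a single shared scale, then restores them and measures the rounding error. It is a simplified illustration, not the actual Q4_K_M algorithm used by llama.cpp, and the weight values are made up.

```python
def quantize_block(weights, bits=4):
    """Symmetric quantization: one float scale plus one small integer per weight."""
    qmax = 2 ** (bits - 1) - 1  # 7 for signed 4-bit values
    # Fall back to a scale of 1.0 if every weight in the block is zero.
    scale = max(abs(w) for w in weights) / qmax or 1.0
    quantized = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return scale, quantized


def dequantize_block(scale, quantized):
    """Recover approximate float weights from the scale and the integers."""
    return [scale * q for q in quantized]


# Made-up example weights for one block.
weights = [0.12, -0.53, 0.31, 0.07, -0.02, 0.44, -0.28, 0.19]
scale, quantized = quantize_block(weights)
restored = dequantize_block(scale, quantized)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("scale:", scale)
print("integers:", quantized)
print(f"largest rounding error: {max_error:.4f}")
```

Each weight now needs only 4 bits plus its share of the block's scale, at the cost of a rounding error of at most half the scale. That error is the source of the small accuracy loss mentioned above.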

Conclusion

The dual NVIDIA 4090 24GB setup delivers impressive performance for LLM inference, especially for models like Llama 3 8B. The increased token generation and processing speeds, coupled with the capability to run larger models, make it a valuable tool for researchers and developers. However, the high cost, energy consumption, and technical complexity are important factors to consider before investing in this configuration.

FAQ

Q: Can I run Llama 2 models with two 4090s?

Yes. llama.cpp supports Llama 2 models as well. The 7B and 13B models fit comfortably in 48 GB, while the 70B model needs 4-bit or similar quantization, just like Llama 3 70B.

Q: What about other LLMs like GPT-3 or GPT-4?

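GPT-3 and GPT-4 are proprietary models whose weights are not publicly available, so they cannot be run on local hardware regardless of the GPU setup. For open-weight models, the practical limit of the dual 4090 setup is its 48 GB of combined memory: models whose quantized weights exceed that will not fit entirely on the GPUs.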

Q: Is two 4090s the best option for LLM inference?

It depends on your specific needs. Among consumer GPUs, two 4090s are a strong contender for the highest performance. However, other options, such as GPUs from AMD, might offer a better cost-to-performance ratio; this article does not include benchmarks to confirm that.

Q: What are some alternatives to using a dedicated GPU for LLM inference?

You can consider cloud-based services like Google Colab or Amazon SageMaker, which provide access to powerful infrastructure without the need for local hardware.
