NVIDIA 4090 24GB vs. NVIDIA RTX 5000 Ada 32GB for LLMs: Which is Faster in Token Generation Speed? Benchmark Analysis

Introduction

In the fast-paced world of Large Language Models (LLMs), choosing the right hardware can be a game-changer for developers and researchers alike. This article compares two powerful GPUs, the NVIDIA 4090 24GB and the NVIDIA RTX 5000 Ada 32GB, on how fast they run Llama 3 models. We'll examine token generation and prompt processing speed for Llama 3 8B at two quantization levels, analyze each card's strengths and weaknesses, and provide practical recommendations for various use cases.

Imagine you're building a custom chatbot or developing a creative writing tool using a powerful LLM like Llama 3. Your choice of GPU can significantly impact the speed at which your application responds, ultimately influencing the user experience.

Performance Analysis: NVIDIA 4090 24GB vs. NVIDIA RTX 5000 Ada 32GB

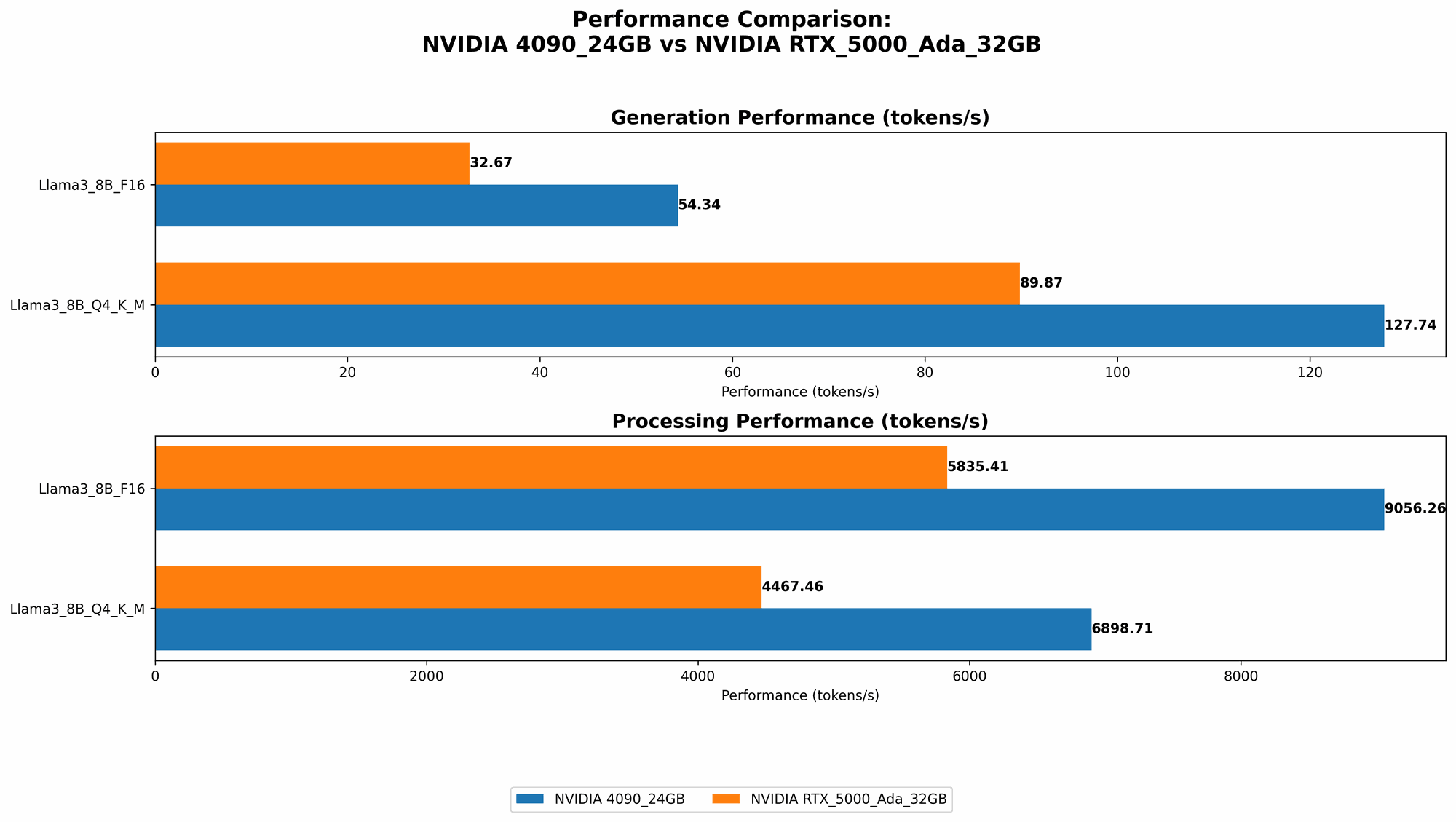

Token Generation Speed Comparison

| GPU | Model | Quantization | Token Generation Speed (tokens/second) |
|---|---|---|---|
| NVIDIA 4090 24GB | Llama 3 8B | Q4_K_M | 127.74 |
| NVIDIA 4090 24GB | Llama 3 8B | F16 | 54.34 |
| NVIDIA RTX 5000 Ada 32GB | Llama 3 8B | Q4_K_M | 89.87 |
| NVIDIA RTX 5000 Ada 32GB | Llama 3 8B | F16 | 32.67 |

Note: The data for Llama 3 70B models is not available for either GPU in this benchmark analysis.

Observations:

- The NVIDIA 4090 24GB generates tokens faster than the NVIDIA RTX 5000 Ada 32GB at both quantization levels (Q4_K_M and F16) for Llama 3 8B.

- The 4090 24GB generates about 42% more tokens per second with Q4_K_M quantization and about 66% more with F16 than the RTX 5000 Ada 32GB.
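The percentages above follow directly from the table. The sketch below recomputes them and, as a rough point of comparison, sets them against the two cards' peak memory bandwidth. The bandwidth figures (1,008 GB/s for the 4090 and 576 GB/s for the RTX 5000 Ada) are assumptions taken from NVIDIA's published specifications, not from this benchmark. The F16 gap (1.66x) comes close to the 1.75x bandwidth ratio, which is consistent with, though not proof of, token generation being limited mainly by how fast weights are read from memory; the smaller Q4_K_M gap suggests other overheads matter more once the model shrinks.

```python
# Measured generation speeds (tokens/s) for Llama 3 8B, from the table above.
TG = {
    ("RTX 4090", "Q4_K_M"): 127.74,
    ("RTX 4090", "F16"): 54.34,
    ("RTX 5000 Ada", "Q4_K_M"): 89.87,
    ("RTX 5000 Ada", "F16"): 32.67,
}

# Assumption: peak memory bandwidth in GB/s from NVIDIA's published specs.
BANDWIDTH_GBPS = {"RTX 4090": 1008.0, "RTX 5000 Ada": 576.0}


def speedup(quant: str) -> float:
    """How many times faster the 4090 generates tokens than the RTX 5000 Ada."""
    return TG[("RTX 4090", quant)] / TG[("RTX 5000 Ada", quant)]


bandwidth_ratio = BANDWIDTH_GBPS["RTX 4090"] / BANDWIDTH_GBPS["RTX 5000 Ada"]

for quant in ("Q4_K_M", "F16"):
    s = speedup(quant)
    print(f"{quant}: {s:.2f}x ({(s - 1) * 100:.0f}% faster)")
print(f"Peak bandwidth ratio: {bandwidth_ratio:.2f}x")
```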

Understanding Quantization: A Simple Analogy

Think of quantization as compressing the model's weights. Q4_K_M stores each weight in roughly 4 to 5 bits instead of F16's 16 bits, so the model takes about a third of the memory and the GPU reads far less data for every token it generates; the trade-off is a small loss of accuracy. F16 keeps more precision, potentially producing slightly more accurate outputs, but generates tokens more slowly.
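To put numbers on the "file size" idea, the footprint of a model's weights is roughly parameters × bits per weight ÷ 8. This sketch assumes approximate parameter counts (8.03B for Llama 3 8B, 70.6B for Llama 3 70B) and an average of about 4.9 bits per weight for Q4_K_M; it counts weights only and ignores the KV cache and runtime overhead, so real memory use is higher. Under these assumptions it also hints at why no 70B results appear: even at Q4_K_M the weights alone come to about 40 GiB, more than either card holds.

```python
# Rough weight-memory estimate: parameters x bits-per-weight / 8.
# Assumptions: approximate parameter counts, and ~4.9 bits/weight as an
# approximate average for Q4_K_M (it mixes 4-bit and 6-bit k-quant blocks).
GIB = 2**30

PARAMS = {"Llama 3 8B": 8.03e9, "Llama 3 70B": 70.6e9}
BITS_PER_WEIGHT = {"F16": 16.0, "Q4_K_M": 4.9}
VRAM_GIB = {"RTX 4090": 24, "RTX 5000 Ada": 32}


def weight_gib(params: float, bits: float) -> float:
    """Size of the weights alone in GiB (no KV cache or runtime overhead)."""
    return params * bits / 8 / GIB


def report() -> dict:
    """Print and return (size, fits-per-GPU) for every model/quantization pair."""
    results = {}
    for model, params in PARAMS.items():
        for quant, bits in BITS_PER_WEIGHT.items():
            size = weight_gib(params, bits)
            # Weights-only check; a real deployment needs extra headroom.
            fits = {gpu: size < cap for gpu, cap in VRAM_GIB.items()}
            results[(model, quant)] = (size, fits)
            print(f"{model:12} {quant:7} {size:6.1f} GiB  fits: {fits}")
    return results


if __name__ == "__main__":
    report()
```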

Token Processing Speed Comparison

| GPU | Model | Quantization | Token Processing Speed (tokens/second) |
|---|---|---|---|
| NVIDIA 4090 24GB | Llama 3 8B | Q4_K_M | 6898.71 |
| NVIDIA 4090 24GB | Llama 3 8B | F16 | 9056.26 |
| NVIDIA RTX 5000 Ada 32GB | Llama 3 8B | Q4_K_M | 4467.46 |
| NVIDIA RTX 5000 Ada 32GB | Llama 3 8B | F16 | 5835.41 |

Observations:

- The NVIDIA 4090 24GB also processes tokens faster than the RTX 5000 Ada 32GB at both quantization levels (Q4_K_M and F16) for Llama 3 8B.

- The 4090 24GB processes about 54% more tokens per second with Q4_K_M quantization and about 55% more with F16 than the RTX 5000 Ada 32GB.
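The processing table holds a detail worth checking: unlike generation, prompt processing runs faster at F16 than at Q4_K_M on both cards, by about 1.31x. A plausible explanation, which this benchmark does not confirm, is that prompt processing is limited by compute rather than memory bandwidth, so the extra work of dequantizing 4-bit weights outweighs the savings in data read. The sketch recomputes both comparisons from the table.

```python
# Measured prompt-processing speeds (tokens/s) for Llama 3 8B, from the table above.
PP = {
    ("RTX 4090", "Q4_K_M"): 6898.71,
    ("RTX 4090", "F16"): 9056.26,
    ("RTX 5000 Ada", "Q4_K_M"): 4467.46,
    ("RTX 5000 Ada", "F16"): 5835.41,
}

GPUS = ("RTX 4090", "RTX 5000 Ada")

# F16 vs Q4_K_M on the same card: values above 1 mean F16 processes prompts faster.
f16_gain = {gpu: PP[(gpu, "F16")] / PP[(gpu, "Q4_K_M")] for gpu in GPUS}

# RTX 4090 vs RTX 5000 Ada at the same quantization level.
gpu_gain = {q: PP[("RTX 4090", q)] / PP[("RTX 5000 Ada", q)] for q in ("Q4_K_M", "F16")}

for gpu, g in f16_gain.items():
    print(f"{gpu}: F16 runs at {g:.2f}x the Q4_K_M prompt speed")
for q, g in gpu_gain.items():
    print(f"{q}: RTX 4090 runs at {g:.2f}x the RTX 5000 Ada")
```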

Strengths and Weaknesses

NVIDIA 4090 24GB:

Strengths:

- Faster Token Generation and Processing: On Llama 3 8B, the 4090 24GB generates tokens 42-66% faster and processes prompts about 55% faster than the RTX 5000 Ada 32GB, making it well suited to real-time applications that need quick responses.

- High Memory Bandwidth: Its 24GB of GDDR6X memory delivers about 1 TB/s of bandwidth, which likely accounts for much of its lead in token generation. Note, however, that 24GB is less memory than the RTX 5000 Ada's 32GB, so it fits smaller models and contexts.

Weaknesses:

- Premium Price: The 4090 24GB is a high-end consumer GPU with a premium price tag, which may present a barrier for some budgets.

- Power Consumption: The 4090 is rated at 450W of total board power, far above the RTX 5000 Ada's 250W, which can be a concern for power supply and cooling.

NVIDIA RTX 5000 Ada 32GB:

Strengths:

- Larger Memory Capacity: Its 32GB of GDDR6 memory is 8GB more than the 4090 offers, leaving room for larger models, longer contexts, or bigger batches on a single card.

- Lower Power Consumption: Its 250W maximum power draw is well below the 4090's 450W, making it a more energy-efficient choice.

Weaknesses:

- Slower Token Speed: Despite its larger 32GB memory, it generates tokens 30-40% slower and processes prompts about 35% slower than the 4090 24GB, likely reflecting its lower memory bandwidth (576 GB/s versus 1,008 GB/s). This can hurt real-time applications.

- Memory Still Has Limits: Although 32GB is more than the 4090's 24GB, it still cannot hold the largest models on a single card; Llama 3 70B needs roughly 40GB for its weights alone even at 4-bit quantization.

Use Case Recommendations

NVIDIA 4090 24GB:

- Real-time Chatbots: For building chatbots that require rapid responses, the 4090 24GB's speed advantage is a clear benefit.

- Large-Scale Text Generation: Tasks like generating long stories, articles, or code benefit from the 4090 24GB's higher generation speed, as long as the model fits in its 24GB of memory.

- Research and Development: Researchers exploring new LLM architectures or developing advanced text generation models could benefit from the 4090 24GB's capabilities.

NVIDIA RTX 5000 Ada 32GB:

- Beginner LLM Projects: Developers starting with LLMs and working with smaller models might find the RTX 5000 Ada 32GB's lower power draw and memory headroom attractive.

- Batch Processing: If you're dealing with bulk text generation or analysis tasks, the RTX 5000 Ada 32GB's larger 32GB memory can hold bigger batches or longer contexts, although each job will take longer.

- Power-Constrained Systems: For workstations with limited power or cooling headroom, the RTX 5000 Ada 32GB offers a balance between performance and power consumption.

Conclusion

Choosing between the NVIDIA 4090 24GB and the RTX 5000 Ada 32GB depends on your specific use case. The 4090 24GB is faster at both token generation and prompt processing but draws considerably more power. The RTX 5000 Ada 32GB offers 8GB more memory and lower power consumption, at the cost of slower processing.

Ultimately, the best GPU for LLM inference is the one that best fits your project's requirements, budget, and performance expectations.

FAQ

Q: What is the difference between token generation and token processing?

A: Token generation measures how quickly a model produces new tokens (roughly, words or word pieces), one at a time; it determines how fast a response appears. Token processing, often called prompt processing, measures how quickly a model reads the existing input tokens. Because the whole prompt can be processed in parallel, processing speeds are far higher than generation speeds.
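To see how the two rates combine, a rough estimate of response time is prompt length ÷ processing speed plus response length ÷ generation speed. The sketch below uses the Llama 3 8B Q4_K_M figures from the tables and a hypothetical 2,000-token prompt with a 300-token answer. It assumes both rates stay constant regardless of length, which is a simplification.

```python
# Rough end-to-end latency: process the whole prompt, then generate one token at a time.
# Speeds are the Llama 3 8B Q4_K_M results from the tables; prompt and response
# lengths used below are hypothetical examples.
SPEEDS = {  # (prompt processing tok/s, token generation tok/s)
    "RTX 4090": (6898.71, 127.74),
    "RTX 5000 Ada": (4467.46, 89.87),
}


def response_time(gpu: str, prompt_tokens: int, output_tokens: int) -> float:
    """Estimated seconds to read the prompt and generate the full response."""
    pp, tg = SPEEDS[gpu]
    return prompt_tokens / pp + output_tokens / tg


for gpu in SPEEDS:
    t = response_time(gpu, prompt_tokens=2000, output_tokens=300)
    print(f"{gpu}: {t:.2f} s")
```

With these numbers the estimate comes out near 2.6 s on the 4090 and 3.8 s on the RTX 5000 Ada, and generation accounts for most of that time even with a fairly long prompt.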

Q: How does quantization impact LLM performance?

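A: Quantization stores a model's weights at lower precision (roughly 4 to 5 bits per weight for Q4_K_M versus 16 for F16). This shrinks the memory footprint, makes the model faster to load, and usually speeds up token generation, although the token processing results above show it is not faster for every workload. The cost is some lost precision, which can slightly reduce output quality.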

Q: What are some other GPUs for LLM models?

A: Besides the NVIDIA 4090 24GB and RTX 5000 Ada 32GB, other popular GPUs for LLMs include the NVIDIA A100, A40, and H100. These GPUs offer different performance levels and memory capacities, so the optimal choice depends on your LLM model and use case.

Q: Is it possible to run LLMs on a CPU?

A: Yes, it is possible to run LLMs on a CPU, but it will be significantly slower than using a GPU, especially for larger models.

Q: Can I run LLMs on my personal computer?

A: Yes. Small models can run on a CPU alone, though slowly. For larger models or faster responses, you need a dedicated GPU with enough memory to hold the model, such as the ones discussed in this article.

Keywords

NVIDIA 4090 24GB, NVIDIA RTX 5000 Ada 32GB, LLM, Large Language Model, Llama 3, Token Generation Speed, Token Processing Speed, Quantization, Q4_K_M, F16, GPU, Performance Benchmark, AI, Machine Learning, Deep Learning, Text Generation, Chatbot, Natural Language Processing, NLP.