NVIDIA 4090 24GB vs. NVIDIA A100 PCIe 80GB for LLMs: Which is Faster in Token Generation Speed? Benchmark Analysis

Introduction

The world of Large Language Models (LLMs) is buzzing with excitement, and rightly so! These powerful AI systems are revolutionizing how we interact with technology, from generating creative content to translating languages and answering complex questions. However, running LLMs locally can be resource-intensive, demanding powerful hardware to handle the massive computations involved. This is where the choice of hardware comes in, and two popular contenders are NVIDIA's GeForce RTX 4090 24GB and the A100 PCIe 80GB.

This article dives deep into the performance differences between the NVIDIA 4090 24GB and the A100 PCIe 80GB when it comes to running LLMs, focusing specifically on token generation speed. We'll analyze benchmark data, break down the results, and compare the strengths and weaknesses of each device to help you make an informed decision for your LLM endeavors.

Choosing the Right Hardware for LLMs: NVIDIA 4090 24GB vs. A100 PCIe 80GB

Deciding between these two powerhouse GPUs is a crucial step in setting up your local LLM environment. Both GPUs are known for their impressive performance, but they offer distinct advantages depending on your specific needs.

NVIDIA 4090 24GB: The Consumer-Focused Powerhouse

The NVIDIA 4090 24GB is a top-tier consumer-grade GPU aimed at gamers and enthusiasts. It boasts powerful processing capabilities and a generous 24GB of GDDR6X memory, making it a compelling choice for running LLMs, especially for smaller models.

NVIDIA A100 PCIe 80GB: The Data Center Champion

The NVIDIA A100 PCIe 80GB, on the other hand, is designed for the demanding world of data centers and high-performance computing. It's equipped with a massive 80GB of HBM2e memory, making it an ideal choice for large LLMs that need substantial memory to run efficiently.
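A rough way to see why memory capacity matters is to estimate the size of the model weights: parameter count times bits per weight, divided by 8. The Python sketch below does this for Llama 3 8B and 70B. The parameter counts (about 8.03 and 70.6 billion), the ~4.85 bits per weight assumed for 4-bit Q4_K_M quantization, and the flat 2 GiB allowance for KV cache and runtime buffers are approximations chosen for illustration, not measured values.

```python
GIB = 1024**3  # bytes per GiB

# Approximate parameter counts (assumptions for illustration).
PARAMS = {"Llama 3 8B": 8.03e9, "Llama 3 70B": 70.6e9}
# Effective bits per weight; the Q4_K_M figure is an approximation.
BITS_PER_WEIGHT = {"F16": 16.0, "Q4_K_M": 4.85}


def weight_gib(params: float, bits_per_weight: float) -> float:
    """Approximate size of the weights alone, in GiB."""
    return params * bits_per_weight / 8 / GIB


def fits(params: float, bits_per_weight: float, vram_gib: float,
         overhead_gib: float = 2.0) -> bool:
    """True if weights plus a flat (hypothetical) allowance for KV cache and buffers fit."""
    return weight_gib(params, bits_per_weight) + overhead_gib <= vram_gib


for model, n in PARAMS.items():
    for quant, bits in BITS_PER_WEIGHT.items():
        print(
            f"{model} {quant}: ~{weight_gib(n, bits):.1f} GiB, "
            f"fits in 24 GiB: {fits(n, bits, 24)}, fits in 80 GiB: {fits(n, bits, 80)}"
        )
```

Under these assumptions, Llama 3 8B fits on either card at both precisions, Llama 3 70B at Q4_K_M fits only in 80 GiB, and Llama 3 70B at F16 fits in neither.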

Benchmark Analysis: Token Generation Speed Showdown

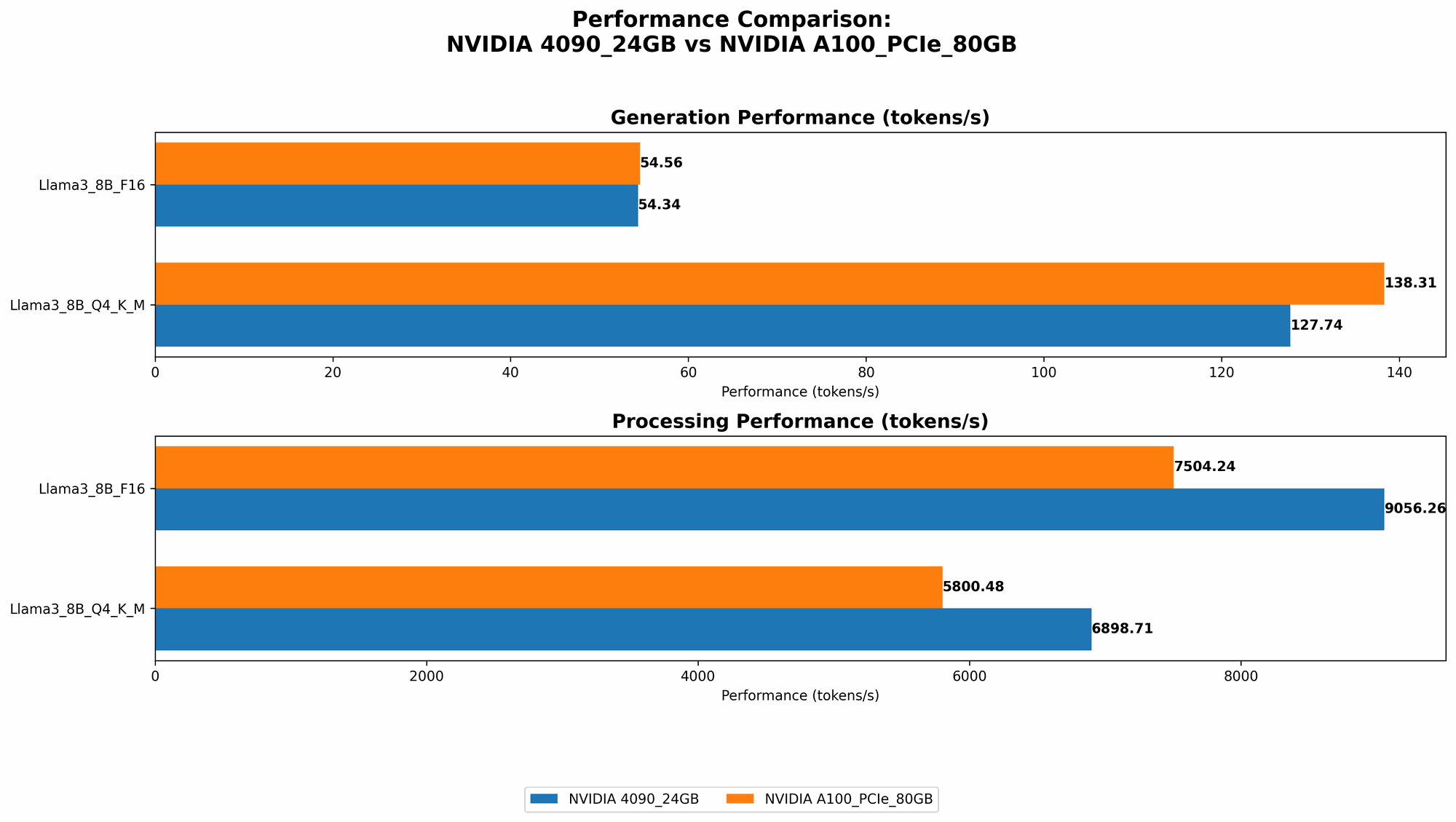

Now, let's get down to business and compare the token generation speed of these two GPUs using real-world benchmark data. We'll focus on the recently released Llama 3 models, which are known for their impressive performance and versatility.

Benchmark Data: Token Generation Speed (Tokens/Second)

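The following table shows token generation ("Generation") and prompt processing ("Processing") speeds, in tokens per second, for Llama 3 8B and 70B on both the NVIDIA 4090 24GB and A100 PCIe 80GB, at two precision levels: Q4_K_M (a 4-bit quantization format from llama.cpp) and F16 (16-bit floating point).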

| Model, precision, test | NVIDIA 4090 24GB (tokens/s) | NVIDIA A100 PCIe 80GB (tokens/s) |
|---|---|---|
| Llama 3 8B Q4_K_M Generation | 127.74 | 138.31 |
| Llama 3 8B F16 Generation | 54.34 | 54.56 |
| Llama 3 70B Q4_K_M Generation | N/A | 22.11 |
| Llama 3 70B F16 Generation | N/A | N/A |
| Llama 3 8B Q4_K_M Processing | 6898.71 | 5800.48 |
| Llama 3 8B F16 Processing | 9056.26 | 7504.24 |
| Llama 3 70B Q4_K_M Processing | N/A | 726.65 |
| Llama 3 70B F16 Processing | N/A | N/A |

Note: "N/A" indicates that no benchmark data was available for that specific LLM model and device configuration.
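To put the table in relative terms, the short Python sketch below divides each A100 figure by the matching 4090 figure for Llama 3 8B (values above 1 mean the A100 is faster). The numbers are copied from the table; nothing else is assumed.

```python
# Llama 3 8B throughput in tokens/s, copied from the benchmark table: (4090, A100).
RESULTS = {
    "Q4_K_M generation": (127.74, 138.31),
    "F16 generation": (54.34, 54.56),
    "Q4_K_M processing": (6898.71, 5800.48),
    "F16 processing": (9056.26, 7504.24),
}


def a100_relative_speed(rtx4090: float, a100: float) -> float:
    """A100 throughput as a multiple of the 4090's (>1 means the A100 is faster)."""
    return a100 / rtx4090


for name, (rtx4090, a100) in RESULTS.items():
    print(f"{name:18s} A100 / 4090 = {a100_relative_speed(rtx4090, a100):.3f}")
```

It reports roughly 1.08 and 1.00 for generation (Q4_K_M, F16) and roughly 0.84 and 0.83 for prompt processing.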

Decoding the Data: What the Numbers Tell Us

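- Smaller Models (Llama 3 8B): The two GPUs generate tokens at similar speeds. With Q4_K_M the A100 is about 8% faster (138.31 vs. 127.74 tokens/s); with F16 they are effectively tied (54.56 vs. 54.34 tokens/s). In prompt processing the ranking flips: the 4090 is about 19% faster with Q4_K_M (6898.71 vs. 5800.48 tokens/s) and about 21% faster with F16 (9056.26 vs. 7504.24 tokens/s).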

- Larger Models (Llama 3 70B): Only the A100 PCIe 80GB has 70B results: 22.11 tokens/s for generation and 726.65 tokens/s for prompt processing with Q4_K_M. The 4090 has no 70B figures, so a direct speed comparison is not possible. The likely reason is capacity: even at 4-bit precision the 70B weights need roughly 40GB, more than the 4090's 24GB but well within the A100's 80GB. At F16 the model needs about 140GB, more than either card offers, which is consistent with the N/A entries for both devices.

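- Quantization: Q4_K_M vs. F16: Q4_K_M speeds up token generation considerably on both devices: about 2.35x on the 4090 (127.74 vs. 54.34 tokens/s) and about 2.5x on the A100 (138.31 vs. 54.56 tokens/s). Generating each token requires reading essentially all model weights from GPU memory, so generation is largely limited by memory bandwidth, and 4-bit weights mean far fewer bytes to read than 16-bit ones. Prompt processing behaves differently: it handles many tokens in parallel and is more compute-bound, and in this data F16 is actually faster than Q4_K_M on both GPUs.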
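The bandwidth argument can be made concrete with a back-of-the-envelope ceiling: if every generated token requires reading all weights once, tokens/s cannot exceed memory bandwidth divided by the size of the weights. The Python sketch below assumes peak bandwidths of about 1,008 GB/s for the RTX 4090 and 1,935 GB/s for the A100 PCIe 80GB (NVIDIA's published figures), about 8.03 billion parameters for Llama 3 8B, and ~4.85 bits per weight for Q4_K_M. It ignores the KV cache and all other overheads, so real speeds sit well below these bounds.

```python
# Assumed peak memory bandwidths in GB/s (10**9 bytes per second).
BANDWIDTH_GB_S = {"RTX 4090": 1008.0, "A100 PCIe 80GB": 1935.0}

LLAMA3_8B_PARAMS = 8.03e9  # approximate parameter count (assumption)
BITS_PER_WEIGHT = {"F16": 16.0, "Q4_K_M": 4.85}  # Q4_K_M value is approximate


def decode_ceiling(params: float, bits_per_weight: float, bandwidth_gb_s: float) -> float:
    """Upper bound on tokens/s if each token must stream all weights from VRAM once."""
    bytes_per_token = params * bits_per_weight / 8
    return bandwidth_gb_s * 1e9 / bytes_per_token


for gpu, bw in BANDWIDTH_GB_S.items():
    for quant, bits in BITS_PER_WEIGHT.items():
        ceiling = decode_ceiling(LLAMA3_8B_PARAMS, bits, bw)
        print(f"{gpu} {quant}: ceiling ~{ceiling:.0f} tokens/s")
```

Going from 16 to roughly 4.85 bits per weight raises the ceiling about 3.3x, while the measured gains (about 2.35x and 2.5x) are smaller, which suggests bandwidth explains much, but not all, of the Q4_K_M speedup. Likewise, the A100's nearly 2x bandwidth advantage is not reflected in its small generation lead, so other factors, such as software and kernel efficiency, likely matter too.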

Performance Analysis: Strengths, Weaknesses, and Use Cases

Now, we'll delve deeper into the performance characteristics of each device and explore their strengths, weaknesses, and practical use cases.

NVIDIA 4090 24GB:

- Strengths:

- Excellent value: The 4090 24GB costs far less than the A100 and, for models that fit in its 24GB, matches or beats the A100 in these benchmarks, making it an attractive option for those on a tighter budget.

- Excellent gaming performance: If you're also looking for a GPU that can handle the latest games at high resolutions and frame rates, the 4090 24GB is an excellent choice.

- Weaknesses:

- Limited memory: 24GB is generous for a consumer card, but it cannot hold Llama 3 70B entirely in GPU memory, even with 4-bit quantization.

- Performance limitations with larger models: These benchmarks include no 70B results for the 4090. Running such a model on it would mean offloading part of the weights to system RAM or splitting the model across several GPUs, which typically slows token generation considerably.
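If you do try a 70B model on the 4090, tools that support partial offloading keep some layers on the GPU and run the rest on the CPU. The Python sketch below estimates how many layers might fit, assuming Llama 3 70B's 80 layers are equal in size, ~70.6 billion parameters at ~4.85 bits per weight for Q4_K_M, and a hypothetical 2 GiB reserve for KV cache and buffers. In llama.cpp, a number like this is what you would pass to the `--n-gpu-layers` (`-ngl`) option, though the right value in practice depends on context length and build.

```python
import math

GIB = 1024**3  # bytes per GiB


def gpu_layers_that_fit(total_weight_bytes: float, n_layers: int,
                        vram_gib: float, reserve_gib: float = 2.0) -> int:
    """Estimate how many equally sized transformer layers fit in VRAM.

    Assumes weights are spread evenly across layers and ignores embedding/output
    tensors; reserve_gib is a rough, hypothetical allowance for KV cache and buffers.
    """
    per_layer = total_weight_bytes / n_layers
    budget = max(0.0, (vram_gib - reserve_gib) * GIB)
    return min(n_layers, math.floor(budget / per_layer))


# Llama 3 70B: 80 layers, ~70.6e9 parameters at an assumed ~4.85 bits/weight (Q4_K_M).
weights_70b_q4 = 70.6e9 * 4.85 / 8
on_4090 = gpu_layers_that_fit(weights_70b_q4, 80, vram_gib=24)
on_a100 = gpu_layers_that_fit(weights_70b_q4, 80, vram_gib=80)
print(f"RTX 4090: ~{on_4090}/80 layers on GPU, the rest offloaded to CPU")
print(f"A100 PCIe 80GB: ~{on_a100}/80 layers on GPU")
```

Under these assumptions roughly 44 of 80 layers fit on the 4090, so close to half of the model would run from system RAM, while the A100 can hold all 80.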

Use Cases:

- Smaller LLMs: The 4090 24GB excels at running smaller LLMs like the Llama 3 8B model, providing fast and efficient token generation.

- Gaming and AI: If you're looking for a GPU that can handle both gaming and AI tasks, the 4090 24GB is a great option.

NVIDIA A100 PCIe 80GB:

- Strengths:

- Massive memory: The A100 PCIe 80GB's massive 80GB of memory makes it ideal for running large LLMs without the memory constraints faced by the 4090 24GB.

- Exceptional performance with large models: The A100 PCIe 80GB delivers usable token generation speeds even for large models, reaching 22.11 tokens/s with Llama 3 70B Q4_K_M in these benchmarks, thanks to its ample memory and powerful processing capabilities.

- Weaknesses:

- Higher price: The A100 PCIe 80GB is significantly more expensive than the 4090 24GB, making it a less attractive option for those on a budget.

- Limited availability: The A100 PCIe 80GB is typically used in data centers and may be difficult to purchase for individuals.

Use Cases:

- Large LLMs: Of the two, the A100 PCIe 80GB is the go-to GPU for running large LLMs like Llama 3 70B; it is the only one with 70B results in these benchmarks.

- Research and development: Researchers and developers working on LLMs will find the A100 PCIe 80GB a valuable tool for training and experimenting with large models.
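Throughput figures translate into wait times roughly as prompt tokens divided by the processing speed plus output tokens divided by the generation speed. The Python sketch below applies this to the Q4_K_M results from the benchmark table for a hypothetical request with a 1,000-token prompt and a 500-token reply. It assumes both speeds stay constant, which is a simplification: generation usually slows as the context grows.

```python
def estimate_seconds(prompt_tokens: int, output_tokens: int,
                     prompt_tok_s: float, gen_tok_s: float) -> float:
    """Rough wall-clock time: process the prompt, then generate tokens one by one."""
    return prompt_tokens / prompt_tok_s + output_tokens / gen_tok_s


# Throughputs (tokens/s) from the benchmark table; the request size is hypothetical.
PROMPT, OUTPUT = 1000, 500
cases = {
    "A100, Llama 3 70B Q4_K_M": (726.65, 22.11),
    "A100, Llama 3 8B Q4_K_M": (5800.48, 138.31),
    "4090, Llama 3 8B Q4_K_M": (6898.71, 127.74),
}
for name, (pp, tg) in cases.items():
    print(f"{name}: ~{estimate_seconds(PROMPT, OUTPUT, pp, tg):.1f} s")
```

With these numbers, the 70B request takes about 24 seconds on the A100, almost all of it spent generating, while the 8B request takes about 3.8 seconds on the A100 and about 4.1 seconds on the 4090.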

Conclusion: Choosing the Right GPU for Your LLM Needs

The choice between the NVIDIA 4090 24GB and A100 PCIe 80GB ultimately boils down to your specific needs and budget.

- For those working with smaller LLMs or prioritizing gaming performance, the NVIDIA 4090 24GB is a great option. It offers a good balance of performance and price, making it a compelling choice for individuals and enthusiasts.

- For those who need to run larger LLMs such as Llama 3 70B, the NVIDIA A100 PCIe 80GB is the clear winner. Its 80GB memory capacity lets it hold models that the 4090 cannot, even though the 4090 matches it in token generation on Llama 3 8B and is faster at prompt processing.

FAQ

What is quantization and how does it affect LLM performance?

Quantization is a technique used to reduce the size of LLMs by representing their weights with lower-precision numbers (for example, roughly 4 to 5 bits per weight with Q4_K_M instead of 16 bits with F16). This reduces memory requirements and usually speeds up token generation, which is limited mainly by how quickly weights can be read from memory. Think of quantization like this: Imagine you have a detailed picture of a flower, but for your project, you only need a rough sketch. Quantization takes the detailed image and simplifies it to a sketch, making it easier to process and store without losing too much information.
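The flower-sketch analogy can be made concrete with a toy example. The Python sketch below applies simple symmetric 4-bit quantization to one small block of made-up weights: it stores one floating-point scale for the block plus a small integer per weight, then reconstructs approximate values. This is a simplified illustration, not llama.cpp's actual Q4_K_M format, which is more elaborate (for example, it groups weights into blocks with their own scales and keeps some tensors at higher precision).

```python
def quantize_4bit(weights: list[float]) -> tuple[list[int], float]:
    """Symmetric absmax quantization of one block to integers in [-8, 7]."""
    amax = max((abs(w) for w in weights), default=0.0)
    scale = amax / 7 if amax > 0 else 1.0  # map the largest magnitude to +/-7
    q = [max(-8, min(7, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q: list[int], scale: float) -> list[float]:
    """Reconstruct approximate weights from the integers and the block scale."""
    return [v * scale for v in q]


block = [0.12, -0.53, 0.91, 0.04, -0.27, 0.66, -0.88, 0.31]  # made-up weights
q, scale = quantize_4bit(block)
restored = dequantize(q, scale)
max_err = max(abs(a - b) for a, b in zip(block, restored))
print(q)
print(f"scale={scale:.4f}, max error={max_err:.4f}")
```

Each restored value is within half a scale step of the original, which is the "rough sketch" trade-off: much less storage for a small, bounded error.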

Can I use a GPU for both gaming and running LLMs?

In practice, only the 4090 is suited to both. The NVIDIA 4090 24GB handles modern games and LLMs well. The A100 PCIe 80GB is built for data center and high-performance computing workloads and has no display outputs, so it is not a practical gaming card.

What are the best resources for learning more about LLMs?

There are many resources available to learn more about LLMs, including:

- Books: "Deep Learning with Python" by François Chollet, "Speech and Language Processing" by Daniel Jurafsky and James H. Martin

- Online courses: "Natural Language Processing Specialization" on Coursera, "Deep Learning Specialization" on Coursera

- Blogs and articles: Towards Data Science, The Batch, OpenAI Blog

Keywords

Large Language Models, LLMs, NVIDIA 4090 24GB, NVIDIA A100 PCIe 80GB, GPU, Token Generation Speed, Benchmark Analysis, Performance, Llama 3, Quantization, Q4_K_M, F16, Processing, Generation, Memory, Data Center, AI, Machine Learning, Deep Learning, Natural Language Processing, NLP, Gaming, Computer Vision, Research, Development, LLM Inference, Tokenization