NVIDIA 4090 24GB for LLM Inference: Performance and Value

Introduction

The world of large language models (LLMs) is exploding! These powerful AI models are revolutionizing how we interact with computers, from generating creative text to translating languages and even writing code. But running these models locally can be a challenge, often requiring powerful hardware that can handle their immense computational demands.

Enter the NVIDIA GeForce RTX 4090 24GB, a behemoth of a graphics card that's been making waves in the gaming world. But its capabilities extend far beyond games; it's also a powerhouse for LLM inference. In this article, we'll delve into the performance of the 4090 24GB for running popular LLMs and explore whether it's a worthwhile investment for your AI endeavors.

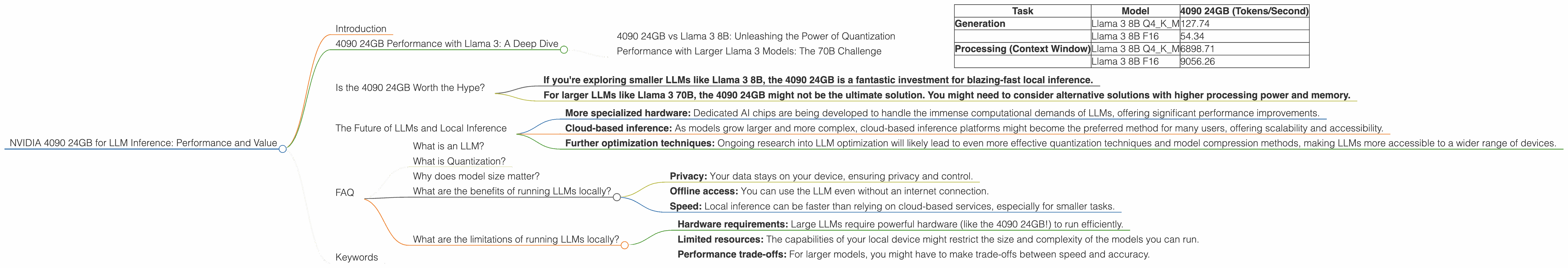

4090 24GB Performance with Llama 3: A Deep Dive

To gauge the 4090 24GB's real-world prowess, we'll focus on the popular Llama 3 model, available in various sizes. We'll analyze its performance with different quantization levels – a technique to reduce the LLM's size and computational needs.

4090 24GB vs Llama 3 8B: Unleashing the Power of Quantization

Quantization is like a diet for LLMs. It stores the model's weights with fewer bits each, which significantly shrinks the model's size and usually makes text generation faster without losing too much accuracy. Think of it as converting a high-resolution photo into a smaller version for your phone: some detail is lost, but it still conveys the essence.

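We'll compare two weight formats:

- Q4KM (written Q4_K_M in llama.cpp): A 4-bit quantization that stores most weights in roughly 4 to 5 bits each. It is the more aggressive of the two, sacrificing some precision for a much smaller model and faster generation.

- F16: 16-bit floating point. It serves as the higher-precision baseline here, keeping more accuracy but needing roughly three times as much memory as Q4KM.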
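
To make the precision trade-off concrete, here is a minimal sketch of 4-bit quantization on a single block of weights. It is a simplified symmetric scheme for illustration only, not the actual Q4KM algorithm; the function names and the sample weights are hypothetical.

```python
def quantize_block(values, bits=4):
    """Map a block of floats to small signed integers plus one shared scale."""
    qmax = 2 ** (bits - 1) - 1              # 7 for 4-bit symmetric quantization
    max_abs = max(abs(v) for v in values)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    # Round each value to the nearest integer step and clamp to the valid range.
    q = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return q, scale


def dequantize_block(q, scale):
    """Recover approximate floats from the integers and the shared scale."""
    return [qi * scale for qi in q]


# Hypothetical weights, chosen only to illustrate the round trip.
weights = [0.12, -0.53, 0.91, -0.07, 0.33, -0.88, 0.45, 0.02]
q, scale = quantize_block(weights)
restored = dequantize_block(q, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("quantized integers:", q)
print("largest round-trip error:", max_error)
```

Each weight now needs only 4 bits plus a share of the block's scale, and the round-trip error is bounded by half a quantization step. That is the "lost detail" in the photo analogy.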

Here's the performance breakdown:

| Task | Model | 4090 24GB (Tokens/Second) |
|---|---|---|
| Generation | Llama 3 8B Q4KM | 127.74 |
| Generation | Llama 3 8B F16 | 54.34 |
| Processing (Context Window) | Llama 3 8B Q4KM | 6898.71 |
| Processing (Context Window) | Llama 3 8B F16 | 9056.26 |

Let's break down these numbers:

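- Generation refers to how fast the 4090 24GB can produce new text from the LLM. The Q4KM version of Llama 3 8B achieves an impressive 127.74 tokens per second, about 2.35 times as fast as the F16 version at 54.34.

- Processing (Context Window) is the speed at which the 4090 24GB works through the tokens already in the context window, such as the prompt and conversation history, before it starts generating. This matters for long prompts and longer conversations. Here the F16 version is actually faster (9056.26 vs 6898.71 tokens per second), so quantization helps generation far more than it helps processing on this card.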
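
The figures in the table can be turned into a rough response-time estimate. The sketch below assumes throughput stays constant regardless of context length, which is a simplification, and it uses a hypothetical workload of a 2,000-token prompt and a 500-token reply.

```python
# Tokens per second, copied from the table above.
TOKENS_PER_SECOND = {
    ("generation", "Q4KM"): 127.74,
    ("generation", "F16"): 54.34,
    ("processing", "Q4KM"): 6898.71,
    ("processing", "F16"): 9056.26,
}


def seconds_for(tokens, task, fmt):
    """Time to handle `tokens` tokens at the measured rate."""
    return tokens / TOKENS_PER_SECOND[(task, fmt)]


def response_time(prompt_tokens, output_tokens, fmt):
    """Prompt processing time plus generation time, in seconds."""
    return (seconds_for(prompt_tokens, "processing", fmt)
            + seconds_for(output_tokens, "generation", fmt))


generation_speedup = (TOKENS_PER_SECOND[("generation", "Q4KM")]
                      / TOKENS_PER_SECOND[("generation", "F16")])
print(f"Q4KM generation speedup over F16: {generation_speedup:.2f}x")

# Hypothetical workload: 2,000-token prompt, 500-token reply.
for fmt in ("Q4KM", "F16"):
    print(f"{fmt}: {response_time(2000, 500, fmt):.1f} s")
```

Under these assumptions generation dominates the total time, so Q4KM finishes sooner overall despite its slower processing rate.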

This data shows that the 4090 24GB is a remarkably capable companion for Llama 3 8B in both formats. Q4KM delivers the fastest generation, while F16 still runs at a comfortable 54.34 tokens per second if you prefer the higher-precision weights.

Performance with Larger Llama 3 Models: The 70B Challenge

Sadly, we don't have data on the 4090 24GB's performance with the larger Llama 3 70B model. The likely reason is memory: at 16 bits per weight, 70 billion parameters take about 140 GB, and even 4-bit quantized versions are typically around 40 GB, well beyond the card's 24 GB of VRAM.

However, we can speculate on the potential performance. Considering the 4090 24GB's prowess with the 8B model, it's reasonable to assume it would still provide a noticeable boost in speed compared to mid-range GPUs for the 70B model.

But remember, the 70B model is a beast! Even with a powerful card like the 4090 24GB, you will run into memory limits first, especially at F16 precision.

The 70B model is more suited for high-end servers or specialized hardware designed for large-scale LLM inference.
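
A back-of-the-envelope check shows why memory is the main obstacle. This is a rough sketch under stated assumptions: rounded parameter counts, an average of about 4.85 bits per weight for Q4KM, and a hypothetical 2 GB allowance for the KV cache and other runtime overhead. Real file sizes and overheads will differ.

```python
VRAM_GB = 24.0  # memory on the 4090 24GB

# Assumptions: rounded parameter counts and approximate bits per weight.
MODELS = {"Llama 3 8B": 8e9, "Llama 3 70B": 70e9}
BITS_PER_WEIGHT = {"F16": 16.0, "Q4KM": 4.85}


def weight_size_gb(n_params, bits_per_weight):
    """Approximate size of the weights in GB (10^9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


def fits_in_vram(n_params, bits_per_weight, vram_gb=VRAM_GB, overhead_gb=2.0):
    """True if weights plus an assumed runtime overhead fit in VRAM."""
    return weight_size_gb(n_params, bits_per_weight) + overhead_gb <= vram_gb


for model, n_params in MODELS.items():
    for fmt, bits in BITS_PER_WEIGHT.items():
        size = weight_size_gb(n_params, bits)
        verdict = "fits" if fits_in_vram(n_params, bits) else "does not fit"
        print(f"{model} {fmt}: ~{size:.1f} GB, {verdict} in {VRAM_GB:.0f} GB")
```

Under these assumptions both 8B variants fit on the card, while neither 70B variant does without splitting the model across devices or moving part of it to system memory.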

Is the 4090 24GB Worth the Hype?

The 4090 24GB undoubtedly packs a punch for LLM inference. It's a clear winner for smaller models like Llama 3 8B, delivering blazing-fast speeds, especially with Q4KM quantization.

For the 70B behemoth, the 4090 24GB might be a good starting point, but you'll likely need to consider more specialized hardware for optimal performance.

Ultimately, the decision depends on your needs and budget.

Here's a quick summary:

- If you're exploring smaller LLMs like Llama 3 8B, the 4090 24GB is a fantastic investment for blazing-fast local inference.

- For larger LLMs like Llama 3 70B, the 4090 24GB might not be the ultimate solution. You might need to consider alternative solutions with higher processing power and memory.

The Future of LLMs and Local Inference

The landscape of LLMs is constantly evolving, with new models and improvements emerging at an astounding pace. While GPUs like the 4090 24GB are formidable tools for local inference, the future of LLMs might involve:

- More specialized hardware: Dedicated AI chips are being developed to handle the immense computational demands of LLMs, offering significant performance improvements.

- Cloud-based inference: As models grow larger and more complex, cloud-based inference platforms might become the preferred method for many users, offering scalability and accessibility.

- Further optimization techniques: Ongoing research into LLM optimization will likely lead to even more effective quantization techniques and model compression methods, making LLMs more accessible to a wider range of devices.

The future of LLMs is exciting, and the 4090 24GB represents a powerful step in making these transformative technologies accessible to more developers and enthusiasts.

FAQ

What is an LLM?

An LLM, or Large Language Model, is a type of artificial intelligence that can understand and generate human-like text. It's trained on vast amounts of text data, allowing it to perform tasks like translation, writing different kinds of creative content, and even answering your questions in a comprehensive and informative way.

What is Quantization?

Quantization is a technique that reduces the size of an LLM by storing its weights with fewer bits each, without significantly impacting its accuracy. It's like converting a high-resolution photo into a smaller version for your phone: some detail is lost, but it still conveys the essence. This makes the model lighter on memory and usually faster at generating text on your device.

Why does model size matter?

The size of an LLM influences its performance and memory requirements. A larger model is generally more powerful but demands more processing power and memory to run effectively. Smaller models are often faster and more suitable for devices with limited resources.

What are the benefits of running LLMs locally?

Running LLMs locally provides several advantages:

- Privacy: Your data stays on your device, ensuring privacy and control.

- Offline access: You can use the LLM even without an internet connection.

- Speed: Local inference avoids network round trips, so it can respond faster than cloud-based services, especially for smaller tasks.

What are the limitations of running LLMs locally?

Running LLMs locally also has limitations:

- Hardware requirements: Large LLMs require powerful hardware (like the 4090 24GB!) to run efficiently.

- Limited resources: The capabilities of your local device might restrict the size and complexity of the models you can run.

- Performance trade-offs: For larger models, you might have to make trade-offs between speed and accuracy.

Keywords

NVIDIA 4090 24GB, LLM Inference, Llama 3, Llama 3 8B, Llama 3 70B, Quantization, Q4KM, F16, GPU, Token/second, Performance, Value, Local Inference, Cloud-based Inference, AI, Machine Learning, Deep Learning, Natural Language Processing