NVIDIA 4080 16GB vs. NVIDIA 3090 24GB x2 for LLMs: Which is Faster in Token Generation Speed? Benchmark Analysis

Introduction

The world of Large Language Models (LLMs) is buzzing with excitement, and rightfully so! These powerful AI models are revolutionizing the way we interact with computers, opening doors to new possibilities in natural language processing (NLP). One of the most critical aspects of running LLMs is the underlying hardware, which significantly influences performance.

In this article, we dive deep into the head-to-head comparison of two popular GPUs, the NVIDIA 4080 16GB and the NVIDIA 3090 24GB x2 configuration, specifically focusing on their token generation speed for different LLM models. We'll unravel the performance characteristics, explore the pros and cons of each setup, and provide practical recommendations for developers.

Benchmark Analysis: NVIDIA 4080 16GB vs. NVIDIA 3090 24GB x2 for LLMs

Our benchmark analysis compares the NVIDIA 4080 16GB with the NVIDIA 3090 24GB x2 configuration on Llama 3 8B and Llama 3 70B. We look at two weight formats, Q4KM (a 4-bit quantized format) and F16 (16-bit floating point), and report throughput in tokens per second for both generation and processing.

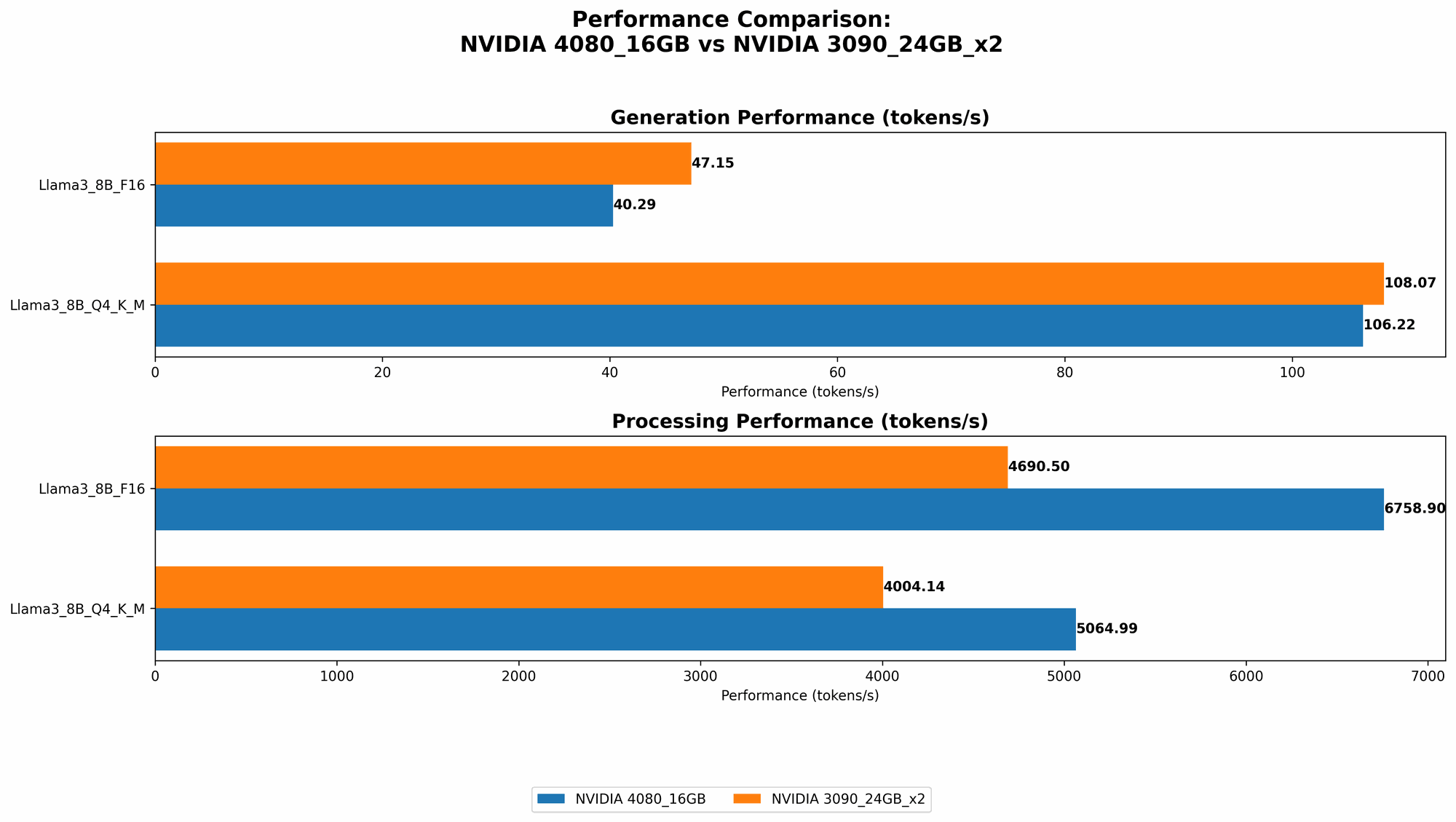

Performance Comparison: Token Generation Speed

| Model | NVIDIA 4080 16GB (tokens/second) | NVIDIA 3090 24GB x2 (tokens/second) |
|---|---|---|
| Llama 3 8B Q4KM Generation | 106.22 | 108.07 |
| Llama 3 8B F16 Generation | 40.29 | 47.15 |
| Llama 3 70B Q4KM Generation | N/A* | 16.29 |
| Llama 3 70B F16 Generation | N/A* | N/A* |

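*Note: N/A means no benchmark result is available. Llama 3 70B does not fit in the 16GB of the NVIDIA 4080. At F16 the 70B weights alone need roughly 140GB (2 bytes per parameter), which most likely explains why the 48GB NVIDIA 3090 24GB x2 configuration has no F16 result either.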

Analysis of the Results

The benchmark results show that both setups generate tokens quickly on Llama 3 8B. The NVIDIA 3090 24GB x2 configuration is ahead in both 8B tests, but by different amounts: about 1.7% at Q4KM (108.07 vs. 106.22 tokens/second), which is marginal, and about 17% at F16 (47.15 vs. 40.29 tokens/second), which is noticeable.

Here's a breakdown of the key takeaways:

- Llama 3 8B: The NVIDIA 3090 24GB x2 configuration consistently outperforms the NVIDIA 4080 16GB, achieving higher token generation speed in both Q4KM and F16 quantization.

- Llama 3 70B: The NVIDIA 3090 24GB x2 configuration is the only setup here with a 70B result (16.29 tokens/second at Q4KM). Its 48GB of combined memory can hold the quantized model, while the 16GB of the NVIDIA 4080 cannot.
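To put numbers on how large the generation gap is, here is a small sketch that computes the relative difference from the table values. The dictionary keys and function names are illustrative choices, not part of any benchmark tool.

```python
# Generation speeds in tokens/second, copied from the table above.
GENERATION = {
    "Llama 3 8B Q4KM": {"4080": 106.22, "3090x2": 108.07},
    "Llama 3 8B F16": {"4080": 40.29, "3090x2": 47.15},
}


def percent_faster(fast: float, slow: float) -> float:
    """Return how much faster `fast` is than `slow`, in percent."""
    return (fast - slow) / slow * 100.0


def generation_gaps(results=GENERATION) -> dict:
    """Percent lead of the dual 3090 over the 4080 for each model."""
    return {
        model: round(percent_faster(speeds["3090x2"], speeds["4080"]), 1)
        for model, speeds in results.items()
    }


for model, gap in generation_gaps().items():
    print(f"{model}: dual 3090 is {gap}% faster")
```

The gap is small at Q4KM and much larger at F16, so "slightly faster" only describes the quantized case.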

Understanding Quantization for Token Generation:

Quantization is like a diet for your LLM. It stores the model's weights at lower precision, which shrinks the memory footprint. In our case, Q4KM is a heavily compressed format that uses roughly 4 bits per weight, so it needs far less memory but may give up some accuracy. F16 keeps the weights as 16-bit half-precision floating-point numbers, which preserves more precision but takes about four times the memory and, in these benchmarks, generates tokens more slowly.
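A rough way to see why the 70B model only runs on the larger setup is to estimate weight storage as parameters times bits per weight. The sketch below is a back-of-the-envelope estimate under stated assumptions: the 4.8 bits per weight for Q4KM is an assumed average (the real figure depends on the exact format), "16GB" and "48GB" are treated as GiB, and KV cache and runtime overhead are ignored.

```python
GIB = 1024 ** 3

F16_BITS = 16.0
Q4KM_BITS_ASSUMED = 4.8  # assumption: rough average bits per weight, not a measured value


def weight_memory_gib(params_billion: float, bits_per_weight: float) -> float:
    """Approximate size of the weights alone, in GiB."""
    total_bytes = params_billion * 1e9 * bits_per_weight / 8
    return total_bytes / GIB


def fits(params_billion: float, bits_per_weight: float, gpu_memory_gib: float) -> bool:
    """True if the weights alone are smaller than the available GPU memory."""
    return weight_memory_gib(params_billion, bits_per_weight) < gpu_memory_gib


for name, params in [("Llama 3 8B", 8.0), ("Llama 3 70B", 70.0)]:
    for label, bits in [("Q4KM (assumed)", Q4KM_BITS_ASSUMED), ("F16", F16_BITS)]:
        size = weight_memory_gib(params, bits)
        print(f"{name} {label}: ~{size:.1f} GiB, "
              f"fits 16 GiB: {fits(params, bits, 16)}, fits 48 GiB: {fits(params, bits, 48)}")
```

Under these assumptions the estimate lines up with the N/A entries in the table: the 70B model at 4 bits is too large for 16GB but fits in 48GB, and at F16 it fits in neither.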

How fast is this really?

Think of it like this: imagine someone writing a book at lightning speed. The NVIDIA 4080 16GB produces about 106 tokens per second for the 8B Llama model in Q4KM format. The NVIDIA 3090 24GB x2 configuration produces about 108 tokens per second for the same model, just a little faster. A token is usually a word or a piece of a word, so both are far faster than anyone can read.

Performance Comparison: Token Processing Speed

| Model | NVIDIA 4080 16GB (tokens/second) | NVIDIA 3090 24GB x2 (tokens/second) |
|---|---|---|
| Llama 3 8B Q4KM Processing | 5064.99 | 4004.14 |
| Llama 3 8B F16 Processing | 6758.9 | 4690.5 |
| Llama 3 70B Q4KM Processing | N/A* | 393.89 |
| Llama 3 70B F16 Processing | N/A* | N/A* |

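*Note: N/A means no benchmark result is available. Llama 3 70B does not fit in the 16GB of the NVIDIA 4080. At F16 the 70B weights alone need roughly 140GB (2 bytes per parameter), which most likely explains why the 48GB NVIDIA 3090 24GB x2 configuration has no F16 result either.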

Analysis of the Results

The picture changes when we consider token processing speed. Here, the NVIDIA 4080 16GB shines on Llama 3 8B: it is about 26% faster at Q4KM (5064.99 vs. 4004.14 tokens/second) and about 44% faster at F16 (6758.9 vs. 4690.5 tokens/second).

Key Insights:

- Llama 3 8B: The NVIDIA 4080 16GB consistently outperforms the NVIDIA 3090 24GB x2 in token processing, which suggests that the single newer GPU handles this stage more efficiently than the two older cards.

- Llama 3 70B: No comparison is possible here. The NVIDIA 3090 24GB x2 processes the Q4KM model at 393.89 tokens/second, while the NVIDIA 4080 16GB has no 70B result because the model does not fit in its memory.

Why is processing speed so important?

Token processing is the work the GPU does to read your input (the prompt and its context) before it produces the first output token. Think of it as the LLM reading the question before answering: the longer the prompt, the more this speed matters.
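One way to see how the two speeds combine is a simple latency estimate: time to read the prompt plus time to write the answer. This sketch assumes that "processing" speed applies to prompt tokens and "generation" speed to output tokens, and it ignores model loading and other overhead, so treat the results as rough. The request sizes are made-up examples.

```python
# Throughput in tokens/second for Llama 3 8B Q4KM, copied from the two tables above.
SPEEDS = {
    "4080": {"processing": 5064.99, "generation": 106.22},
    "3090x2": {"processing": 4004.14, "generation": 108.07},
}


def estimate_seconds(gpu: str, prompt_tokens: int, output_tokens: int) -> float:
    """Rough request time: prompt reading time plus answer writing time."""
    speeds = SPEEDS[gpu]
    return prompt_tokens / speeds["processing"] + output_tokens / speeds["generation"]


# Hypothetical workloads: a long prompt with a short answer, and the reverse.
for prompt_tokens, output_tokens in [(2000, 300), (100, 500)]:
    for gpu in SPEEDS:
        seconds = estimate_seconds(gpu, prompt_tokens, output_tokens)
        print(f"{gpu}: {prompt_tokens} in / {output_tokens} out -> ~{seconds:.2f} s")
```

With these numbers the 4080 comes out slightly ahead on the prompt-heavy request and the dual 3090 slightly ahead on the output-heavy one, so the mix of input and output length decides which speed dominates.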

Strengths and Weaknesses of Each GPU

NVIDIA 4080 16GB

Strengths:

- Excellent token processing speed: The NVIDIA 4080 16GB demonstrates superior performance in token processing, making it ideal for scenarios where computational efficiency is paramount.

- Power efficiency: The NVIDIA 4080 16GB is generally known for its power efficiency compared to the NVIDIA 3090 24GB x2 configuration (which has two GPUs).

- Cost-effectiveness: For single-GPU setups, the NVIDIA 4080 16GB offers a good balance of performance and price.

Weaknesses:

- Limited memory: The 16GB of memory is a hard limit for larger models like Llama 3 70B. None of the 70B benchmarks produced a result on this card.

- Not ideal for multi-GPU setups: The NVIDIA 4080 16GB is designed for single-GPU use cases and may not be the most efficient choice for multi-GPU setups.

NVIDIA 3090 24GB x2

Strengths:

- Vast memory capacity: The 48GB total memory from two NVIDIA 3090 24GB GPUs excels in handling large LLM models like Llama 3 70B.

- Scalability: The multi-GPU configuration allows for greater scalability, enabling potential performance gains by running multiple models simultaneously.

Weaknesses:

- Higher power consumption: The multi-GPU setup consumes more power than the single-GPU NVIDIA 4080 16GB.

- Higher cost: The price of two NVIDIA 3090 24GB GPUs is significantly higher than that of a single NVIDIA 4080 16GB.

Practical Recommendations for Use Cases

When to choose NVIDIA 4080 16GB:

- Smaller LLM models: For models like Llama 3 8B, the NVIDIA 4080 16GB offers excellent performance and is a cost-effective option.

- Applications prioritizing processing speed: Use cases dominated by long inputs, such as chatbots that carry a large conversation history, benefit from the NVIDIA 4080 16GB's faster token processing.

- Single-GPU setups: For developers working with a single GPU, the NVIDIA 4080 16GB is a reliable choice.

When to choose NVIDIA 3090 24GB x2:

- Large LLM models: If you're working with models like Llama 3 70B, the NVIDIA 3090 24GB x2 configuration is the only one of these two setups with enough memory, and in these benchmarks only at Q4KM.

- Multi-GPU setups: For developers who need to run multiple LLMs simultaneously or desire the potential for higher performance through multi-GPU parallelism, the NVIDIA 3090 24GB x2 configuration is the way to go.

- Applications demanding substantial memory: Use cases that require intensive memory usage, like complex code generation or scientific research applications, will benefit from the ample memory of the NVIDIA 3090 24GB x2 configuration.

Conclusion

The choice between the NVIDIA 4080 16GB and the NVIDIA 3090 24GB x2 configuration comes down to your specific needs and the LLM you're working with. If you prioritize cost-effectiveness and speed in token processing, the NVIDIA 4080 16GB is a solid choice for smaller LLMs. On the other hand, if you require large memory capacity and the potential for higher performance through multi-GPU setups, the NVIDIA 3090 24GB x2 configuration is the better option.

Ultimately, understanding the strengths and weaknesses of each GPU will help you make an informed decision based on your specific application and budget.

FAQ

What are LLMs?

LLMs, or Large Language Models, are powerful AI models designed to understand and generate human-like text. They're trained on massive datasets of text and code, making them capable of tasks like translation, summarization, writing different creative text formats, and answering your questions in an informative way.

What is token generation speed?

Token generation speed refers to how many output tokens a GPU can produce per second. Tokens are the building blocks of text: whole words, pieces of words, punctuation, and other elements of language. A higher token generation speed means the GPU can produce text faster.
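As a minimal sketch of how such a number can be measured, the helper below counts tokens from any stream and divides by elapsed time. The `fake_stream` generator is a hypothetical stand-in for a real model's streaming output, and this is not how the benchmark figures in this article were produced.

```python
import time
from typing import Callable, Iterable, Iterator


def tokens_per_second(token_stream: Iterable[str],
                      clock: Callable[[], float] = time.perf_counter) -> float:
    """Consume a token stream and return tokens divided by elapsed seconds."""
    start = clock()
    count = sum(1 for _ in token_stream)
    elapsed = clock() - start
    if elapsed <= 0:
        raise ValueError("elapsed time must be positive")
    return count / elapsed


def fake_stream(n_tokens: int, delay_seconds: float) -> Iterator[str]:
    """Hypothetical stand-in for a model that emits one token per delay."""
    for i in range(n_tokens):
        time.sleep(delay_seconds)
        yield f"tok{i}"


print(f"{tokens_per_second(fake_stream(20, 0.005)):.1f} tokens/second")
```

Passing the clock as a parameter keeps the helper easy to check with fixed timestamps instead of real sleeps.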

What is quantization?

Quantization is a technique used to reduce the size and memory footprint of LLM models. It involves representing the model's weights with lower precision, resulting in smaller file sizes and faster inference times.
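To make "lower precision" concrete, here is a toy sketch of symmetric 4-bit quantization with a single shared scale. It is an illustration of the general idea only: real formats such as Q4KM use more elaborate block-wise schemes, and the function names here are made up for the example.

```python
def quantize_4bit(weights):
    """Map floats to integers in [-7, 7] using one shared scale factor."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    quantized = [max(-7, min(7, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float weights from the small integers."""
    return [q * scale for q in quantized]


weights = [7.0, -3.0, 1.2, 0.0]
quantized, scale = quantize_4bit(weights)
restored = dequantize(quantized, scale)
print(quantized, scale)  # [7, -3, 1, 0] 1.0
print(restored)          # [7.0, -3.0, 1.0, 0.0]
```

The value 1.2 comes back as 1.0: each weight now needs only 4 bits instead of 16, at the cost of a small rounding error.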

Keywords

LLMs, NVIDIA 4080 16GB, NVIDIA 3090 24GB x2, token generation, token processing, Llama 3 8B, Llama 3 70B, Q4KM, F16, quantization, benchmark analysis, performance, GPU, inference, NLP, natural language processing, AI, machine learning, deep learning, model size, memory capacity, power consumption, cost, recommendations, use cases