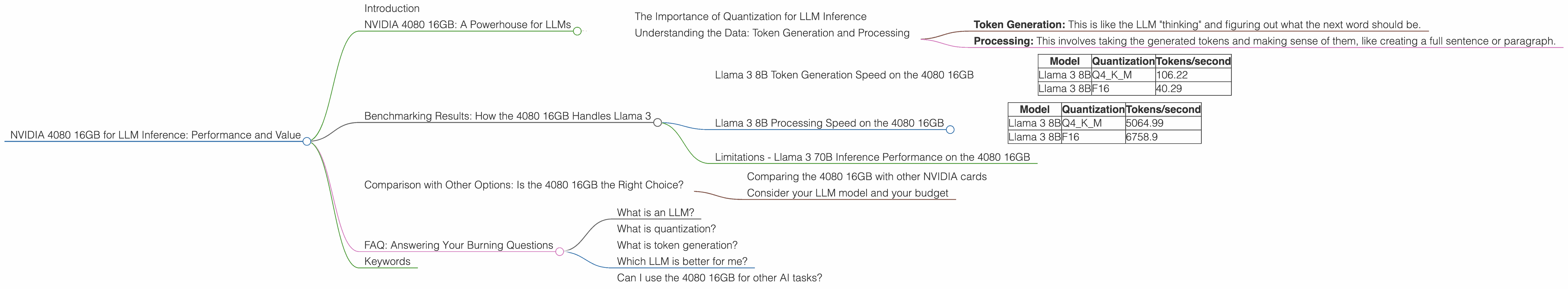

NVIDIA 4080 16GB for LLM Inference: Performance and Value

Introduction

The world of Large Language Models (LLMs) is exploding, and with it comes the need for powerful hardware to run these models efficiently. One of the leading contenders in the GPU market is NVIDIA, and their 4080 16GB card has been gaining popularity for its performance and value.

This article explores the capabilities of the NVIDIA 4080 16GB for LLM inference, focusing on its performance in handling models like the Llama 3 series. We'll delve into the details of token generation speeds and processing capabilities, examining different quantization levels to see how they affect performance. This will give you insights into whether the 4080 16GB is the right choice for your LLM inference needs.

NVIDIA 4080 16GB: A Powerhouse for LLMs

Imagine trying to run a complex AI model on a laptop. It would be like trying to fit an elephant in a shoebox. The 4080 16GB is like having a spacious warehouse for your AI operations!

The 4080 16GB is a high-end graphics card designed by NVIDIA, boasting a powerful architecture and 16GB of GDDR6X memory. This makes it a strong contender for the demanding task of LLM inference.

The Importance of Quantization for LLM Inference

Think of quantization like compressing a video file: it reduces the file size, making it easier to share and load quickly. The same principle applies to LLMs. Quantization stores each model weight with fewer bits (for example, roughly 4 bits instead of 16), which shrinks the model and usually costs only a small amount of accuracy.
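To make the idea concrete, here is a minimal sketch of symmetric round-to-nearest quantization on one small block of weights. It is a simplified illustration only, not the scheme any particular model file format uses; the function names and the sample weights are made up for this example.

```python
def quantize_block(weights, bits=4):
    """Map a block of floats to small signed integers plus one shared scale."""
    qmax = 2 ** (bits - 1) - 1                  # 7 for 4-bit signed codes
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    # Round each weight to the nearest integer step and clamp to the valid range.
    codes = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return scale, codes


def dequantize_block(scale, codes):
    """Recover approximate floats from the integer codes."""
    return [scale * c for c in codes]


# Hypothetical weights, chosen only for illustration.
weights = [0.12, -0.53, 0.07, 0.91, -0.33, 0.48, -0.76, 0.02]
scale, codes = quantize_block(weights)
restored = dequantize_block(scale, codes)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("codes:", codes)
print(f"largest rounding error: {max_error:.3f}")
```

Each weight now needs only 4 bits plus a share of the block's scale, and the rounding error stays within half a quantization step.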

Understanding the Data: Token Generation and Processing

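LLM inference involves two main steps:

- Token Generation: The model produces its response one token at a time, with each new token depending on the ones before it. This is like the LLM "thinking" and figuring out what the next word should be.

- Processing: This is prompt processing, where the model reads and evaluates the tokens of your input before it starts generating. Prompt tokens can be handled in parallel, so this step runs much faster than generation.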

The data in this article is reported in tokens per second (tokens/second): how many tokens the model generates, or how many prompt tokens it processes, in one second on a specific device. Higher is better.
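As a rough sketch of how a tokens/second figure can be measured, the snippet below times a generation call and divides the token count by the elapsed time. The `fake_generate` function is a hypothetical stand-in that just sleeps; in practice you would replace it with a call into whatever inference library you use.

```python
import time


def tokens_per_second(generate, n_tokens):
    """Time one call to `generate` and return the tokens produced per second."""
    start = time.perf_counter()
    produced = generate(n_tokens)
    elapsed = time.perf_counter() - start
    return produced / elapsed


def fake_generate(n_tokens):
    # Hypothetical placeholder for a real model: pretends each token takes ~1 ms.
    for _ in range(n_tokens):
        time.sleep(0.001)
    return n_tokens


rate = tokens_per_second(fake_generate, 50)
print(f"{rate:.1f} tokens/second")
```

Real benchmarks usually repeat the measurement several times and average the results, since a single run can be noisy.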

Benchmarking Results: How the 4080 16GB Handles Llama 3

The 4080 16GB shines in LLM inference, especially for the Llama 3 series. Let's dive into the specific performance metrics:

Llama 3 8B Token Generation Speed on the 4080 16GB

The 4080 16GB delivers impressive performance for generating tokens with the 8B Llama 3 model:

| Model | Quantization | Tokens/second |
|---|---|---|
| Llama 3 8B | Q4_K_M | 106.22 |
| Llama 3 8B | F16 | 40.29 |

What do these numbers tell us?

- Q4_K_M quantization reaches 106.22 tokens/second, about 2.6 times the F16 result. Generation has to read the model weights for every token, so the smaller quantized model is much faster.

- F16 is the unquantized 16-bit half-precision format. It generates at a slower 40.29 tokens/second and uses far more memory, so it mainly makes sense if you need full 16-bit precision and can accept the speed and memory cost.
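One way to sanity-check these generation numbers is a back-of-the-envelope ceiling: if every weight must be read from GPU memory once per token, speed cannot exceed memory bandwidth divided by model size. The sketch below applies that rule. The bandwidth figure (716.8 GB/s) and the approximate weight sizes are assumptions not taken from this article's data, so treat the ceilings as rough estimates.

```python
# Assumed peak memory bandwidth of the card in GB/s (not from the benchmark data).
BANDWIDTH_GB_S = 716.8

# name: (approximate weight size in GB [assumed], measured tokens/second [from the table])
configs = {
    "Q4_K_M": (4.9, 106.22),
    "F16": (16.1, 40.29),
}

for name, (size_gb, measured) in configs.items():
    # Upper bound if generation were limited purely by reading the weights.
    ceiling = BANDWIDTH_GB_S / size_gb
    print(f"{name}: ceiling ~{ceiling:.0f} tok/s, measured {measured} tok/s "
          f"({measured / ceiling:.0%} of ceiling)")

speedup = configs["Q4_K_M"][1] / configs["F16"][1]
print(f"Q4_K_M generates {speedup:.2f}x faster than F16")
```

Under these assumptions both measured results land below their ceilings, which is consistent with token generation being mostly limited by memory bandwidth.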

Llama 3 8B Processing Speed on the 4080 16GB

The 4080 16GB is also fast at processing the input prompt for the Llama 3 8B model:

| Model | Quantization | Tokens/second |
|---|---|---|
| Llama 3 8B | Q4_K_M | 5064.99 |
| Llama 3 8B | F16 | 6758.9 |

Observations:

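- Prompt processing is far faster than token generation at both precision levels, reaching thousands of tokens per second, because prompt tokens can be processed in parallel.

- F16 surprisingly outperforms Q4_K_M in processing tokens (6758.9 versus 5064.99 tokens/second). A likely explanation is that prompt processing is limited by compute rather than memory, so the extra work of unpacking quantized weights costs more than the smaller model size saves.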
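To see how the two speeds combine in practice, the sketch below estimates the total time for a single request by adding prompt processing time and generation time. The speeds come from the tables in this article; the 1024-token prompt and 256-token response are hypothetical values picked for illustration.

```python
# Tokens/second for Llama 3 8B on the 4080 16GB, taken from the tables in this article.
PROMPT_SPEED = {"Q4_K_M": 5064.99, "F16": 6758.9}
GENERATION_SPEED = {"Q4_K_M": 106.22, "F16": 40.29}


def response_time(quant, prompt_tokens, output_tokens):
    """Estimate seconds to process the prompt and then generate the output."""
    prompt_s = prompt_tokens / PROMPT_SPEED[quant]
    generate_s = output_tokens / GENERATION_SPEED[quant]
    return prompt_s + generate_s


# Hypothetical workload: a 1024-token prompt and a 256-token answer.
for quant in PROMPT_SPEED:
    total = response_time(quant, prompt_tokens=1024, output_tokens=256)
    print(f"{quant}: {total:.2f} s")
```

For a workload like this one, generation time dominates, so the F16 advantage in prompt processing does little to offset its slower generation.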

Limitations - Llama 3 70B Inference Performance on the 4080 16GB

Unfortunately, data for the 4080 16GB with the Llama 3 70B model is not currently available. The most likely reason is memory: even at around 4 bits per weight, a 70-billion-parameter model needs roughly 35 GB or more for its weights alone, well beyond the card's 16GB.
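A quick estimate shows why memory is the likely bottleneck. The sketch below computes the approximate size of the weights from the parameter count and the bits per weight. The parameter counts (8.03 billion and 70.6 billion) and the 4.8 bits-per-weight average for a 4-bit quantization are assumptions for illustration, and the estimate ignores the extra memory needed for the KV cache and activations.

```python
VRAM_GIB = 16  # memory on the 4080 16GB


def weight_gib(params, bits_per_weight):
    """Approximate size of the model weights in GiB."""
    return params * bits_per_weight / 8 / 2**30


# Approximate parameter counts (assumed values).
models = {"Llama 3 8B": 8.03e9, "Llama 3 70B": 70.6e9}
# Bits per weight; 4.8 is an assumed average for a 4-bit quantization.
formats = {"F16": 16, "4-bit (assumed ~4.8 bits)": 4.8}

for model, params in models.items():
    for fmt, bits in formats.items():
        size = weight_gib(params, bits)
        verdict = "fits" if size < VRAM_GIB else "does not fit"
        print(f"{model} {fmt}: ~{size:.1f} GiB, {verdict} in {VRAM_GIB} GiB")
```

Under these assumptions the 8B model fits in both formats, while the 70B model exceeds 16 GiB even when quantized, so it would need multiple GPUs or offloading to system memory.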

Comparison with Other Options: Is the 4080 16GB the Right Choice?

While the 4080 16GB offers good performance, it's crucial to compare it with other options to assess its value. Consider these factors:

Comparing the 4080 16GB with other NVIDIA cards

The 4080 16GB sits comfortably in the higher-end NVIDIA GPU family. Determining its value depends on your specific needs. For example, the 4090 24GB might offer even higher performance, but at a premium price. On the other hand, the 4070 Ti 12GB might provide a more budget-friendly option with slightly lower performance.

Consider your LLM model and your budget

Ultimately, the best choice for your setup hinges on the size of the LLM you're running and your budget constraints. If you're working with smaller models like Llama 3 8B, the 4080 16GB might be a solid choice.

FAQ: Answering Your Burning Questions

What is an LLM?

LLMs are powerful AI models trained on vast datasets. They learn to understand and generate human-like text, enabling tasks like translation, writing different creative content, and answering your complex questions.

What is quantization?

Imagine you have a large photo and want to share it online. Quantization is like compressing the photo to make it smaller, but still clear enough to recognize the details. Similarly, quantization reduces the size of the LLM, making it faster to load and run, while keeping most of its accuracy.

What is token generation?

Think of token generation as the LLM's "brain" making decisions about what the next word should be. It's like picking the right word from a giant dictionary based on the context of the conversation.

Which LLM is better for me?

The best LLM depends on your needs. Smaller models like Llama 3 8B are good for basic tasks, while larger models like Llama 3 70B excel at more complex ones.

Can I use the 4080 16GB for other AI tasks?

Yes! The 4080 16GB is a versatile card suitable for various AI tasks, including machine learning, image recognition, and video processing.

Keywords

NVIDIA 4080 16GB, LLM inference, Llama 3, token generation, processing speed, quantization, F16, Q4_K_M, GPU, performance, benchmarking, comparison, value, NVIDIA 4090, NVIDIA 4070 Ti, AI, deep learning, machine learning, AI hardware, AI development, inference, LLMs, GPU benchmarks, LLM inference performance, LLM inference benchmarks, data science, NLP, large language model inference, best GPU for LLM inference, NVIDIA GPU for LLMs