NVIDIA 4070 Ti 12GB vs. NVIDIA RTX A6000 48GB for LLMs: Which is Faster in Token Generation Speed? Benchmark Analysis

Introduction

Large Language Models (LLMs) are revolutionizing how we interact with technology, powering everything from chatbots and text generation to code completion and translation. However, running these complex models locally requires powerful hardware, and choosing the right GPU can be a significant factor in achieving optimal performance.

This article delves into a head-to-head comparison of two popular GPUs – the NVIDIA GeForce RTX 4070 Ti 12GB and the NVIDIA RTX A6000 48GB – to determine which reigns supreme in token generation speed for local LLM inference. We'll analyze benchmark data for several Llama 3 models and explore their strengths and weaknesses to guide you in making an informed decision, whether you're a developer, researcher, or simply curious about the inner workings of these AI marvels.

Understanding Token Generation Speed

LLMs process text as a sequence of tokens: small units of language such as words, punctuation, or parts of words (like "sub" in "submarine"). Token generation speed measures how many new tokens a GPU can produce per second, which directly determines how responsive your LLM applications feel.

Imagine token generation as a conveyor belt carrying language pieces. The faster the belt moves, the quicker you can understand and interact with the LLM.
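
To make the metric concrete, the short sketch below converts a tokens-per-second figure into the time you would wait for a reply. The 300-token reply length and the three example speeds are illustrative assumptions, not benchmark results.

```python
def generation_time_seconds(num_tokens: int, tokens_per_second: float) -> float:
    """Time needed to generate num_tokens at a constant generation speed."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return num_tokens / tokens_per_second


# Hypothetical values chosen only for illustration.
REPLY_TOKENS = 300
EXAMPLE_SPEEDS = [10.0, 50.0, 100.0]  # tokens/second

for speed in EXAMPLE_SPEEDS:
    seconds = generation_time_seconds(REPLY_TOKENS, speed)
    print(f"{speed:6.1f} tokens/s -> {seconds:5.1f} s for {REPLY_TOKENS} tokens")
```

The relationship is linear: doubling the generation speed halves the wait for a reply of the same length.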

Benchmark Analysis: NVIDIA 4070 Ti 12GB vs. NVIDIA RTX A6000 48GB

To compare the performance of these two GPUs, we'll analyze token generation benchmarks for Llama 3 models run with the popular llama.cpp implementation. We'll focus on the following model configurations:

- Llama 3 8B (Quantized 4-bit): This is a smaller and more efficient LLM suitable for devices with limited memory.

- Llama 3 8B (Float16): The same model stored at 16-bit precision. It avoids the small accuracy loss that quantization can introduce, but its weights take roughly four times the memory of the 4-bit version.

- Llama 3 70B (Quantized 4-bit): This model is significantly larger than the 8B versions, offering potentially higher accuracy but demanding more memory.

Note: No data was available for the Llama 3 70B F16 model on either GPU, or for the Llama 3 8B F16 and Llama 3 70B Q4_K_M models on the NVIDIA 4070 Ti 12GB. The 70B F16 configuration is therefore omitted, and the missing 4070 Ti results are marked N/A.

Comparison of NVIDIA 4070 Ti 12GB and NVIDIA RTX A6000 48GB

| Model | NVIDIA 4070 Ti 12GB (Tokens/Second) | NVIDIA RTX A6000 48GB (Tokens/Second) |

|---|---|---|

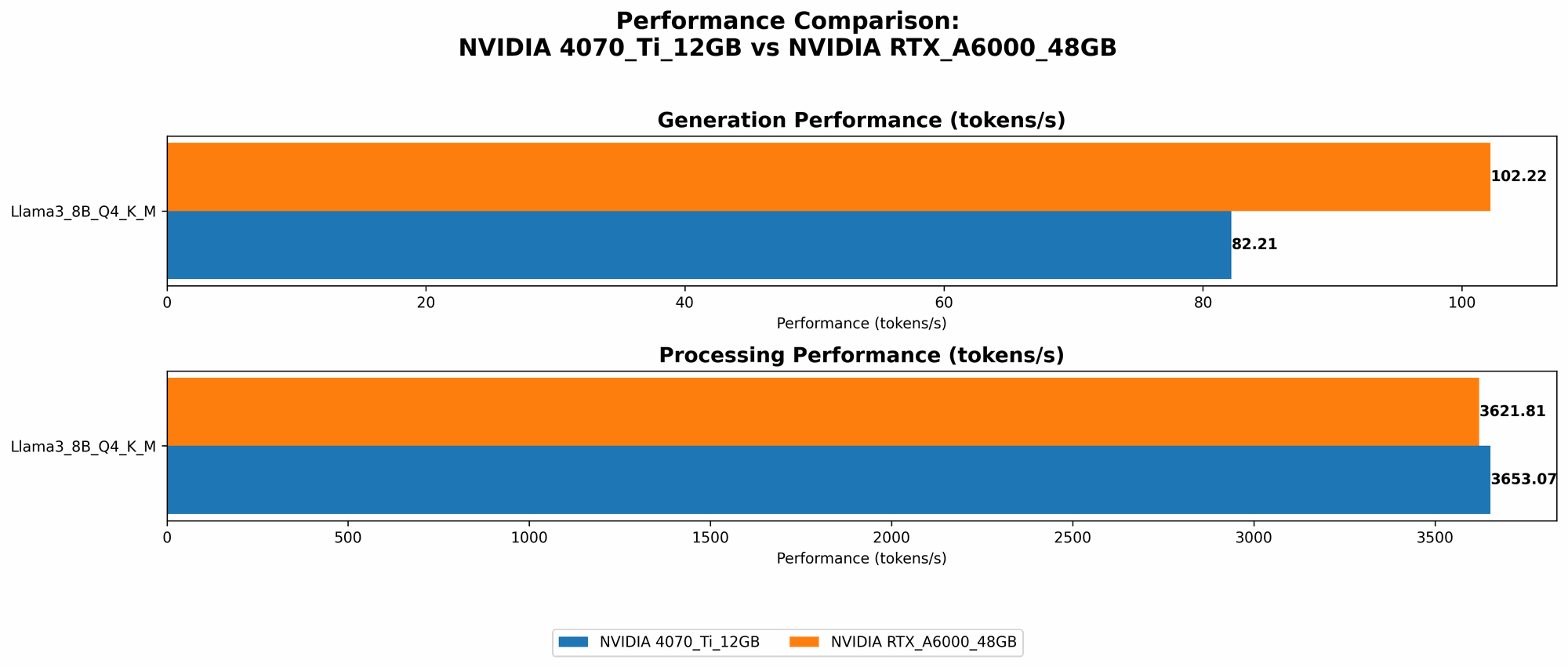

| Llama 3 8B Q4 K_M Generation | 82.21 | 102.22 |

| Llama 3 8B F16 Generation | N/A | 40.25 |

| Llama 3 70B Q4 K_M Generation | N/A | 14.58 |

- Llama 3 8B (Quantized 4-bit): The RTX A6000 48GB performs better than the 4070 Ti 12GB with a token generation speed of 102.22 tokens/second compared to 82.21 tokens/second. This translates to a roughly 24% performance advantage for the A6000.

- Llama 3 8B (Float16): The RTX A6000 48GB reaches a token generation speed of 40.25 tokens/second. No result was available for the 4070 Ti 12GB, so the two cards cannot be compared directly here. The F16 weights of an 8B model take roughly 16 GB, which exceeds the 4070 Ti's 12 GB of VRAM, so the A6000 is the more suitable choice for models requiring higher precision.

- Llama 3 70B (Quantized 4-bit): The A6000 generates 14.58 tokens/second. No result was available for the 4070 Ti 12GB, which lacks the memory capacity to hold this larger model.
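
The percentage quoted for the 8B Q4_K_M result can be reproduced with a few lines of arithmetic. This is a minimal sketch; the function name `percent_faster` is our own, and the two speeds are taken from the table above.

```python
def percent_faster(faster: float, slower: float) -> float:
    """Relative advantage of `faster` over `slower`, in percent."""
    if slower <= 0:
        raise ValueError("slower must be positive")
    return (faster / slower - 1.0) * 100.0


# Llama 3 8B Q4_K_M generation speeds from the table (tokens/second).
a6000_tps = 102.22
rtx_4070_ti_tps = 82.21

advantage = percent_faster(a6000_tps, rtx_4070_ti_tps)
print(f"A6000 advantage on Llama 3 8B Q4_K_M: {advantage:.1f}%")
```

Note that the comparison is only meaningful for rows where both cards have a result; the N/A rows give no basis for a ratio.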

Performance Analysis: Strengths and Weaknesses

NVIDIA 4070 Ti 12GB

Strengths:

- Lower cost: The 4070 Ti is significantly more affordable than the A6000, making it a good choice for users on a budget.

- Solid performance for smaller models: The 4070 Ti handles smaller LLMs like the Llama 3 8B with impressive speed.

- Good for gaming and other tasks: Being a consumer-grade card, it's also excellent for gaming and other computationally demanding tasks.

Weaknesses:

- Limited memory: The 12GB of VRAM is not enough to hold larger LLMs like the Llama 3 70B, even at 4-bit quantization, which can lead to performance bottlenecks or out-of-memory crashes.

- No results for larger models: The benchmarks include no 4070 Ti figures for Llama 3 8B F16 or Llama 3 70B, so these workloads call for a card with more memory, such as the A6000.

NVIDIA RTX A6000 48GB

Strengths:

- Strong performance across model sizes: The A6000 posts the faster generation speed on Llama 3 8B Q4_K_M and is the only one of the two cards with results for Llama 3 8B F16 and Llama 3 70B.

- Vast memory: The 48GB of VRAM holds models that cannot fit on a 12GB card, such as Llama 3 70B at 4-bit quantization. Even so, no data was available for Llama 3 70B at F16.

- Professional-grade: Designed for demanding workloads, it offers stability, reliability, and a longer lifespan compared to consumer-grade cards.

Weaknesses:

- High cost: The A6000 comes at a significantly higher price point, potentially putting it out of reach for some users.

- Limited gaming performance: While it can handle games, its primary focus is on professional workloads, making it less ideal for gaming enthusiasts.

Use Case Recommendations

NVIDIA 4070 Ti 12GB

Ideal for:

- Users on a budget who want to run smaller LLMs like Llama 3 8B for tasks like text generation, summarization, and basic chatbots.

- Gamers and content creators who also want to experiment with smaller LLMs.

NVIDIA RTX A6000 48GB

Ideal for:

- Researchers and developers working with large-scale LLMs like the Llama 3 70B and beyond.

- Professionals requiring high-performance computing for AI workloads, machine learning, and data analysis.

Quantization: A Key Efficiency Booster

Quantization is a technique used to reduce the memory footprint of LLMs by representing weights and activations with fewer bits. Think of it as using a smaller palette to paint the same picture.

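A full-precision model typically stores each value in 32 bits, and the F16 models in these benchmarks use 16. A 4-bit quantized model needs roughly a quarter of the F16 footprint, which significantly reduces memory usage and boosts generation speed: on the A6000, Llama 3 8B generates 102.22 tokens/second at Q4_K_M versus 40.25 at F16.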
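
The effect of bit width on memory can be estimated with simple arithmetic. The sketch below is a rough lower bound under stated assumptions: it uses nominal parameter counts (8 billion and 70 billion), counts weights only, and treats "4-bit" as exactly 4 bits per weight. Real Q4_K_M files average somewhat more than 4 bits per weight, and the KV cache and runtime buffers need additional VRAM, so a passing check here is necessary but not sufficient.

```python
# Nominal parameter counts (assumed round numbers) and VRAM sizes in GB.
MODEL_PARAMS = {"Llama 3 8B": 8e9, "Llama 3 70B": 70e9}
GPU_VRAM_GB = {"NVIDIA 4070 Ti 12GB": 12, "NVIDIA RTX A6000 48GB": 48}


def weight_memory_gb(num_params: float, bits_per_weight: float) -> float:
    """Approximate size of the weights alone, in GB (1 GB = 1e9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9


def weights_fit(num_params: float, bits_per_weight: float, vram_gb: float) -> bool:
    """True if the weights alone are smaller than the available VRAM."""
    return weight_memory_gb(num_params, bits_per_weight) < vram_gb


for model, params in MODEL_PARAMS.items():
    for bits in (16, 4):
        size = weight_memory_gb(params, bits)
        fits = [gpu for gpu, vram in GPU_VRAM_GB.items()
                if weights_fit(params, bits, vram)]
        print(f"{model} @ {bits}-bit: ~{size:.0f} GB, fits on: {fits or 'neither'}")
```

Under these assumptions, the estimate lines up with the N/A entries in the benchmark tables: only the 4-bit 8B model fits within 12 GB.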

Quantization for LLMs Explained

Let's simplify this using an analogy. Imagine building a model car. You can use a high-precision set of tools, but it'll take more time and effort. Quantization is like using a simplified set of tools – maybe a smaller hammer or a less detailed screwdriver – that still gets the job done but requires less effort. The smaller tools might not have as many details, but you can build the model car more efficiently.

In LLMs, quantization helps reduce the amount of data that needs to be processed, allowing GPUs to work faster and consume less power.

Comparing NVIDIA 4070 Ti 12GB and RTX A6000 48GB for LLM Processing

Processing speed here measures how quickly the GPU works through input (prompt) tokens, as opposed to how quickly it generates new ones.

| Model | NVIDIA 4070 Ti 12GB (Tokens/Second) | NVIDIA RTX A6000 48GB (Tokens/Second) |
|---|---|---|
| Llama 3 8B Q4_K_M Processing | 3653.07 | 3621.81 |
| Llama 3 8B F16 Processing | N/A | 4315.18 |
| Llama 3 70B Q4_K_M Processing | N/A | 466.82 |

- Llama 3 8B (Quantized 4-bit): The 4070 Ti 12GB edges out the A6000 with a processing speed of 3653.07 tokens/second compared to 3621.81 tokens/second. The gap is under 1%, so the two GPUs are effectively equal at processing this smaller model.

- Llama 3 8B (Float16): The A6000 reaches a processing speed of 4315.18 tokens/second for the Llama 3 8B F16 model. No result was available for the 4070 Ti 12GB, which does not have enough VRAM to support this model.

- Llama 3 70B (Quantized 4-bit): The A6000 achieves a processing speed of 466.82 tokens/second, while the 4070 Ti 12GB cannot hold this large model in its VRAM.
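
To see how processing and generation speed combine, the sketch below estimates end-to-end response time for Llama 3 8B Q4_K_M on both cards. It rests on several assumptions: the "Processing" figures are treated as prompt-ingestion speed, both speeds are treated as constant regardless of context length, and the 2000-token prompt and 500-token reply are hypothetical workload sizes.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Throughput:
    processing_tps: float  # prompt tokens processed per second
    generation_tps: float  # new tokens generated per second


# Llama 3 8B Q4_K_M figures from the two tables in this article.
LLAMA3_8B_Q4 = {
    "NVIDIA 4070 Ti 12GB": Throughput(3653.07, 82.21),
    "NVIDIA RTX A6000 48GB": Throughput(3621.81, 102.22),
}


def response_time_seconds(t: Throughput, prompt_tokens: int, reply_tokens: int) -> float:
    """Prompt processing time plus generation time, assuming constant speeds."""
    return prompt_tokens / t.processing_tps + reply_tokens / t.generation_tps


# Hypothetical workload sizes, chosen only for illustration.
PROMPT_TOKENS, REPLY_TOKENS = 2000, 500

for gpu, throughput in LLAMA3_8B_Q4.items():
    total = response_time_seconds(throughput, PROMPT_TOKENS, REPLY_TOKENS)
    print(f"{gpu}: ~{total:.2f} s")
```

For a workload like this one, generation accounts for most of the total time, which is why the near-tie in processing speed does little to offset the A6000's lead in generation speed.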

Key Findings

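- The A6000's main advantage is its memory capacity, which allows it to run Llama 3 8B F16 and Llama 3 70B, models for which the 4070 Ti 12GB has no results. On the one model both cards run, the A6000 generates tokens about 24% faster, while processing speed is effectively tied.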

- The 4070 Ti 12GB proves its competency in handling smaller LLMs. However, its limited memory becomes a bottleneck for larger models, hindering performance and potentially leading to memory errors.

- The choice between these GPUs ultimately depends on your specific use case and budget. If you're primarily focused on smaller LLMs, the 4070 Ti 12GB can be a great value option. But if you need to handle larger models or require the raw power of a dedicated professional-grade card, the A6000 is the clear winner.

Conclusion: Choosing the Right GPU for Your LLM Needs

The NVIDIA 4070 Ti 12GB offers a compelling blend of performance and affordability, making it a strong choice for users dealing with smaller LLMs. The NVIDIA RTX A6000 48GB, on the other hand, stands out for its faster token generation and much larger memory, making it the better option of the two for professionals and researchers working with larger language models.

Ultimately, the best GPU for you depends on your individual needs and budget. If you're a budget-conscious developer working with smaller LLMs and need a GPU for other tasks, the 4070 Ti 12GB is a great choice. If you're tackling large-scale LLMs and need the unrivaled power of a dedicated professional-grade card, the A6000 is the way to go.

FAQ

What is the difference between a consumer-grade GPU (like the 4070 Ti) and a professional-grade GPU (like the A6000)?

Consumer-grade GPUs are designed for gaming and general-purpose tasks like video editing. They prioritize performance-per-dollar and often have features that enhance gaming experiences. Professional-grade GPUs are designed for demanding workloads like AI training, scientific computing, and complex rendering. They typically have better stability, reliability, and longer lifespans.

Can I run LLMs on a CPU?

Yes, but CPUs are generally not as efficient as GPUs for running LLMs. GPUs are designed for parallel processing, which is essential for handling the massive computations required by LLMs. However, depending on the size of the model and your processing needs, a powerful CPU might be sufficient.

What is the best way to choose a GPU for LLMs?

Consider your LLM size, memory requirements, performance targets, and budget. For smaller models, a consumer-grade GPU might be sufficient. For large LLMs and high-performance workloads, a professional-grade GPU is recommended.

Is it necessary to have a powerful CPU for running LLMs?

While GPUs are the primary workhorses for LLMs, a powerful CPU is still important for tasks like data loading, preprocessing, and post-processing. A well-balanced system with a powerful CPU and a dedicated GPU will offer optimal performance.

What other factors should I consider when choosing a GPU for LLMs?

- Memory bandwidth: Higher memory bandwidth translates to faster data transfer between the GPU and memory, improving overall performance.

- GPU architecture: Newer GPU architectures often offer improved efficiency and performance compared to older generations.

- Software compatibility: Ensure the GPU is compatible with the software and frameworks you plan to use for your LLM tasks.
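
The memory bandwidth point can be illustrated with a common rule of thumb: when generating one token at a time, the GPU has to read roughly all of the model's weights once per token, so bandwidth divided by model size gives a rough ceiling on tokens per second. The sketch below applies this to Llama 3 8B Q4_K_M. The bandwidth figures (about 504 GB/s and 768 GB/s) and the 4.9 GB model size are assumptions that do not come from the benchmark data; check them against vendor specifications and your actual model file.

```python
def bandwidth_ceiling_tps(bandwidth_gb_s: float, model_size_gb: float) -> float:
    """Rough upper bound on generation speed if every token reads all weights once."""
    if model_size_gb <= 0:
        raise ValueError("model_size_gb must be positive")
    return bandwidth_gb_s / model_size_gb


# Assumed values, not taken from the benchmark data in this article.
ASSUMED_BANDWIDTH_GB_S = {
    "NVIDIA 4070 Ti 12GB": 504.0,
    "NVIDIA RTX A6000 48GB": 768.0,
}
ASSUMED_MODEL_SIZE_GB = 4.9  # approximate size of a Llama 3 8B Q4_K_M file

# Measured Llama 3 8B Q4_K_M generation speeds from the article (tokens/second).
MEASURED_TPS = {
    "NVIDIA 4070 Ti 12GB": 82.21,
    "NVIDIA RTX A6000 48GB": 102.22,
}

for gpu, bandwidth in ASSUMED_BANDWIDTH_GB_S.items():
    ceiling = bandwidth_ceiling_tps(bandwidth, ASSUMED_MODEL_SIZE_GB)
    share = MEASURED_TPS[gpu] / ceiling * 100
    print(f"{gpu}: ceiling ~{ceiling:.0f} tokens/s, measured {MEASURED_TPS[gpu]} ({share:.0f}%)")
```

This is a coarse estimate that ignores compute limits, caching, and kernel overhead, so treat it as a sanity check on measured numbers and not as a prediction.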

Keywords

LLMs, GPU, NVIDIA, 4070 Ti, RTX A6000, token generation, benchmark, performance, memory, quantization, Llama 3, processing, speed, use case, recommendation, developer, researcher, professional-grade, consumer-grade, gaming, AI, machine learning, data analysis, efficiency, cost, budget.