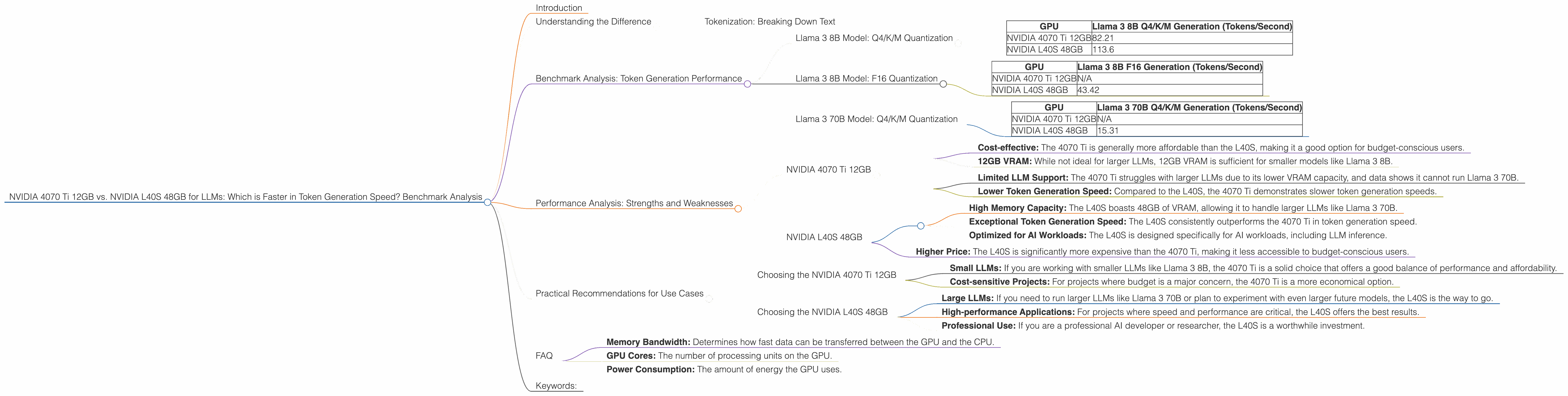

NVIDIA 4070 Ti 12GB vs. NVIDIA L40S 48GB for LLMs: Which is Faster in Token Generation Speed? Benchmark Analysis

Introduction

The world of Large Language Models (LLMs) is booming, and running these models locally is becoming increasingly popular. However, LLMs are computationally demanding, especially when generating text, so the choice of hardware matters. Two GPUs often considered for this task are the NVIDIA 4070 Ti 12GB and the NVIDIA L40S 48GB. This article compares them in a benchmark analysis focused on token generation speed for several Llama 3 configurations. We'll examine the strengths and weaknesses of each GPU and provide practical recommendations for choosing the right device for your LLM needs.

Understanding the Difference

Before diving into the benchmark results, let's clarify what we mean by "token generation speed." In simple terms, it is the rate at which a GPU produces new tokens (words or sub-words) in response to an input prompt, measured in tokens per second.

Tokenization: Breaking Down Text

Think of it like this: Imagine you have a sentence, "The quick brown fox jumps over the lazy dog." To process this sentence, an LLM needs to break it down into individual units called tokens.

For example, the sentence could be tokenized as: "The", "quick", "brown", "fox", "jumps", "over", "the", "lazy", "dog". These tokens can be single words or sub-words depending on the tokenization method used by the LLM. The faster the GPU can process these tokens and generate new ones, the faster the LLM can generate text.
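
As a rough illustration, the word-level split above can be reproduced with a few lines of Python. This is a simplified sketch: real LLM tokenizers use learned sub-word vocabularies, so the actual token boundaries and counts for a given model will differ. The function name `naive_tokenize` is made up for this example.

```python
import re

def naive_tokenize(text: str) -> list[str]:
    """Split text into word tokens, keeping each punctuation mark as its own token."""
    # \w+ matches a run of letters/digits; [^\w\s] matches one punctuation character.
    return re.findall(r"\w+|[^\w\s]", text)

sentence = "The quick brown fox jumps over the lazy dog."
tokens = naive_tokenize(sentence)

print(tokens)
print(len(tokens), "tokens")
```

Note that this toy tokenizer also emits the final period as a token, which the hand-written list above leaves out.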

Benchmark Analysis: Token Generation Performance

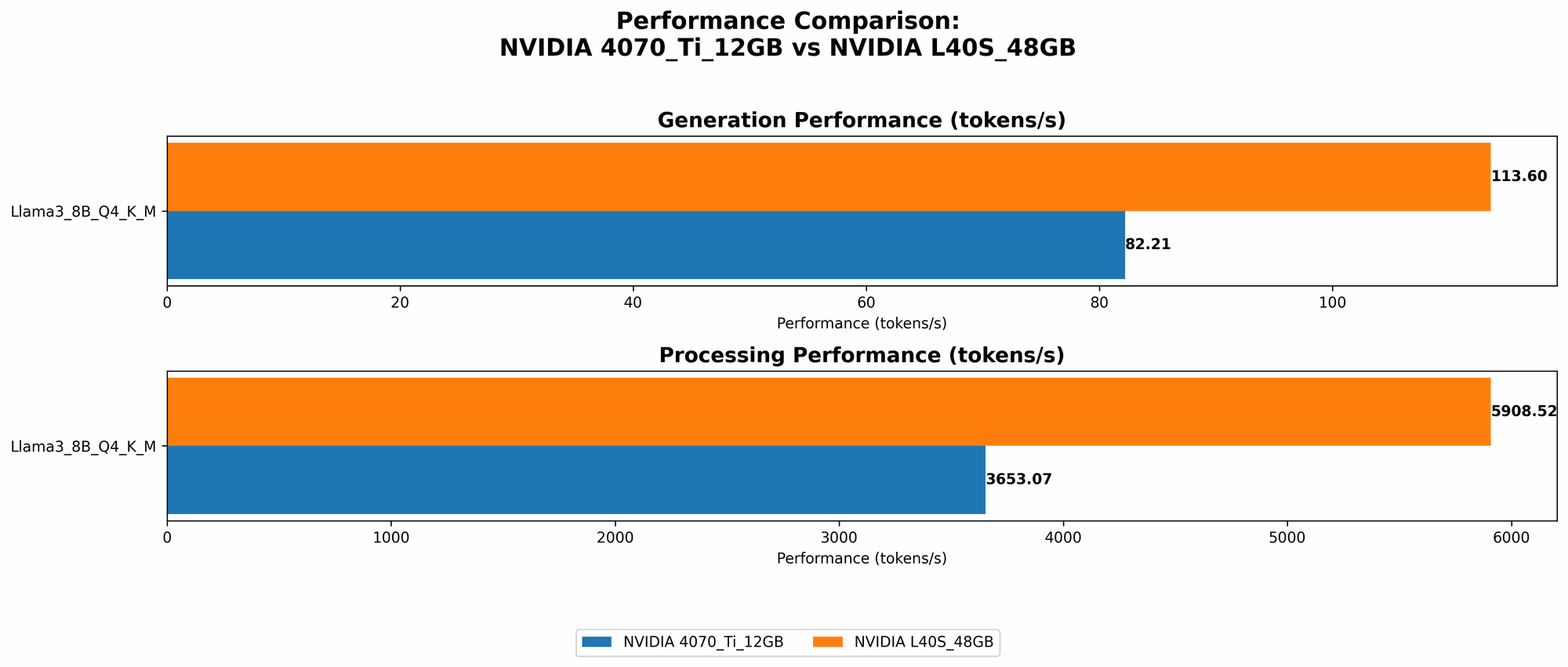

Llama 3 8B Model: Q4_K_M Quantization

| GPU | Llama 3 8B Q4_K_M Generation (Tokens/Second) |
|---|---|
| NVIDIA 4070 Ti 12GB | 82.21 |
| NVIDIA L40S 48GB | 113.6 |

Key Observations:

- The NVIDIA L40S 48GB outperforms the NVIDIA 4070 Ti 12GB in token generation for the Llama 3 8B model with Q4_K_M quantization: 113.6 vs. 82.21 tokens/second.

- That is about 38% higher token generation speed for the L40S.

- The gap adds up on long outputs: the higher the generation rate, the less time you wait for each response.
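
The percentage above follows directly from the two table values. The sketch below redoes that arithmetic and also converts each rate into a waiting time for a 1,000-token response. The 1,000-token length and the helper names are arbitrary choices for illustration, not part of the benchmark.

```python
def speedup_percent(baseline_tps: float, candidate_tps: float) -> float:
    """Percentage by which candidate_tps exceeds baseline_tps."""
    return (candidate_tps / baseline_tps - 1.0) * 100.0

def seconds_for(tokens: int, tps: float) -> float:
    """Time needed to generate `tokens` tokens at a constant rate."""
    return tokens / tps

# Measured generation speeds from the table (tokens/second).
rtx_4070_ti_tps = 82.21
l40s_tps = 113.6

gain = speedup_percent(rtx_4070_ti_tps, l40s_tps)
print(f"L40S advantage: {gain:.1f}%")

# Hypothetical response length, chosen only for illustration.
response_tokens = 1000
for name, tps in [("4070 Ti", rtx_4070_ti_tps), ("L40S", l40s_tps)]:
    print(f"{name}: {seconds_for(response_tokens, tps):.1f} s for {response_tokens} tokens")
```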

Llama 3 8B Model: F16 (Half Precision, Unquantized)

| GPU | Llama 3 8B F16 Generation (Tokens/Second) |
|---|---|
| NVIDIA 4070 Ti 12GB | N/A |
| NVIDIA L40S 48GB | 43.42 |

Key Observations:

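- No benchmark result is available for the 4070 Ti 12GB in this configuration. At 16 bits (2 bytes) per weight, an 8-billion-parameter model needs roughly 16 GB for the weights alone, which is more than the card's 12 GB of VRAM.

- The L40S 48GB generates 43.42 tokens/second at F16, well below its 113.6 tokens/second with Q4_K_M.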

Llama 3 70B Model: Q4_K_M Quantization

| GPU | Llama 3 70B Q4_K_M Generation (Tokens/Second) |
|---|---|
| NVIDIA 4070 Ti 12GB | N/A |
| NVIDIA L40S 48GB | 15.31 |

Key Observations:

- No benchmark result is available for the 4070 Ti 12GB in this configuration. Even with 4-bit quantization, the weights of a 70B model take up roughly 40 GB, far more than 12 GB of VRAM.

- The L40S 48GB achieves 15.31 tokens/second for the Llama 3 70B model.
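
A likely reason for the missing 4070 Ti results is memory capacity. The back-of-the-envelope sketch below estimates the size of the weights from the parameter count and the number of bits stored per weight, then checks it against each card's VRAM. The figures are assumptions for illustration: nominal parameter counts of 8 and 70 billion, and an approximate average of 4.8 bits per weight for Q4_K_M (the exact value varies by model). The KV cache and activations need additional memory, so fitting the weights is a necessary condition, not a guarantee.

```python
def weight_size_gb(params_billions: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights in GB (10^9 bytes)."""
    return params_billions * bits_per_weight / 8.0

def weights_fit(params_billions: float, bits_per_weight: float, vram_gb: float) -> bool:
    """True if the weights alone fit in VRAM (ignores KV cache and activations)."""
    return weight_size_gb(params_billions, bits_per_weight) <= vram_gb

# Assumed bits per weight: 16 for F16, about 4.8 on average for Q4_K_M.
configs = {
    "Llama 3 8B Q4_K_M": (8.0, 4.8),
    "Llama 3 8B F16": (8.0, 16.0),
    "Llama 3 70B Q4_K_M": (70.0, 4.8),
}
gpus = {"4070 Ti 12GB": 12.0, "L40S 48GB": 48.0}

for model, (params, bits) in configs.items():
    size = weight_size_gb(params, bits)
    verdicts = ", ".join(
        f"{gpu}: {'fits' if weights_fit(params, bits, vram) else 'too large'}"
        for gpu, vram in gpus.items()
    )
    print(f"{model}: ~{size:.1f} GB of weights ({verdicts})")
```

Under these assumptions, only the 8B Q4_K_M model fits on the 12 GB card, which matches the pattern of N/A entries in the tables.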

Performance Analysis: Strengths and Weaknesses

NVIDIA 4070 Ti 12GB

Strengths:

- Cost-effective: The 4070 Ti is generally more affordable than the L40S, making it a good option for budget-conscious users.

- 12GB VRAM: While not ideal for larger LLMs, 12GB VRAM is sufficient for smaller models like Llama 3 8B.

Weaknesses:

- Limited LLM Support: The 4070 Ti struggles with larger LLMs due to its lower VRAM capacity. No benchmark results are reported for Llama 3 8B at F16 or for Llama 3 70B on this card, and the weights of both configurations exceed 12 GB.

- Lower Token Generation Speed: On Llama 3 8B Q4_K_M, the 4070 Ti generates 82.21 tokens/second compared to 113.6 for the L40S.

NVIDIA L40S 48GB

Strengths:

- High Memory Capacity: The L40S boasts 48GB of VRAM, allowing it to handle larger LLMs like Llama 3 70B.

- Higher Token Generation Speed: In the one configuration benchmarked on both cards (Llama 3 8B, Q4_K_M), the L40S is about 38% faster than the 4070 Ti.

- Optimized for AI Workloads: The L40S is designed specifically for AI workloads, including LLM inference.

Weaknesses:

- Higher Price: The L40S is significantly more expensive than the 4070 Ti, making it less accessible to budget-conscious users.

Practical Recommendations for Use Cases

Ultimately, the best GPU for your LLM needs depends on your specific use case and budget.

Choosing the NVIDIA 4070 Ti 12GB

- Small LLMs: If you are working with smaller LLMs like Llama 3 8B, the 4070 Ti is a solid choice that offers a good balance of performance and affordability.

- Cost-sensitive Projects: For projects where budget is a major concern, the 4070 Ti is a more economical option.

Choosing the NVIDIA L40S 48GB

- Large LLMs: If you need to run larger LLMs like Llama 3 70B or plan to experiment with even larger future models, the L40S is the way to go.

- High-performance Applications: For projects where speed and performance are critical, the L40S offers the best results.

- Professional Use: If you are a professional AI developer or researcher, the L40S is a worthwhile investment.

FAQ

Q1. What does "quantization" mean in the context of LLMs?

A. It's a technique used to reduce the size of LLM models by storing their weights with fewer bits. Imagine an LLM as a giant recipe book. Quantization is like rewriting that book with rounded measurements in a smaller font: the book becomes much smaller and easier to store, and the recipes stay almost the same, though a little precision is lost.
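
To make the idea concrete, here is a toy sketch of 4-bit quantization in plain Python. It is not the Q4_K_M scheme: real formats are more elaborate and quantize weights in blocks with their own scales. The weight values and function names are invented for the example.

```python
def quantize_4bit(weights: list[float]) -> tuple[list[int], float]:
    """Map floats to integers in [-7, 7] using a single shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    quantized = [round(w / scale) for w in weights]
    return quantized, scale

def dequantize(quantized: list[int], scale: float) -> list[float]:
    """Recover approximate floats from the stored integers."""
    return [q * scale for q in quantized]

# Made-up weights, for illustration only.
weights = [0.12, -0.53, 0.98, -0.05, 0.33]

q, scale = quantize_4bit(weights)
restored = dequantize(q, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print("stored integers:", q)
print("restored values:", [round(r, 2) for r in restored])
print("largest rounding error:", round(max_error, 3))
```

Each weight is now a small integer plus one shared scale, which is where the size reduction comes from. The restored values are close to the originals but not identical, which is the precision that quantization gives up.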

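Q2. Why are token speeds different between Q4_K_M and F16?

A. F16 stores every weight as a 16-bit number, while Q4_K_M compresses weights to roughly 4 to 5 bits each. Generating a token requires reading the model's weights from GPU memory, so the F16 model moves several times more data per token and runs slower: 43.42 tokens/second versus 113.6 for Q4_K_M on the L40S. The trade-off is that Q4_K_M quantization can result in a slight decrease in accuracy compared to F16.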
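
One way to relate the benchmark numbers to data size is to estimate how much weight data the GPU must read per second to sustain the measured rate. The sketch below assumes every generated token requires reading all weights once (ignoring the KV cache and any batching), and assumes weight sizes of about 16 GB for Llama 3 8B at F16 and about 4.8 GB at Q4_K_M. Both the simplification and the sizes are approximations for illustration.

```python
def implied_bandwidth_gbs(tokens_per_second: float, weights_gb: float) -> float:
    """Weight data read per second (GB/s) if every token reads all weights once."""
    return tokens_per_second * weights_gb

# (measured tokens/second on the L40S, assumed weight size in GB)
l40s_results = {
    "F16": (43.42, 16.0),
    "Q4_K_M": (113.6, 4.8),
}

implied = {
    name: implied_bandwidth_gbs(tps, size_gb)
    for name, (tps, size_gb) in l40s_results.items()
}

for name, gbs in implied.items():
    print(f"{name}: ~{gbs:.0f} GB/s of weight reads")
```

Both configurations land in the same range of several hundred GB/s, which is consistent with the amount of data read per token being a major factor in generation speed.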

Q3. How can I test the performance of my GPU with different LLMs?

A. You can use benchmarking tools like the ones listed in the references section. These tools allow you to measure the performance of your GPU with various LLMs and quantization levels.
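
If you want a quick number from your own setup, the measurement itself is simple: time a generation call and divide the number of new tokens by the elapsed time. The sketch below shows that logic with a stand-in generator. `fake_generate` is a placeholder invented for this example; you would swap in a call to whatever inference library you actually use.

```python
import time
from typing import Callable

def measure_tokens_per_second(
    generate: Callable[[str, int], list[str]],
    prompt: str,
    max_new_tokens: int,
) -> float:
    """Time one generation call and return new tokens per second."""
    start = time.perf_counter()
    new_tokens = generate(prompt, max_new_tokens)
    elapsed = time.perf_counter() - start
    return len(new_tokens) / elapsed

def fake_generate(prompt: str, max_new_tokens: int) -> list[str]:
    """Placeholder for a real model call; sleeps to simulate per-token work."""
    tokens = []
    for i in range(max_new_tokens):
        time.sleep(0.001)  # pretend each token takes about 1 ms
        tokens.append(f"tok{i}")
    return tokens

tps = measure_tokens_per_second(fake_generate, "Hello", 50)
print(f"{tps:.1f} tokens/second")
```

For meaningful results, run several repetitions, discard the first (warm-up) run, and keep the prompt length and number of generated tokens fixed across GPUs.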

Q4. What are the other factors to consider besides token generation speed?

A. Other important factors include:

- Memory Bandwidth: Determines how fast data can be transferred between the GPU's compute units and its own memory (VRAM).

- GPU Cores: The number of processing units on the GPU.

- Power Consumption: The amount of energy the GPU uses.

Q5. Can I run LLMs on my CPU?

A. Yes, you can run LLMs on your CPU, but it will be significantly slower than using a dedicated GPU.

Keywords:

NVIDIA 4070 Ti, NVIDIA L40S, LLM, Token Generation Speed, Benchmark, Performance Analysis, Llama 3 8B, Llama 3 70B, Q4_K_M Quantization, F16 Precision, GPU, Text Generation, AI Workloads, Deep Learning, Machine Learning, Generative AI.