NVIDIA 4070 Ti 12GB vs. NVIDIA A40 48GB for LLMs: Which is Faster in Token Generation Speed? Benchmark Analysis

Introduction

Running large language models (LLMs) well depends heavily on the hardware underneath them. Two GPUs often considered for running LLMs locally are the NVIDIA GeForce RTX 4070 Ti 12GB and the NVIDIA A40 48GB. This article compares their token generation speed on Llama 3 across model sizes and quantization levels, to help you decide which GPU best suits your LLM needs.

Benchmarking: A40 vs. 4070 Ti 12GB for LLM Token Generation

This benchmark analysis focuses on the token generation speed of two NVIDIA GPUs: the RTX 4070 Ti 12GB and the A40 48GB. We compare their performance on Llama 3 in its 8B and 70B variants, at two precision levels: Q4KM (a 4-bit block-wise quantization format, usually written Q4_K_M in llama.cpp) and F16 (unquantized half-precision floating point).

Understanding Token Generation Speed

Token generation speed is the rate at which an LLM produces new tokens, the basic units of text in language models (a token is typically a word or a word fragment). It is measured in tokens per second and determines how quickly text appears during generation.
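
As a minimal sketch of how a tokens-per-second figure can be obtained, the snippet below times a token stream and divides the token count by the elapsed time. The `fake_generate` function is a hypothetical stand-in for a real model and is not part of any benchmark tool; the numbers in this article were not produced with this code.

```python
import time
from typing import Callable, Iterable


def tokens_per_second(generate: Callable[[], Iterable[object]]) -> float:
    """Time one generation run and return generated tokens per second."""
    start = time.perf_counter()
    n_tokens = sum(1 for _ in generate())  # consume the stream, counting tokens
    elapsed = time.perf_counter() - start
    if elapsed <= 0:
        raise ValueError("elapsed time must be positive")
    return n_tokens / elapsed


def fake_generate(n_tokens: int = 50, delay_s: float = 0.001):
    """Hypothetical stand-in for a model: yields one dummy token per step."""
    for i in range(n_tokens):
        time.sleep(delay_s)  # pretend each token takes some time to compute
        yield i


if __name__ == "__main__":
    rate = tokens_per_second(lambda: fake_generate())
    print(f"{rate:.1f} tokens/second")
```

In a real measurement, prompt processing and token generation are usually timed separately, since only the latter is what this article reports.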

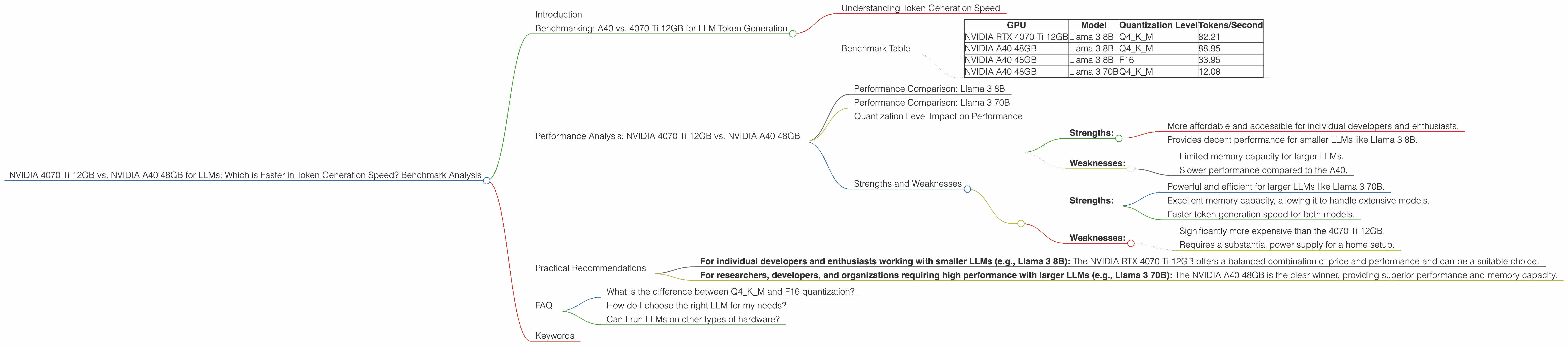

Benchmark Table

| GPU | Model | Quantization Level | Tokens/Second |
|---|---|---|---|
| NVIDIA RTX 4070 Ti 12GB | Llama 3 8B | Q4KM | 82.21 |
| NVIDIA A40 48GB | Llama 3 8B | Q4KM | 88.95 |
| NVIDIA A40 48GB | Llama 3 8B | F16 | 33.95 |
| NVIDIA A40 48GB | Llama 3 70B | Q4KM | 12.08 |

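Note: No results are available for the RTX 4070 Ti 12GB with Llama 3 8B at F16 or with Llama 3 70B at either quantization level, nor for the A40 48GB with Llama 3 70B at F16. These configurations were not benchmarked at the time of data collection; in each case the model weights alone would likely exceed the card's memory.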
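
The gaps in the table line up with a rough memory estimate. The sketch below computes the size of the weights alone and checks it against each card's memory. The parameter counts and the figure of about 4.8 bits per weight for Q4KM are assumptions for illustration, not values from the benchmark, and the estimate ignores the KV cache and runtime buffers.

```python
GIB = 1024 ** 3

# Assumed approximate parameter counts (not taken from the benchmark data).
PARAMS = {"Llama 3 8B": 8.0e9, "Llama 3 70B": 70.6e9}
# Assumed average bits per weight: ~4.8 for Q4KM is a rough figure, 16 for F16.
BITS_PER_WEIGHT = {"Q4KM": 4.8, "F16": 16.0}
# Memory capacity of each card in GiB, from the product names.
VRAM_GIB = {"RTX 4070 Ti 12GB": 12, "A40 48GB": 48}


def weight_gib(model: str, quant: str) -> float:
    """Estimated size of the model weights alone, in GiB."""
    return PARAMS[model] * BITS_PER_WEIGHT[quant] / 8 / GIB


def fits(gpu: str, model: str, quant: str) -> bool:
    """True if the weights alone are smaller than the card's memory."""
    return weight_gib(model, quant) < VRAM_GIB[gpu]


if __name__ == "__main__":
    for gpu in VRAM_GIB:
        for model in PARAMS:
            for quant in BITS_PER_WEIGHT:
                size = weight_gib(model, quant)
                verdict = "fits" if fits(gpu, model, quant) else "does not fit"
                print(f"{gpu:18} {model:12} {quant:5} {size:7.1f} GiB  {verdict}")
```

Under these assumptions, the four configurations that fit are exactly the four rows present in the benchmark table.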

Performance Analysis: NVIDIA 4070 Ti 12GB vs. NVIDIA A40 48GB

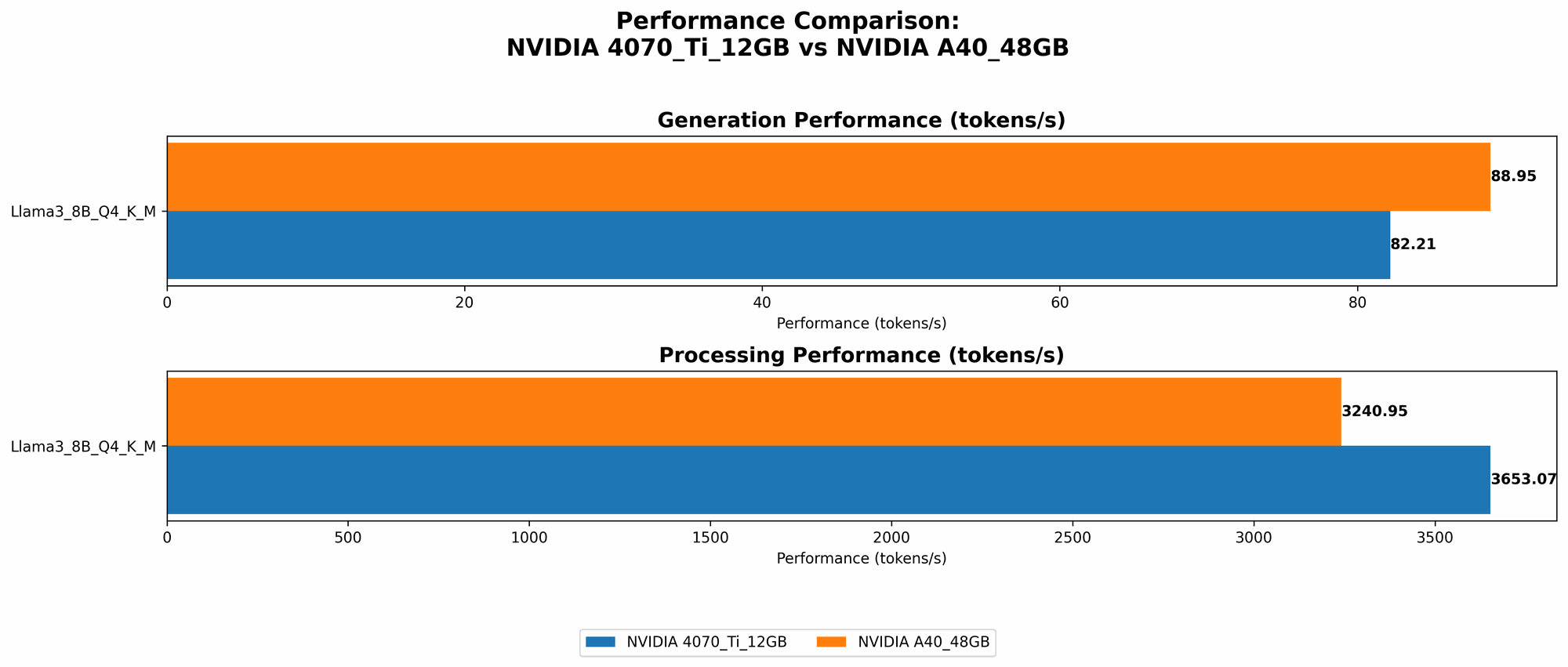

Performance Comparison: Llama 3 8B

The NVIDIA A40 48GB is faster than the 4070 Ti 12GB on Llama 3 8B, but the margin is modest: 88.95 tokens/second with Q4KM versus 82.21 tokens/second, a lead of about 8%. Single-stream token generation is typically limited by memory bandwidth rather than raw compute, so the A40's higher memory bandwidth (roughly 696 GB/s versus 504 GB/s, per NVIDIA's published specifications) is the most likely explanation, although the measured lead is smaller than the bandwidth gap alone would suggest.
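
To put the memory bandwidth argument in numbers, here is a rough sketch under stated assumptions. If every generated token requires reading all model weights once, memory bandwidth divided by model size gives an upper bound on tokens per second. The bandwidth figures (504 GB/s and 696 GB/s) are NVIDIA's published specifications rather than values from this benchmark, and the 4.8 GB model size is an assumed estimate for Llama 3 8B at Q4KM.

```python
# Measured values from the benchmark table (Llama 3 8B, Q4KM).
MEASURED_TPS = {"RTX 4070 Ti 12GB": 82.21, "A40 48GB": 88.95}

# Assumptions, not benchmark data: spec-sheet memory bandwidth in GB/s.
BANDWIDTH_GBPS = {"RTX 4070 Ti 12GB": 504.0, "A40 48GB": 696.0}

# Assumption: ~8.0e9 parameters at ~4.8 bits per weight, in GB.
MODEL_GB = 8.0e9 * 4.8 / 8 / 1e9


def ceiling_tps(gpu: str) -> float:
    """Bandwidth-bound upper limit: one full pass over the weights per token."""
    return BANDWIDTH_GBPS[gpu] / MODEL_GB


def relative_lead_percent(fast: float, slow: float) -> float:
    """How much faster `fast` is than `slow`, in percent."""
    return (fast / slow - 1.0) * 100.0


if __name__ == "__main__":
    for gpu, tps in MEASURED_TPS.items():
        cap = ceiling_tps(gpu)
        print(f"{gpu}: measured {tps:.2f}, ceiling {cap:.1f}, ratio {tps / cap:.2f}")
    lead = relative_lead_percent(MEASURED_TPS["A40 48GB"],
                                 MEASURED_TPS["RTX 4070 Ti 12GB"])
    print(f"A40 lead: {lead:.1f}%")
```

Under these assumptions the 4070 Ti reaches roughly 78% of its ceiling and the A40 roughly 61%, which is consistent with the A40 leading by only about 8% despite its larger bandwidth. Treat this as a back-of-the-envelope check, not a performance model.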

Performance Comparison: Llama 3 70B

Only the A40 48GB has a result for Llama 3 70B: 12.08 tokens/second with Q4KM. No data was recorded for the 4070 Ti 12GB on this model, so a direct speed comparison is not possible. The practical difference here is capacity rather than speed, since 12 GB of memory falls well short of what a 4-bit 70B model needs.

Quantization Level Impact on Performance

Looking at the results, we can see that the token generation speed varies significantly based on the quantization level employed. For the Llama 3 8B model, the A40 48GB running with Q4KM is about 2.62 times faster than when using F16. This highlights the importance of choosing the right quantization level for your model and hardware to optimize performance.
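
The 2.62 figure comes straight from the table, as the short sketch below shows. For context it also computes how much smaller the weights become, assuming about 4.8 bits per weight for Q4KM; that value is an assumption, not benchmark data.

```python
# A40 48GB results for Llama 3 8B, taken from the benchmark table.
A40_LLAMA3_8B_TPS = {"Q4KM": 88.95, "F16": 33.95}


def speedup(fast_tps: float, slow_tps: float) -> float:
    """Ratio of two tokens-per-second figures."""
    return fast_tps / slow_tps


def size_reduction(bits_full: float, bits_quant: float) -> float:
    """How many times smaller the weights become after quantization."""
    return bits_full / bits_quant


if __name__ == "__main__":
    s = speedup(A40_LLAMA3_8B_TPS["Q4KM"], A40_LLAMA3_8B_TPS["F16"])
    r = size_reduction(16.0, 4.8)  # 4.8 bits per weight is an assumed average
    print(f"Q4KM vs F16 speedup: {s:.2f}x")
    print(f"Approximate weight size reduction: {r:.2f}x")
```

The speedup is smaller than the assumed size reduction, which suggests that shrinking the weights does not translate one-to-one into generation speed.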

Strengths and Weaknesses

NVIDIA RTX 4070 Ti 12GB:

- Strengths:
  - More affordable and accessible for individual developers and enthusiasts.
  - Provides decent performance for smaller LLMs like Llama 3 8B (82.21 tokens/second with Q4KM).
- Weaknesses:
  - Limited memory capacity for larger LLMs.
  - About 8% slower than the A40 on Llama 3 8B with Q4KM.

NVIDIA A40 48GB:

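- Strengths:
  - Can run larger LLMs such as Llama 3 70B with Q4KM (12.08 tokens/second).
  - Excellent memory capacity, allowing it to handle much larger models and higher-precision formats such as F16 for Llama 3 8B.
  - Faster token generation on Llama 3 8B, and the only card of the two with a Llama 3 70B result.
- Weaknesses:
  - Significantly more expensive than the 4070 Ti 12GB.
  - A passively cooled data-center card rated at up to 300 W, so a home setup needs adequate power and chassis airflow.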

Practical Recommendations

The choice between the NVIDIA RTX 4070 Ti 12GB and the NVIDIA A40 48GB heavily depends on your specific LLM use case, budget, and performance requirements.

- For individual developers and enthusiasts working with smaller LLMs (e.g., Llama 3 8B): The NVIDIA RTX 4070 Ti 12GB offers a balanced combination of price and performance and can be a suitable choice.

- For researchers, developers, and organizations requiring high performance with larger LLMs (e.g., Llama 3 70B): The NVIDIA A40 48GB is the clear winner, providing superior performance and memory capacity.

Remember: If you're unsure about the specific LLM you'll be using or your performance needs, consider the A40 48GB for its flexibility and future-proofing capabilities.

FAQ

What is the difference between Q4KM and F16 quantization?

Quantization is a technique used to reduce the memory footprint and computational requirements of large models. Q4KM (usually written Q4_K_M in llama.cpp) is a 4-bit format in which weights are grouped into small blocks that share scale factors, while F16 is not quantized at all: it stores each weight as a 16-bit half-precision floating-point number. Q4KM results in a much smaller model and faster inference, but might slightly reduce accuracy compared to F16.
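
To make the idea concrete, here is a deliberately simplified sketch of block-wise 4-bit quantization. It is not the actual Q4KM layout, which is more elaborate; the block size of 32 and the symmetric scaling scheme are assumptions chosen for illustration.

```python
from typing import List, Tuple


def quantize_block(values: List[float]) -> Tuple[float, List[int]]:
    """Symmetric 4-bit quantization of one block: a float scale plus ints in [-8, 7]."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    q = [max(-8, min(7, round(v / scale))) for v in values]
    return scale, q


def dequantize_block(scale: float, q: List[int]) -> List[float]:
    """Recover approximate float values from a quantized block."""
    return [scale * x for x in q]


def quantize(weights: List[float], block_size: int = 32) -> List[Tuple[float, List[int]]]:
    """Split the weights into fixed-size blocks and quantize each independently."""
    return [quantize_block(weights[i:i + block_size])
            for i in range(0, len(weights), block_size)]


if __name__ == "__main__":
    weights = [(i % 17 - 8) / 10 for i in range(64)]  # toy data, not real weights
    blocks = quantize(weights)
    restored = [v for scale, q in blocks for v in dequantize_block(scale, q)]
    worst = max(abs(a - b) for a, b in zip(weights, restored))
    print(f"{len(blocks)} blocks, worst-case error {worst:.4f}")
```

Each weight is stored as a 4-bit integer plus a shared per-block scale, which is where the memory saving comes from; the rounding step is the source of the small accuracy loss.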

How do I choose the right LLM for my needs?

The selection of an LLM depends on your use case and requirements. Consider factors like model size, accuracy, and your computational resources. For text generation tasks, a smaller model might suffice, whereas for complex tasks like code generation, a larger model might be needed.

Can I run LLMs on other types of hardware?

Yes, you can run LLMs on various hardware, including CPUs, GPUs, and specialized AI accelerators. The optimal choice depends on the LLM size, your budget, and the desired performance.

Keywords

LLM, Large Language Model, NVIDIA RTX 4070 Ti, NVIDIA A40, token generation speed, benchmark analysis, quantization, Llama 3, Llama 3 8B, Llama 3 70B, Q4KM, F16, inference, performance, GPU, AI accelerator, memory, bandwidth