NVIDIA 4070 Ti 12GB for LLM Inference: Performance and Value

Introduction

Large language models (LLMs) have moved quickly from research into everyday tools. They generate text, translate between languages, and answer complex questions. Running them locally, however, takes significant compute and, above all, GPU memory, especially for larger models such as Llama 2 70B or Falcon 40B.

This article dives into the performance and value of the NVIDIA 4070 Ti 12GB graphics card when it comes to running LLM inference locally. We'll look at how this card performs on various LLM models and different quantization levels, and we'll explore whether it's a good investment for developers and enthusiasts seeking local LLM capabilities.

NVIDIA 4070 Ti 12GB: A Solid Choice for LLM Inference

The NVIDIA 4070 Ti 12GB is a powerful graphics card designed for gaming and creative workloads. For LLM inference, it runs small, quantized models quickly, but its 12 GB of VRAM limits which models fit. That makes it a compelling choice for developers and enthusiasts who want to run models of modest size locally.

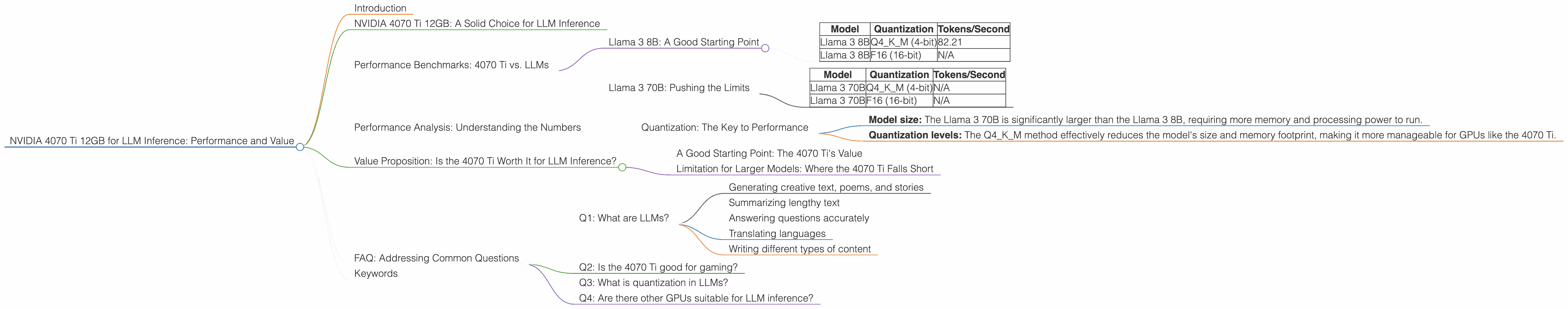

Performance Benchmarks: 4070 Ti vs. LLMs

To understand the 4070 Ti's capabilities, let's dive into some specific performance benchmarks. The data we'll be using is from reputable sources like the "llama.cpp" project and "GPU-Benchmarks-on-LLM-Inference" repository. We'll focus on a few popular LLM models and explore how the 4070 Ti handles them.
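Tokens per second is simply the number of generated tokens divided by the wall-clock time it took to produce them. The sketch below shows that calculation in isolation. It is not the harness used by the cited projects, and `generate` and `fake_generate` are hypothetical stand-ins for a real model call.

```python
import time
from typing import Callable


def tokens_per_second(generate: Callable[[int], int], max_tokens: int) -> float:
    """Time one generation call and return generated tokens per second.

    `generate` is a hypothetical callable that produces up to `max_tokens`
    tokens and returns how many it actually generated.
    """
    start = time.perf_counter()
    produced = generate(max_tokens)
    elapsed = time.perf_counter() - start
    if elapsed <= 0:
        raise ValueError("elapsed time must be positive")
    return produced / elapsed


def fake_generate(max_tokens: int) -> int:
    # Stand-in for a real model: sleeps 1 ms per "token" instead of decoding.
    time.sleep(0.001 * max_tokens)
    return max_tokens


if __name__ == "__main__":
    rate = tokens_per_second(fake_generate, max_tokens=50)
    print(f"{rate:.1f} tokens/s")
```

With a real model, `generate` would wrap the inference call and return the number of tokens it produced. Published figures usually average several runs and report prompt processing and text generation separately.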

Llama 3 8B: A Good Starting Point

The Llama 3 8B model is a great starting point for experimenting with LLMs, offering a solid balance between performance and size. Let's look at how the 4070 Ti handles this model with different quantization levels:

| Model | Quantization | Tokens/Second |
|---|---|---|
| Llama 3 8B | Q4_K_M (4-bit) | 82.21 |
| Llama 3 8B | F16 (16-bit) | N/A |

Q4_K_M (4-bit): This quantization level greatly reduces model size and memory requirements, which makes it well suited to GPUs with limited VRAM. The 4070 Ti reaches 82.21 tokens per second, comfortably faster than most people read, so generated text appears without noticeable waiting.

F16 (16-bit): No F16 result is available for this model on the 4070 Ti. The most likely reason is memory rather than an oversight in the benchmark: 8 billion parameters at 2 bytes each come to about 16 GB of weights, more than the card's 12 GB of VRAM.
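To put the 4-bit figure of 82.21 tokens per second in practical terms, the short sketch below converts it into waiting time for responses of different lengths. The response lengths are illustrative assumptions, and the calculation ignores prompt-processing time, which the table does not report.

```python
def seconds_to_generate(num_tokens: int, tokens_per_second: float) -> float:
    """Return the time needed to generate `num_tokens` at a steady rate."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return num_tokens / tokens_per_second


# Generation rate for Llama 3 8B at 4-bit quantization, from the table above.
RATE = 82.21

if __name__ == "__main__":
    # Illustrative response lengths (assumed, not from the benchmark).
    for n in (100, 500, 1000):
        print(f"{n:>5} tokens: {seconds_to_generate(n, RATE):.1f} s")
```

At this rate a 500-token answer takes about six seconds and a 1000-token answer about twelve.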

Llama 3 70B: Pushing the Limits

The Llama 3 70B model is a behemoth in the LLM world, offering significantly enhanced capabilities compared to its smaller counterparts. However, running this model locally demands significant computational power. Let's examine how the 4070 Ti fares:

| Model | Quantization | Tokens/Second |
|---|---|---|
| Llama 3 70B | Q4_K_M (4-bit) | N/A |
| Llama 3 70B | F16 (16-bit) | N/A |

No result is available for Llama 3 70B on the 4070 Ti at either precision. The likely cause is again memory: even at 4 bits per weight, 70 billion parameters need at least 35 GB for the weights alone, roughly three times the card's 12 GB of VRAM.
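A rough way to see which of the four configurations can fit on a 12 GB card is to estimate the size of the weights as parameters times bits per weight. The sketch below does that under stated assumptions: parameter counts are the nominal 8 and 70 billion from the model names, the 4.8 bits per weight for the 4-bit format is an approximation (it stores scaling metadata on top of the 4-bit values), and 1 GB is treated as 10^9 bytes. It counts weights only, so the KV cache and runtime overhead come on top.

```python
VRAM_GB = 12.0  # 4070 Ti 12GB, treating 1 GB as 10**9 bytes

# Approximate bits per weight; the Q4_K_M value is an assumed average.
BITS_PER_WEIGHT = {"Q4_K_M": 4.8, "F16": 16.0}

# Nominal parameter counts taken from the model names.
PARAMS = {"Llama 3 8B": 8e9, "Llama 3 70B": 70e9}


def weight_size_gb(num_params: float, bits_per_weight: float) -> float:
    """Estimate the size of the model weights in GB (weights only)."""
    return num_params * bits_per_weight / 8 / 1e9


def fits_in_vram(num_params: float, bits_per_weight: float,
                 vram_gb: float = VRAM_GB) -> bool:
    """Return True if the weights alone are smaller than the available VRAM."""
    return weight_size_gb(num_params, bits_per_weight) < vram_gb


if __name__ == "__main__":
    for model, n in PARAMS.items():
        for quant, bits in BITS_PER_WEIGHT.items():
            size = weight_size_gb(n, bits)
            verdict = "fits" if fits_in_vram(n, bits) else "does not fit"
            print(f"{model} {quant}: ~{size:.1f} GB of weights, {verdict}")
```

Under these assumptions only Llama 3 8B at 4 bits fits, which matches the one row in the tables that has a measured result.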

Performance Analysis: Understanding the Numbers

The numbers above show that the 4070 Ti runs a small model such as Llama 3 8B at a good speed when it is quantized to 4 bits. The missing results for Llama 3 8B in F16 and for Llama 3 70B point to the card's 12 GB of VRAM, not its compute speed, as the limiting factor.

Quantization: The Key to Performance

Whether a model produces a result on this card at all comes down to two factors:

- Model size: Llama 3 70B has nearly nine times as many parameters as Llama 3 8B, so it needs proportionally more memory and compute.

- Quantization level: Q4_K_M stores each weight in roughly 4 bits instead of 16, cutting the memory footprint to less than a third and making the model manageable for GPUs like the 4070 Ti.

The 4070 Ti handles small, quantized models well. Larger models, or small models kept at full 16-bit precision, exceed what fits in its 12 GB of VRAM.

Value Proposition: Is the 4070 Ti Worth It for LLM Inference?

The value of the 4070 Ti for LLM inference depends heavily on your specific needs and the models you plan to use.

A Good Starting Point: The 4070 Ti's Value

For developers and enthusiasts starting their journey with LLMs, the 4070 Ti offers a good balance between performance and affordability. You can comfortably run smaller, quantized models and experiment with various LLM applications.

Limitation for Larger Models: Where the 4070 Ti Falls Short

If you want to run large models like Llama 3 70B locally, the 4070 Ti is not the right choice, even with 4-bit quantization. For these models you will need a GPU (or several) with far more VRAM, or a cloud service to take on the computational burden.

FAQ: Addressing Common Questions

Q1: What are LLMs?

LLMs are a type of artificial intelligence (AI) that excels at understanding and generating human-like text. They are trained on massive amounts of data and can perform various tasks, including:

- Generating creative text, poems, and stories

- Summarizing lengthy text

- Answering questions accurately

- Translating languages

- Writing different types of content

Q2: Is the 4070 Ti good for gaming?

Yes. Gaming is the card's primary purpose, and it delivers high frame rates and strong visuals, so it suits users who want one GPU for both gaming and local LLM experiments.

Q3: What is quantization in LLMs?

Quantization is a technique used to reduce the size and memory requirements of LLM models. It essentially converts the model's parameters from high-precision formats (like 32-bit floating point) to lower-precision formats (like 4-bit or 8-bit). This reduces the amount of memory needed to store the model, making it easier to run on devices with limited resources. Think of it like reducing the size of a picture by converting it from a high-resolution image to a lower-resolution version.
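The idea can be illustrated with a deliberately simplified scheme: scale a group of weights so the largest maps to 7, round each to the nearest integer in [-7, 7], and keep one scale factor for the group. This is a toy sketch, not the Q4_K_M format used in the benchmarks, which is more elaborate. The function names and sample weights are made up for illustration.

```python
def quantize_4bit(values):
    """Symmetric round-to-nearest quantization to integer codes in [-7, 7].

    Returns the integer codes and the single scale factor shared by the group.
    """
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    codes = [max(-7, min(7, round(v / scale))) for v in values]
    return codes, scale


def dequantize(codes, scale):
    """Map integer codes back to approximate floating-point values."""
    return [c * scale for c in codes]


if __name__ == "__main__":
    weights = [0.70, -0.35, 0.10, 0.02, -0.61]  # made-up example weights
    codes, scale = quantize_4bit(weights)
    restored = dequantize(codes, scale)
    for w, c, r in zip(weights, codes, restored):
        print(f"original {w:+.2f} -> code {c:+d} -> restored {r:+.2f}")
```

Each code fits in 4 bits instead of the 16 or 32 bits of the original value, at the cost of a small rounding error per weight. That rounding error is why heavily quantized models can lose some output quality.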

Q4: Are there other GPUs suitable for LLM inference?

Yes, depending on your needs and budget. Cards with more VRAM, such as the NVIDIA 4090 (24 GB) or the AMD Radeon RX 7900 XT (20 GB), can hold larger models, and the 4090 in particular costs considerably more. The NVIDIA 3060 or 3070 can also be viable options for smaller LLM models.

Keywords

NVIDIA 4070 Ti, LLM, Llama 3, Llama 3 8B, Llama 3 70B, inference, performance, benchmarks, quantization, Q4_K_M, F16, tokens per second, GPU, AI, natural language processing, value proposition, gaming, cloud services.