NVIDIA 3090 24GB x2 vs. NVIDIA RTX A6000 48GB for LLMs: Which is Faster in Token Generation Speed? Benchmark Analysis

Introduction

The world of Large Language Models (LLMs) is abuzz with excitement, and for good reason! These powerful AI models can generate convincing text, translate languages, write different kinds of creative content, and answer your questions in an informative way. However, running these models locally requires serious hardware muscle, especially when moving from a smaller model like Llama 3 8B to a large one like Llama 3 70B.

This article compares two popular GPU setups for running LLMs: the NVIDIA 3090 24GB x2 (two GeForce RTX 3090 24GB GPUs) and the NVIDIA RTX A6000 48GB. We'll analyze token generation speed for Llama 3 8B and Llama 3 70B and see which setup emerges as the champion!

Comparison of NVIDIA 3090 24GB x2 and NVIDIA RTX A6000 48GB for LLM Token Generation

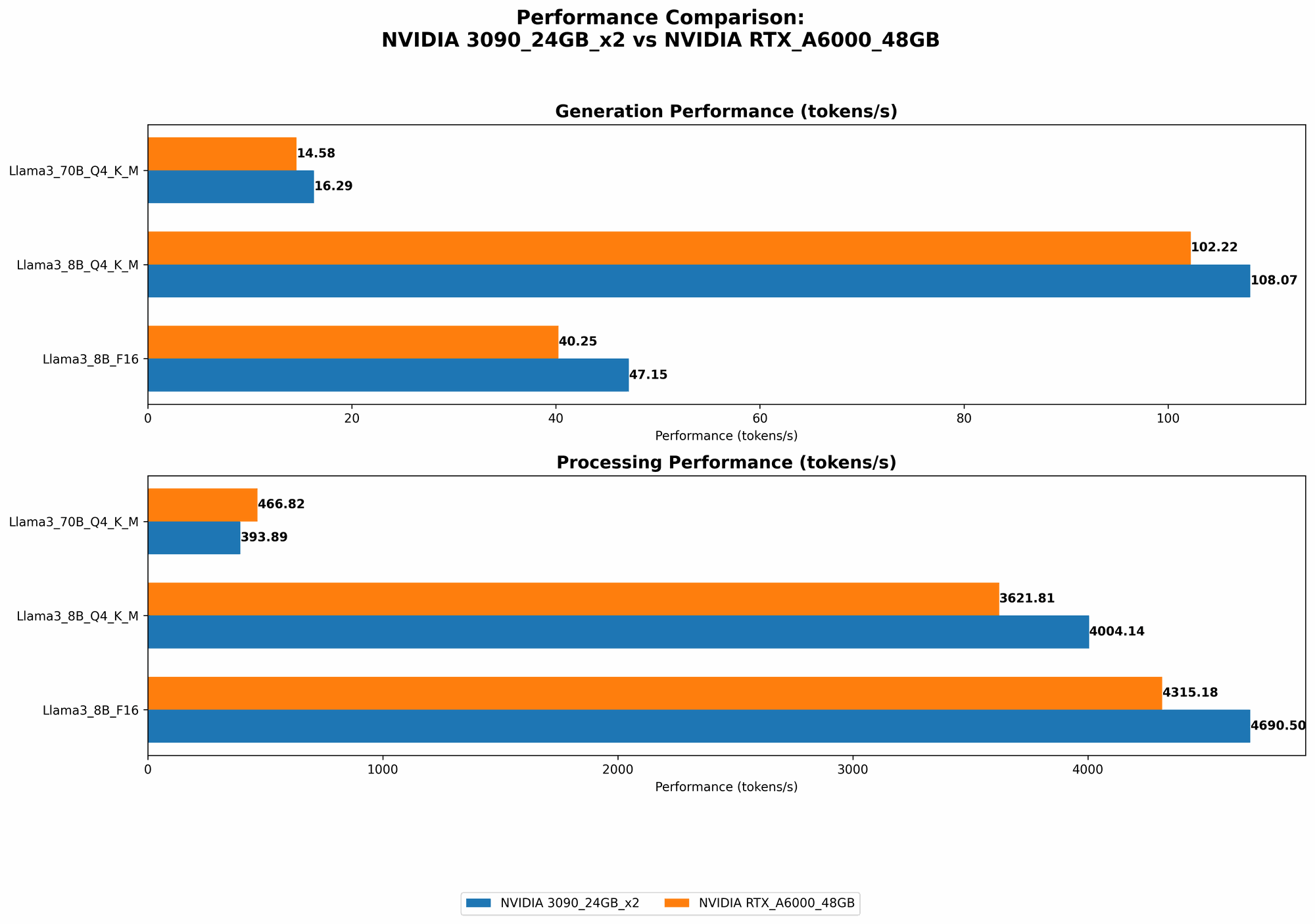

Token Generation Speed Comparison: Llama 3 8B

The Llama 3 8B model is a popular choice for enthusiasts because it offers impressive performance while still being manageable on a single powerful GPU. Here's a breakdown of the token generation speeds for both GPUs:

| GPU | Llama 3 8B Q4_K_M Generation (tokens/second) | Llama 3 8B F16 Generation (tokens/second) |
|---|---|---|
| NVIDIA 3090 24GB x2 | 108.07 | 47.15 |
| NVIDIA RTX A6000 48GB | 102.22 | 40.25 |

As you can see, both setups handle the Llama 3 8B model comfortably. The NVIDIA 3090 24GB x2 edges out the NVIDIA RTX A6000 48GB in both configurations: by about 6% in Q4_K_M (quantized) and by about 17% in F16. The gap is modest, but it adds up to a noticeable difference in total generation time for long outputs.
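To put the gap in proportion, the table figures can be turned into relative speedups. The snippet below is a small sketch that uses only the numbers reported above; the dictionary layout and the `relative_gain` helper are illustrative names, not part of any benchmark tool.

```python
# Token generation speeds (tokens/second) copied from the Llama 3 8B table above.
SPEEDS_8B = {
    "Q4_K_M": {"3090 x2": 108.07, "A6000": 102.22},
    "F16": {"3090 x2": 47.15, "A6000": 40.25},
}


def relative_gain(fast: float, slow: float) -> float:
    """Return how much faster `fast` is than `slow`, in percent."""
    return (fast / slow - 1.0) * 100.0


for precision, speeds in SPEEDS_8B.items():
    gain = relative_gain(speeds["3090 x2"], speeds["A6000"])
    print(f"{precision}: dual 3090 is {gain:.1f}% faster")
```

With these inputs the difference works out to about 5.7% for Q4_K_M and about 17.1% for F16, so the relative gap is larger at half precision.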

Token Generation Speed Comparison: Llama 3 70B

Now, let's move on to the heavyweight champion: the Llama 3 70B model! This behemoth requires a lot of horsepower and memory, and only the quantized version could be benchmarked on these setups.

| GPU | Llama 3 70B Q4_K_M Generation (tokens/second) | Llama 3 70B F16 Generation (tokens/second) |
|---|---|---|
| NVIDIA 3090 24GB x2 | 16.29 | N/A |
| NVIDIA RTX A6000 48GB | 14.58 | N/A |

With Llama 3 70B, the NVIDIA 3090 24GB x2 once again takes the lead, generating about 12% more tokens per second than the NVIDIA RTX A6000 48GB. There is no F16 (half-precision floating point) data for Llama 3 70B on either setup. This is likely due to memory: at 2 bytes per parameter, the F16 weights alone need roughly 140GB, far more than the 48GB available in either configuration.
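The memory argument can be checked with back-of-the-envelope arithmetic. The sketch below estimates the size of the weights only, ignoring the KV cache and runtime overhead. The value of about 4.8 bits per weight for the 4-bit quantized model is an assumed average for illustration, not a figure from the benchmark.

```python
def weight_memory_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate memory needed for the weights alone, in GB (10^9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


N_PARAMS_70B = 70e9   # nominal parameter count of a "70B" model
TOTAL_VRAM_GB = 48    # 2 x 24GB or 1 x 48GB

# F16 stores 16 bits per weight. The ~4.8 bits per weight used for the
# quantized case is an assumed average, not a measured value.
for label, bits in [("F16", 16.0), ("Q4_K_M (assumed ~4.8 bits/weight)", 4.8)]:
    need = weight_memory_gb(N_PARAMS_70B, bits)
    verdict = "fits" if need <= TOTAL_VRAM_GB else "does not fit"
    print(f"{label}: ~{need:.0f} GB of weights, {verdict} in {TOTAL_VRAM_GB} GB")
```

Under these assumptions the F16 weights come to about 140 GB, while the quantized weights come to about 42 GB, which leaves only a few GB of headroom for everything else.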

Performance Analysis

Strengths and Weaknesses

NVIDIA 3090 24GB x2:

- Strengths: Faster token generation in every benchmark above: about 6% (Q4_K_M) and 17% (F16) on Llama 3 8B, and about 12% on Llama 3 70B Q4_K_M.

- Weaknesses: Requires two GPUs, which adds complexity to your setup (two PCIe slots, a larger power supply, more cooling) and raises power draw. Total cost depends on current prices for two cards versus one.

NVIDIA RTX A6000 48GB:

- Strengths: A single-GPU solution with all 48GB on one card, which simplifies setup and reduces power consumption. Depending on current pricing, it can also be the more cost-effective option.

- Weaknesses: Somewhat slower than the 3090 24GB x2 in every benchmark above, with the largest gap in Llama 3 8B F16 (40.25 vs. 47.15 tokens/second).

Practical Recommendations

- For running smaller models like Llama 3 8B: Both setups are viable options, and the choice boils down to budget and preference. The RTX A6000 48GB offers a simpler single-card setup with good performance, while the 3090 24GB x2 is about 6% faster in Q4_K_M and about 17% faster in F16, at the price of a more complex build.

- For running larger models like Llama 3 70B: The 3090 24GB x2 comes out ahead, generating about 12% more tokens per second in Q4_K_M (16.29 vs. 14.58), the only precision with data for this model. However, remember the added complexity and power draw of a two-GPU setup.
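To see what the Llama 3 70B difference means in practice, the speeds can be converted into waiting time for a response. This is a rough sketch: the 500-token response length is a hypothetical example, and the calculation ignores prompt processing time and assumes a steady generation speed.

```python
def seconds_to_generate(n_tokens: int, tokens_per_second: float) -> float:
    """Time to generate n_tokens at a steady generation speed."""
    return n_tokens / tokens_per_second


RESPONSE_TOKENS = 500  # hypothetical response length, chosen for illustration

# Llama 3 70B Q4_K_M generation speeds from the table above.
for gpu, speed in [("3090 x2", 16.29), ("A6000", 14.58)]:
    elapsed = seconds_to_generate(RESPONSE_TOKENS, speed)
    print(f"{gpu}: {elapsed:.1f} s for {RESPONSE_TOKENS} tokens")
```

For a response of that length, the two setups land at roughly 31 and 34 seconds, a difference of a few seconds per answer.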

Quantization: Making LLMs More Efficient

What is Quantization?

Imagine you have a huge library full of books, each with detailed information but taking up a lot of space. Quantization is like summarizing the important parts of each book into a shorter version. It reduces the size of the library while still retaining essential information.

Similarly, quantization in LLMs takes the large, high-precision numbers that represent model parameters and converts them into smaller, less precise numbers. This reduces the model's memory footprint, usually with only a modest loss in accuracy.
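The idea can be illustrated with a toy example. The sketch below applies a simple block-wise 4-bit scheme with one shared scale per block. It is a simplified illustration only, not the actual format used by any particular quantization mode, and the sample weights are made up.

```python
def quantize_block(values: list[float]) -> tuple[float, list[int]]:
    """Map a block of floats to small signed integers plus one shared scale."""
    max_abs = max(abs(v) for v in values)
    # Codes are limited to -7..7, which fits in 4 bits.
    scale = max_abs / 7 if max_abs > 0 else 1.0
    codes = [max(-7, min(7, round(v / scale))) for v in values]
    return scale, codes


def dequantize_block(scale: float, codes: list[int]) -> list[float]:
    """Recover approximate floats from the integer codes."""
    return [c * scale for c in codes]


# Made-up sample weights, standing in for one block of model parameters.
weights = [0.12, -0.53, 0.98, -0.07, 0.31, -0.88, 0.45, 0.02]
scale, codes = quantize_block(weights)
restored = dequantize_block(scale, codes)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("4-bit codes:", codes)
print(f"largest round-trip error: {max_error:.3f}")
```

Each weight is stored as a 4-bit code instead of a 16-bit float, and the round-trip error stays within half a quantization step. Real schemes are more elaborate, but the trade of precision for size is the same.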

Why is Quantization Important for LLM Performance?

Smaller models need less memory, allowing them to run on less powerful hardware or in more efficient configurations. Quantization also speeds up token generation, largely because the GPU has to read fewer bytes of weights from memory for each token it produces.

How Does Quantization Affect Token Generation Speed?

Quantization can significantly improve token generation speed, and for larger models it can decide whether the model fits in memory at all. In the Llama 3 8B results above, the Q4_K_M mode is roughly 2.3x to 2.5x faster than F16 on the same hardware, without sacrificing too much accuracy.
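The effect of quantization on speed can be read directly from the Llama 3 8B benchmark figures. The short sketch below divides the quantized speed by the F16 speed for each setup; the data structure and function name are illustrative.

```python
# Llama 3 8B generation speeds (tokens/second) from the tables earlier in the article.
RESULTS = {
    "3090 x2": {"Q4_K_M": 108.07, "F16": 47.15},
    "A6000": {"Q4_K_M": 102.22, "F16": 40.25},
}


def quantization_speedup(speeds: dict[str, float]) -> float:
    """Return how many times faster the quantized model is than F16."""
    return speeds["Q4_K_M"] / speeds["F16"]


for gpu, speeds in RESULTS.items():
    print(f"{gpu}: Q4_K_M is {quantization_speedup(speeds):.2f}x faster than F16")
```

On these numbers the quantized model generates tokens about 2.29x faster on the dual 3090 setup and about 2.54x faster on the A6000.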

Conclusion

In the battle of the titans, the NVIDIA 3090 24GB x2 emerges as the champion for running larger LLMs like the Llama 3 70B. It generates tokens faster in every benchmark reported here, by about 12% on Llama 3 70B Q4_K_M. However, for smaller models like the Llama 3 8B, both setups offer excellent performance, and the choice depends on your budget and setup preferences.

Remember, understanding the nuances of LLMs, quantization, and hardware requirements is crucial for optimizing your setup and getting the most out of these powerful language models.

FAQ:

Q: What is the difference between an RTX 3090 and an A6000?

A: The RTX 3090 is a consumer-grade GPU designed for gaming and content creation, while the A6000 is a professional-grade GPU designed for demanding workloads like scientific computing, machine learning, and AI. The A6000 has more memory (48GB vs. 24GB per card), a higher clock speed, and better power efficiency than a single RTX 3090. The RTX 3090's GDDR6X memory has higher bandwidth, though, which helps with token generation.

Q: What is token generation speed?

A: Token generation speed is the number of new tokens the system produces per second while an LLM is writing its output. In simpler terms, it measures how fast the GPU can produce text. It does not include the time spent processing the prompt.
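As a rough illustration of how such a figure is obtained, the sketch below times a token generator and divides the token count by the elapsed time. The `fake_generate` function is a hypothetical stand-in for a real model, and this is not the method used to produce the benchmark numbers in this article.

```python
import time
from typing import Callable, Iterable


def measure_tokens_per_second(
    generate: Callable[[int], Iterable[str]], n_tokens: int
) -> float:
    """Time a token generator and return generated tokens per second."""
    start = time.perf_counter()
    produced = sum(1 for _ in generate(n_tokens))
    elapsed = time.perf_counter() - start
    return produced / elapsed


def fake_generate(n_tokens: int):
    """Hypothetical stand-in for a real model: yields one token every ~1 ms."""
    for i in range(n_tokens):
        time.sleep(0.001)
        yield f"tok{i}"


rate = measure_tokens_per_second(fake_generate, 50)
print(f"{rate:.0f} tokens/second")
```

With a real model, the generator would be replaced by whatever streaming interface the inference library provides, and the measurement would normally be averaged over several runs.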

Q: What does Q4_K_M mean?

A: Q4_K_M is a specific type of quantization in which most model parameters are stored using about 4 bits each instead of 16. This reduces the memory footprint and speeds up processing without sacrificing too much accuracy.

Q: What are some other GPUs I could consider for running LLMs?

A: Other popular GPUs for LLM inference include the NVIDIA RTX 4090, the AMD Radeon RX 7900 XTX, and various cloud-based GPU solutions.

Keywords:

NVIDIA 3090 24GB x2, NVIDIA RTX A6000 48GB, LLM, Llama 3 8B, Llama 3 70B, token generation speed, benchmark, performance, quantization, Q4_K_M, F16, GPU, AI, machine learning, deep learning, inference, processing, cost-effective, efficiency, speed, computational power